DiCoDe: Diffusion-Compressed Deep Tokens for Autoregressive Video Generation with Language Models

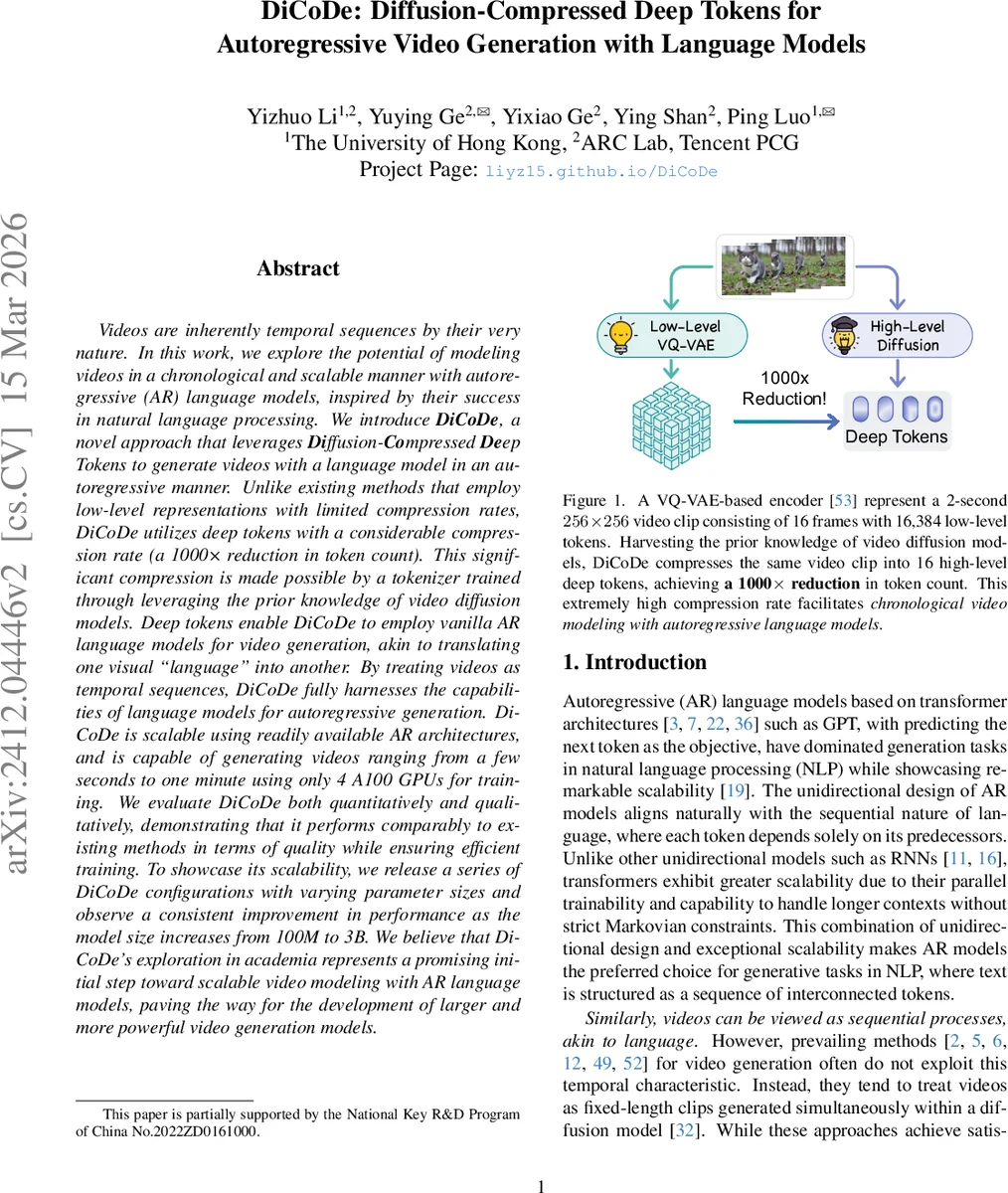

Videos are inherently temporal sequences. In this work, we explore the potential of modeling videos in a chronological and scalable manner with autoregressive (AR) language models, inspired by their success in natural language processing. We introduce DiCoDe, a novel approach that leverages Diffusion-Compressed Deep Tokens to generate videos autoregressively with a language model. Unlike existing methods that employ low-level representations with limited compression rates, DiCoDe utilizes deep tokens with a considerable compression rate (a 1000x reduction in token count). This compression is made possible by a tokenizer trained to leverage the prior knowledge of video diffusion models. Deep tokens enable DiCoDe to employ vanilla AR language models for video generation, akin to translating one visual “language” into another. By treating videos as temporal sequences, DiCoDe fully harnesses the capabilities of language models for autoregressive generation. DiCoDe scales with readily available AR architectures and can generate videos ranging from a few seconds to one minute using only 4 A100 GPUs for training. We evaluate DiCoDe both quantitatively and qualitatively, demonstrating that it performs comparably to existing methods in quality while keeping training efficient. To showcase its scalability, we release a series of DiCoDe configurations with varying parameter sizes and observe a consistent improvement in performance as the model size increases from 100M to 3B. We believe that DiCoDe’s exploration in academia represents a promising initial step toward scalable video modeling with AR language models, paving the way for the development of larger and more powerful video generation models.

💡 Research Summary

DiCoDe (Diffusion‑Compressed Deep Tokens) proposes a novel pipeline for autoregressive (AR) video generation that treats video as a sequence of highly compressed continuous tokens, enabling the use of vanilla transformer‑based language models. The authors identify a core bottleneck in prior AR video methods: low‑level VQ‑VAE or VQ‑GAN tokenizers produce thousands of discrete tokens per second of video, making long‑duration generation infeasible for current AR architectures. To overcome this, DiCoDe introduces “deep tokens” that achieve a 1,000× reduction in token count (e.g., a 2‑second, 16‑frame clip is represented by only 16 continuous tokens).

The tokenization process consists of two stages. First, each video frame is passed through an image encoder E, yielding a set of high‑dimensional features Zₜ. A query transformer Q, equipped with a fixed set of learnable queries, attends to these features and outputs N_q continuous vectors per frame—these are the deep tokens. Second, a pretrained video diffusion model (trained on large‑scale video data) is repurposed as a decoder: conditioned on the deep tokens of the first and last frames of a short clip, it learns to reconstruct the intermediate frames. This leverages the diffusion model’s prior knowledge, allowing the assumption that a short clip can be faithfully regenerated from its boundary tokens.
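
The query-based pooling in the first stage can be illustrated with a minimal sketch. It is not the authors' implementation: the actual Q is described as a transformer, whereas this example uses a single attention head with no learned projections, and the function name `query_pool`, the patch count (256), the feature dimension (8), and the query count (16) are all hypothetical values chosen for illustration.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def query_pool(features, queries):
    """Single-head cross-attention without learned projections (a simplification).

    features: list of P patch vectors for one frame, each of dimension d
    queries:  list of N_q query vectors, each of dimension d
    Returns N_q pooled vectors of dimension d, one per query.
    """
    d = len(queries[0])
    scale = 1.0 / math.sqrt(d)
    tokens = []
    for q in queries:
        # Scaled dot-product score between this query and every patch feature.
        scores = [scale * sum(qi * fi for qi, fi in zip(q, f)) for f in features]
        weights = softmax(scores)
        # Each output token is the attention-weighted average of the features.
        token = [sum(w * f[j] for w, f in zip(weights, features)) for j in range(d)]
        tokens.append(token)
    return tokens


# Illustrative sizes only (assumptions, not values from the paper).
rng = random.Random(0)
frame_features = [[rng.gauss(0.0, 1.0) for _ in range(8)] for _ in range(256)]
learnable_queries = [[rng.gauss(0.0, 1.0) for _ in range(8)] for _ in range(16)]
deep_tokens = query_pool(frame_features, learnable_queries)
print(len(frame_features), "patch features ->", len(deep_tokens), "tokens")
```

The point of the sketch is that the number of output vectors is set by the number of queries, not by the number of patch features, which is how a fixed query set yields a fixed, small token count per frame.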

Because deep tokens are continuous, the standard cross‑entropy loss used for discrete token prediction is inapplicable. DiCoDe therefore models each token’s distribution with either a Gaussian or a Gaussian Mixture Model (GMM). The AR transformer predicts the parameters (mean, variance, and mixture weights) of this distribution, and training minimizes the negative log‑likelihood (NLL). This explicit variance modeling avoids the collapse associated with naïve L₂ loss and captures uncertainty inherent in continuous latent spaces.
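
As a rough illustration of the loss described above, the following sketch computes the negative log-likelihood of one continuous token under a diagonal-covariance Gaussian mixture. It is written under assumptions not stated in the summary: the model is assumed to output unnormalised mixture logits and per-dimension log-variances, and the name `gmm_nll` is hypothetical. A plain Gaussian is the special case of one component.

```python
import math


def gaussian_log_pdf(x, mean, log_var):
    """Log density of a diagonal Gaussian evaluated at vector x."""
    total = 0.0
    for xi, mi, lvi in zip(x, mean, log_var):
        total += -0.5 * (math.log(2.0 * math.pi) + lvi + (xi - mi) ** 2 / math.exp(lvi))
    return total


def log_sum_exp(values):
    """Numerically stable log(sum(exp(v)))."""
    m = max(values)
    return m + math.log(sum(math.exp(v - m) for v in values))


def gmm_nll(x, logits, means, log_vars):
    """Negative log-likelihood of x under a K-component diagonal GMM.

    x:        target token, list of d floats
    logits:   K unnormalised mixture weights (assumed parameterisation)
    means:    K mean vectors of dimension d
    log_vars: K log-variance vectors of dimension d (assumed parameterisation)
    """
    log_z = log_sum_exp(logits)  # normaliser for the log-softmax over weights
    log_terms = [
        (logit - log_z) + gaussian_log_pdf(x, mu, lv)
        for logit, mu, lv in zip(logits, means, log_vars)
    ]
    return -log_sum_exp(log_terms)


# A two-component mixture over a 2-dimensional token.
target = [0.2, -0.1]
loss = gmm_nll(
    target,
    logits=[0.0, 1.0],
    means=[[0.0, 0.0], [1.0, 1.0]],
    log_vars=[[0.0, 0.0], [-1.0, -1.0]],
)
print(f"NLL: {loss:.4f}")
```

Unlike an L₂ loss, which corresponds to a Gaussian with a fixed variance, this objective rewards the model for widening the predicted variance where the target is uncertain and narrowing it where the target is predictable.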

For generation, a text prompt is tokenized and placed at the beginning of the sequence, followed by a special
