TDMM-LM: Bridging Facial Understanding and Animation via Language Models

Text-guided human body animation has advanced rapidly, yet facial animation lags due to the scarcity of well-annotated, text-paired facial corpora. To close this gap, we leverage foundation generative models to synthesize a large, balanced corpus of …

Authors: Luchuan Song, Pinxin Liu, Haiyang Liu

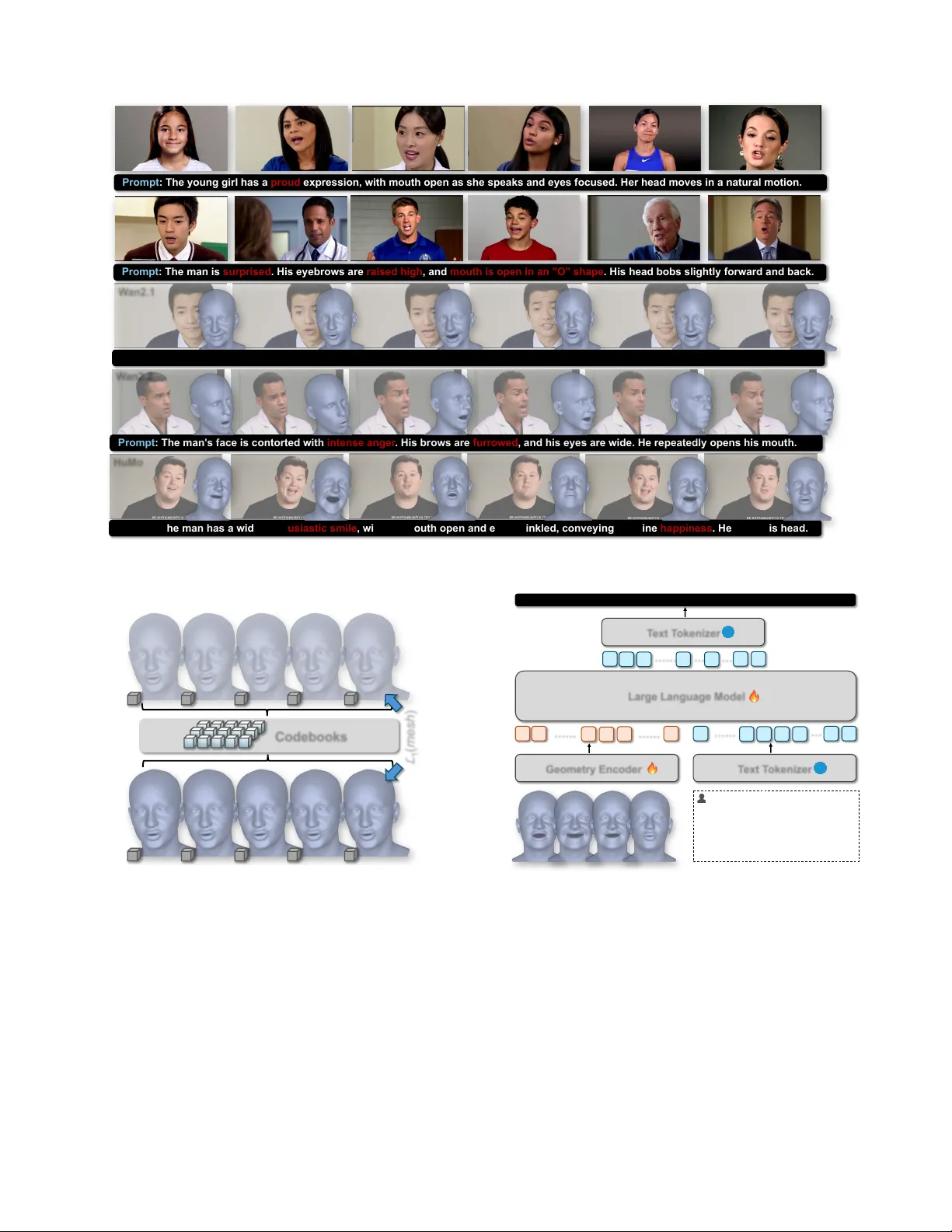

TDMM-LM: Bridging F acial Understanding and Animation via Language Models † Luchuan Song 1 , * Pinxin Liu 1 , Haiyang Liu 2 , Zhenchao Jin, Y olo Y unlong T ang 1 , Zichong Xu 1 , Susan Liang 1 , Jing Bi 1 , Jason J Corso 3 , 4 , Chenliang Xu 1 1 Uni versity of Rochester 2 Uni versity of T okyo 3 Uni versity of Michigan 4 V ox el51 lsong11@ur.rochester.edu, { pliu23, yunlong.tang, sliang22 } @ur.rochester.edu, haiyangliu1997@gmail.com, jjcorso@eecs.umich.edu, chenliang.xu@rochester.edu Open 3D FaceV id User : Please describe expressions and movem e n ts of the 3D facial s equence in the video. Agent : The person is speakin g in a slightly hap p y tone, keeping the head almost still. User : Please describe expressions and moveme n ts of the 3D facial s equence in the video. Agent : A clear look of surprise o n the face, and th e head moved up and down. User : The person is speaking wi th the expression of s t r ong a nge r . User : The person is speaking wi th slight happiness on his face. User : A person is speaking with smile on the face. An d h i s h ead i s n o d d i n g . Figure 1. Overvie w of the proposed Open3DFaceV id dataset and 3D facial understanding/animation pipeline. The left panel visualizes the Open3DFaceV id corpus, which covers a wide range of identities, emotions, and speaking styles generated via text-to-video (T2V) models. The right panel illustrates our interacti ve 3D facial interf ace: giv en a 3DMM sequence, the user prompts the agent to describe expressions and head motion in natural language, and the agent returns fine-grained, parameter-based interpretations. In the re verse direction, the agent is able to condition on user prompts to generate new 3DMM trajectories with controllable emotion and pose. Please refer to https://songluchuan.github.io/TDMM- LM/ for visualization results and datasets. Abstract T ext-guided human body animation has advanced rapidly , yet facial animation lags due to the scar city of well- annotated, te xt-pair ed facial corpora. T o close this gap, we lever age foundation gener ative models to synthesize a lar ge, balanced corpus of facial behavior . W e design pr ompts suite covering emotions and head motions, gener- ate about 80 hours of facial videos with multiple gener ators, and fit per -frame 3D facial par ameters, yielding lar ge-scale (pr ompt and parameter) pairs for training. Building on this dataset, we pr obe language models for bidir ectional com- petence over facial motion via two complementary tasks: (1) Motion2Langua ge: given a sequence of 3D facial pa- † Project Leader . * Equal contribution. rameters, the model produces natur al-language descrip- tions capturing content, style, and dynamics; and (2) Lan- guage2Motion: given a prompt, the model synthesizes the corr esponding sequence of 3D facial parameter s via quan- tized motion tokens for downstr eam animation. Extensive experiments show that in this setting language models can both interpr et and synthesize facial motion with str ong gen- eralization. T o best of our knowledge, this is the first work to cast facial-parameter modeling as a language pr oblem, establishing a unified path for text-conditioned facial ani- mation and motion understanding. 1. Introduction Multimodal large language models (MLLMs) [ 3 , 15 , 17 , 20 , 24 , 33 , 35 , 44 , 45 , 47 , 61 , 62 , 64 , 67 , 70 ] have sig- 1 22.4% 37.6% 37.9% .6% .7% .8% (b) T2V Contributions Proportion (c) Emotion W ords (d) Prompts W ords (a) Control C ategories 57. 1% 10. 23% 10. 20% 7. 55% 5. 94% 8. 98% Figure 2. The analysis of the Open3DFaceV id dataset. W e summarize the control categories induced by prompts and their corresponding video counts, broken down by underlying T2V backbones. W e further visualize the vocab ulary with word clouds, separately for emotion- related terms and for full-text prompts, to highlight the di versity and salienc y of affecti ve descriptors. nificantly advanced visual understanding through joint rea- soning o ver images, audio, and language. Modern sys- tems [ 2 , 19 , 23 , 51 , 57 ] achie ve state-of-the-art results on a wide range of perception tasks [ 7 , 9 , 25 , 31 , 34 , 59 ]. Re- cent domain-specialized MLLMs [ 14 , 37 , 48 , 60 , 69 ] fur - ther demonstrate strong potential for human-centered visual reasoning. Howe ver , fine-grained facial-beha vior understanding re- mains fundamentally limited. T oken inef ficiency is a major bottleneck: existing V -LLMs must process every frame as a large set of image tokens. T o reduce cost, models down- sample video or sparsely sample ke yframes. While accept- able for coarse actions, this is detrimental for facial mo- tion, where expressions unfold ov er only a few frames and temporal continuity is crucial. Micro-expressions such as brow raises, lip twitches, or brief smirks often disappear when frames are dropped; e ven retained frames yield hun- dreds of image tokens per second, restricting temporal con- text and forcing models to ignore subtle dynamics. The result is a systematic bias to ward static, neutral facial be- havior . Emotion imbalance in existing training corpora amplifies this issue. Lar ge-scale MLLMs [ 51 , 60 , 69 ] are mostly trained on in-the-wild videos ( e.g ., Y ouT ube, T ik- T ok, V oxCeleb [ 5 , 38 ]), which ov erwhelmingly feature neu- tral, frontal talking heads. High-intensity or atypical e xpres- sions (e.g. pouting, frowning, laughing, smirking) are rare. Coupled with temporal sparsity from do wnsampling, mod- els learn a narrow , low-v ariance facial prior and struggle to perceiv e or generate expressi ve motion. These limitations raise a central question: “ Can fine- grained facial emotion and movement be r epr esented using low-dimensional geometric signals (e.g ., 3D facial param- eters) that preserve subtle temporal variation while avoid- ing r edundant visual tokens? ” A major obstacle is the lack of class-balanced, richly annotated facial-motion data. Ex- isting datasets [ 6 , 13 , 16 , 30 , 56 ] rely on studio capture and provide coarse, one-hot emotion labels, lacking the di- versity and open-form descriptions needed for language- driv en modeling. T o ov ercome this limitation, we intro- duce Open3DFaceV id , a scalable synthetic pipeline that generates di verse facial videos using multiple T2V mod- els [ 55 , 58 ]. W e curate a le xicon of ∼ 200 emotion and facial-action descriptors ( e.g ., grin, pout, smirk, squint), uniformly sample all categories to ensure balanced cov er- age, and extract 3DMM parameters [ 8 ] for ev ery clip. This yields a lar ge corpus of paired videos, text descriptions, and high-quality 3D facial-motion trajectories. Dataset charac- teristics are shown in Fig. 3 . W ith this dataset providing the necessary cov erage and annotation richness, we explore a modeling paradigm that bypasses image tokens entirely . W e train a geometry VQ- V AE [ 53 ] that discretizes 3DMM sequences into a percep- tually coherent codebook, producing compact geometry to- kens that replace image tokens in the MLLM input space. This structured representation preserves subtle dynamics, remov es visual redundanc y , and drastically reduces token consumption. Finetuning a LLM on paired motion–te xt samples enables it to directly reason o ver 3D facial motion. Built on this alignment interface, our framework sup- ports two complementary directions using the same LLM: (1) Motion2Language : interpreting geometry-token se- quences to produce natural-language descriptions of emo- tion, intensity , micro-expressions, and head motion; (2) Language2Motion : generating expressi ve 3D f acial- motion trajectories directly from user prompts, enabling fine-grained, text-driv en facial animation. Extensiv e exper- iments validate the effecti veness of our dataset, geometry- token representation, and unified modeling frame work. W e demonstrate strong performance across facial-motion un- derstanding and language-driven animation. In summary , our contributions include: • W e present Open3DFaceV id , an ∼ 80-hour synthetic facial-motion corpus generated using multiple foundation T2V models. It provides the largest collection to date of paired T ext2F ace annotations with di verse emotions, sub- jects, and intensity variations. • W e extend the LLM to the Motion2Language setting, enabling natural-language interpretation of 3D facial mo- tion. Given a facial-motion tok en sequence, the model produces compact, expressi ve descriptions of emotions, 2 micro-expressions, and head mo vements. • W e further introduce a Language2Motion framework that generates 3D facial motion from natural-language prompts. By conditioning an autoregressi ve geometry decoder on word-lev el LLM embeddings, users can pre- cisely control fine-grained facial dynamics through text. 2. Related W ork T ext-to-Motion Generation T ext–motion alignment un- derpins much of modern motion understanding [ 28 , 29 , 54 ], with models such as MotionCLIP [ 52 ] and TMR [ 40 ] map- ping language descriptions to dynamic motion sequences. Building on this foundation, recent work has shifted to- ward te xt-to-motion generation: autoregressi ve models [ 11 , 12 , 32 , 43 , 65 , 66 ] tokenize motion and decode it in a language-like space. Supported by large-scale body motion datasets [ 11 , 26 , 41 , 42 ], these approaches enable natural and e xpressiv e full-body movement generation. In contrast, progress in facial animation has lagged behind, largely due to the absence of emotion-rich, text-aligned corpora. T ext-to-V ideo Generation T ext-to-video generation has made significant progress in recent years. Early diffusion- based [ 10 , 21 , 68 , 72 , 73 ] frame works factorize the gener- ation via intermediate image conditioning, improving sta- bility and quality [ 46 ]. More recent w orks target human- centric video or long shot sequences: Identity-Preserving T2V ensures consistent human identity while generating high-fidelity video [ 63 ], and T ext-to-Multi-Shot V ideo Gen- eration addresses transitions and scene continuity [ 18 ]. Commercial systems such as Sora2 [ 39 ]/V eo3 [ 58 ] hav e fur- ther demonstrated text-dri ven open-world video generation. 3. Open3DFaceV id W e b uild our dataset by programmatically prompting a fam- ily of T ext-to-V ideo (T2V) models [ 49 ] to synthesize fa- cial videos that can be reconstructed in our 3D setting. The prompt space cov ers a large attribute le xicon, including sub- ject descriptors ( e.g ., male, female, young lady) and affec- tiv e cues ( e .g ., joy , anger , smile), to encourage broad cov er- age o ver identities and emotions. T o av oid o verfitting to the biases of a single generator , we instantiate the pipeline with multiple underlying T2V models ( e.g ., W an-2.1/2.2 [ 55 ], Open-Sora [ 71 ], HuMo [ 4 ], V eos [ 58 ]), which expands the range of styles and mitigates model-specific artifacts. Prompts Construction The prompt design factorizes into facial appearance and video dynamics. On the f acial side, we sample a rich space of affecti ve descriptions, varying both emotion type and intensity , and augment them with frequent micro-expressions such as blinking, pouting, and related facial actions to increase behavioral di versity . On the video side, we employ a small set of templated prompts that constrain camera motion, encouraging stable framing. For example, instructions like “ The fr ame r emains steady , with the head and shoulders center ed ” are applied to keep the subject fix ed in vie w and reduce cinematic jitter . W e try to maintain the balance between the number of facial emo- tion categories and the number of subject categories in the prompts. Programmatically generated templates are then re- fined with the ChatGPT -4.1 [ 1 ], yielding natural, con versa- tional descriptions that better resemble daily language while preserving the intended control condition. Human-Centric T2V Synthesis W e apply the polished prompts as control condition to synthesize a large collec- tion of facial videos. T o a void collapsing the motion dis- tribution onto the prior of any single generator , we instan- tiate the pipeline with a suite of base T2V models. For each backbone, the facial-attribute portion of the prompt is kept standardized, while only the video-related clauses are lightly adapted to match model-specific preferences in framing and cinematography . Our corpus contains roughly 60K synthetic clips, each about 4–6 seconds. T o narrow the gap to real data, we augment this set with about 10K wild clips and deri ve prompt-style annotations with a vi- sion–language model (Gemini [ 51 ]). Geometry F acial Estimation W e estimate facial motion with 3D Morphable Model (3DMM) [ 8 ] parameters, fitted to monocular videos in our corpus to recov er per-clip iden- tity , expression, and head pose. Specifically , we adopt the FLAME [ 22 ] model to regress facial parameters. 4. Language-Motion Alignment Base on Open3DFaceV id , we learn a shared space be- tween 3D facial motion (3DMM) and natural language, en- abling both interpretation and synthesis of expressi ve f a- cial beha vior . The alignment pipeline has three key com- ponents: (1) the Geometry VQ-V AE operates on facial ge- ometry rather than continuous 3DMM parameters, map- ping sequences into the discrete token space; (2) an LLM- based motion interpr eter that directly decodes geometry- token sequences into natural-language descriptions, sup- porting Motion2Language understanding; (3) a shar ed lan- guage–motion transformer that conditions geometry-token prediction on w ord-lev el embeddings, enabling controllable Language2Motion synthesis. Geometry VQ-V AE Shown in Fig. 4 , we quantize facial dynamics into a discrete latent space. Rather than dis- cretizing on 3DMM parametersm which can map similar expressions to different coef ficient patterns. W e operate di- rectly on reconstructed facial geometry , ensuring that visu- ally similar expressions are encoded with consistent tokens. This design mitigates the many-to-one ambiguity in which multiple expression codes map to nearly facial geometry . Motion2Language T o translate 3D facial motion into nat- ural language, we utilize geometry tokenizer to tokenize fa- 3 Prompt : The young man h as an earnest and slightly concerned expression. His eyebrows are raised and furrowed as he speaks . Pr o m p t : T h e ma n ' s fa c e i s c o n to r te d w i th in t e n s e a n g e r . H is b r o w s a r e fu r r o w e d , a n d h is e y e s a r e w id e . H e r e p e a t e d ly o p e n s h is m o u t h . Prompt : T h e ma n h a s a w i d e, enth u si ast i c sm i l e , w i th his m o u t h o p e n a n d e yes cr in k le d , c o n v e y in g genu in e ha ppi ne s s . H e bobs h is h e a d . Wa n 2 . 1 Wa n 2 . 2 HuMo Pr o m p t : T h e ma n i s su r p r i sed . H is e y e b r o w s a r e ra i s e d h i g h , a n d mo u th i s o p e n i n a n "O " s h a p e . H is h e a d b o b s s lig h t ly f o r w a r d a n d b a c k . Pr o m p t : T h e y o u n g g i r l h a s a pr oud exp r essi o n , w i t h m o u t h o p en as sh e sp eaks an d eyes f o cu sed . Her h ead m o ves i n a n at u r al m o t i o n . Figure 3. Dataset over view . T op two rows: starting from a fixed text prompt, we v ary the random seed and emphasize dif ferent prompt keyw ords to modulate facial identity and video attributes, showcasing subjects across different genders. Bottom three rows: we recover FLAME facial parameters and pair the resulting trajectories with the corresponding prompt, forming T ext–3DMM dataset. Codebooks 1 4 9 7 2 1 4 9 7 2 ℒ 1 ( mesh ) Figure 4. Geometry-aware facial tokenization learning. W e quan- tize facial expression codes into a discrete codebook and enforce reconstruction in mesh space. The input facial expression codes are mapped to code indices, decoded back to FLAME meshes (bot- tom), and supervised with an L 1 loss on verte x positions cial geometry , where discrete tok ens are fed as symbolic ob- servations to the LLM. Instead of relying on image inputs, the LLM directly consumes sequences of geometry tokens, producing rich free-form descriptions of emotions, intensi- ties, micro-expressions (e.g., blinking, pouting), and head dynamics. Each training instance provides a geometry- token sequence and an accompanying te xtual description. Geometry Enc oder Te x t To k e n i z e r Large Language Model Te x t To k e n i z e r …… …… …… … … …… … Agent : The person has a happiness expression as sp eaking. Th e head is still. Z 1 Z 2 Z i Z i+1 Z i+2 Z 4096 T 1 T i T i+1 T i+3 T i+2 T n-1 T n T * 1 T * 2 T * 3 T * i+1 T * i T * n-1 T * n [User Interaction]: Summarize the face in one short sentence; rely only on the codes: Create a minimal description of the face encoded by these tokens: Describe the person's facial appeara nce in one brief sentence: From the token stream, describe the face video motion: Figure 5. Motion2Language. Geometry sequences are encoded into discrete facial tokens by the geometry encoder and fed, to- gether with text tokens from the user prompt, into a LLM. Condi- tioned only on these geometry tok ens, the agent generates natural- language descriptions of expression/head motion, enabling inter- activ e question to answering about 3D facial beha vior . T o improv e linguistic coverage without altering semantics, we expand each annotation with multiple paraphrases pro- duced by an auxiliary LLM. As shown in Fig. 5 , the LLM is instruction-tuned to map geometry-token observations to natural-language responses conditioned on task templates. 4 [User Interaction]: The person has a warm and engaging facial expressi on. He offers a gentle, closed- lip smile . The man has a warm and gentle smile, with his eyes crinkling kindly at the corners. The person has a serious and concerned exp ression. Her brow is slightly furrowed, T5 T ext Encoder Geometry Enc oder Autoregression Tra n sf or me r …… …… Z 1 Z 2 Z i Z i+1 Z i+2 Z 4096 Geometry Dec oder …… …… Z 1 Z 2 Z i Z i+1 Z i+2 Z 4096 …… … T 1 T i T i+1 T i+3 T i+2 T n-1 T n T oken Masks Figure 6. Language2Motion. The user provides a natural- language description of the desired facial behavior (top left). The text tokenizer con verts the prompt into word-level tokens, while a paired 3D facial sequence is encoded into discrete geometry to- kens by the geometry encoder . The autore gressiv e transformer predicts future geometry tokens conditioned on the text prefix. Methods Cor E ↑ Cor M ↑ Cor I ↑ USER E ↑ USER M ↑ [GPT -4] [Human] HumanOmni [ 50 ] 1.84 1.17 1.09 2.04 1.00 Gemini2.5 VLM [ 56 ] 2.45 2.91 3.51 2.88 3.41 Ours 4.02 3.35 3.63 4.29 3.79 T able 1. Quantitative e valuation of Motion2Language. Mean 1–5 correctness scores from GPT -4 and human raters for emotion (Cor E , USER E ), motion (Cor M , USER M ) and intensity (Cor I ). W e bold the best. Our geometry-token model consistently outper- forms HumanOmni and Gemini-2.5 VLM across the metrics. Language2Motion Compared to full-body scenarios, text- driv en facial motion generation remains considerably more challenging. A vailable datasets are smaller , f acial actions are subtler , and con ventional text-to-motion pipelines com- press the entire prompt into a global embedding, losing the token-le vel cues that dictate fine-grained muscular dynam- ics. T o le verage the linguistic granularity , we introduce a wor d-level langua ge pr efix that injects pretrained LLM em- beddings into an autoregressi ve facial-motion transformer . Importantly , this prefix is processed by the text encoder which takes the user’ s text prompt, produces token-le vel embeddings, and conditions the motion decoder without modifying the vocabulary of the language model. As illus- trated in Fig. 6 , this prefix-based fusion preserves the struc- ture of the prompt and permits individual words to steer lo- calized facial movements, enabling controllable and seman- tically aligned 3D facial-motion generation. 5. Experiments 5.1. Dataset Details The Open3DF aceV id has a total duration of 81 hours and contains 57.2K video clips ranging from 4 to 6 seconds. Methods Cor E ↑ Cor M ↑ Cor I ↑ USER E ↑ USER M ↑ [GPT -4] [Human] HumanOmni [ 69 ] 3.66 1.59 1.42 3.82 1.27 Gemini2.5 VLM [ 51 ] 4.21 3.17 3.92 4.17 3.82 Ours 4.02 3.35 3.63 4.29 3.79 T able 2. Quantitative evaluation of Motion2Language with nat- ural images input. W e employ the same correctness metric as in T able 1 , while replacing geometry-image inputs with natural facial images to better adapt the ev aluation setting to VLMs. Methods L 2 ↓ FD ↓ T ok ↑ Cor E ↑ USER ↑ [Parameters] [Language] T2M-X [ 27 ] 0.471 47.59 0.671 2.21 3.40 T2M-GPT [ 65 ] 0.226 37.04 0.895 3.57 3.91 Ours 0.219 31.75 0.920 4.13 3.95 T able 3. Quantitati ve e valuation of Language2Motion. Compar- ison with T2M-X and T2M-GPT on parameter-space measures ( L 2 /FD/T ok) and language-based metrics (Cor E /USER). L 2 /FD assess expression/pose fidelity , T ok is token-level accurac y . The videos in datasets are standardized to 25 FPS and the resolution is resized 618 × 360 . W e employ 32 H200 GPUs to synthesize videos in T2V setting using W an2.2 (5B and 14B), HuMo (17B), Open-Sora (11B) and W an2.1 (14B), consuming roughly 400 GPU Hours in total. W e obtain V eo2/3 samples via its API interface. Due to the high per- clip generation cost, we include a modest number of V eo2/3 generated videos in our corpus. For face estimation, we use the FLAME template to regress per-frame expression, and head rotation (yaw/roll/pitch) parameters within videos. W e present the model implementation details in the Appendix. 5.2. Baselines Giv en the challenges of constructing text–motion datasets, there exist fe w directly comparable prior methods. W e therefore adopt the most relev ant techniques as baselines across both Motion2Language and Language2Motion. Motion2Language. W e ev aluate against strong vi- sual–language models applied directly to video frames. (1) HumanOmni [ 69 ] , a human-centric VLM designed for holistic audio–visual reasoning. W e treat it as a vision–only model by sampling video frames from dataset and prompt- ing it to describe head motion and emotion. During infer- ence, it operates entirely in pixel space. (2) Gemini VLM , a general-purpose multimodal model [ 51 ]. W e uniformly sample video frames and provide an instruction prompt ask- ing for descriptions of head pose and affecti ve state. Language2Motion. W e draw baselines from text-to- human-motion generation. (3) T2M-X [ 27 ] , a transformer- based text-to-motion model that represents motion as dis- crete tokens and autoregressiv ely predicts pose sequences; we adapt its facial-motion branch as a baseline. (4) T2M- GPT [ 65 ] , a GPT -style generative model trained on discrete motion codes. It directly models the distrib ution of tok- enized motion trajectories conditioned on text descriptions. W e follo w their discrete VQ-token formulation and train the 5 User : Pl ease give me a compact, fluent descriptio n of the geometry face here (only focus on emotion and head pose). Ground - Tru t h : A young girl speaks wit h a slight boredom expression. Her head remains relatively still, wit h only slight, natural movements as she talks. Gemini -2.5 Pro : The video displays a 3D geometr ic model of a human face that is animated to open and close its mouth, as if speaking or singing. HumanOmni : Smile . Our Mo tion 2 Language : The person speaks as the brows furrow in a worried expression, the head is almost still. User : Please summarize the facial features (emotion and head motion) within one sentence from the geometry sequence. Ground - Tru t h : The woman’s expression is one of intense anger and disbelief. Her brow is deeply furrowed as she speaks. Her head moves in sharp, punctuated motions, shaking slightly. Gemini -2.5 Pro : The geometric face animates through a speaking or singing motion by opening and closing its mouth while performing a subtle side- to -side head turn . HumanOmni : Open mouth wide . Our Motion 2 Language : The person speaks in keeping the amplified angry look, the head is slight shaking in speaking. User : Please w rite a br ief natural - language description of the face emotion and head motion from the geometry sequence. Ground - Tru t h : The woman's facial expression is surprise or emphasis. Her head moves subtly , nodding and turning to punctuate her words . Gemini -2.5 Pro : T he 3D model of the f ace animates through a speech-like motion, opening and closing its mouth . Simultaneously , the head performs a subtle side- to -side rotation, turning slightly from its right towards its lef t . HumanOmni : Smile . Our Motion 2 Language : The person recites a brief line, with a very strong joyful demeanor . The head motion is slight nodding . Figure 7. Qualitativ e comparison for Motion2Language. For rep- resentativ e clips, we show the input frames/geometry . The Gem- ini/HumanOmni operate on rendered geometry , while our model relies on 3D facial tokens. Our approach produces more accurate descriptions of emotion/motion, closely matching GT annotations. facial-motion pathway on our dataset for f air comparison. 5.3. Quantitative and Qualitative Evaluations Motion2Language. W e validate: (1) geometry-token se- quences preserve sufficient expressiv e information for accu- rate semantic interpretation, and (2) the compact tokeniza- tion provides higher efficienc y than image-token VLMs. T o quantify these aspects, we measure correctness in e x- pression (Cor E ), motion (Cor M ), and intensity (Cor I ) us- ing GPT -4 as an automatic judge on the 2K test clips, and collect human ratings (USER E , USER M ) on 300 samples. Moreov er , to ensure a fair comparison with the baseline methods, we use natural facial videos/images as inputs, as shown in T ab . 2 . W e observe that commercial models such as Gemini achieve leading performance in the natural- image setting. Ne vertheless, it is important to note that our method uses only a single token as input for each image, which is substantially fewer than the number of input to- T h e w o m a n h a s a b r i g h t a n d f r i e n d l y e xp r e ssi o n , sm i l i n g w i d e l y . H e r h e a d i s m o st l y st a t i o n a r y . T h e m a n b e g i n s w i t h a se r i o u s a n d f o cu se d e xp r e ssi o n . T h e n l e a n s f o r w a r d su r p r i se e xp r e ssi o n . T2M -X LM -Listener Ours Ours LM -Listener T2M -X Figure 8. Qualitativ e comparison for Language2Motion. Given prompts, we visualize generated 3D facial motion from different method. Our model produces expressions and head poses that more faithfully follo w the described affect T rain-Corpus Cor E ↑ Cor M ↑ Cor I ↑ USER E ↑ USER M ↑ [GPT -4] [Human] MEAD [ 56 ] 3.03 1.29 3.59 2.92 1.18 Y ouT ube [Gemini] 2.74 3.92 3.76 3.14 3.65 Open3DFaceV id 3.97 3.44 3.85 4.05 3.75 T able 4. Ablation on training corpus for Motion2Language. Identical architectures are trained on MEAD, Y ouT ube (Gemini- annotated) and Open3DFaceV id. The metrics defined in Sec. 5.3 . kens required by Gemini. Fig. 7 compares model outputs under identical prompts. HumanOmni and Gemini-2.5 Pro, both trained primarily on natural images, struggle to interpret temporal dynamics of facial motions, resulting in correctness scores close to 1. This reflects a domain gap rather than a failure of the task itself. In contrast, our model consistently recov ers the cor- rect emotion and motion semantics from 3DMM-derived tokens, demonstrating that structured geometric representa- tions retain the key information needed for facial-beha vior reasoning. Furthermore, our method encodes each frame with a single geometry token instead of 300–500 visual to- kens, yielding a more efficient and temporally responsi ve understanding pipeline. Language2Motion. W e test: (1) geometry-token genera- 6 (1) Open3DFac eV id (2) MEAD (3) Y ouTube Happy Angry Surprise Sad Fear Disgust N eutral Excited Cry Interest Worry Prou d Shy Happy Angry Sad Fear Contempt Disgust Surprise Neutra l Calm Neutral Happy Sa d Laugh Joyful Cool Disappoint Depressed Flat Smile Angry Total Labels = 187 Total Labels = 8 Total Labels = 37 … … Figure 9. Emotion coverage across datasets. T op-row: emotion-label distributions for (1) Open3DFaceV id, (2) MEAD [ 56 ], and (3) Y ouT ube-deri ved clips, showing the number and balance of emotion categories (187/8/37 labels, respectively). Bottom-row: 2D projections of the corresponding facial (e xpression+pose) video embeddings, where Open3DFaceV id exhibits rich, well-separated clusters, while MEAD and Y ouT ube provide coarser and less diverse affectiv e coverage. Each point in the t-SNE [ 36 ] plots corresponds to the facial- expression features of a single video clip. Due to color variety limitations, we are unable to display all categories. T rain-Corpus L 2 ↓ FD ↓ T ok ↑ Cor E ↑ USER ↑ [Parameters] [Language] MEAD [ 56 ] 0.217 32.14 0.892 3.56 2.88 Y ouT ube [Gemini] 0.259 33.47 0.910 3.17 3.59 Open3DFaceV id 0.226 32.48 0.915 3.90 3.92 T able 5. Ablation on training corpus for Language2Motion. Iden- tical models are trained on MEAD, Y ouT ube (Gemini-annotated), and Open3DFaceV id. Training on Open3DFaceV id yields the best trade-off between geometric fidelity and te xt–motion alignment. tion conditioned on language can faithfully follow the in- tended facial semantics, and (2) the predicted 3DMM tra- jectories exhibit realistic temporal dynamics despite using a compact discrete representation. T o validate these as- pects, we adopt quantitati ve and human-centric metrics. T ext–motion consistency is assessed through human ratings (USER) on 300 samples. Low-le vel reconstruction fidelity is measured using L 2 distance on expression and pose. Mo- tion realism is ev aluated via Fr ´ echet Distance (FD), com- paring feature distributions of generated and ground-truth sequences. T oken prediction accuracy (T ok) reflects dis- crete code fidelity within our VQ token space. Finally , we compute expression correctness (Cor E ) using the trained Motion2Language model as an external semantic e valuator , providing an independent measure of alignment. As shown in T ab . 3 , our method achie ves consistently higher scores across all metrics, supporting both claims: the model generates semantically aligned f acial motion and maintains high-quality temporal structure. T2M-GPT per- forms reasonably well due to its autoregressiv e architecture but falls short on semantic metrics, highlighting the benefit of conditioning on a stronger LLM backbone. Qualitative T h e yo u n g m a n o n co n t i n u e s sp e a ki n g , w i t h h i s l i p s p u sh e d f o r w a r d i n a l i n g e r i n g p o u t . Open3DFaceVid Yo u Tu b e MEAD Figure 10. The qualitati ve ablation on training corpus for Lan- guage2Motion. W e visualize facial motion generated by models trained on ablation datasets. The MEAD- and Y ouT ube-trained models miss the “pout” beha vior , whereas the our model produces clear lip protrusion (red arrows), matching the description. examples in Fig. 8 sho w that our model responds sensiti vely to nuanced instructions ( e.g ., transitioning from “focused” to “surprised” ) and that higher-capacity v ariants capture expressi ve affect ( e.g ., “joyful” ) more distinctly than com- peting methods. 5.4. Ablation Study Dataset Ablation. T o understand the v alue of our synthetic corpus, we compare Open3DFaceV id against two repre- sentativ e alternativ es: (1) the MEAD [ 56 ] studio-captured 7 User : Please give a brief natural - language description of the face emotion and head motion from the input geometry sequence. Ground - Tru t h : The man's expression transitions from worried and concerned to one of sudden shock and disbelief. His eyebrows, initially furrowed, and his mouth opens in an "O" of surprise. Tra i n on MEAD : The person is speaking with a slight fear . The head is almost still. Tra i n on Yo uTu b e : The person speaks with an engaged and expressive face. The eyes are wide before briefly closing and reopening as he talks. The head remains relatively still and forward -facing. Tra i n on Open 3 DFaceVid : The person speaks from subtle worried to surprised. The head is move from left to right . Figure 11. Qualitative ablation on corpus for Motion2Language. Giv en user query , we sho w responses from models trained on MEAD, Y ouTube and Open3DFaceV id. The MEAD- and Y ouT ube-trained variants produce generic or partially correct de- scriptions, while the Open3DFaceV id model most accurately cap- tures the subtle worry-to-surprise transition and head motion. Tr a in in g I te r a t io ns To k e n s A c c u r a c y Motion2La nguage Language2M otion Qwen3s Llamas Figure 12. The scaling behavior of Motion2Language and Lan- guage2Motion. Left: token accuracy over training iterations for Motion2Language with Qwen3 backbones of different sizes (.6B- 32B). Right: token accuracy for Language2Motion with LLaMA- based backbones (0.7B-3B). dataset with one-hot emotion labels, con verted into textual prompts; and (2) an in-the-wild Y ouT ube set automatically annotated by Gemini. These tw o sources reflect the two dominant data paradigms in facial-behavior research, con- trolled lab capture and unconstrained internet videos, both of which are known to lack the fine-grained expressiv e co v- erage required for language-driv en modeling. Evaluation Pr otocol. W e follow a TMR-style [ 40 ] proto- col to assess whether each dataset provides semantically reliable text–motion pairs. For e very dataset, we train a CLIP-like text–motion encoder on its paired annotations and project all samples into a shared embedding space. If a dataset contains high-quality , well-aligned annotations and exhibits sufficient expression diversity , its embeddings should form well-separated clusters corresponding to dif- ferent facial categories. Conv ersely , datasets with limited variation or noisy annotations should collapse into ov erlap- ping or ambiguous regions. The t-SNE visualizations in Fig. 9 confirm this behav- ior . Open3DFaceV id displays clear and balanced clusters across 187 labels, reflecting both the richness of our curated lexicon and the reliability of the T2V generation pipeline in follo wing prompts. MEAD, despite being high-quality T h e p e r so n sp e a ks w i t h o u t h e si t a t i o n , p a i r e d w i t h a hi gh - e n e r g y h a p p y e xp r e ssi o n . T h e p e r so n sp e a ks w i t h o u t h e si t a t i o n , p a i r e d w i t h a h i g h - e n e r g y angr y e xp r e ssi o n . The person speaks without hesitation , paired with a low happy expression. Figure 13. Language-controlled expressiv e ablation. W e modify one keyword in prompt and let the Language2Motion model gen- erate the corresponding facial motion. The two happy prompts produce dif ferent intensity level, while the angry prompt yields clearly distinct mouth shapes, demonstrating fine-grained te xt con- trol ov er emotional style. capture, offers only eight coarse labels and limited expres- siv e variation, leading to tighter , less informati ve clusters. Y ouTube data, ev en after Gemini annotation, remains hea v- ily ske wed to ward neutral expressions and exhibits consid- erable overlap, highlighting the dif ficulty of recovering sub- tle facial beha viors from uncontrolled videos. Downstr eam comparisons. W e next train both Mo- tion2Language and Language2Motion models on each dataset. For Motion2Language, the broader coverage of Open3DFaceV id yields noticeably richer semantic under- standing (Fig. 11 ), even though its head-motion range is slightly narrower than the Y ouT ube variant (reflected by Cor M in T ab. 4 ). For Language2Motion, models trained on our dataset produce clearer expression articulation, the lin- gering pout in Fig. 10 for instance, demonstrating stronger text–motion alignment. Although MEAD occasionally shows lower feature-space distances due to its simpler la- bel space, models trained on Open3DFaceV id achiev e the highest USER scores (T ab . 5 ), indicating superior percep- tual quality and semantic fidelity . Overall, these ablations sho w that neither controlled studio capture nor in-the-wild videos offer the di versity or balance needed for expressi ve facial-motion learning. Our synthetic corpus provides significantly stronger annota- tion quality , more comprehensive expressi ve coverage, and more reliable text–motion alignment, benefiting both Mo- tion2Language and Language2Motion tasks. Additional analysis of dataset bias and synthetic-data ef fecti veness is 8 provided in the Appendix. Scaling Behavior . W e present the scaling behavior by plotting token accuracy for Motion2Language and Lan- guage2Motion in Fig. 12 . For Motion2Language, perfor- mance saturates around 4B–8B parameters, and larger back- bones offer limited gains. In contrast, Language2Motion benefits more strongly from scale, with lar ger models yield- ing higher token-generation accuracy . Language2Motion Keyw ords. As shown in Fig. 13 , we obtain facial motions by changing only a single word in the prompt. The edit can adjust style intensity ( “high-energy”- “low” ) or swap the affecti ve category itself ( “happy”- “angry” ). These results demonstrate both the model nu- anced language understanding and its ability to translate fine-grained prompts into controllable facial motion. 6. Conclusion W e study a previously underexplored direction, aligning language with 3D facial motion. W e first analyze a key bottleneck, the scarcity of f acial datasets with paired text and address it by synthesizing a lar ge-scale corpus of fa- cial videos with T2V models. Building on this resource, we study two complementary settings: Motion2Language and Language2Motion. W e hope our dataset and bidirectional framew ork will serve as a foundation for future research in 3D facial motion understanding and animation. References [1] Josh Achiam, Steven Adler , Sandhini Agarw al, Lama Ah- mad, Ilge Akkaya, Florencia Leoni Aleman, Diogo Almeida, Janko Altenschmidt, Sam Altman, Shyamal Anadkat, et al. Gpt-4 technical report. arXiv pr eprint arXiv:2303.08774 , 2023. 3 [2] Shuai Bai, Keqin Chen, Xuejing Liu, Jialin W ang, W enbin Ge, Sibo Song, Kai Dang, Peng W ang, Shijie W ang, Jun T ang, et al. Qwen2. 5-vl technical report. arXiv pr eprint arXiv:2502.13923 , 2025. 2 [3] Lin Chen, Xilin W ei, Jinsong Li, Xiaoyi Dong, Pan Zhang, Y uhang Zang, Zehui Chen, Haodong Duan, Zhenyu T ang, Li Y uan, et al. Sharegpt4video: Improving video understand- ing and generation with better captions. Advances in Neural Information Pr ocessing Systems , 37:19472–19495, 2024. 1 [4] Liyang Chen, Tianxiang Ma, Jiawei Liu, Bingchuan Li, Zhuowei Chen, Lijie Liu, Xu He, Gen Li, Qian He, and Zhiyong W u. Humo: Human-centric video generation via collaborative multi-modal conditioning. arXiv preprint arXiv:2509.08519 , 2025. 3 [5] Joon Son Chung, Arsha Nagrani, and Andrew Zisserman. V oxceleb2: Deep speaker recognition. arXiv pr eprint arXiv:1806.05622 , 2018. 2 [6] Andrzej Czyzewski, Bozena Kostek, Piotr Bratoszewski, Jozef K otus, and Marcin Szykulski. An audio-visual cor- pus for multimodal automatic speech recognition. J ournal of Intelligent Information Systems , 49(2):167–192, 2017. 2 [7] Ali Diba, Mohsen Fayyaz, V ivek Sharma, Manohar Paluri, J ¨ urgen Gall, Rainer Stiefelhagen, and Luc V an Gool. Large scale holistic video understanding. In Eur opean Conference on Computer V ision , pages 593–610. Springer , 2020. 2 [8] Bernhard Egger, W illiam AP Smith, A yush T ew ari, Stefanie W uhrer, Michael Zollhoefer , Thabo Beeler , Florian Bernard, T imo Bolkart, Adam K ortylewski, Sami Romdhani, et al. 3d morphable face models—past, present, and future. ACM T ransactions on Graphics (T oG) , 39(5):1–38, 2020. 2 , 3 [9] Christoph Feichtenhofer, Haoqi Fan, Jitendra Malik, and Kaiming He. Slowfast networks for video recognition. In Pr oceedings of the IEEE/CVF international confer ence on computer vision , pages 6202–6211, 2019. 2 [10] Daiheng Gao, Shilin Lu, Shaw W alters, W enbo Zhou, Ji- aming Chu, Jie Zhang, Bang Zhang, Mengxi Jia, Jian Zhao, Zhaoxin Fan, et al. Eraseanything: Enabling con- cept erasure in rectified flo w transformers. arXiv preprint arXiv:2412.20413 , 2024. 3 [11] Chuan Guo, Shihao Zou, Xinxin Zuo, Sen W ang, W ei Ji, Xingyu Li, and Li Cheng. Generating diverse and natural 3d human motions from text. In Pr oceedings of the IEEE/CVF confer ence on computer vision and pattern recognition , pages 5152–5161, 2022. 3 [12] Chuan Guo, Y uxuan Mu, Muhammad Gohar Javed, Sen W ang, and Li Cheng. MoMask: Generative masked mod- eling of 3D human motions. In IEEE/CVF Confer ence on Computer V ision and P attern Recognition , 2024. 3 [13] Naomi Harte and Eoin Gillen. Tcd-timit: An audio-visual corpus of continuous speech. IEEE T ransactions on Multi- media , 17(5):603–615, 2015. 2 [14] Y ining Hong, Haoyu Zhen, Peihao Chen, Shuhong Zheng, Y ilun Du, Zhenfang Chen, and Chuang Gan. 3d-llm: In- jecting the 3d world into large language models. Advances in Neural Information Processing Systems , 36:20482–20494, 2023. 2 [15] Bin Huang, Xin W ang, Hong Chen, Zihan Song, and W enwu Zhu. Vtimellm: Empower llm to grasp video moments. In Pr oceedings of the IEEE/CVF Conference on Computer V i- sion and P attern Recognition , pages 14271–14280, 2024. 1 [16] Chao Huang, Y apeng T ian, Anurag Kumar , and Chenliang Xu. Egocentric audio-visual object localization. In Pr oceed- ings of the IEEE/CVF confer ence on computer vision and pattern r ecognition , pages 22910–22921, 2023. 2 [17] Chao Huang, Zeliang Zhang, Jiang Liu, Ximeng Sun, Jialian W u, Xiaodong Y u, Ze W ang, Chenliang Xu, Emad Barsoum, and Zicheng Liu. Directional reasoning injection for fine- tuning mllms. arXiv preprint , 2025. 1 [18] Ozgur Kara, Krishna Kumar Singh, Feng Liu, Duygu Cey- lan, James M. Rehg, and T obias Hinz. Shotadapter: T ext- to-multi-shot video generation with diffusion models, 2025. 3 [19] W eijie K ong, Qi Tian, Zijian Zhang, Rox Min, Zuozhuo Dai, Jin Zhou, Jiangfeng Xiong, Xin Li, Bo Wu, Jianwei Zhang, et al. Hun yuan video: A systematic framework for large video generativ e models. arXiv preprint , 2024. 2 [20] Chunyuan Li, Clif f W ong, Sheng Zhang, Naoto Usuyama, Haotian Liu, Jianwei Y ang, Tristan Naumann, Hoifung Poon, 9 and Jianfeng Gao. Llava-med: Training a large language- and-vision assistant for biomedicine in one day . Advances in Neural Information Processing Systems , 36:28541–28564, 2023. 1 [21] Leyang Li, Shilin Lu, Y an Ren, and Adams W ai-Kin K ong. Set you straight: Auto-steering denoising tra- jectories to sidestep unwanted concepts. arXiv preprint arXiv:2504.12782 , 2025. 3 [22] T ianye Li, T imo Bolkart, Michael J Black, Hao Li, and Javier Romero. Learning a model of facial shape and expression from 4d scans. ACM T rans. Gr aph. , 36(6):194–1, 2017. 3 [23] Bin Lin, Zhen yu T ang, Y ang Y e, Jiaxi Cui, Bin Zhu, Peng Jin, Jinfa Huang, Junwu Zhang, Y atian Pang, Munan Ning, et al. Moe-llav a: Mixture of experts for large vision- language models. arXiv pr eprint arXiv:2401.15947 , 2024. 2 [24] Bin Lin, Y ang Y e, Bin Zhu, Jiaxi Cui, Munan Ning, Peng Jin, and Li Y uan. V ideo-llav a: Learning united visual repre- sentation by alignment before projection. In Proceedings of the 2024 Confer ence on Empirical Methods in Natural Lan- guage Pr ocessing , pages 5971–5984, 2024. 1 [25] Ji Lin, Chuang Gan, and Song Han. Tsm: T emporal shift module for efficient video understanding. In Pr oceedings of the IEEE/CVF international conference on computer vision , pages 7083–7093, 2019. 2 [26] Jing Lin, Ailing Zeng, Shunlin Lu, Y uanhao Cai, Ruimao Zhang, Haoqian W ang, and Lei Zhang. Motion-x: A large-scale 3d e xpressiv e whole-body human motion dataset. Advances in Neural Information Pr ocessing Systems , 36: 25268–25280, 2023. 3 [27] Mingdian Liu, Y ilin Liu, Gurunandan Krishnan, Karl S Bayer , and Bing Zhou. T2m-x: Learning expressiv e text- to-motion generation from partially annotated data, 2024. 5 [28] Pinxin Liu, Haiyang Liu, Luchuan Song, and Chenliang Xu. Intentional Gesture: Deliver Y our Intentions with Gestures for Speech. arXiv preprint , 2025. 3 [29] Pinxin Liu, Pengfei Zhang, Hyeongwoo Kim, Pablo Gar- rido, Ari Shapiro, and K yle Olszewski. Contextual Ges- ture: Co-Speech Gesture V ideo Generation through Conte xt- aware Gesture Representation. In A CM International Con- fer ence on Multimedia , 2025. 3 [30] Stev en R Livingstone and Frank A Russo. The ryer- son audio-visual database of emotional speech and song (ravdess): A dynamic, multimodal set of facial and vocal expressions in north american english. PloS one , 13(5): e0196391, 2018. 2 [31] Shilin Lu, Xinghong Hu, Chengyou W ang, Lu Chen, Shulu Han, and Y uejia Han. Copy-move image forgery detection based on evolving circular domains coverage. Multimedia T ools and Applications , 81(26):37847–37872, 2022. 2 [32] Shunlin Lu, Ling-Hao Chen, Ailing Zeng, Jing Lin, Ruimao Zhang, Lei Zhang, and Heung-Y eung Shum. Humantomato: T ext-aligned whole-body motion generation. arXiv pr eprint arXiv:2310.12978 , 2023. 3 [33] Shilin Lu, Zilan W ang, Leyang Li, Y anzhu Liu, and Adams W ai-Kin K ong. Mace: Mass concept erasure in diffu- sion models. In Proceedings of the IEEE/CVF Conference on Computer V ision and P attern Recognition , pages 6430– 6440, 2024. 1 [34] Shilin Lu, Zihan Zhou, Jiayou Lu, Y uanzhi Zhu, and Adams W ai-Kin K ong. Robust watermarking using generativ e pri- ors against image editing: From benchmarking to advances. arXiv pr eprint arXiv:2410.18775 , 2024. 2 [35] Shilin Lu, Zhuming Lian, Zihan Zhou, Shaocong Zhang, Chen Zhao, and Adams W ai-Kin K ong. Does flux already know ho w to perform physically plausible image composi- tion? arXiv preprint , 2025. 1 [36] Laurens van der Maaten and Geoffrey Hinton. V isualizing data using t-sne. Journal of mac hine learning r esear ch , 9 (Nov):2579–2605, 2008. 7 [37] Muhammad Maaz, Hanoona Rasheed, Salman Khan, and Fahad Khan. V ideo-chatgpt: T owards detailed video un- derstanding via large vision and language models. In Pro- ceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (V olume 1: Long P apers) , pages 12585–12602, 2024. 2 [38] Arsha Nagrani, Joon Son Chung, W eidi Xie, and Andrew Zisserman. V oxceleb: Large-scale speak er v erification in the wild. Computer Speech & Language , 60:101027, 2020. 2 [39] OpenAI. V ideo generation models as world simulators, 2024. 3 [40] Mathis Petrovich, Michael J Black, and G ¨ ul V arol. TMR: T ext-to-motion retrie val using contrasti ve 3D human motion synthesis. In IEEE/CVF International Conference on Com- puter V ision , pages 9488–9497, 2023. 3 , 8 [41] Matthias Plappert, Christian Mandery , and T amim Asfour . The kit motion-language dataset. Big data , 4(4):236–252, 2016. 3 [42] Abhinanda R Punnakkal, Arjun Chandrasekaran, Nikos Athanasiou, Alejandra Quiros-Ramirez, and Michael J Black. Babel: Bodies, action and behavior with english la- bels. In Proceedings of the IEEE/CVF conference on com- puter vision and pattern reco gnition , pages 722–731, 2021. 3 [43] Jose Ribeiro-Gomes, T ianhui Cai, Zolt ´ an ´ A Milacski, Chen W u, Aayush Prakash, Shingo T akagi, Amaury Aubel, Daeil Kim, Alexandre Bernardino, and Fernando De La T orre. Mo- tionGPT : Human Motion Synthesis with Improv ed Di ver - sity and Realism via GPT -3 Prompting. In IEEE/CVF W in- ter Conference on Applications of Computer V ision , pages 5070–5080, 2024. 3 [44] Y unhang Shen, Chao you Fu, Peixian Chen, Mengdan Zhang, Ke Li, Xing Sun, Y unsheng W u, Shaohui Lin, and Rongrong Ji. Aligning and prompting everything all at once for uni ver- sal visual perception. In Pr oceedings of the IEEE/CVF Con- fer ence on Computer V ision and P attern Recognition , pages 13193–13203, 2024. 1 [45] Fangxun Shu, Lei Zhang, Hao Jiang, and Cihang Xie. Audio- visual llm for video understanding. In Pr oceedings of the IEEE/CVF International Confer ence on Computer V ision , pages 4246–4255, 2025. 1 [46] Uriel Singer, Amit Zohar, Y uv al Kirstain, Shelly Sheynin, Adam Polyak, Devi Parikh, and Y aniv T aigman. V ideo edit- ing via factorized diffusion distillation. In Eur opean Con- 10 fer ence on Computer V ision , pages 450–466. Springer , 2024. 3 [47] Guangzhi Sun, W enyi Y u, Changli T ang, Xianzhao Chen, T ian T an, W ei Li, Lu Lu, Zejun Ma, Y uxuan W ang, and Chao Zhang. video-salmonn: Speech-enhanced audio-visual large language models. arXiv pr eprint arXiv:2406.15704 , 2024. 1 [48] Licai Sun, Zheng Lian, Bin Liu, and Jianhua T ao. Hic- mae: Hierarchical contrastive masked autoencoder for self- supervised audio-visual emotion recognition. Information Fusion , 108:102382, 2024. 2 [49] Rui Sun, Y umin Zhang, T ejal Shah, Jiahao Sun, Shuoying Zhang, W enqi Li, Haoran Duan, Bo W ei, and Rajiv Ranjan. From sora what we can see: A surv ey of te xt-to-video gener - ation. arXiv preprint , 2024. 3 [50] Zhiyao Sun, T ian Lv , Sheng Y e, Matthieu Lin, Jenny Sheng, Y u-Hui W en, Minjing Y u, and Y ong-jin Liu. Diffposetalk: Speech-driv en stylistic 3d facial animation and head pose generation via diffusion models. ACM T ransactions on Graphics (T OG) , 43(4):1–9, 2024. 5 [51] Gemini T eam, Rohan Anil, Sebastian Bor geaud, Jean- Baptiste Alayrac, Jiahui Y u, Radu Soricut, Johan Schalkwyk, Andrew M Dai, Anja Hauth, Katie Millican, et al. Gemini: a family of highly capable multimodal models. arXiv pr eprint arXiv:2312.11805 , 2023. 2 , 3 , 5 [52] Guy T evet, Brian Gordon, Amir Hertz, Amit H Bermano, and Daniel Cohen-Or . Motionclip: Exposing human motion generation to clip space. In Eur opean Confer ence on Com- puter V ision , pages 358–374. Springer , 2022. 3 [53] Aaron V an Den Oord, Oriol V inyals, et al. Neural discrete representation learning. Advances in neural information pr o- cessing systems , 30, 2017. 2 [54] Aaron van den Oord, Y azhe Li, and Oriol V inyals. Repre- sentation learning with contrastiv e predictive coding. arXiv pr eprint arXiv:1807.03748 , 2019. 3 [55] T eam W an, Ang W ang, Baole Ai, Bin W en, Chaojie Mao, Chen-W ei Xie, Di Chen, Feiwu Y u, Haiming Zhao, Jianxiao Y ang, et al. W an: Open and advanced large-scale video gen- erativ e models. arXiv preprint , 2025. 2 , 3 [56] Kaisiyuan W ang, Qianyi W u, Linsen Song, Zhuoqian Y ang, W ayne W u, Chen Qian, Ran He, Y u Qiao, and Chen Change Loy . Mead: A large-scale audio-visual dataset for emotional talking-face generation. In Eur opean confer ence on com- puter vision , pages 700–717. Springer , 2020. 2 , 5 , 6 , 7 [57] Peng W ang, Shuai Bai, Sinan T an, Shijie W ang, Zhihao Fan, Jinze Bai, K eqin Chen, Xuejing Liu, Jialin W ang, W enbin Ge, et al. Qwen2-vl: Enhancing vision-language model’s perception of the world at any resolution. arXiv pr eprint arXiv:2409.12191 , 2024. 2 [58] Thadd ¨ aus Wiedemer , Y uxuan Li, Paul V icol, Shixiang Shane Gu, Nick Matarese, K evin Swersky , Been Kim, Priyank Jaini, and Robert Geirhos. V ideo models are zero-shot learn- ers and reasoners. arXiv pr eprint arXiv:2509.20328 , 2025. 2 , 3 [59] Chao-Y uan W u and Philipp Krahenbuhl. T owards long-form video understanding. In Pr oceedings of the IEEE/CVF Con- fer ence on Computer V ision and P attern Recognition , pages 1884–1894, 2021. 2 [60] Qize Y ang, Detao Bai, Y i-Xing Peng, and Xihan W ei. Omni- emotion: Extending video mllm with detailed face and audio modeling for multimodal emotion analysis. arXiv pr eprint arXiv:2501.09502 , 2025. 2 [61] Shukang Y in, Chaoyou Fu, Sirui Zhao, K e Li, Xing Sun, T ong Xu, and Enhong Chen. A surve y on multimodal large language models. National Science Revie w , 11(12): nwae403, 2024. 1 [62] Xinlei Y u, Zhangquan Chen, Y udong Zhang, Shilin Lu, Ruolin Shen, Jiangning Zhang, Xiaobin Hu, Y anwei Fu, and Shuicheng Y an. V isual document understanding and ques- tion answering: A multi-agent collaboration frame work with test-time scaling. arXiv preprint , 2025. 1 [63] Shenghai Y uan, Jinfa Huang, Xianyi He, Y unyang Ge, Y u- jun Shi, Liuhan Chen, Jiebo Luo, and Li Y uan. Identity- preserving text-to-video generation by frequency decompo- sition. In Pr oceedings of the IEEE/CVF Confer ence on Com- puter V ision and P attern Recognition (CVPR) , pages 12978– 12988, 2025. 3 [64] Hao Zhang, Feng Li, Shilong Liu, Lei Zhang, Hang Su, Jun Zhu, Lionel M Ni, and Heung-Y eung Shum. Dino: Detr with improv ed denoising anchor boxes for end-to-end object detection. arXiv preprint , 2022. 1 [65] Jianrong Zhang, Y angsong Zhang, Xiaodong Cun, Shaoli Huang, Y ong Zhang, Hongwei Zhao, Hongtao Lu, and Xi Shen. T2M-GPT: Generating Human Motion from T extual Descriptions with Discrete Representations. In IEEE/CVF Confer ence on Computer V ision and P attern Recognition , 2023. 3 , 5 [66] Pengfei Zhang, Pinxin Liu, P ablo Garrido, Hyeongwoo Kim, and Bindita Chaudhuri. KinMo: Kinematic-aware Human Motion Understanding and Generation. In IEEE/CVF Inter- national Confer ence on Computer V ision , 2025. 3 [67] Xiaoman Zhang, Chaoyi W u, Ziheng Zhao, W eixiong Lin, Y a Zhang, Y anfeng W ang, and W eidi Xie. Pmc-vqa: V i- sual instruction tuning for medical visual question answer- ing, 2024. URL https://arxiv . org/abs/2305.10415 . 1 [68] Chen Zhao, Jiawei Chen, Hongyu Li, Zhuoliang Kang, Shilin Lu, Xiaoming W ei, Kai Zhang, Jian Y ang, and Y ing T ai. Luv e: Latent-cascaded ultra-high-resolution video generation with dual frequency experts. arXiv pr eprint arXiv:2602.11564 , 2026. 3 [69] Jiaxing Zhao, Qize Y ang, Y ixing Peng, Detao Bai, Shimin Y ao, Boyuan Sun, Xiang Chen, Shenghao Fu, Xihan W ei, Liefeng Bo, et al. Humanomni: A large vision-speech lan- guage model for human-centric video understanding. arXiv pr eprint arXiv:2501.15111 , 2025. 2 , 5 [70] Duo Zheng, Shijia Huang, and Liwei W ang. V ideo-3d llm: Learning position-a ware video representation for 3d scene understanding. In Pr oceedings of the Computer V ision and P attern Recognition Confer ence , pages 8995–9006, 2025. 1 [71] Zangwei Zheng, Xiangyu Peng, Tianji Y ang, Chenhui Shen, Shenggui Li, Hongxin Liu, Y ukun Zhou, T ianyi Li, and Y ang Y ou. Open-sora: Democratizing efficient video production for all. arXiv preprint , 2024. 3 [72] Zihan Zhou, Shilin Lu, Shuli Leng, Shaocong Zhang, Zhum- ing Lian, Xinlei Y u, and Adams W ai-Kin Kong. Dragflow: 11 Unleashing dit priors with region based supervision for drag editing. arXiv preprint , 2025. 3 [73] Y uanzhi Zhu, Ruiqing W ang, Shilin Lu, Junnan Li, Han- shu Y an, and Kai Zhang. Oftsr: One-step flow for im- age super-resolution with tunable fidelity-realism trade-offs. arXiv pr eprint arXiv:2412.09465 , 2024. 3 12

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment