AgriChat: A Multimodal Large Language Model for Agriculture Image Understanding

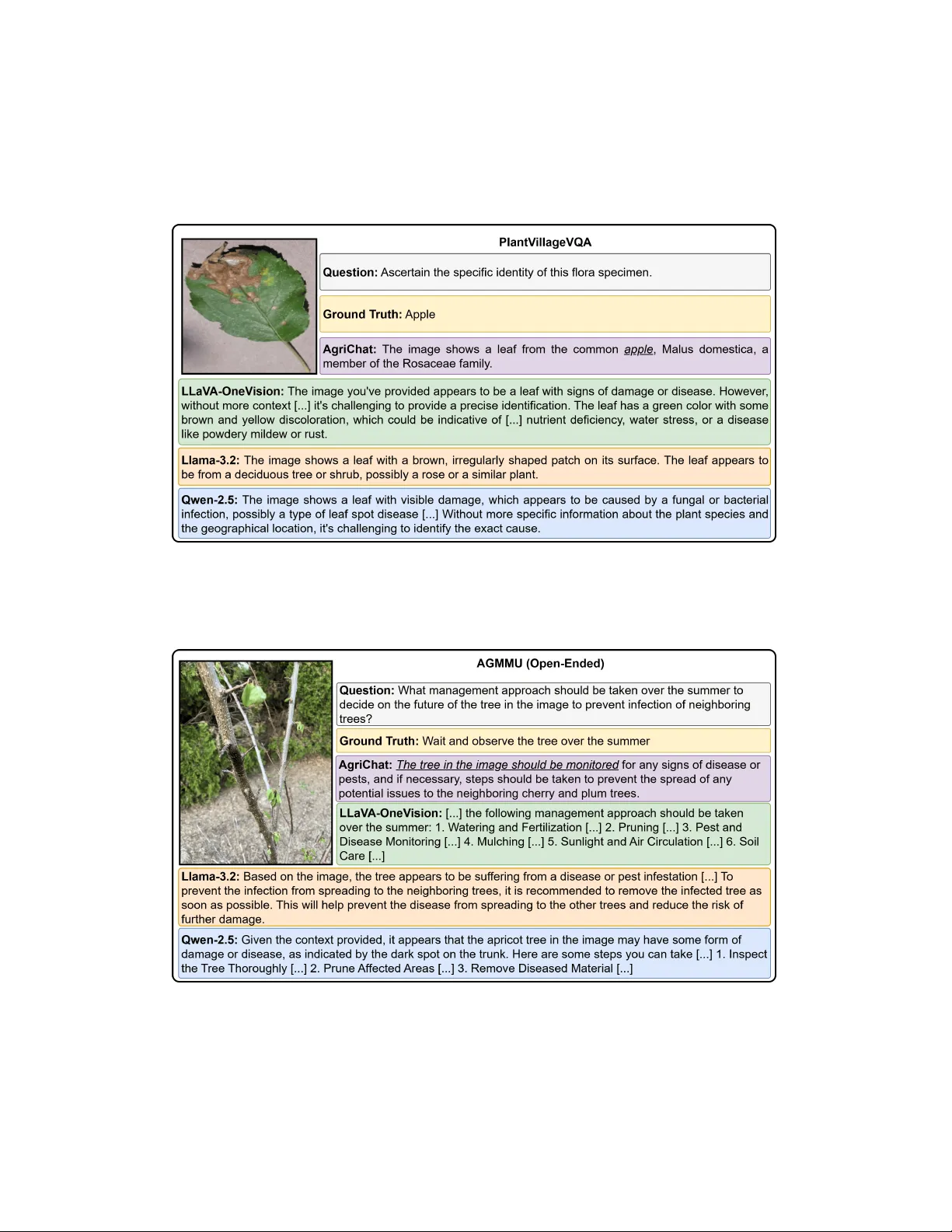

The deployment of Multimodal Large Language Models (MLLMs) in agriculture is currently stalled by a critical trade-off: the existing literature lacks the large-scale agricultural datasets required for robust model development and evaluation, while cu…

Authors: Abderrahmene Boudiaf, Irfan Hussain, Sajid Javed