TransDex: Pre-training Visuo-Tactile Policy with Point Cloud Reconstruction for Dexterous Manipulation of Transparent Objects

Dexterous manipulation enables complex tasks but suffers from self-occlusion, severe depth noise, and depth information loss when manipulating transparent objects. To solve this problem, this paper proposes TransDex, a 3D visuo-tactile fusion motor p…

Authors: Fengguan Li, Yifan Ma, Chen Qian

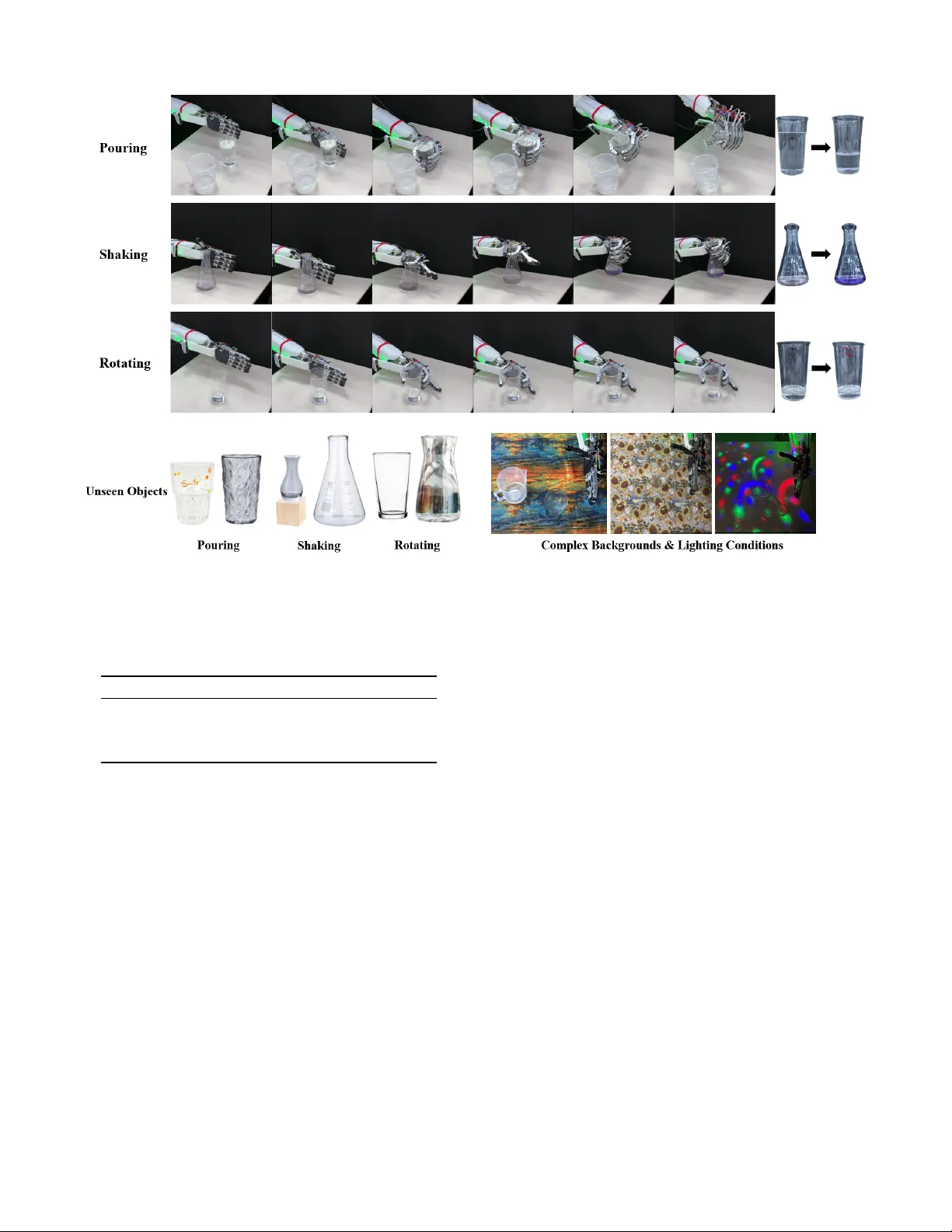

1 T ransDe x: Pre-training V isuo-T actile Polic y with Point Cloud Reconstruction for De xterous Manipulation of T ransparent Objects Fengguan Li, Y ifan Ma, Chen Qian, W entao Rao, W eiwei Shang † † Corresponding Author Uni versity of Science and T echnology of China Project page: T ransDex.Github .io Abstract —Dexterous manipulation enables complex tasks but suffers from self-occlusion, se vere depth noise, and depth infor - mation loss when manipulating transparent objects. T o solve this problem, this paper pr oposes T ransDex, a 3D visuo-tactile fusion motor policy based on point cloud reconstruction pre-training . Specifically , we first propose a self-supervised point cloud re- construction pre-training approach based on T ransf ormer . This method accurately reco vers the 3D structure of objects from interactive point clouds of dexterous hands, even when random noise and large-scale masking ar e added. Building on this, T rans- Dex is constructed in which perceptual encoding adopts a fine- grained hierarchical scheme and multi-round attention mecha- nisms adaptively fuse features of the robotic arm and dexterous hand to enable differentiated motion prediction. Results from transparent object manipulation experiments conducted on a real robotic system demonstrate that T ransDex outperforms existing baseline methods. Further analysis validates the generalization capabilities of T ransDex and the effectiveness of its individual components. Note to Practitioners —This work is motivated by the practical challenges faced by robotic systems when manipulating trans- parent objects, such as perceptual difficulties arising from depth information loss, sever e noise, or self-occlusion. Existing methods often rely on intermediate perception completion steps, which are prone to failure in complex, dexterous manipulation scenarios. This limits their application in the real world. W e propose a 3D visuo-tactile fusion motor policy that performs pre-training by reconstructing the 3D structur e of objects from noisy , lar ge-scale masked interactiv e point cloud data, and utilizes hierarchical en- coding and multi-round attention mechanisms to adaptively fuse multimodal features, enabling differentiated motion prediction for robotic arm and dexterous hand. The proposed method is then validated on a real robotic system, demonstrating excellent performance and generalization capabilities. This work provides a practical, end-to-end solution for dexterous manipulation of transparent objects, r educing reliance on advanced depth sensors or complex optimization methods. Index T erms —Pre-T raining, Visuo-T actile Fusion, Dexterous Manipulation, T ranspar ent Object Manipulation. I . I N T RO D U C T I O N D EXTER OUS manipulation is a core capability that en- ables robots to perform tasks efficiently in real-world scenarios. Whether for routine tasks such as part assembly and stable grasping [ 1 – 9 ], or for comple x manipulation lik e conical flask shaking and liquid pouring in automated laboratories [ 10 , 11 ], accurate dexterous manipulation is essential. Achiev- ing such tasks autonomously with multi-fingered dexterous hands is contingent on the dev elopment of robust and efficient learning policies. In the field of learning-based robotic manipulation, re- searchers have successfully employed 2D visual inputs to train robots for a v ariety of tasks [ 4 – 6 , 12 , 13 ]. W ith the de vel- opment of this visual paradigm, recent research has placed growing emphasis on learning policies using 3D point cloud data [ 14 – 21 ], which provides spatial structural information that better aligns with the physical properties of the real world. Howe ver , visual data is inadequate for comprehensive capture of interaction dynamics, especially in precision tasks that require contact feedback. Advances in tactile sensing technology hav e led to a re- markable enhancement in the significance of tactile perception in dexterous manipulation. T actile sensors, including Gelsight [ 22 ], Uskin [ 23 ] and PaXini [ 24 ], provide essential data on contact force, pressure distribution and object pose, enabling compensation for limitations of vision in perceiving contact states. Consequently , visuo-tactile imitation learning has grad- ually gained traction [ 1 , 3 , 4 , 6 , 15 , 17 , 18 , 25 ]. Despite the integration of multiple sensory modalities, dex- terous manipulation in real-world scenarios still faces signif- icant perceptual challenges. The multi-fingered structure of dexterous hands ine vitably causes self-occlusion during in- teraction, which severely obscures critical spatial information about the hand and object postures [ 16 ]. When manipulating transparent objects, the perceptual insufficienc y problem is exacerbated due to the ef fects of light refraction and reflec- tion, resulting in noisy or even incomplete point cloud data [ 26 ]. Such perceptual ambiguity undermines the reliability of 3D representations and constrains the performance of motor policies that rely on 3D visual information. T o address these limitations, existing research has predominantly focused on intermediate optimization steps such as depth completion or segmentation of transparent objects, followed by generating grasp poses based on refined information [ 27 – 32 ]. Nev er- theless, the accuracy of the intermediate optimization steps can possibly be affected by occlusion from the dexterous hands, potentially introducing and accumulating errors. It also struggles to adapt to real-time state changes during dynamic 2 Fig. 1. The Overall Framework of the Pre-T raining-Based Visual-T actile Fusion Motor Policy , T ransDex. Perceptual information enters the encoder through a fine-grained, hierarchical manner . The hand-object interaction point cloud utilizes a pre-trained encoder , followed by an attention fusion module and two policy heads to achieve feature integration and differentiated action prediction. manipulation due to the open-loop grasping process. The dev elopment of end-to-end motor policies capable of dynamic dexterous manipulation in such perceptually ambiguous sce- narios is still under-explored [ 26 , 33 ]. As a result, the key challenges in dexterous manipulation of transparent objects can be summarized as follows: 1) Self-occlusion ambiguity: Multi-fingered structures cause inevitable self-occlusion during interaction, sev erely obscuring critical spatial information of hand-object postures. 2) T ransparent object perception deficiency: Light refraction and reflection on transparent objects exacerbate insufficient perception, leading to noisy and missing point cloud data. 3) Intermediate optimization limitations: Existing methods rely on intermediate steps, whose accuracy is degraded by occlusion and fail to adapt to real-time dynamic state changes. Based on previous observations, this study proposes T rans- Dex, a polic y that enhances manipulation performance through robust 3D object perception. The objectiv e of this study is to dev elop a robust end-to-end visuo-tactile motor policy that directly addresses perceptual ambiguities, such as occlusion and interference of transparent objects, without relying on independent perception modules. The overall framew ork of T ransDex is illustrated in Fig. 1 . Specifically , we first design a Transformer -based point cloud reconstruction pre-training framew ork. The pre-trained network reconstructs complete geometric structures of objects from randomly masked and noisy interactiv e point clouds of de xterous hands, thereby enhancing the understanding of object shape and pose under sparse observations. Building upon this foundation, we further propose a visuo-tactile motor policy . The policy employs a fine-grained decomposition of multi-modal perceptual in- formation, followed by hierarchical encodings. Specifically , interactiv e point clouds of dexterous hands are processed by the pre-trained encoder . Through multiple self-attention and cross-attention modules for computation, the policy adaptively fuse features of the robotic arm and dexterous hand to enable differentiated motion prediction, based on distinct combina- tions of information. W e performed transparent object manipulation experiments on a real robotic system, including liquid pouring, flask shak- ing, and cup rotating. The comparati ve results demonstrate that T ransDex outperforms baseline policies in terms of success rate under such a scenario where perceptual information is sev erely ambiguous. W e also ev aluated the performance of the pre-trained network on the point cloud reconstruction task. Ab- lation studies verified the effecti v eness of both the pre-training method and each component of TransDe x. Further analysis rev eals that T ransDex exhibits strong generalization capability and robustness against unseen and complex scenarios. In summary , the main contrib utions of this work are as follows: 1) W e propose a T ransformer -based point cloud recon- struction pre-training method that effecti vely enhances the robot’ s understanding of object shape and pose in perceptually ambiguous scenarios. 2) Building on the pre-training method above, we propose an end-to-end 3D visuo-tactile motor policy . It refines the de- composition and encoding of perceptual information, enabling differentiated action prediction through feature combination, without relying on independent perception modules. 3) W e verified the effecti v eness and robustness of T ransDex via a series of experiments on transparent object reconstruction and manipulation conducted on a real robotic system. The rest of this article is organized as follows. Section II revie ws related works. Section III details the proposed end-to- end visuo-tactile motor policy , TransDe x. Section IV presents experimental validations of T ransDex. Finally , Section V con- cludes this work. I I . R E L A T E D W O R K A. V isuo-T actile Fusion As tactile sensation can capture subtle contact information that vision cannot, numerous studies have focused on achiev- ing robotic perception and manipulation through the fusion 3 Fig. 2. Pre-T raining Framework. First, noise is added to and masked from the original hand-object interaction point cloud to generate input data. Then, features are extracted using a pre-encoder and a T ransformer encoder . Finally , the generated query points and a Transformer decoder are emplo yed to accomplish the point cloud reconstruction task for the object. of vision and tactile sensing. A common fusion approach in volv es encoding the two modalities separately , follo wed by the fusion or concatenation of the resulting embedded features [ 1 , 3 , 4 , 34 ]. Y uan et al. [ 18 ] proposed “robot synesthesia”, which unified visual and tactile data into point cloud input by calculating the spatial coordinates of contact points via forward kinematics. 3D-V iT ac was built based on this idea and employed a higher tactile resolution [ 15 ]. Howe v er , both methods primarily utilize only normal force information, and lack ef fectiv e feature-le vel integration. Moreov er , researchers also proposed robot-agnostic visuo-tactile perception models to reconstruct hand-object interaction shapes [ 35 , 36 ], b ut these methods hav e not yet been extended to robot motion generation and control. Overall, existing visuo-tactile fusion methods still exhibit limitations in the fine-grained decom- position of perceptual information and guidance for dynamic manipulation. B. Pre-tr aining for Robotics In the field of robotic pre-training, unsupervised learning is a prev alent paradigm. Pre-training methods for visuo-tactile modalities can be categorized into two broad types: one in volv es aligning the feature spaces of visual and tactile modalities during pre-training [ 4 , 34 , 37 – 39 ], while the other focuses on pre-training within data from a single modality [ 6 , 25 , 40 – 43 ]. Common pre-training enables encoders to de- velop the ability to distinguish between different data samples or accomplish specific sub-tasks, thereby providing effecti ve guidance for training do wnstream policies. For instance, re- searchers guide the tactile encoder during pre-training to focus on learning force-related features in 3DT acDex [ 25 ], while Zhu et al. [ 37 ] use encoders to reconstruct tactile images from visual and tactile inputs, thereby enhancing the model’ s ability to infer local contact conditions and understand cross-modal correlations. Howe v er , extant pre-training methods frequently neglect to lev erage the 3D structural information of objects during interaction and lack explicit modeling of real-world disturbances such as occlusion and noise, which has a conse- quence of limiting their capabilities in complex scenarios. C. Robotic Manipulation for T ranspar ent Objects The complex refraction and reflection ef fects of transparent objects present significant challenges for target perception and state recognition in motor policy , particularly in 3D motor policy . Existing solutions for robotic manipulation of transpar- ent objects typically use a two-stage process: first, perceptual completion via depth estimation or 3D reconstruction; then, grasp pose generation using the completed results. Repre- sentativ e models follo wing this paradigm include ClearGrasp [ 27 ], SwinDRNet [ 28 ], A4T [ 29 ], and ClearDepth [ 31 ]. Nev- ertheless, the occlusion caused by dexterous hands may affect the completion effect and the inaccuracy could be amplified during the subsequent phase, directly leading to grasp failures. Furthermore, these methods are typically limited to the ex ecu- tion of grasping or rely on pre-programmed motion sequences to complete subsequent manipulation. Therefore, real-time end-to-end manipulation policies for transparent objects hold significant research value. In Maniptrans [ 10 ], researchers proposed a method for transferring human skills to dexterous robotic hands and conducted ev aluations of imitation learning, including manipulation of transparent objects. Howe ver , its highest reported success rate was only 18.44%, indicating limited practical utility . I I I . M E T H O D The overall framework operates in two phases: 1) The pre- training framew ork encodes randomly masked and noisy hand- object interaction point cloud, and reconstructs the complete object point cloud via a decoder , as described in III-A . This process endows the pre-trained network with rob ust object comprehension against incomplete perceptual scenarios. 2) Building upon the pre-trained network, the motor policy accomplishes the extraction and multi-modal integration of fine-grained visuo-tactile information, ultimately generating differentiated motion predictions for both the robotic arm and the dexterous hand, detailed in III-B . A. Pre-tr aining Based on P oint Cloud Reconstruction As illustrated in Fig. 2 , the pre-training task is defined as follows: for the original hand-object interaction point cloud, 4 under the interference of random masking and added noise, an encoder-decoder structure is utilized to predict the probability that densely generated query points in 3D space belong to the target object. This is formally represented by the following equation: P ( q ∈ O | ˜ P ′ o ) = f θ ( ˜ P ′ o ; q ) (1) where q denotes query points, O represents tar get object point cloud, e P ′ o signifies the point cloud under perceptual interference, and f θ represents the pre-trained network. The pre-training framew ork is comprised of sev eral k ey components: 1) Pre-tr aining Dataset: First, a dexterous grasping scene is constructed on PyBullet [ 44 ]. The objects in the scene are primarily sourced from the ClearPose dataset [ 45 ], encompass- ing common daily items and laboratory instruments such as various types of cups, vases, flasks, etc. Subsequently , points are uniformly sampled on the dexterous hand and the object respectiv ely to generate a complete hand-object interaction point cloud: P t o = P t hand + P t obj ect ∈ R n × 3 (2) Subsequently , random noise is added to the object point cloud, resulting in a noisy point cloud e P o ∈ R n × 3 . Ke y param- eters, including object size, position, as well as the direction, percentage, and magnitude of the added noise, are varied to ensure that the pre-trained network adapts to various noisy scenarios. Note that only dexterous hand is retained in the scenario to guide the model to focus on learning to reconstruct the object point cloud from the grasping configuration of the dexterous hand. 2) Grouping and Random Masking: Giv en e P o , c center points { C i } c i =1 were selected using Farthest Point Sam- pling(FPS). The points surrounding each center are then aggre- gated into patches via the K-Nearest Neighbors(KNN) algo- rithm. These patches are randomly masked at a ratio of R mask , resulting in masked point cloud e P ′ o . By randomly masking a portion of the patches, the model is forced to learn global- local feature relationships, thereby enhancing its generalization capability when dealing with incomplete perceptual data. 3) P oint Cloud F eature Extraction: V isible patches are fed into a pre-encoder to con vert them into T ransformer - compatible tokens. Additionally , a learnable cl s token is introduced to aggregate features from all patches within the subsequent T ransformer encoder and aid in distinguishing between different samples. The resulting visible patch tokens are then processed by the T ransformer encoder to further extract complex global correlations and local detail features from hand-object interaction point cloud. The final output is a set of high-dimensional latent features, including the feature of cl s token F [ cls ] . 4) Query P oint Generation and Decoder: The objecti ve of the pre-training is to enable the aforementioned encoder to interpret object shapes from the noisy and masked hand- object interaction point cloud. This is achieved by performing a query point classification task. W e first generate dense random points in the 3D space and merge them with the original point cloud as query points. Then the complete point cloud of the object P t obj ect is labeled as positiv e query points Q t p i N t i =1 ∈ R N t × 3 , while the remaining points in the space (including the point cloud of the dexterous hand P t hand ) are treated as negativ e query points Q n p i N n i =1 ∈ R N n × 3 . Specif- ically , if the minimum distance from a neg ativ e point to any positiv e point is smaller than the minimum distance between any two positi ve points, the negati ve point is reclassified as positiv e. This is because such points are suf ficiently close to the object’ s surface to be considered part of the object. It is important to note that positiv e query points Q t p i N t i =1 are not simply a normalized point cloud of the object. Rather , they are uniformly sampled based on the actual pose of the object within the original point cloud coordinate system of each specific grasping instance. This design forces the model to learn how to interpret the shape and pose of the object simultaneously from sparse and noisy point clouds. The overall query points { Q p i } N i =1 , where N = N t + N n , are processed by the query point encoder to generate positional embeddings. These are then fed into the Transformer decoder along with the latent features output by the encoder . The decoder produces a latent representation for each query point, which is then passed through a binary prediction head to output a confidence score { p i } N i =1 for each query point belonging to the tar get object. Critically , the encoder-decoder architecture is designed to pre vent information leakage from masked portions of the original point cloud, including the positions or embeddings of masked patches. This constraint mirrors real-world conditions in which the robot has no access to information about regions that are not in view . Enforcing this design compels the model to genuinely learn how to efficiently utilize visible information and robustly infer the structure of the object. This ensures the dev elopment of reliable object perception capabilities for real-world deployment. The training objecti ve of the pre-trained netw ork is to minimize the classification loss of the query points and the similarity of cl s features between different samples: min θ E ( ˜ P ′ o ,P t obj ect ) L cls p, y ( Q p , P t obj ect ) + λ E ˜ P ′ o L contrast F [ cls ] (3) where θ denotes the network parameters, y denotes the true binary label of the query point, λ is the weighting coefficient, and L contrast denotes the contrast loss. B. V isuo-T actile Fusion Motor P olicy After completing the pre-training based on point cloud reconstruction, only the encoder of the pre-trained network is retained, while the decoder is discarded. Endowed with pre-trained weights, the encoder can comprehend object shape and pose from perceptual ambiguous inputs, thereby providing the perceptual features essential for training the downstream motor policy . As shown in Fig. 1 , the visuo-tactile motor policy comprises four stages: 1) P oint Cloud Pr ocessing: The two point clouds obtained from the depth camera are first unified into the robot’ s base coordinate system. Fine registration is then performed using the Iterati ve Closest Point (ICP) algorithm to mitigate the 5 impact of hand-eye calibration errors on merging the point clouds. The registered point clouds are then cropped and standardized to a fixed value p using Farthest Point Sampling (FPS), resulting in a global point cloud P g ∈ R p × 6 . Here, the six channels represent three-dimensional coordinates and three RGB color channels. Subsequently , an artificial cropping volume is defined within the base coordinate frame of the dexterous hand. The global point cloud is then cropped within this volume, and the color dimensions are discarded, resulting in the vision-based hand-object interaction point cloud P v h o . Dense tactile sensing can complement sparse visual point clouds by providing detailed information about object bound- aries, which is valuable for transparent objects. Therefore, the 3D position of each taxel on the tactile sensor that registers a force reading is computed via forward kinematics using real- time robotic joint angles q ∈ R 23 , expressed in the robot’ s base coordinate frame as P t h o . These tactile-based points are then incorporated into P v h o , resulting in the final hand-object interaction point cloud P h o = P t h o + P v h o . 2) P er ceptual Encoding: Using independent encoders for different modalities, it captures global semantic information while progressi vely mining fine-grained local details. This pro- vides precise feature support for subsequent multimodal fusion and motion generation policy . The specific implementation is as follows: First, for the global point cloud P g , it is encoded using a global point cloud encoder composed of MLP layers, Layer- Norm layers, and a maximum pooling layer , which ultimately produces the perceptual feature F g . Second, for the hand-object interaction point cloud P h o , the pre-trained encoder is utilized for feature extraction. The pre- trained encoder outputs multiple feature tokens, from which only the F [ cls ] corresponding to the cl s token is used here. T o better adapt the feature for the downstream motor policy , a learnable MLP is appended after F [ cls ] , resulting in F ′ [ cls ] as the final perceptual feature of P h o . Third, in addition to supplementing boundary information in the hand-object interaction point cloud, tactile-based points can also assist in providing contact pose references for in- teraction force adjustment and contact position optimization. Therefore, tactile-based points P t h o is encoded to yield F pos t . Finally , to ef ficiently process the array-based 3D force signals from the tactile sensors, they are first reshaped into an image format I f , and then processed by a CNN-based tactile encoder , resulting in the perceptual feature F t for the tactile force information. 3) Modality Fusion Module: The feature fusion module consists of multiple self-attention and cross-attention blocks. The global point cloud feature F g , the hand-object interaction point cloud feature F ′ [ cls ] , and the tactile-based point feature F pos t form a progressiv ely refined spatial relationship. These three core spatially relev ant features are fed into a self- attention module to achie ve feature aggregation, learning the association patterns and key information within the pan-visual modalities. This process yields the self-attention processed latent feature F (1) s . F (1) s subsequently serves as the key and value, while the tactile force feature F t serves as the query , these are then input into a cross-attention module for deep interaction. Through attention weight allocation, the tactile force information modulates the features from other modalities in a targeted manner , resulting in the latent feature F (1) c . This computational process is repeated for M iterations, ultimately yielding the self-attention output of and the cross- attention output of the M th round, denoted as F ( M ) s and F ( M ) c , providing differentiated multimodal fused representations for downstream motion control tasks. This process is formally represented as follows: F ( m ) s = SelfA tten F ( m − 1) s F ( m ) c = CrossA tten F ( m − 1) c , F ( m ) s (4) where m = 0 , 1 , . . . , M , F (0) s = h F g , F ′ [ cls ] , F pos t i , F (0) c = [ F t ] . 4) F eatur e Combination and T ask Adaptation: The self- attention output F ( M ) s , while lacking direct force information in volv ement, integrates global point cloud data with local hand-object details, augmented by tactile-based points data. This results in a feature representation encompassing both en vironmental awareness and interactiv e understanding, mak- ing it siutable for guiding the pose trajectory and spatial offset correction of the robotic arm. In contrast, the cross- attention output F ( M ) c incorporates cross-modal modulated features that are primarily influenced by tactile force feedback. It emphasises details such as force feedback and contact states during tactile interaction and is augmented with visual information to percei ve object shape and pose. Consequently , it is well-suited to the fine-grained regulation of dexterous hand motion, such as grasp pose adjustment and interactive force control. Therefore, F ( M ) s is used to guide the action generation for the robotic arm, while F ( M ) c is used to guide the dexterous hand. 5) Action Generation: For the lower -dimensional robotic arm end-effector pose a a ∈ R pred T × 6 , a policy head P a composed of MLP layers is used for prediction with F ( M ) s and the robot’ s propriocepti ve state s ∈ R pred T × 22 , where pr ed T represents the timesteps for action prediction. For the high-dimensional dexterous hand actions a h ∈ R pred T × 16 , a conditional denoising diffusion model [ 12 , 46 ] serves as the dexterous hand policy head P h , which integrates F ( M ) c and s as conditions. It iteratively denoises a random Gaussian noise vector a h K ov er K steps to obtain the target action a h 0 . The specific action generation pipeline is as follows: The state input for imitation learning, denoted as S in , is defined as an observ ation sequence from the past obs T timesteps: S in,T = < { P g , P h o , P t h o , I f , s } T − obs T +1 , . . . , { P g , P h o , P t h o , I f , s } T > (5) Perceptual encoding and multi-modal feature fusion are performed based on the state input as follows: n F ( M ) s , F ( M ) c o = F usion (Enco der ( S in )) (6) Actions for the robotic arm and the dexterous hand are predicted based on the fused features as follows: b a a = P a h F ( M ) s i (7) 6 Fig. 3. Robotic System Setup: 1 a 16-DOF dexterous hand, with array tactile sensors equipped on the fingertips and finger pads; 2 a 7-DOF robotic arm; 3 depth cameras; 4 experimental items; 5 a data glove; 6 a motion capture camera. a h k − 1 = α k a h k − γ k ϵ θ a h k , k , F ( M ) c + σ k N (0 , I ) (8) where α k , γ k and σ k are parameters determined by the noise scheduler and the denoising step k , ϵ θ represents the denoising network, and N (0 , I ) represents Gaussian noise. The loss function is calculated as L a = MSE( b a a , a a ) (9) L h = MSE( ϵ k , ϵ θ ( ¯ α k a h 0 + ¯ β k ϵ k , k , F ( M ) c )) (10) L t = λ 1 L a + λ 2 L h (11) where ϵ k denotes the noise at step k in the diffusion process; ¯ α k and ¯ β k are noise scheduling parameters; λ 1 and λ 2 are loss weighting factors. I V . E X P E R I M E N T S W e performed transparent object manipulation experiments on a physical robotic platform to validate the following: 1) the applicability of T ransDex to transparent object manipulation tasks in real-world scenarios, and its performance advantages ov er existing baseline methods; 2) the effecti veness of the pre- trained network in point cloud reconstruction under perceptu- ally ambiguous scenarios in both simulation and reality , and its contribution to the performance of TransDe x; 3) the impact of other components of T ransDex on manipulation performance; 4) the robustness of TransDe x when confronted with unseen objects or other perceptual disturbances. A. Robotic System Setup As shown in Fig. 3 , the real robotic system comprises a 7- DOF humanoid robotic arm and a 16-DOF dexterous hand [ 47 ]. PaXini tactile array sensors are installed on the fingertips and fingerpads of each finger of the dexterous hand. The fingertip sensor array has a configuration of 12×6, while the fingerpad array is 10×6. Each tactile unit can measure two-dimensional tangential forces and one-di mensional normal force. T wo RealSense D435 cameras are positioned at the wrist of the robotic arm and around the workbench respectiv ely . The robot is teleoperated using a Manus-Metaglove in combination with an OptiTrack motion capture system to collect expert demonstration data. W ith its sophisticated perceptual capabil- ities enabled by multiple sensors and the fine manipulation capabilities of a high-DOF robot, this system is well-suited for manipulating transparent objects. B. Description of Dexter ous T asks T o ev aluate the performance of T ransDex, our experiment focused on dexterous manipulation tasks of transparent ob- jects. As shown in Fig. 4 , the following tasks were designed: 1) P ouring water: The robot must grasp a glass cup con- taining water from the table, move it above a measuring cup, rotate its wrist to orient the opening downward and pour the water into the measuring cup. The task is considered successful if the water is poured into the measuring cup and the glass cup is grasped stably without slipping and dropping during the process. 2) Shaking flask: The robot must mov e next to a conical flask, grip it with its thumb and index finger and use its re- maining fingers to shake it back and forth. The flask contains a mixture of glucose, sodium hydroxide (NaOH) and methylene blue solution. Initially transparent, the solution turns purple after suf ficient shaking. The task is considered successful if the robot can shake the flask continuously for ov er half a minute, causing the solution to change to purple, without dropping it. Some baseline or ablation policies were observed to tend to press the three fingers tightly against the flask, resulting in small-amplitude shaking that did not meet the intent of the task. If this shaking pattern causes discolouration, it is counted as a 0.5 success. 3) Rotating Glass Cup: The robot first mov es near the cup, then coordinates its thumb, index, and middle fingers to continuously rotate the cup. During rotation, the thumb con- tacts the cup and pushes it outwards, while simultaneously the other two fingers rotate the cup tow ards the palm, completing one rotation together . The fingers subsequently release and re- establish contact with the cup, repeating the process. The task is successful if the cup remains standing and the cumulative rotation angle exceeds 90 degrees. C. Baselines T o validate the ef fectiv eness of T ransDex, a comparativ e ev aluation of its manipulation task performance w as conducted against the following three baseline methods based on 3D point clouds: 1) 3D-V iT ac [ 15 ]: A robot synesthesia learning approach that integrates tactile-based points and force information into a point cloud representation for joint training. The original method utilizes only one-dimensional normal force informa- tion. In our experiments, it was extended to utilize three- dimensional force data to obtain richer force information. 2) DP3 [ 14 ]: A simple yet ef fectiv e 3D point cloud imita- tion learning method. The original model architecture was used in the experiments, without incorporating the tactile modality . 7 Fig. 4. Visualization of Policy’ s rollout on Three T ransparent Object Manipulation T asks , including pouring, shaking, and rotating. The unseen objects used in the test, as well as the complex backgrounds and lighting conditions, are shown at the bottom. T ABLE I S U CC E S S R A T E O F T R A NS D E X A N D B A S E L IN E P O L I C IE S Method Pouring Shaking Rotating A vg 3D-V iT ac 0% 0% 0% 0% DP3 10% 0% 50% 20% 3DT acDex-P 70% 50% 20% 47% T ransDex(Ours) 100% 80% 70% 83% *Note : Bolded content denotes the highest success rate of each task; same for the subsequent tables. 3) 3DT acDex-P [ 25 ]: A method that employs a nov el representation scheme for array-style tactile data and models tactile information using a graph structure. In our experiments, the RGB image input in the model was replaced with point cloud input consistent with our policy . These three baseline methods encompass visual point cloud driv en policy , visuo-tactile point cloud fusion, and nov el tactile representation schemes, facilitating a comprehensiv e ev aluation of the adv antages of T ransDex across key technical dimensions of dexterous manipulation. D. Effectiveness Comparison of Manipulation P olicies As shown in T able I , the proposed policy achieved an av erage success rate of 83%, which is significantly higher than that of all other baseline methods across all tasks. Notably , all attempts with 3D-V iT ac as the policy failed, as while the robot was capable of approaching the object ef fectiv ely , it consistently failed to establish contact with or lift the object. DP3 also performed poorly across the three tasks, with an av erage success rate of only 20%. During experiments, unstable end-effector movements with significant oscillations was observed, leading to task failure. This aligns with the findings of CordV ip [ 16 ] and Rise [ 48 ]: point cloud-based poli- cies generally fail to achieve high manipulation performance when the quality of point cloud data for transparent objects acquired by depth cameras is poor . Furthermore, the lack of tactile information in DP3 makes it particularly challenging to complete manipulation tasks on transparent objects using only incomplete visual data. Among the baseline methods, the 3DT acDex-P policy per- formed best, achieving an average success rate of 47%. While this policy performed well in the pouring task, it frequently exhibited significant end-effector jitter . In the shaking task, the robot’ s middle, ring, and little fingers typically remained pressed firmly against the flask after gripping it, resulting in in- sufficient shaking amplitude. For the rotating task, 3DT acDe x- P demonstrated unstable performance, with difficulties in coordinating finger movements, resulting in a success rate of only 20%. While the policy’ s well-designed representation endows it with excellent tactile perception capabilities, the inherent sparsity of the point cloud data ultimately limits its manipulation performance. By contrast, owing to the integration of point cloud re- construction pre-training, fine-grained feature encoding and feature combination-prediction design, T ransDex can deriv e 8 Fig. 5. Visualization of Point Cloud Reconstruction Perf ormance in Pre- T raining T asks and Real-W orld T ransfer Reconstruction Outcomes. robust object representations from sparse point clouds. It exhibits strong robustness against incomplete point cloud information and demonstrates better arm-hand coordination during motion. Consequently , it achieved better performance across all three tasks. E. Effectiveness of P oint Cloud Reconstruction Pre-tr aining Based on the pre-trained point cloud reconstruction net- work, the point cloud of the target object can be recon- structed by generating dense query points within a predefined bounding box and filtering those with a predicted confidence score exceeding the threshold λ : P rec = { q | f θ e P ′ o ; q > λ or f θ ( P h o ; q ) > λ } . Fig. 5 demonstrates the ef fectiv eness of the pre-training component in point cloud reconstruction tests in both simulated and real-world environments. Experiments conducted within the simulation environment demonstrate that the proposed method can accurately recon- struct the geometric shape and spatial pose of objects from hand-object interaction point cloud, even when the original point cloud is subject to the addition of noise and 70% region masking. The L2 Chamfer Distance( C D − ℓ 2 ) is adopted as the similarity metric to ev aluate the discrepancy between the reconstructed point cloud and the ground-truth point cloud of the target objects. After normalizing the hand-object in- teraction point cloud to a standardized space e P ′ o ∈ [ − 1 , 1] 3 , the average reconstruction accuracy reaches a C D − ℓ 2 of 8 . 1 × 10 − 3 , demonstrating the robustness of the model against perceptually ambiguous inputs. In zero-shot transfer experiments conducted in real-world en vironments, with the incorporation of positional information T ABLE II S U CC E S S R A T E O F A B L A T IO N S T UDY Method Pouring Shaking Rotating A vg Ours w/o Diff. Pred. 0% 0% 0% 0% Ours w/o T ac 0% 0% 20% 7% Ours w/o Pre-train 30% 30% 10% 23% T ransDex(Ours) 100% 80% 70% 83% from tactile sensors as supplementary input, the model effec- tiv ely overcame challenges such as unev en noise distribution and sparsity of point clouds inherent in real scenes. The model successfully reconstructed point cloud structures that closely matched the shapes of the target transparent objects. These results demonstrate that the pre-trained encoder can effecti v ely extract features representing the shape and pose of the interactiv e object e ven under ambiguous real-world scenarios, enabling it to provide reliable guidance for the downstream motor policy . T o quantify the contribution of the pre-training technique to the downstream motor policy , we compared the manipulation performance of the policy incorporating the pre-trained en- coder against that of a policy trained from scratch. As shown in T able II , under identical experimental conditions, the pre- trained policy achiev ed an av erage success rate of 60% higher than the policy trained from scratch. This indicates that pre- training significantly enhances the ability of the encoder to extract geometric features, thereby improving the robustness of the downstream motor policy in perceptually ambiguous scenarios. F . Ablation Study Ablation experiments were designed and conducted to an- alyze the impact of other components and information in the policy on manipulation performance. W e ev aluated the per- formance of three models with distinct ablations: one without differentiated prediction, one without tactile information, and one without pre-training. The results are presented in T able II . These indicate that differentiated prediction, tactile informa- tion and pre-training are all core components of the proposed policy . They contribute to success rate improvements of 83%, 76%, and 60% respectiv ely on transparent object manipulation tasks. W ithout tactile information, the policy failed to grasp the object in the pouring task. During the shaking task, the lack of force feedback often prev ented the thumb and index finger from maintaining a stable pinch grip after the dexterous hand lifted the flask, leading to frequent drops of the flask. Notably , in contact-rich task, such as rotating glass cup, the policy without tactile information did not wait for finger contact with the cup and tactile feedback before initiating rotation, unlike the tactile-enabled policy . While this approach resulted in a non-zero success probability , it also made the cup prone to being knocked over due to uncontrolled contact forces. The polic y lacking dif ferentiated prediction failed in all attempted trials. In the pouring and shaking tasks, the dex- terous hand could grasp the objects but was unable to move them to the correct positions or adjust them to the target 9 T ABLE III S U CC E S S R A T E O F G E N ER A L I ZAT IO N S T UDY Settings Pouring Shaking Rotating A vg Complex Backgrounds 90% 80% 70% 80% Complex Lighting 80% 80% 60% 73% Unseen Objects 100% 90% 50% 80% poses. In the rotating task, the policy demonstrated poor arm- hand coordination, failing to adjust the end-effector and finger positions based on tactile feedback. This resulted in sev ere oscillation and an inability to complete the task. These results suggest that the absence of differentiated prediction prev ented the policy from capturing the correct synergistic arm-hand mov ement patterns. By contrast, incorporating feature com- bination and differentiated prediction significantly improves the success rate of complex, coordinated manipulation tasks in volving the robotic arm and dexterous hand. G. Generalization to Complex V isual Interference and Unseen Objects T o v alidate the generalization capability of T ransDex, e xper- iments were conducted to e valuate its performance in scenarios in volving complex backgrounds and challenging lighting con- ditions, as well as in manipulating unseen objects, as illustrated in Fig. 4 . The experimental results are presented in T able III . T o validate performance under complex perceptual dis- turbances, experiments were conducted under two types of unseen comple x backgrounds and one type of challenging lighting condition. The polic y trained in the simple background was deployed directly without modification, with five attempts conducted for each scenario. The results demonstrate that T ransDex achieved average success rates of 80% and 73% in scenarios inv olving complex backgrounds and challenging lighting condition, respectiv ely . These results indicate that the 3D point cloud-based policy effecti vely resists interference from variations in background color and illumination, main- taining stable performance in transparent object manipulation tasks even under complex perceptual conditions. For each of the three tasks, two types of transparent objects that had not been seen before were selected as test objects, with fiv e attempts conducted for each one. The results demonstrate that TransDe x achie ved an average success rate of 80% on unseen objects. This indicates that the perceptual pre-training approach enhances performance by enabling the model to generalize the manipulation logic learned during training to objects outside the training dataset. Specifically , the policy achieved a 100% success rate with both unseen objects in the pouring task. In the shaking task, all attempts with the narrow-mouth conical flask were successful, whereas one attempt with the wide-mouth flask failed. This failure occurred when the fingertips collided with the rim of the flask during the initial grasping attempt. For the rotating task, the success rate with unseen objects was 50%, which is lower than the original success rate of 70%. This is primarily because, after completing one rotation, the index and middle fingers often generated counteracting forces when re-establishing contact with the wider-aperture vase, which prev ented sustained rota- tion. Furthermore, the performance of a policy based on 2D image information was compared in the rotating task under complex perceptual conditions. This policy adopted the orig- inal 3DT acDex architecture and retained its image encoder for processing 2D images. The experimental results show that with 3DT acDex, all attempts by the robot failed in the rotating task. This is primarily because the contours of transparent objects are easily confused with complex backgrounds in RGB images. By contrast, TransDe x based on point clouds achiev ed a success rate of 70% under the same conditions, performing significantly better than 3DT acDex. This validates the supe- riority of 3D point clouds over 2D images in capturing the geometric features of transparent objects. Robust perception and manipulation can be achieved through the spatial structure of the point clouds, supplemented by tactile information. V . C O N C L U S I O N This paper presents TransDe x, an end-to-end manipulation framew ork, integrating point cloud reconstruction pre-training and deep visuo-tactile fusion. TransDe x demonstrates strong perceptual robustness and motion prediction capability in dex- terous manipulation tasks of transparent objects. Experimental results show that the point cloud reconstruction pre-training technique allows the robot to accurately perceive target objects in perceptually ambiguous conditions, greatly improving the performance of the do wnstream policy in transparent objects manipulation. Furthermore, the framework improves the coor- dination and efficiency of the robotic system through feature combination and task adaptation. Notably , T ransDex works independently of perceptual optimization modules, reducing potential error accumulation and latency in intermediate steps, and making it more suitable for dynamic, real-time robotic manipulation. Nev ertheless, this work has certain limitations. Firstly , the pre-training phase relies on simulated data. While the model exhibits a certain degree of transferability in real-world sce- narios, it still requires further adaptation and adjustment for more complex environments. Secondly , the spatial resolution and response frequency of the tactile sensors restrict the performance in complex contact situations. Future work could in volv e constructing large-scale, real-world dexterous-hand interaction datasets; dev eloping tactile-adaptiv e policies; and designing higher-performance tactile sensing systems. These dev elopments would further enhance the frame work’ s overall adaptability in dynamic and complex scenarios. R E F E R E N C E S [1] I. Guzey , Y . Dai, B. Evans, S. Chintala, and L. Pinto, “See to touch: Learning tactile dexterity through visual incentiv es, ” in 2024 IEEE International Conference on Robotics and Automation (ICRA) . IEEE, 2024, pp. 13 825–13 832. [2] C. Chen, Z. Y u, H. Choi, M. Cutkosky , and J. Bohg, “Dexforce: Extracting force-informed actions from kinesthetic demonstrations for dexterous manipulation, ” IEEE Robotics and Automation Letters , 2025. [3] B. Romero, H.-S. Fang, P . Agrawal, and E. Adelson, “Eyesight hand: Design of a fully-actuated dexterous robot hand with integrated vision- based tactile sensors and compliant actuation, ” in 2024 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) . IEEE, 2024, pp. 1853–1860. 10 [4] Q. Liu, Q. Y e, Z. Sun, Y . Cui, G. Li, and J. Chen, “Masked visual- tactile pre-training for robot manipulation, ” in 2024 IEEE International Confer ence on Robotics and Automation (ICRA) . IEEE, 2024, pp. 13 859–13 875. [5] Q. Mao, Z. Liao, J. Y uan, and R. Zhu, “Multimodal tactile sensing fused with vision for dexterous robotic housekeeping, ” Nature Commu- nications , vol. 15, no. 1, p. 6871, 2024. [6] I. Guzey , B. Evans, S. Chintala, and L. Pinto, “Dexterity from touch: Self-supervised pre-training of tactile representations with robotic play , ” in Conference on Robot Learning . PMLR, 2023, pp. 3142–3166. [7] J. Y ang, Z. Cao, C. Deng, R. Antonova, S. Song, and J. Bohg, “Equibot: Sim (3)-equiv ariant diffusion policy for generalizable and data efficient learning, ” in Confer ence on Robot Learning . PMLR, 2025, pp. 1048– 1068. [8] C. W en, X. Lin, J. So, K. Chen, Q. Dou, Y . Gao, and P . Abbeel, “ Any-point trajectory modeling for policy learning, ” arXiv preprint arXiv:2401.00025 , 2023. [9] Z. Zhao, D. Zheng, Y . Chen, J. Luo, Y . W ang, P . Huang, and C. Y ang, “Integrating with multimodal information for enhancing robotic grasp- ing with vision-language models, ” IEEE T ransactions on Automation Science and Engineering , vol. 22, pp. 13 073–13 086, 2025. [10] K. Li, P . Li, T . Liu, Y . Li, and S. Huang, “Maniptrans: Ef ficient dexterous bimanual manipulation transfer via residual learning, ” in Pr oceedings of the Computer V ision and P attern Recognition Confer ence , 2025, pp. 6991–7003. [11] S. Li, Y . Huang, C. Guo, T . W u, J. Zhang, L. Zhang, and W . Ding, “Chemistry3d: Robotic interaction toolkit for chemistry experiments, ” in 2025 IEEE International Confer ence on Robotics and Automation (ICRA) . IEEE, 2025, pp. 8064–8071. [12] C. Chi, Z. Xu, S. Feng, E. Cousineau, Y . Du, B. Burchfiel, R. T edrake, and S. Song, “Diffusion policy: V isuomotor policy learning via action diffusion, ” The International Journal of Robotics Research , vol. 44, no. 10-11, pp. 1684–1704, 2025. [13] C. Chi, Z. Xu, C. Pan, E. Cousineau, B. Burchfiel, S. Feng, R. T edrake, and S. Song, “Universal manipulation interface: In-the-wild robot teach- ing without in-the-wild robots, ” arXiv preprint , 2024. [14] Y . Ze, G. Zhang, K. Zhang, C. Hu, M. W ang, and H. Xu, “3d diffusion policy: Generalizable visuomotor policy learning via simple 3d representations, ” in Proceedings of Robotics: Science and Systems (RSS) , 2024. [15] B. Huang, Y . W ang, X. Y ang, Y . Luo, and Y . Li, “3d-vitac: Learning fine-grained manipulation with visuo-tactile sensing, ” in 8th Annual Confer ence on Robot Learning , 2024. [16] Y . Fu, Q. Feng, N. Chen, Z. Zhou, M. Liu, M. W u, T . Chen, S. Rong, J. Liu, H. Dong et al. , “Cordvip: Correspondence-based visuomo- tor policy for dexterous manipulation in real-world, ” arXiv preprint arXiv:2502.08449 , 2025. [17] J. Li, T . W u, J. Zhang, Z. Chen, H. Jin, M. W u, Y . Shen, Y . Y ang, and H. Dong, “ Adapti ve visuo-tactile fusion with predictiv e force attention for dexterous manipulation, ” in 2025 IEEE/RSJ International Confer ence on Intelligent Robots and Systems (IR OS) , 2025, pp. 3232–3239. [18] Y . Y uan, H. Che, Y . Qin, B. Huang, Z.-H. Y in, K.-W . Lee, Y . Wu, S.-C. Lim, and X. W ang, “Robot synesthesia: In-hand manipulation with visuotactile sensing, ” in 2024 IEEE International Conference on Robotics and Automation (ICRA) . IEEE, 2024, pp. 6558–6565. [19] Y . Ze, Z. Chen, W . W ang, T . Chen, X. He, Y . Y uan, X. B. Peng, and J. W u, “Generalizable humanoid manipulation with 3d diffusion policies, ” in 2025 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) , 2025, pp. 2873–2880. [20] Y . Liu, Y . Y ang, Y . W ang, X. W u, J. W ang, Y . Y ao, S. Schwertfeger , S. Y ang, W . W ang, J. Y u, X. He, and Y . Ma, “Realdex: T ow ards human- like grasping for robotic dexterous hand, ” in Proceedings of the Thirty- Thir d International Joint Confer ence on Artificial Intelligence, IJCAI-24 . International Joint Conferences on Artificial Intelligence Organization, 2024, pp. 6859–6867. [21] Q. Zhao, M. Zheng, Z. Li, S. Huang, and W . Shi, “Robot dexterous grasping in cluttered scenes based on single-view point cloud, ” IEEE T ransactions on Automation Science and Engineering , vol. 22, pp. 17 160–17 169, 2025. [22] R. Patel, R. Ouyang, B. Romero, and E. Adelson, “Digger finger: Gelsight tactile sensor for object identification inside granular media, ” in International Symposium on Experimental Robotics . Springer, 2020, pp. 105–115. [23] T . P . T omo, A. Schmitz, W . K. W ong, H. Kristanto, S. Somlor , J. Hwang, L. Jamone, and S. Sugano, “Covering a robot fingertip with uskin: A soft electronic skin with distributed 3-axis force sensitive elements for robot hands, ” IEEE Robotics and Automation Letters , vol. 3, no. 1, pp. 124–131, 2017. [24] Paxini Intelligent Robot, “Paxini: High-precision multi-dimensional tactile sensors & humanoid robots. ” [Online]. A vailable: https: //www .paxini.com/ [25] T . W u, J. Li, J. Zhang, M. W u, and H. Dong, “Canonical representation and force-based pretraining of 3d tactile for dexterous visuo-tactile policy learning, ” in 2025 IEEE International Conference on Robotics and Automation (ICRA) . IEEE, 2025, pp. 6786–6792. [26] J. Jiang, G. Cao, J. Deng, T .-T . Do, and S. Luo, “Robotic perception of transparent objects: A revie w , ” IEEE T ransactions on Artificial Intelligence , vol. 5, no. 6, pp. 2547–2567, 2023. [27] S. Sajjan, M. Moore, M. Pan, G. Nagaraja, J. Lee, A. Zeng, and S. Song, “Clear grasp: 3d shape estimation of transparent objects for manipulation, ” in 2020 IEEE International Confer ence on Robotics and Automation (ICRA) . IEEE, 2020, pp. 3634–3642. [28] Q. Dai, J. Zhang, Q. Li, T . Wu, H. Dong, Z. Liu, P . T an, and H. W ang, “Domain randomization-enhanced depth simulation and restoration for perceiving and grasping specular and transparent objects, ” in European Confer ence on Computer V ision . Springer, 2022, pp. 374–391. [29] J. Jiang, G. Cao, T .-T . Do, and S. Luo, “ A4t: Hierarchical affordance detection for transparent objects depth reconstruction and manipulation, ” IEEE Robotics and Automation Letters , vol. 7, no. 4, pp. 9826–9833, 2022. [30] S. Li, H. Y u, W . Ding, H. Liu, L. Y e, C. Xia, X. W ang, and X.-P . Zhang, “V isual–tactile fusion for transparent object grasping in complex backgrounds, ” IEEE Tr ansactions on Robotics , vol. 39, no. 5, pp. 3838– 3856, 2023. [31] K. Bai, H. Zeng, L. Zhang, Y . Liu, H. Xu, Z. Chen, and J. Zhang, “Cleardepth: enhanced stereo perception of transparent objects for robotic manipulation, ” arXiv pr eprint arXiv:2409.08926 , 2024. [32] D.-H. Zhai, S. Y u, W . W ang, Y . Guan, and Y . Xia, “Tcrnet: Transparent object depth completion with cascade refinements, ” IEEE T ransactions on Automation Science and Engineering , vol. 22, pp. 1893–1912, 2025. [33] Y . Y an, H. T ian, K. Song, Y . Li, Y . Man, and L. T ong, “Transparent object depth perception network for robotic manipulation based on orientation-aware guidance and texture enhancement, ” IEEE T ransac- tions on Instrumentation and Measur ement , vol. 73, pp. 1–11, 2024. [34] Z. Sun, Z. Shi, J. Chen, Q. Liu, Y . Cui, J. Chen, and Q. Y e, “Vtao-bimanip: Masked visual-tactile-action pre-training with object understanding for bimanual dexterous manipulation, ” in 2025 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) . IEEE, 2025. [35] W . Xu, Z. Y u, H. Xue, R. Y e, S. Y ao, and C. Lu, “V isual-tactile sensing for in-hand object reconstruction, ” in Proceedings of the IEEE/CVF Confer ence on Computer V ision and P attern Recognition , 2023, pp. 8803–8812. [36] C. Jiang, W . Xu, Y . Li, Z. Y u, L. W ang, X. Hu, Z. Xie, Q. Liu, B. Y ang, X. W ang et al. , “Capturing forceful interaction with deformable objects using a deep learning-powered stretchable tactile array , ” Nature Communications , vol. 15, no. 1, p. 9513, 2024. [37] X. Zhu, B. Huang, and Y . Li, “T ouch in the wild: Learning fine-grained manipulation with a portable visuo-tactile gripper , ” arXiv preprint arXiv:2507.15062 , 2025. [38] L. W ang, X. Chen, J. Zhao, and K. He, “Scaling proprioceptiv e- visual learning with heterogeneous pre-trained transformers, ” Advances in Neural Information Pr ocessing Systems , v ol. 37, pp. 124 420–124 450, 2024. [39] S. Gano, A. George, and A. B. Farimani, “Low fidelity visuo-tactile pre- training improv es vision-only manipulation performance, ” arXiv pr eprint arXiv:2406.15639 , 2024. [40] S. Nair, A. Rajeswaran, V . Kumar , C. Finn, and A. Gupta, “R3m: A univ ersal visual representation for robot manipulation, ” in Conference on Robot Learning . PMLR, 2023, pp. 892–909. [41] G. Y an, Y .-H. W u, and X. W ang, “Dnact: Diffusion guided multi-task 3d policy learning, ” in 2025 IEEE/RSJ International Confer ence on Intelligent Robots and Systems (IROS) , 2025, pp. 9464–9471. [42] Y . Ze, Y . Liu, R. Shi, J. Qin, Z. Y uan, J. W ang, and H. Xu, “H-index: V isual reinforcement learning with hand-informed representations for dexterous manipulation, ” Advances in Neural Information Processing Systems , vol. 36, pp. 74 394–74 409, 2023. [43] Z. Y uan, T . W ei, S. Cheng, G. Zhang, Y . Chen, and H. Xu, “Learning to manipulate anywhere: A visual generalizable framework for reinforce- ment learning, ” in Confer ence on Robot Learning . PMLR, 2025, pp. 1815–1833. [44] E. Coumans and Y . Bai, “Pybullet, a python module for physics simulation for games, robotics and machine learning, ” 2016. [45] X. Chen, H. Zhang, Z. Y u, A. Opipari, and O. Chadwicke Jenkins, 11 “Clearpose: Large-scale transparent object dataset and benchmark, ” in Eur opean Conference on Computer V ision . Springer, 2022, pp. 381– 396. [46] J. Ho, A. Jain, and P . Abbeel, “Denoising dif fusion probabilistic models, ” Advances in Neural Information Processing Systems , vol. 33, pp. 6840– 6851, 2020. [47] Y . Ma, W . Shang, F . Zhang, S. Pang, S. Dai, and S. Cong, “Development of a flexible cable-driv en dexterous hand and arm–hand integrated system, ” IEEE/ASME Tr ansactions on Mechatr onics , 2025. [48] C. W ang, H. Fang, H.-S. Fang, and C. Lu, “Rise: 3d perception makes real-world robot imitation simple and effectiv e, ” in 2024 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS) . IEEE, 2024, pp. 2870–2877.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment