Exploring Low-Dimensional Subspaces in Diffusion Models for Controllable Image Editing

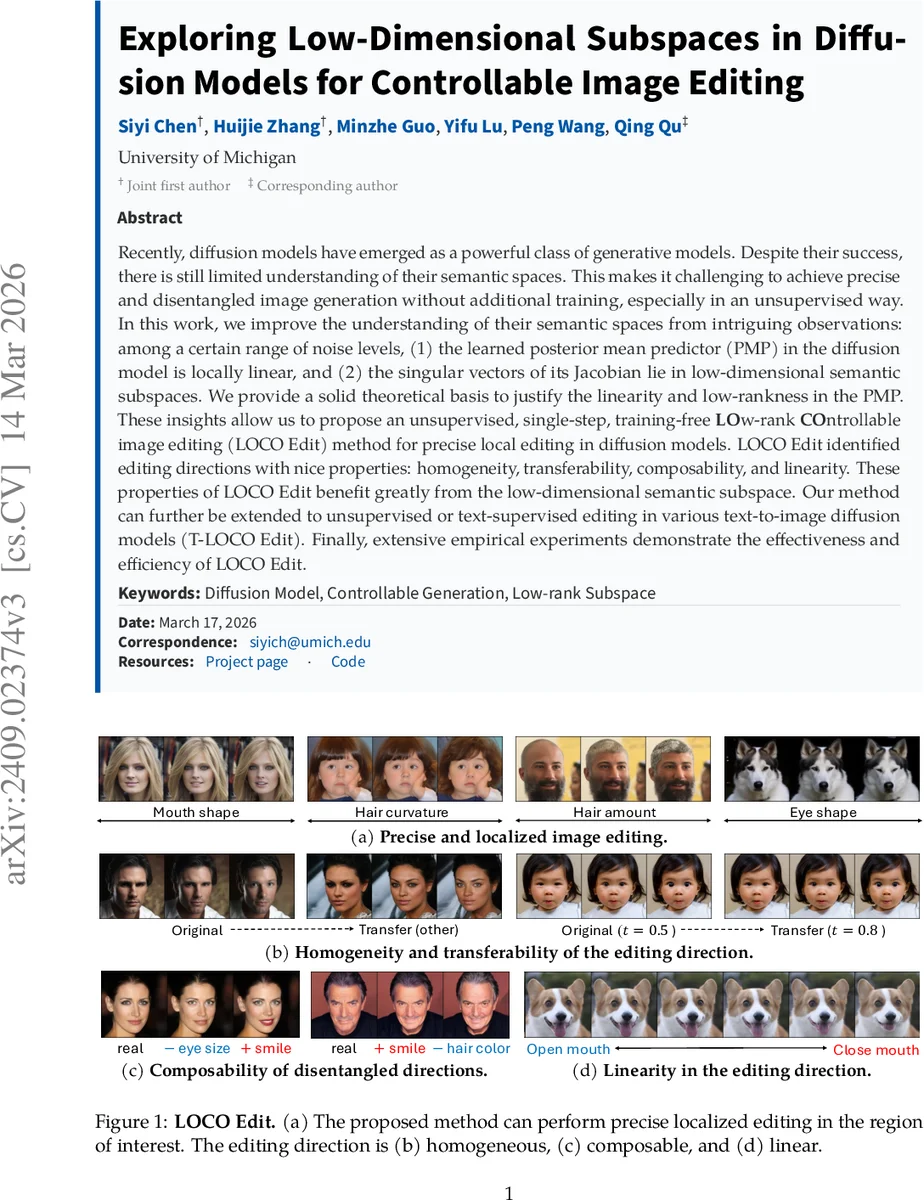

Recently, diffusion models have emerged as a powerful class of generative models. Despite their success, there is still limited understanding of their semantic spaces. This makes it challenging to achieve precise and disentangled image generation without additional training, especially in an unsupervised way. In this work, we improve the understanding of their semantic spaces from intriguing observations: among a certain range of noise levels, (1) the learned posterior mean predictor (PMP) in the diffusion model is locally linear, and (2) the singular vectors of its Jacobian lie in low-dimensional semantic subspaces. We provide a solid theoretical basis to justify the linearity and low-rankness in the PMP. These insights allow us to propose an unsupervised, single-step, training-free LOw-rank COntrollable image editing (LOCO Edit) method for precise local editing in diffusion models. LOCO Edit identified editing directions with nice properties: homogeneity, transferability, composability, and linearity. These properties of LOCO Edit benefit greatly from the low-dimensional semantic subspace. Our method can further be extended to unsupervised or text-supervised editing in various text-to-image diffusion models (T-LOCO Edit). Finally, extensive empirical experiments demonstrate the effectiveness and efficiency of LOCO Edit.

💡 Research Summary

This paper investigates the internal semantic structure of diffusion models and leverages the discovered properties to enable precise, localized image editing without any additional training. The authors focus on the posterior mean predictor (PMP) fθ,t(x_t), which maps a noisy latent x_t to an estimate of the clean image’s posterior mean. Through extensive empirical analysis across multiple architectures (UNet, Vision Transformers, ConvNets) and datasets (CIFAR‑10, CelebA, LAION‑5B), they observe two key phenomena within a broad range of diffusion timesteps (approximately t ∈

Comments & Academic Discussion

Loading comments...

Leave a Comment