MuViS: Multimodal Virtual Sensing Benchmark

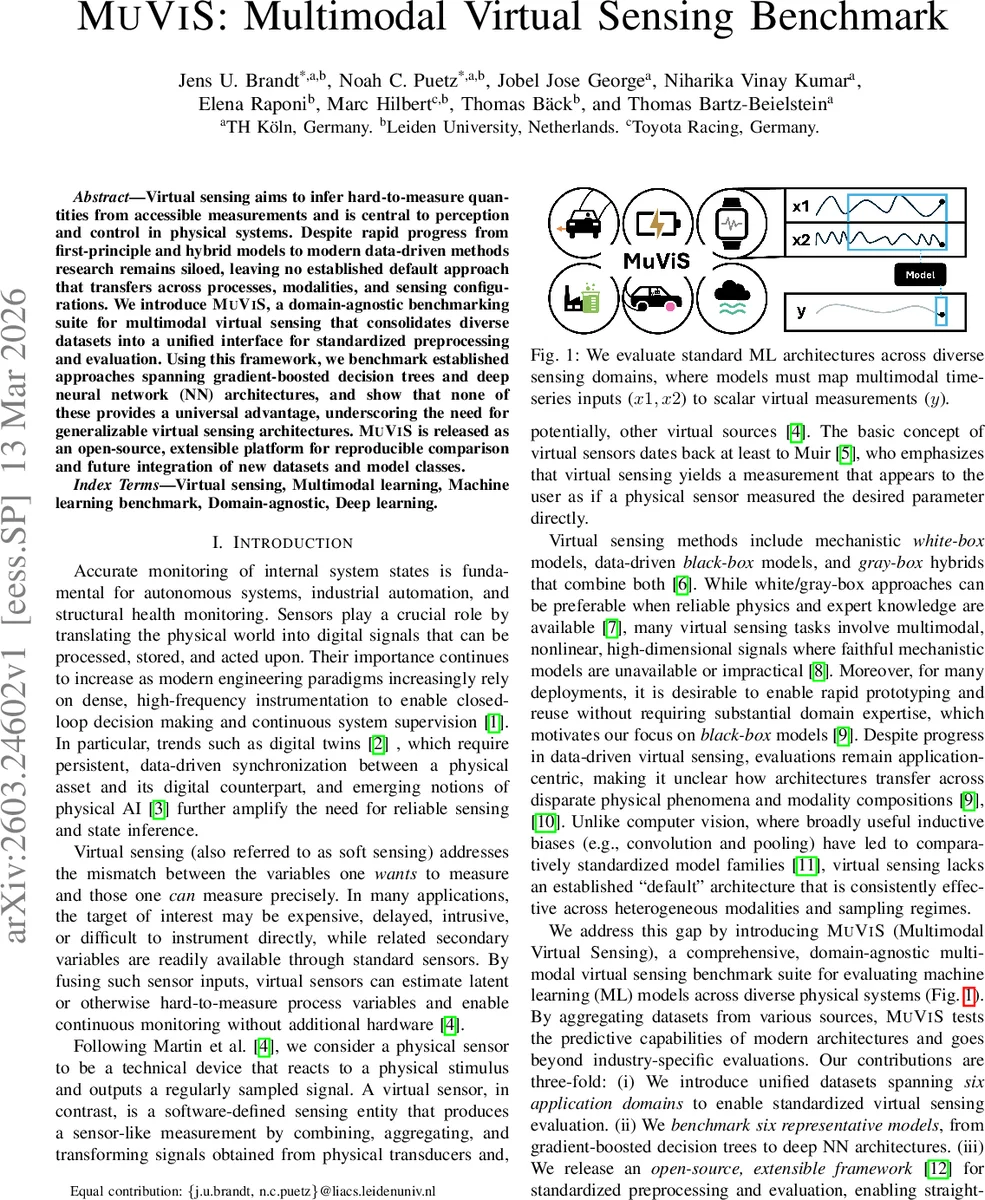

Virtual sensing aims to infer hard-to-measure quantities from accessible measurements and is central to perception and control in physical systems. Despite rapid progress from first-principle and hybrid models to modern data-driven methods research remains siloed, leaving no established default approach that transfers across processes, modalities, and sensing configurations. We introduce MuViS, a domain-agnostic benchmarking suite for multimodal virtual sensing that consolidates diverse datasets into a unified interface for standardized preprocessing and evaluation. Using this framework, we benchmark established approaches spanning gradient-boosted decision trees and deep neural network (NN) architectures, and show that none of these provides a universal advantage, underscoring the need for generalizable virtual sensing architectures. MuViS is released as an open-source, extensible platform for reproducible comparison and future integration of new datasets and model classes.

💡 Research Summary

The paper introduces MuViS, a domain‑agnostic benchmarking suite for multimodal virtual sensing, addressing the lack of a universal baseline that can transfer across disparate physical processes, sensor modalities, and configurations. Virtual sensing—also known as soft sensing—aims to infer hard‑to‑measure quantities (e.g., particulate matter concentration, tire temperature, battery state‑of‑charge, heart rate) from readily available sensor streams. While physics‑based white‑box or gray‑box models excel when domain knowledge is abundant, many real‑world problems involve high‑dimensional, nonlinear, multimodal data where such models are impractical. Consequently, data‑driven black‑box approaches have become popular, yet their evaluations remain siloed within specific applications, making it unclear how well they generalize.

MuViS formalizes the problem as a multimodal dynamic time‑series regression: each sample consists of M modality‑specific streams (x_j \in \mathbb{R}^{D_j \times T_j}). A subset (S_i \subseteq {1,\dots,M}) denotes the modalities actually observed for that sample, reflecting realistic variability in sensor availability. All streams are aligned to a common window length (s = \max_{j\in S_i} T_j); shorter histories are padded, masked, or up‑sampled. The target is a scalar (or low‑dimensional) sensor‑like measurement (y_i(t_0)) anchored at the end of the window, not a forecast. This formulation unifies diverse tasks under a single input‑output schema.

The benchmark aggregates six publicly available datasets spanning six domains:

- Beijing Air Quality (PM2.5/PM10) – 9‑dimensional hourly series, 24‑step windows.

- Vehicle Dynamics (REVS) – 12 channels from a race car, resampled to 20 Hz, 20‑step windows, target is lateral velocity.

- Tire Temperature – 11 vehicle‑state channels at 1 Hz, 50‑step windows, target is mean temperature of the front‑left tire.

- Tennessee Eastman Process (TEP) – Simulated chemical plant, 33 process/manipulated variables, 3‑minute sampling, 20‑step windows, target is concentration of a specific chemical.

- Battery State‑of‑Charge (Panasonic 18650PF) – 7 channels (current, voltage, temperature, engineered features), 10 Hz, 120‑step windows.

- Heart Rate (PPG‑DaLiA) – Wrist‑worn PPG, EDA, temperature, 3‑axis acceleration, resampled to 64 Hz, 512‑step windows, target derived from ECG.

All datasets are standardized: fixed‑length windows, uniform sampling, and identical train/validation/test splits. This enables fair cross‑dataset comparison.

Six baseline models are evaluated:

- Gradient‑Boosted Decision Trees – XGBoost and CatBoost.

- Deep Neural Networks – Multi‑Layer Perceptron (MLP), Long Short‑Term Memory (LSTM), 1‑D ResNet, and a BERT‑style Transformer encoder with learnable positional encodings.

For tree‑based and MLP models, the temporal dimension is flattened into a single feature vector. For sequence models, the raw windows are fed directly. Hyper‑parameter optimization is performed with Optuna (Tree‑structured Parzen Estimator) over 100 trials per model‑dataset pair, minimizing RMSE on a 10 % validation split. Final performance is reported on a held‑out test set; all experiments run on a single NVIDIA H100 GPU.

Key findings:

- No single architecture dominates across all domains. Statistical analysis using the Friedman test (p = 0.095) fails to reject the null hypothesis of equal performance. A Nemenyi post‑hoc test shows that the rank difference between the best and worst models barely exceeds the critical distance (CD = 2.513), indicating only marginal separations.

- Gradient‑Boosted Trees excel in three domains (Vehicle Dynamics, Tennessee Eastman, Beijing PM10), achieving the lowest RMSE, likely due to their ability to capture complex nonlinear interactions while remaining robust to limited data.

- LSTM and ResNet perform best on fast‑dynamics tasks (REVS lateral velocity, Beijing PM2.5), suggesting that recurrent and convolutional temporal inductive biases are advantageous when short‑term dynamics dominate.

- Transformer does not provide a clear advantage in the current settings, possibly because the window lengths are modest and the datasets relatively small, limiting the benefit of self‑attention.

The authors conclude that the diversity of optimal architectures underscores the need for more generalizable virtual‑sensing models that can adapt to varying modality configurations and dynamics. They release MuViS as an extensible open‑source framework, encouraging the community to add new datasets, model families, and evaluation metrics.

Limitations noted include the assumption of fixed‑length windows and full modality availability during training, which may not reflect real‑world sensor failures or irregular sampling. Moreover, the benchmark focuses solely on RMSE, omitting considerations such as latency, uncertainty quantification, or robustness—critical factors for deployment in safety‑critical systems.

Future research directions suggested are:

- Dynamic modality selection and weighting, allowing models to gracefully handle missing or degraded sensors.

- Multi‑task and uncertainty‑aware learning, integrating confidence estimates into virtual sensor outputs.

- Lightweight on‑device architectures, enabling real‑time inference on embedded hardware.

- Extended evaluation criteria, such as computational cost, energy consumption, and robustness to noise or distribution shifts.

In summary, MuViS provides a much‑needed standardized platform for evaluating multimodal virtual sensing methods across a broad spectrum of real‑world problems. By demonstrating that existing state‑of‑the‑art models lack universal superiority, the work motivates the development of more adaptable, robust, and domain‑agnostic virtual sensing architectures.

Comments & Academic Discussion

Loading comments...

Leave a Comment