PLUME: Building a Network-Native Foundation Model for Wireless Traces via Protocol-Aware Tokenization

Foundation models succeed when they learn in the native structure of a modality, whether morphology-respecting tokens in language or pixels in vision. Wireless packet traces deserve the same treatment: meaning emerges from layered headers, typed fiel…

Authors: Swadhin Pradhan, Shazal Irshad, Jerome Henry

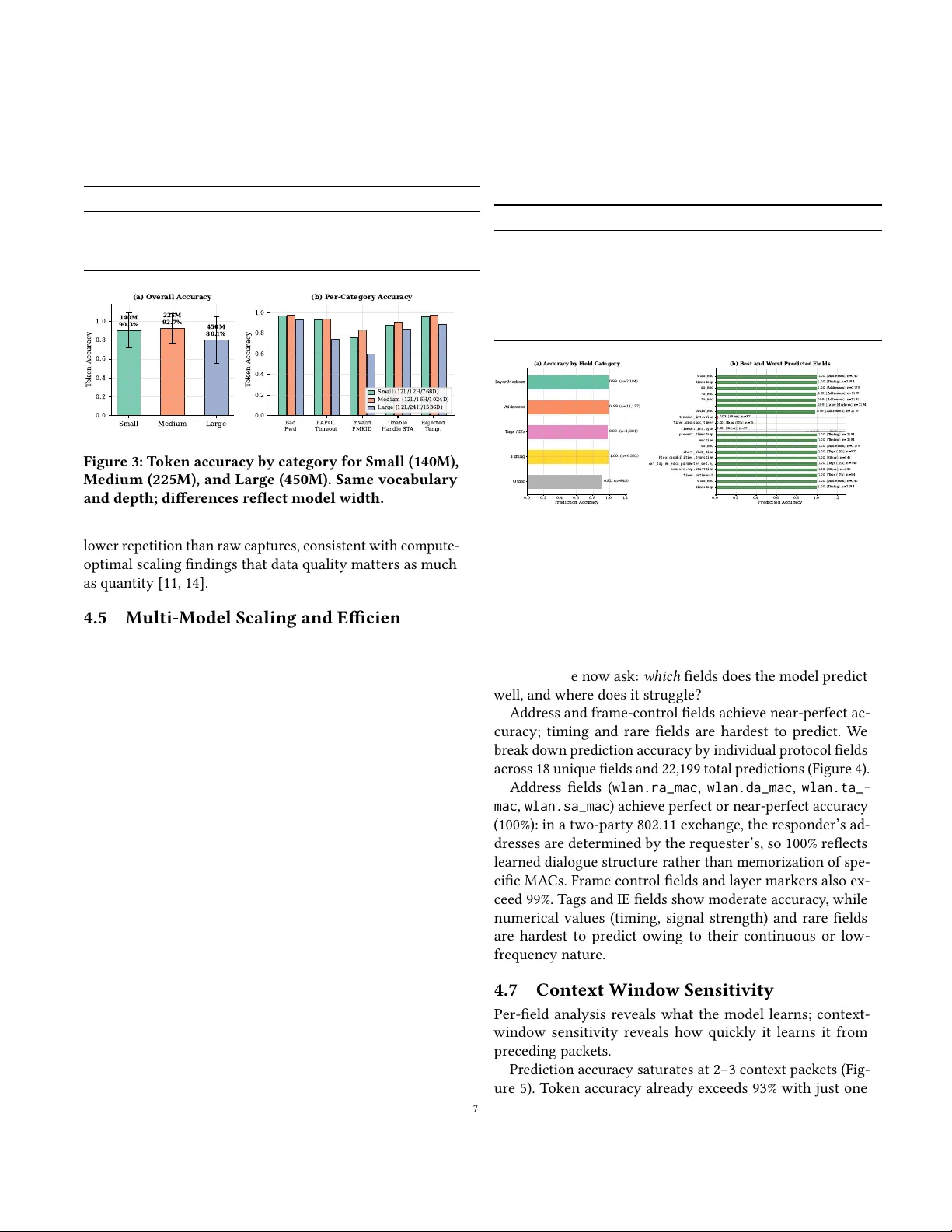

Plume : Building a Network-Nativ e Foundation Model for Wireless T races via Pr otocol- A ware T okenization Swadhin Pradhan swapradh@cisco.com Cisco Systems USA Shazal Irshad sirshad@cisco.com Cisco Systems USA Jerome Henry jerhenry@cisco.com Cisco Systems USA Abstract Foundation models succeed when they learn in the native structure of a modality , whether morphology-respecting to- kens in language or pixels in vision. Wireless packet traces deserve the same treatment: meaning emerges from lay- ered headers, typed elds, timing gaps, and cross-packet state machines, not at strings. W e present Plume ( P r otocol L anguage U nderstanding M odel for E xchanges), a compact 140M-parameter foundation model for 802.11 traces that learns from structured PDML dissections. A protocol-aware tokenizer splits along the dissector eld tree, emits gap tokens for timing, and normalizes identiers, yielding 6 . 2 × shorter sequences than BPE with higher per token information den- sity . Trained on a curated corpus, Plume achieves 74–97% next-packet token accuracy across ve real-world failure categories and AUROC ≥ 0.99 for zero-shot anomaly detec- tion. On the same prediction task, frontier LLMs (Claude Opus 4.6 [ 1 ], GPT -5.4 [ 25 ]) score comparably despite re- ceiving identical protocol context, yet Plume does so with > 600 × fewer parameters, tting on a single GP U at eec- tively zero marginal cost vs. cloud API pricing, enabling on-prem, privacy-preserving root cause analysis. 1 Introduction Foundation models succeed when they learn in the native structure of a modality . In language, GPT -4 and PaLM learn from tokens respecting morphology [ 3 , 23 ]; in vision, modern backbones learn directly from pixels. Wireless networking deserves the same treatment. 802.11 packet exchanges are not free-form text: meaning emerges from layered headers, Infor- mation Elements (IEs) carrying negotiated options, timing gaps, and cross-packet state-machine transitions. Generalist Large Language Models (LLMs) that see packets as attened strings rarely internalize this structure . Plume ( P rotocol L anguage U nderstanding M odel for E xchanges) is a compact, network-language-native founda- tion model for 802.11 wireless traces. It learns from structured dissections , specically Wireshark / tshark Packet Descrip- tion Markup Language (PDML) exports [ 36 , 37 , 39 ]. Rather than claiming novelty in pr etraining over netw ork data (cf. Lens [ 17 ], netFound [ 10 ]), we push to ward a design where representation, tokenization, and data quality are rst-class levers, and outputs can be natural-languagied to interface with LLM planners and chat agents for Root Cause Analysis (RCA) [15]. Why not simply ne-tune a general LLM? Fine-tuning on text tokens preserves an interface mismatch. The model sees surface strings rather than typed protocol elds or state- machine transitions, encouraging shortcuts that mimic rea- soning but encode bias rooted in the tokenize d surface, not the protocol itself. Distillation compounds the problem by compressing the same shortcuts while shedding capacity to question them. W e validate this empirically: frontier LLMs (Claude Opus 4.6 [ 1 ], GPT -5.4 [ 25 ]) given identical protocol context achieve 79–94% token accuracy on ne xt-packet pre- diction, while Plume reaches 75–96% with a > 600 × smaller model (§4.9). A packet-native model amortizes learning once, capturing reusable structure for association or authentica- tion dialogues, control–data interleavings, error signatures, and airtime patterns. Practically , a compact specialized model is simpler to serve on-prem, respects privacy by avoiding external APIs, and ts as a callable tool in multi-agent work- ows, aligning with compute-optimal lessons that advocate scaling tokens and model size in tandem [11]. 1.1 Representation and T okenization Structured dissections as anchors. W e treat PDML ex- ports as primary training substrates because they preserve the protocol tree (typed elds, byte osets, par ent–child r ela- tions) while remaining integrable with scrubbing, annotation, and lab eling pipelines. PDML pro vides stable eld names (e .g., wlan.fc.type_subtype , rsn.capabilities ), explicit typing, and consistent hierarchy [ 36 – 39 ]. Raw PCAPs re- main rst-class for re-dissection, but structured views anchor learning at the right boundaries. Prior foundation-style traf- c models [ 10 , 17 ] demonstrate the promise of pretraining over network data; Plume complements them by centering tokenizer design on eld and timing semantics and by sup- porting a natural-language surface for interoperability . Network-language-native tokenization. T okenization is among the most impactful design choices. O-the-shelf Byte Pair Enco ding (BPE) [ 32 ] or xe d byte chunks smear eld boundaries and timing, yielding long, low-signal se- quences; our experiments conrm BPE on PDML yields 6 . 2 × 1 more tokens per packet than Plume ’s eld-value tokenizer (T able 3). W e adopt protocol- and timing-aware tokenization that (i) splits along the dissector eld tree, (ii) emits gap tokens for inter-arrival times and aggregation windows, (iii) normal- izes identiers that should be compared structurally rather than memorized (MACs, SSIDs, etc.), (iv) adaptively sub- tokenizes variable-length options, and (v ) optionally attaches natural-languagied glosses so outputs are legible to general LLMs without exposing raw payloads. This aligns with recent byte-level modeling showing that dynamic, content-aware patching can rival xed vocabularies at scale [ 26 ]. W e pair this tokenizer with a GPT -style [ 30 ] auto-regressive obje ctive that models the packet conversation across time. 1.2 Data Quality Scaling tokens alone is not enough; what you pretrain on matters as much as how much . Naïve “ capture everything” produces severe skew: today’s pipelines are largely reac- tive , so by the time an alert res, pre-failure context (TCP SYN/A CK, DHCP DISCO VER/OFFER, 802.11 auth/assoc) is gone. Datasets over-represent failures, under-represent healthy baselines, and rarely align positives and negatives under the same SSID , or RF channel, yielding fragile classiers. W e address this via proactive intelligent capture that limits data explosion while preserving what teaches structure: edge agents with rst-sign-of-life buers maintain rolling pre- trigger windows; an adaptive p ositive/negativ e sampling engine constructs matched cohorts under identical RF/policy contexts; and Context-Enriched Capture Bundles (CECBs) pair PCAPs with synchronized metadata (Re ceived Signal Strength Indicator (RSSI) / Channel State Information (CSI) summaries, AP rmware, congestion counters, policy state). Our curation pipeline uses Hierarchical Density-Based Spatial Clustering (HDBSCAN) [20] and Maximal Marginal Relevance (MMR) [ 2 ] sampling to reduce beacon dominance from > 50% to 4.7% in the training set while preserving high per-token entropy (T able 4), aligning with evidence that quality trumps raw token count [11, 14, 28, 41]. 1.3 From Model to System: T oolability Plume is designe d to be callable . A planner (LLM or rule engine) passes Plume a PDML slice for a suspect inter val; Plume returns (i) a structured summary at ow and packet levels, (ii) inconsistency and wrong-eld ags, and (iii) lo- calized hypotheses (e.g., “PMF mismatch with legacy ST A, ” “PS-mode buering → latency spikes”). At 140M parameters it runs on-prem near capture points, exchanges explanations rather than raw packets, and respects strict data-residency constraints, processing ∼ 200 packets/sec on a single N VIDIA A10G at eectively zero marginal cost (T able 7). 1.4 Contributions (1) Plume , a compact 140M-parameter foundation model for wireless traces that learns from structur ed PDML dissections with byte-level fallback, aligning inductive bias with protocol semantics. (2) A protocol- and timing-aware tokenizer with eld-boundar y splits, gap tokens, identier normalization, and adap- tive IE segmentation, producing 6 . 2 × shorter se quences than BPE (T able 3). (3) An HDBSCAN [ 20 ]+MMR [ 2 ] curation pipeline reduc- ing beacon bias from > 50% to 4.7% while preserving rare events [11, 14, 28, 41]. (4) Evaluation on ve real-world 802.11 failure categories (50 PCAPs each) showing 74.1–97.3% token accuracy , zero-shot AUROC ≥ 0.99 for anomaly detection, and 73.2% ve-class root cause accuracy from unsupervise d features, and a head-to-head comparison with frontier LLMs showing that Plume matches or exceeds both on next-packet prediction with > 600 × fewer parameters (T able 8). (5) System integration : Plume as a callable tool in multi- agent RCA, enabling on-prem, privacy-preserving de- ployments that exchange structur ed explanations in- stead of raw packets. 2 Motivation and Background Enterprise wireless networks generate vast volumes of packet traces rich in diagnostic information, from authentication handshakes and association se quences to EAPOL exchanges and data ows. When failures occur ( e.g., bad passwords, EAPOL timeouts, invalid Pair wise Master Key Identiers (PMKIDs)), the root cause is typically buried in subtle cross- packet patterns such as a missing acknowledgment, an une x- pected eld value, or an anomalous timing gap. Diagnosing these failures demands de ep protocol expertise and man- ual Wireshark inspe ction, a process that do es not scale to modern deployments with hundreds of sites and thousands of clients. The promise and limits of general LLMs. LLMs have shown remarkable capability , yet applying them directly to packet analysis faces ve obstacles. (1) Packets are struc- tured, typed, hierarchical protocol data, not natural language; (2) standard tokenizers (BPE [ 32 ], byte-level) destroy eld boundaries, yielding se quences 6 – 16 × longer than neces- sary (T able 3); (3) sending raw packet data to cloud APIs raises privacy and compliance concerns (GDPR, HIP AA, data- residency); (4) API costs scale linearly with volume; we es- timate $4.92 per 1K packets for GPT -5.2 [ 24 ] (T able 7); and (5) even frontier models (Claude Opus 4.6, GPT -5.4) achieve only 86–89% token accuracy on ne xt-packet prediction, com- parable to a 140M-parameter protocol-native model (§4.9). 2 The data quality gap. Existing packet datasets suer from sev ere imbalance. In 802.11 networks, APs transmit beacons every ∼ 102 ms across multiple SSIDs and bands, so a 10-minute capture can contain > 100K quasi-identical beacons dominating any naïve training set. Failure-mode captures are reactiv e, triggered after the fact, so pre-failur e context (initial handshakes, setup packets) is often missing. Models trained on such skewed data learn beacon statistics rather than protocol dynamics. Our approach. Plume addresses these gaps through three interlocking design choices: (1) a protocol-aware tokenizer respecting eld boundaries and protocol hierarchy , yield- ing 6 . 2 × shorter sequences than BPE; (2) a curated training corpus where HDBSCAN [ 20 ] clustering and MMR [ 2 ] sam- pling eliminate redundancy while preserving rare events, reducing beacon dominance from > 50% to 4.7%; and (3) a family of compact auto-regressive architectures (140M–450M parameters) that run on-prem, enabling privacy-preserving deployment as a callable tool in multi-agent RCA workows. 3 T okenization for Network Captures The token is the fundamental semantic unit upon which the entire system is built. It is not merely a vocabular y-reduction device, but the determinant of what the model can learn, how eciently it learns, and how far it can see within a xed context window . T raditional tokenizers fail for network captures be cause they ignore protocol hierarchy; Plume ’s protocol-aware tokenizer addresses each failure mode . 3.1 Why Traditional T okenizers Fail Traditional tokenizers such as BPE [ 32 ] seek short, reusable sub-word units. This makes sense for human languages, where speak er and speak ing share the root sp eak , and the suxes er and ing transfer across many roots. Ho wever , this principle breaks down for network captur es. Packets are ordered bit series, each position encoding a specic role (source address, upper-layer protocol, etc.). Dis- section tools such as Wireshark translate these into eld names, e.g., wlan.da for the 802.11 destination address and wlan.fc.type_subtype for the frame type and subtype. Con- fronted with these, BPE discovers sub-words like lan and wlan , producing tokens such as w , lan , sub , and type , yielding sequences of the form: w lan. fc. type _ sub type = 0 x 000 8 . The resulting fragmentation is pr oblematic in two ways. First, orphan tokens like _ or x carry no semantic content yet consume context-window budget. Second, the sub-word decomposition encodes linguistic relationships (e .g., WLAN as a type of LAN , subtyp e as a sub class of type ) irrelevant to protocol analysis: the fact that subtyp e is linguistically sub or- dinate to type has no bearing on the values these elds carry; they could be called A and B with identical diagnostic utility . T ogether , these pathologies inate sequences by 6 . 2 × relative to eld-level tokenization (T able 3), star ving the model of the cross-packet context needed to learn protocol dynamics. 3.2 A Protocol-A ware T okenizer W e design a tokenizer aligned with the structure of captures rather than that of English. Our rst design choice is to assign one token per eld name, so that wlan.fc.type_subtype is a single, atomic token. This is not entirely new; netFound [ 10 ] and DBF-PSR [ 7 ] adopt similar eld-level tokenization, but our treatment of eld values is where the design diverges. Fields carr y values of three distinct types (strings, symbols, and numerical quantities), and we handle each dierently . Strings. Most strings are upper-layer (Layer 7) entries meaningful in the human dimension, such as a URL, an ap- plication name, or an H T TP user agent. W e tokenize these with a secondary BPE tokenizer adapted to human language, preserving their natural sub-word structure . Symbols. Plume could learn to recognize codes irrespec- tive of representation, e.g., tcp.flags=0x10 versus tcp.flags =ACK . Howev er , network exchanges are dialogues, and sym- bols mark their rhythm. A TCP A CK validates that the pre- vious segment was received, just as an 802.11 A CK validates reception of the prior frame. This semantic equivalence is opaque with hex codes ( 0x10 , 0x1D ) but immediately visible with symbolic names ( ACK ). W e therefore expand all symbols to their word repr esentation. Numerical values. Network captures carry a wide variety of numerical values: time-series quantities ( frame.time_- delta ), identiers ( ip.src = 192.168.0.2 ), and measure- ments ( wlan_radio.signal_dbm = -62 ). Measurements ex- press both a quantity (“ − 62 dBm”) and a quality (“signal level is good”). W e retain raw numerical values during pretrain- ing and teach the mo del to associate quantity ranges with qualitative meaning during post-training. Identiers require special care because they convey mul- tiple levels of meaning. The address 192.168.0.2 identi- es a unique device, but an administrator also knows it is the se cond host in the 192.168.0.0/24 subnet, that any 192.168.0.x address shares the same Layer 2 domain, and that the domain contains at most 254 hosts. W e represent IP addresses in two complementary forms during pretraining: as a string capturing device identity , and as a group of num- bers capturing the hierarchical address structure. W e apply the same dual representation to MA C addresses: the vendor OUI is separated from the device-specic sux, letting the model learn vendor-specic patterns. 3.3 Field Filters and Layer Identiers A frame in a network capture is a long series of elds and values, many redundant or irrelevant. Sev eral elds express 3 the same quantity in dierent contexts ( e.g., radiotap.dbm_- antsignal , and wlan_radio.signal_dbm all report received signal strength). W e remove such duplicates and suppress elds carrying only vacuous negative ags; for example, a 5 GHz capture that reports “not 900 MHz, ” “not 800 MHz, ” “non-CCK, ” and “non-GSM. ” Out of 100–120 elds per frame, this suppresses ∼ 40, retaining only elds carrying positive information or negative information wher e the positive case is plausible. Network captures also encode protocol layering, visible in Wireshark’s tree view or in PDML’s hierarchical structur e. When converted to a at sequence, this layering is lost. How- ever , layering is fundamental: the model must distinguish an 802.11 Layer 2 A CK from a TCP Layer 4 ACK, because these ags expr ess dialogues between dierent entities susceptible to dierent failure modes. W e therefore insert explicit lay er boundary markers: [PACKET_START] [FRAME_START] frame.time_relative 1.834 frame.time_delta 0.002 [FRAME_END] [WLAN_START] wlan.fc.type Data wlan.fc.subtype QoS Data wlan.seq 16 wlan.sa 34:f8:e7:0e:68:d9 wlan.da 6c:6a:77:45:70:6d [WLAN_END] [IP_START] ip.src 10.7.40.10 ip.dst 10.3.152.95 [IP_END] [DNS_START] dns.flags.response 1 dns.qry.name ws-goguardian.pusher.com dns.flags.rcode NoError [DNS_END] [PACKET_END] 3.4 Dataset and Training A network foundation model must supp ort several modes of reasoning. A uto-regressiv e queries (“what should the net- work answer to this client request?”) demand a generative model; encoder-style queries (“which eld value is anoma- lous?”) demand bidirectional context. Although specialized training is always preferable, an auto-regr essive model can emulate encoder-style responses via ne-tuning, whereas the rev erse is far harder . W e therefore train Plume for Causal Language Modeling (CLM). Addressing dataset bias. In 802.11 networks, the AP sends beacons every ∼ 102 ms across multiple SSIDs and bands. A single AP supporting 6 SSIDs in 3 bands produces beacons that, over a 10-minute capture, can number > 100K quasi-identical frames dominating any naïve training set. Similarly , clients of similar brands may emit the same keepalive messages, and clients in specic failure conditions may re- peat the same request indenitely , skewing the corpus. W e address this through a thr ee-stage curation pipeline. First, we tokenize each frame and embed it via a generalist embedding model (mxbai-embed-large [ 16 ]), producing a 1024-dimensional vector capturing both the general intent and internal structure of each frame. Se cond, we project these vectors and apply HDBSCAN [ 20 ] clustering, which surfaces ∼ 25K clusters, each made of typical representatives and vari- ants. As expected, many frames are near-duplicates while others are rare; the Uniform Manifold Appr oximation and Projection ( UMAP) [ 21 ] projection in Figure 1b illustrates this distribution. 200 400 600 800 1000 Frames per cluster 1 0 0 1 0 1 1 0 2 Number of clusters 308 clusters median = 88 noise = 9,191 (a) Frame counts p er cluster . UMAP -1 UMAP -2 308 clusters (b) UMAP of embeddings. Figure 1: HDBSCAN-based curation. (a) Long-tail clus- ter sizes; beacon-dominated clusters contain thousands of near-identical frames. (b) Frames group by protocol function, validating clustering-based deduplication. Third, we apply cosine similarity with MMR [ 2 ] to select up to 100 repr esentative samples fr om each cluster . For small clusters with fewer than 100 members, we identify varying elds via cosine similarity and generate synthetic members until 100 ar e collected. This reduces beacon dominance fr om > 50% to 4.7% with 7.6 bits of token entropy (T able 4). 4 Evaluation W e organize the evaluation as a progressive argument. W e rst establish why Plume works by validating each design lever: architecture choice (§4.2), tokenization (§4.3), dataset curation (§4.4), and model scaling (§4.5). W e then probe how deep the learned representations go via per-eld accuracy (§4.6), context-window sensitivity (§4.7), and cross-category generalization (§4.8). Next, w e ask how Plume compares to frontier LLMs on the same prediction task (§4.9). Finally , we present what Plume achieves on three downstream tasks: next-packet prediction (§4.10), zero-shot anomaly detection (§4.11), and root cause classication (§4.12). 4.1 Experimental Setup Model architectur e. Plume uses a GPT -2 [ 30 ] backb one with 12 transformer [ 34 ] layers, 12 attention heads, and 768- dimensional embeddings, totaling 140M parameters. The con- text window is 2,048 tokens. W e train three model sizes shar- ing the same depth (12 layers) and vocabulary (69K tokens) but diering in width: Small (12H/768D, 140M), Medium 4 (16H/1024D , 225M), and Large (24H/1536D, 450M). All mod- els are trained from scratch with causal language modeling using AdamW [ 19 ] ( 𝛽 1 = 0 . 9 , 𝛽 2 = 0 . 95 ), learning rate 7 × 10 − 4 with cosine de cay to 7 × 10 − 5 , 100 warmup iterations, gra- dient clipping at 1.0, for 2,000 iterations (20 epochs) with eective batch size 12 ( 3 × 4 gradient accumulation). Hardware . W e train and evaluate on an A WS g5.12xlarge instance (4 × NVIDIA A10G, 48 vCP Us, 192 GB RAM; $5.67/hr on-demand), using a single A10G (24 GB GDDR6, 35 TFLOPS FP32) per run. Training takes ∼ 6 h (Small), ∼ 10 h (Me dium), and ∼ 16 h (Large). At inference, the Small model occupies 280 MB in FP16 with 594 MB peak GP U memor y; Medium requires 449 MB (937 MB peak) and Large 901 MB (1,865 MB peak), all under 8% of the A10G’s 24 GB VRAM. Training data. The training corpus consists of 7,890 PCAP les (149,238 packets, 48.9M tokens) curated via HDBSCAN [ 20 ] clustering and MMR [ 2 ] sampling from enterprise 802.11 cap- tures. The validation set contains 2,023 les (38,669 packets, 12.7M tokens). The vocabulary comprises 69,842 tokens. All PCAPs are dissected with a single tshark version (4.2.x) [ 37 ] to ensure consistent PDML eld names; dierent Wireshark versions may rename or r estructure dissector elds, so pin- ning the version is necessary for reproducibility . T est categories. W e evaluate on ve distinct wireless failure categories from real enterprise deplo yments: • Bad Password (9,960 les, 116K packets): A uthentica- tion failures due to incorrect credentials. • EAPOL Timeout (9,992 les, 257K packets): Authen- tication failures where the exchange times out. • Invalid PMKID (2,252 les, 43K packets): Failures from invalid PMKIDs during fast BSS transition. • Unable to Handle New ST A (9,997 les, 144K pack- ets): AP-side rejections when the station table is full. • Rejected T emporarily (9,987 les, 188K packets): As- sociation rejections via transient AP conditions. For each categor y , we randomly sample 50 PCAPs and evaluate next-packet prediction: given the rst 𝑘 packets, the model auto-regressively predicts packet 𝑘 + 1 . 4.2 Architecture and Baselines Before examining downstream results, w e isolate the contri- bution of the transformer architecture itself. Baselines. W e compare Plume against four baselines: (1) Random : uniform random token prediction; (2) Most- Frequent : always predicting the most common token; (3) 3- gram : a trigram language model trained on the same token stream, predicting the most probable next token given the preceding two; and (4) BERT (encoder ) [ 6 ]: a masked language model using the same tokenizer , which can classify but can- not generate or scor e likelihoods natively . W e do not include T able 1: Design-space comparison of foundation mo d- els traine d on networking data. MLM = Masked Lan- guage Modeling, CLM = Causal Language Modeling, Span = masked span prediction. ✓ = supported, ✗ = not supported, – = not reported. netFound [10] Lens [17] NetGPT [22] LLMcap [33] Plume Architecture Enc. Enc-Dec Dec. Enc. Dec. Parameters 640M – – ∼ 66M 140M Objective MLM Span CLM MLM CLM T okenization Field BPE BPE W ordPiece Field Layer hierarchy Partial ✗ ✗ ✗ ✓ 802.11 mgmt/ctrl ✗ ✗ ✗ ✗ ✓ Trac domain Wired Wired Wired T elecom 802.11 Generation ✗ ✓ ✓ ✗ ✓ T able 2: Architecture and baseline comparison. Model T oken Acc. Gen? Anomaly? Classify? Plume (GPT) 0.831 ✓ ✓ ✓ 3-gram 0.257 ✓ – – Most-Frequent 0.226 – – – Random 0.000 ✓ – – BERT (encoder) – – – ✓ netFound [ 10 ], Lens [ 17 ], NetGPT [ 22 ], or LLMcap [ 33 ] as direct baselines because all four target wir ed or encrypted trac at the ow or header-byte level and none train on 802.11 management/control frames or use PDML tokeniza- tion (T able 1). Retraining them on our corpus would require replacing their tokenizers and input pipelines, reducing the comparison to architecture alone, which is exactly what the GPT vs. BERT contrast already isolates. Moreover , net- Found’s 640M-parameter encoder is 4 . 6 × larger than Plume yet provides no generation or likelihood-scoring capability; matching its architecture while replacing its tokenizer would test neither netFound’s design nor ours, only the shared transformer backbone. Plume achieves 3 . 2 × the accuracy of the 3-gram baseline and 3 . 7 × that of Most-Frequent (T able 2), conrming that the transformer captures long-range protocol dependencies that n-gram models cannot. The 3-gram model’s 25.7% accuracy is only marginally above Most-Frequent (22.6%), indicating that local bigram context provides little predictive p ower for protocol eld sequences. Plume is the only architecture supporting generation, anomaly detection, and classication from a single next token prediction objective. The 83.1% 5 T able 3: T okenization ablation: Plume ’s protocol- aware tokenizer vs. alternatives. Compression ratio is relative to byte-level (higher is b etter ). T okenizer V ocab A vg T ok/Pkt Entropy Ratio Plume (eld-value) 69,842 326.8 7.61 16.2 × BPE on PDML 100,277 2,014.0 6.70 2.6 × Byte-level 256 5,294.7 4.75 1.0 × Flat (no layers) 1,959 332.9 7.55 15.9 × NetGPT -style 100,277 2,013.0 6.70 2.6 × accuracy her e uses a held-out sample ( 𝑛 = 405 predictions) for controlled baseline comparison; per-category evaluation in T able 9 reports categor y-specic accuracy (74.1–97.3%) on larger test sets with more context. Why decoder-only? The causal attention mask naturally models the temporal ordering of packet exchanges: request then response, challenge then reply . This provides a better in- ductive bias for protocol conversations than BERT’s [ 6 ] bidi- rectional attention, which sees future tokens during training. ELECTRA [ 4 ] oers an appealing middle ground (replaced- token detection), but requires a separate generator and can- not nativ ely generate or score arbitrary sequences. The auto- regressive factorization pr ovides per-token probabilities for free, enabling zero-shot anomaly detection, generation, and classication from a single objective perspective. 4.3 T okenization Ablation With the architecture established, we turn to the input r ep- resentation. Protocol-aware tokenization yields 6 . 2 × shorter sequences than BPE with higher per-token entropy . T able 3 compares ve dierent tokenization strategies on the same PCAP corpus. Sequence length. Figure 2 visualizes the dierence. Plume ’s eld-value tokenizer produces 6 . 2 × fewer tokens per packet than BPE and 16 . 2 × fewer than byte-level encoding. The F lat tokenizer (eld-value without layer markers) achiev es sim- ilar compression but sacrices the protocol hierarchy that enables the model to distinguish an 802.11 Layer 2 A CK from a TCP Layer 4 A CK. Information density . Plume achieves the highest per- token entropy (7.61 bits), meaning each token carries maxi- mal information. BPE and NetGPT -style tokenizers, despite larger vocabularies (100K), achieve only 6.70 bits; byte-level tokenization has the lowest entrop y (4.75 bits). Practical implications. With a 2,048-token context win- dow , Plume can process ∼ 6 complete packets per for ward pass, compared to ∼ 1 for BPE and < 0.4 for byte-level, directly impacting the model’s ability to learn cross-packet patterns. PL UME (field-value) BPE on PDML Byte-level Flat (no layers) NetGPT -style 0 1000 2000 3000 4000 5000 A vg T okens per P acket 327 2014 5295 333 2013 T okenizer Comparison: Sequence Length per P acket Figure 2: A verage tokens per packet. Plume ’s protocol- aware tokenizer yields 6 . 2 × shorter sequences than BPE and 16 . 2 × shorter than byte-level. T able 4: Dataset statistics after HDBSCAN+MMR cura- tion. Beacon fraction drops from > 50% in raw captures to 4.7%, while token entropy remains high (7.6 bits). Metric Train V al Files 7,890 2,023 Packets 149,238 38,669 T otal tokens 48.9M 12.7M Unique tokens 58,214 22,198 A vg packet length (tokens) 326.8 326.8 T oken entropy (bits) 7.601 7.610 Beacon fraction 4.7% (vs. > 50% raw) T able 5: Per-test-category corpus statistics showing the diversity of the evaluation data. Category Files Packets A vg Len Entropy Bad Password 9,960 116,469 421.8 7.52 EAPOL Timeout 9,992 257,057 318.9 7.37 Invalid PMKID 2,252 43,224 387.5 7.67 Unable Handle ST A 9,997 143,746 377.8 7.53 Rejected T emp. 9,987 187,871 358.3 7.58 4.4 Dataset Quality Ablation Good tokens require good data. T able 4 summarizes the curated dataset statistics. The HDBSCAN [ 20 ]+MMR [ 2 ] pipeline surfaces ∼ 25K clusters and selects up to 100 repr e- sentative samples per cluster via cosine similarity with MMR sampling. T able 5 provides per-test-category corpus statistics; packet counts and average lengths var y substantially , reecting dierent protocol structures across failur e modes. Near-identical entropy and av erage packet length across splits (T able 4) conrm a well-balanced split without informa- tion leakage. Curated data achieves higher token entropy and 6 T able 6: Multi-mo del scaling: same 69K vocabular y and 12-layer depth; dierences reect mo del width. Model Params Width T oken Acc. Field Acc. Small (12H/768D) 140M 768 0.903 0.884 Medium (16H/1024D) 225M 1024 0.927 0.922 Large (24H/1536D) 450M 1536 0.801 0.780 Small Medium Large 0.0 0.2 0.4 0.6 0.8 1.0 T oken Accuracy 140M 90.3% 225M 92.7% 450M 80.1% (a) Overall Accuracy Bad Pwd EAPOL Timeout Invalid PMKID Unable Handle ST A Rejected T emp. 0.0 0.2 0.4 0.6 0.8 1.0 T oken Accuracy (b) P er-Category Accuracy Small (12L/12H/768D) Medium (12L/16H/1024D) Large (12L/24H/1536D) Figure 3: T oken accuracy by category for Small (140M), Medium (225M), and Large (450M). Same vocabulary and depth; dierences reect model width. lower repetition than raw captures, consistent with compute- optimal scaling ndings that data quality matters as much as quantity [11, 14]. 4.5 Multi-Model Scaling and Eciency Architecture, tokenizer , and data are now xed; we vary only model width to nd the compute-optimal operating point. W e compare three mo del widths trained on the same data with the same vocabular y (69K tokens) and depth (12 lay- ers): Small (12H/768D , 140M), Medium (16H/1024D , 225M), and Large (24H/1536D, 450M). Medium outperforms both alternatives (T able 6). At 92.7% overall token accuracy it edges out Small (90.3%) and substantially beats Large (80.1%). The drop at 450M parameters suggests overtting given the 48.9M-token corpus, consistent with compute-optimal scal- ing laws [11]. Prior work [13] also shows that performance penalties arise when the ratio b etween model parameters and dataset size b ecomes imbalance d, which explains the p er- formance decline for the large model. All three models shar e the same tokenizer and data, so these dierences isolate the eect of model width. Figure 3 visualizes the per-category accuracy breakdown. Eciency . T able 7 summarizes deployment characteris- tics, benchmarked on a single N VIDIA A10G (24 GB VRAM). The Small model achieves the highest throughput ( ∼ 200 pkt/s) at under 3% VRAM; even the largest variant uses under 8%, leaving ample headroom for larger batches or concurrent in- ference. The marginal cost per packet is eectively zer o for all sizes, versus $4.92 per 1K packets for GPT -5.2 [ 24 ] API calls, representing a > 500 × cost advantage at scale. T able 7: Eciency (single A10G, 24 GB) vs. GPT -5.2 API ($4.92/1K pkt at publishe d pricing [ 24 ]). Peak VRAM includes Py T orch [27] overhead. Small Medium Large GPT -5.2 Parameters 140M 225M 450M N/A Size (FP16) 280 MB 449 MB 901 MB N/A Peak VRAM 594 MB 937 MB 1,865 MB N/A Latency (mean) 280 ms 384 ms 666 ms ∼ 2 s Throughput 200.2 pkt/s 150.6 pkt/s 82.7 pkt/s N/A Cost/1K pkt ∼ $0 ∼ $0 ∼ $0 $4.92 0.0 0.2 0.4 0.6 0.8 1.0 1.2 Prediction Accuracy Layer Markers Addresses T ags / IEs Timing Other 0.99 (n=2,184) 0.99 (n=11,137) 0.99 (n=1,381) 1.00 (n=6,552) 0.92 (n=945) (a) Accuracy by Field Category 0.0 0.2 0.4 0.6 0.8 1.0 1.2 Prediction Accuracy staa_mac timestamp da_mac ra_mac ta_mac bssid_mac timeout_int.value fixed.disassoc_timer timeout_int.type present.timestamp mactime sa_mac short_slot_time htex.capabilities.transtime ext_tag.mu_edca_parameter_set.m measure.rep.starttime fixed.batimeout staa_mac timestamp 1.00 [Addresses] n=240 1.00 [Timing] n=2184 1.00 [Addresses] n=2179 0.99 [Addresses] n=2179 0.99 [Addresses] n=2181 0.99 [Layer Markers] n=2184 0.99 [Addresses] n=2179 0.03 [Other] n=37 0.00 [T ags / IEs] n=15 0.00 [Other] n=37 1.00 [Timing] n=2184 1.00 [Timing] n=2184 1.00 [Addresses] n=2179 1.00 [T ags / IEs] n=572 1.00 [Other] n=545 1.00 [T ags / IEs] n=740 1.00 [Other] n=326 1.00 [T ags / IEs] n=54 1.00 [Addresses] n=240 1.00 [Timing] n=2184 worst best (b) Best and W orst Predicted Fields Figure 4: Per-eld accuracy by categor y ( left) and 10 best/worst elds (right). Addresses and frame control are near-perfect; timing and rare elds are hardest. 4.6 Per-Field Micro-Benchmark The preceding sections show that the right architecture, to- kenizer , data, and model width produce strong aggregate accuracy . W e now ask: which elds does the model predict well, and where does it struggle? Address and frame-contr ol elds achieve near-perfect ac- curacy; timing and rare elds are hardest to predict. W e break down prediction accuracy by individual protocol elds across 18 unique elds and 22,199 total predictions (Figure 4). Address elds ( wlan.ra_mac , wlan.da_mac , wlan.ta_- mac , wlan.sa_mac ) achieve perfect or near-p erfect accuracy (100%): in a two-party 802.11 e xchange, the responder’s ad- dresses are determined by the requester’s, so 100% reects learned dialogue structure rather than memorization of spe- cic MA Cs. Frame control elds and lay er markers also ex- ceed 99%. T ags and IE elds show moderate accuracy , while numerical values (timing, signal strength) and rare elds are hardest to predict owing to their continuous or low- frequency nature. 4.7 Context Window Sensitivity Per-eld analysis rev eals what the model learns; context- window sensitivity reveals how quickly it learns it from preceding packets. Prediction accuracy saturates at 2–3 context packets (Fig- ure 5). T oken accuracy already exceeds 93% with just one 7 All 1 2 3 5 8 10 Number of Context P ackets 0.9325 0.9350 0.9375 0.9400 0.9425 0.9450 0.9475 T oken Accuracy Next-P acket Prediction Accuracy vs. Context Length Bad P assword Eapol Timeout Invalid Pmkid Unable T o Handl... Rejected T empor ... Overall Mean Figure 5: Prediction accuracy vs. context length. Accu- racy saturates by 2–3 packets, matching typical 802.11 exchange length. packet of context and plateaus by two packets. This rapid saturation conrms that Plume ’s protocol-aware tokeniza- tion captures sucient cross-packet state within a small context window . The plateau at 3–5 packets aligns with the typical length of 802.11 exchanges. 4.8 Cross-Category Generalization The model learns specic elds quickly from minimal con- text; does this knowledge transfer across failure mo des it was never explicitly trained on? WLAN-layer accuracy exceeds 97.5% across all ve failur e categories (Figure 6), conrming that Plume learns general 802.11 structure that transfers across failure modes. This headline number is dominated by address and frame-control elds that are near-perfectly predictable from conversation context (Figure 4); timing and rare elds remain the pri- mary source of prediction err or . W e train on a general 802.11 corpus, not on any specic failure categor y , and evaluate per-layer accuracy . EAPOL-layer accuracy reaches 100% in the three cate- gories containing EAPOL frames. The OTHER layer shows moderate variation (93.3–95.0% across categories) b ecause it encompasses only the unencrypted Layer 3/4 metadata (IP , ARP, DNS, DHCP headers) present in the PDML dissection; encrypted data-plane payloads are opaque to the dissector and therefore absent fr om the token stream. This is a delib- erate scope boundary: Plume operates strictly on protocol metadata visible to tshark, so encr ypted user trac is never modeled or leaked, a privacy-by-design property consistent with the on-prem deplo yment model (§5). The pairwise cate- gory similarity matrix reveals tw o natural clusters: (1) Bad Password and Unable to Handle New ST A (cosine similar- ity 1.00), sharing similar WLAN-only ows; and (2) EAPOL Timeout, Invalid PMKID, and Rejecte d T emporarily (pairwise similarity > 0.99), all involving EAPOL exchanges. EAPOL OTHER TLS WLAN Bad P assword EAPOL Timeout Invalid PMKID Unable Handle ST A Rejected T emp. 1.00 0.94 0.80 0.98 1.00 0.94 0.97 1.00 0.94 0.98 0.95 0.98 1.00 0.95 0.98 (a) P er-Layer Accuracy Bad P assword EAPOL Timeout Invalid PMKID Unable Handle ST A Rejected T emp. Bad P assword EAPOL Timeout Invalid PMKID Unable Handle ST A Rejected T emp. 1.00 0.90 0.90 0.73 0.90 0.90 1.00 1.00 0.80 1.00 0.90 1.00 1.00 0.80 1.00 0.73 0.80 0.80 1.00 0.81 0.90 1.00 1.00 0.81 1.00 (b) Category Similarity 0.0 0.2 0.4 0.6 0.8 1.0 0.90 0.92 0.94 0.96 0.98 1.00 Figure 6: Cross-category generalization. (a) Per-layer accuracy: WLAN > 97.5% across all categories. ( b) Co- sine similarity of accuracy proles: categories with shared protocol ows cluster together . T able 8: Next-packet token accuracy: Plume (Small, 140M) vs. frontier LLMs. T otal of 682 prediction pairs across ve categories. Best p er categor y in bold. Failure Category Plume Claude 4.6 GPT -5.4 Bad Password 0.960 0.854 0.790 EAPOL Timeout 0.915 0.904 0.863 Invalid PMKID 0.753 0.865 0.852 Unable to Handle New ST A 0.868 0.903 0.862 Rejected T emporarily 0.958 0.938 0.920 Overall mean 0.891 0.893 0.857 4.9 Frontier LLM Comparison Plume generalizes across elds, contexts, and categories with a 140M-parameter model. The natural question is whether frontier LLMs, with orders-of-magnitude mor e parameters and broader pretraining, can simply be prompted to match this performance. W e compare the Small model (140M parameters) against Claude Opus 4.6 [ 1 ] (via A WS Bedrock) and GPT -5.4 [ 25 ] (via Azure AI Foundry) on the same next-packet prediction task. Each LLM receives identical tokenized context and generates a free-form completion aligned to the ground-truth token sequence for scoring. Plume leads on three of ve categories (T able 8, Figure 7), with the largest margin on stereotyped authentication ows such as Bad Password, where protocol- specic inductive bias dominates. Claude edges ahead on Invalid PMKID and Unable to Handle New ST A, both in- volving diverse key-negotiation or rejection patterns where broader w orld knowledge helps. Overall means are compara- ble ( Plume 89.1%, Claude 89.3%, GPT -5.4 85.7%), but Plume achieves this at > 600 × fewer parameters and eectively zero marginal cost on a single GP U. Why does Plume win on stereotyped ows? Bad Pass- word and Rejected T emporarily follow narrow , predictable protocol sequences where eld-level tokenization and causal pretraining on 802.11 traces provide a strong inductiv e bias. 8 Bad Password EAPOL T imeout Invalid PMKID Unable Handle ST A Rejected T emp. 0.0 0.2 0.4 0.6 0.8 1.0 T oken Accuracy 0.96 0.92 0.75 0.87 0.96 0.85 0.90 0.87 0.90 0.94 0.79 0.86 0.85 0.86 0.92 (a) Per -Category Accuracy PLUME (225M) Claude Opus 4.6 GPT -5.4 PLUME Claude Opus 4.6 GPT -5.4 0.0 0.2 0.4 0.6 0.8 1.0 T oken Accuracy 0.89 0.89 0.86 (b) Overall Figure 7: Per-categor y token accuracy for Plume (Small, 140M) vs. Claude Opus 4.6 and GPT -5.4. Plume matches or exceeds frontier LLMs on stereotyped pro- tocol ows while using > 600 × fewer parameters. Frontier LLMs process the same elds as at te xt tokens and lack the protocol-aware vocabulary that lets Plume predict entire eld-value pairs in a single step. Where do LLMs help? Invalid PMKID involves diverse Fast BSS Transition (FT) patterns where valid Robust Security Network Element (RSNE), Mobility Domain IE (MDIE), and Fast Transition IE (FTIE) combinations depend on the AP’s Protected Management Frames (PMF) and FT p olicies. Fron- tier LLMs can partially recover these constraints from broad pretraining on 802.11 spe cication text, whereas Plume must infer them solely from observed token co-occurrences. This suggests that hybrid approaches, using Plume for structured protocol elds and an LLM for rare or p olicy-dependent content, could combine the strengths of both. 4.10 Next-Packet Prediction Quality Having established why Plume works and how it compares to alternatives, we now present the full do wnstream results. Plume achieves 74.1–97.3% token accuracy acr oss v e fail- ure categories (T able 9). Field accuracy is consistently above 95% in all categories despite the wide spread in token accu- racy . Bad Password is highest (97.3%) because authentication- failure sequences follow a narrow , predictable protocol ow . Invalid PMKID is lowest (74.1%) because fast-BSS-transition sequences inv olve diverse key negotiation patterns; the wide condence interval (0.658–0.822) reects a bimo dal split where PCAPs with standard PMKID renegotiation score above 0.90, while those with non-standard RSNE/MDIE/FTIE combinations in multi- AP roaming scenarios fall below 0.20. Perplexity remains low (2.1–2.3) across all categories, con- rming that the model assigns high probability to correct next tokens. V ariance analysis. Figure 8 shows the distribution of per-PCAP token accuracy . Rejecte d T emporarily exhibits the tightest spread (std = 0.063), reecting stereotyped rejec- tion sequences. Invalid PMKID has the widest (std = 0.299), consistent with the bimodal split described ab ove . T able 9: Per-category prediction quality (50 PCAPs each; ± denotes std across PCAPs). Failure Category T oken Acc. Field Acc. PPL 𝑛 Bad Password 0.973 ± 0.110 0.963 2.1 50 EAPOL Timeout 0.937 ± 0.182 0.959 2.3 50 Invalid PMKID 0.741 ± 0.299 0.957 2.3 50 Unable to Handle. ST A 0.880 ± 0.111 0.963 2.1 50 Rejected T emporarily 0.969 ± 0.063 0.962 2.1 50 Bad P assword EAPOL Timeout Invalid PMKID Unable Handle ST A Rejected T emp. 0.2 0.4 0.6 0.8 1.0 T oken Accuracy Next-P acket Prediction Accuracy by F ailure Category Figure 8: Per-PCAP token accuracy distribution ( 𝑛 = 50 ). Boxes: IQR; whiskers: 1 . 5 × IQR; circles: outliers. EAPOL WLAN OTHER TLS 0.0 0.2 0.4 0.6 0.8 1.0 V alue Prediction Accuracy P er-Protocol-Layer Prediction Accuracy Figure 9: Per-protocol-layer prediction accuracy (mean ± std across categories). EAPOL elds are predicte d perfectly; WLAN exceeds 96%; OTHER reaches 93–95%. Per-protocol-layer accuracy . Figure 9 breaks down pre- diction accuracy by protocol layer . EAPOL elds are pre- dicted p erfectly in all three categories where they appear . WLAN elds reach 96.5–97.8% and OTHER elds 93.3–95.0%, reecting the model’s strong 802.11 inductive bias from train- ing data dominated by wireless management frames. Bootstrap condence inter vals. T able 10 reports 95% bootstrap condence inter vals for token accuracy . All cate- gories show non-overlapping CIs with the random baseline (0.0%), conrming robust performance across failure modes. 4.11 Zero-Shot Anomaly Detection The per-token probabilities that drive prediction accuracy also provide a zer o-shot anomaly detector , requiring no la- beled failure data. 9 T able 10: 95% bootstrap condence inter vals for p er- category token accuracy ( 𝑛 = 50 PCAPs each). Failure Category Mean CI Lower CI Upper Bad Password 0.973 0.942 0.996 EAPOL Timeout 0.937 0.886 0.977 Invalid PMKID 0.741 0.658 0.822 Unable to Handle New ST A 0.880 0.849 0.912 Rejected T emporarily 0.969 0.952 0.986 T able 11: Zero-shot anomaly dete ction (AU- ROC/AUPRC, 𝑛 = 50 per categor y vs. healthy baselines). Failure Category Plume Pkt-Length AUROC AUPRC AUROC AUPRC Bad Password 0.99 0.99 0.95 0.95 EAPOL Timeout 1.00 1.00 0.78 0.76 Invalid PMKID 1.00 1.00 0.68 0.75 Unable to Handle. ST A 1.00 1.00 0.79 0.80 Rejected T emporarily 1.00 1.00 0.79 0.73 Plume achieves AUROC ≥ 0.99 across all ve failure cate- gories without any labeled anomaly data. For each PCAP , we compute the mean per-token probability under the model. Healthy captures yield high mean probabilities ( > 0.99), while failure-category captures show systematically low er proba- bilities; we use this gap to discriminate healthy from failure captures via a simple threshold. W e set a single global thresh- old at the 5th percentile of mean per-token probability over the healthy validation split (not tune d per categor y); captures scoring below this threshold are agged as anomalous. No failure-category labels or failure-category statistics inform the threshold, preserving the zero-shot property . T able 11 reports AUROC and Area Under the Precision- Recall Curve (AUPRC) for each failur e category . The mean per-token probability gap between healthy and failure cap- tures is sucient for zero-shot detection. Statistical baseline. A simple packet-length baseline (mean tokens per packet as anomaly score) achie ves AUROC 0.95 for Bad Password, where failure PCAPs are substantially longer . For categories with packet lengths closer to healthy trac, such as Invalid PMKID (0.68) and EAPOL Timeout (0.78), the statistical baseline degrades sharply , while Plume maintains AUROC ≥ 0.99 across all ve categories (T able 11). Why AUROC ≥ 0.99 is not data leakage. The model is trained exclusiv ely on healthy 802.11 captures and never sees failure-category labels. The near-p erfect separation reects the rigidity of the 802.11 state machine: protocol failures (e .g., a Deauthentication where EAPOL Message 3 was expected, 0.0 0.2 0.4 0.6 0.8 1.0 F alse P ositive R ate 0.0 0.2 0.4 0.6 0.8 1.0 True P ositive R ate (a) ROC Curves (all AUC = 1.00) Bad P assword EAPOL Timeout Invalid PMKID Unable Handle ST A Rejected T emp. 0.94 0.95 0.96 0.97 0.98 0.99 1.00 Mean P er- T oken Probability Bad P assword EAPOL Timeout Invalid PMKID Unable Handle ST A Rejected T emp. 0.9772 0.9658 0.9658 0.9743 0.9722 (b) Probability Gap Healthy (0.9973) Figure 10: Zero-shot anomaly detection. (a) ROC cur ves: AUROC ≥ 0.99 for all categories. (b) Mean p er-token probability vs. healthy baseline (dashed). Healthy Bad P assword EAPOL Timeout Invalid PMKID Unable Handle ST A Rejected T emp. 0.90 0.92 0.94 0.96 0.98 1.00 Mean T oken Probability P er-PCAP Likelihood: Healthy vs. F ailure Categories Figure 11: Per-token probability: healthy (green) vs. failure (red). Failure captures consistently score lower . or a missing A CK after Association Response) ar e syntac- tically anomalous relative to the well-dened handshake grammar the model learns during pretraining. Deviations from rigid protocol syntax produce low per-token probabil- ities by construction, analogous to how language models trained on valid source code trivially ag syntax errors. Figure 10 shows the per-category ROC cur ves and prob- ability gap; Figure 11 plots the full per-token probability distribution conrming the clear separation. 4.12 Root Cause Classication Anomaly detection ags that something is wrong; r oot cause classication identies what . Plume ’s unsup ervised features carry discriminative signal for root cause classication. W e extract a 19-dimensional feature vector per PCAP (mean probability , standard deviation, median, log-probability , and rank statistics) and train lightweight classiers to discrimi- nate the ve failure categories. A Random Forest achieves 73.2% ve-class accuracy , 3 . 7 × the 20% random baseline, with- out any task-specic training (T able 12). Figure 12 shows the confusion matrix for Logistic Regres- sion. Rejected T emp orarily and Unable to Handle New ST A are classied most reliably; Invalid PMKID is most often con- fused with Bad Password and EAPOL Timeout, consistent with overlapping EAPOL-layer protocol ows. The confusion patterns align with protocol structure: categories sharing 10 T able 12: Root cause classication: three classiers on 19-D per-PCAP features ( 𝑛 = 250 , 5 categories × 50). Classier Accuracy Macro F1 Best Category Logistic Regression 0.68 0.68 Rejected T emp. (0.83) Random Forest 0.73 0.73 Rejected T emp. (0.85) kNN 0.62 0.61 Rejected T emp. (0.78) Bad P assword EAPOL Timeout Invalid PMKID Unable Handle ST A Rejected T emp. Predicted Bad P assword EAPOL Timeout Invalid PMKID Unable Handle ST A Rejected T emp. True 34 7 2 6 1 1 41 3 3 2 7 8 29 5 1 4 3 4 38 1 0 1 2 6 41 RCA Confusion Matrix (R andomF orest, F1=0.731) 0 5 10 15 20 25 30 35 40 Figure 12: Logistic Regression confusion matrix ( 𝑛 = 250 ). Most reliable: Rejected T emporarily , Unable to Handle New ST A. Most confused: Invalid PMKID. EAPOL-layer ows (EAPOL Timeout, Invalid PMKID) are confused with each other , while Rejected T emp orarily and Unable to Handle New ST A occupy more distinct features. Practical utility . At 73.2% ve-class accuracy , the clas- sier serves as a triage tool rather than a denitive diagno- sis. Rejected T emporarily (F1 = 0.85) and Unable to Handle New ST A are reliably separated and can trigger targeted re- mediation (T able 12); Invalid PMKID and EAPOL Timeout, which share EAPOL-layer ows, are frequently confused and require human follow-up. Even for confused categories, narrowing to two or thr ee reduces the time to investigate. Figure 13 pro vides a complementary view via t-SNE pro- jection of the 19-dimensional feature vectors. The ve cate- gories form visually separable clusters, with Bad Passwor d and Unable to Handle New ST A occupying distinct regions. EAPOL Timeout, Invalid PMKID, and Rejected T emp orarily show partial ov erlap, consistent with shared EAPOL-layer ows and the confusion patterns in Figure 12. t-SNE 1 t-SNE 2 PL UME F eature Embeddings by F ailure Category Bad P assword EAPOL Timeout Invalid PMKID Unable Handle ST A Rejected T emp. Figure 13: t-SNE of 19-D per-PCAP features colored by failure category . EAPOL-related categories partially overlap, reecting shared protocol ows. 5 Use Cases and System Integration Plume is designed not as a standalone classier but as a callable tool within larger diagnostic workows. T wo con- crete use cases lev erage its combination of generation, likeli- hood scoring, and protocol-native representations. 5.1 Multi- A gent RCA In a multi-agent RCA architecture, a planner agent (e.g., an LLM orchestrator) r eceives a user complaint and coordi- nates specialized to ols. Plume ser ves as the packet analysis tool: (1) the planner retrieves the relevant PCAP slice and passes it to Plume in tokenized PDML form; (2) Plume pro- cesses the sequence auto-regressively , agging tokens with anomalously low likeliho od; (3) it generates the expected next packet, and divergence between expected and actual lo- calizes the failure point; (4) the planner r eceives a structured summary , e.g., “EAPOL Message 3 expected after Message 2 (seq=4), but AP sent Deauthentication (reason=2). Likely cause: PMKID mismatch or PMF policy conict. ” (1) The planner retrieves the relevant PCAP slice (e.g., the 30-second window around the failure ev ent) and passes it to Plume in tokenized PDML form. (2) Plume processes the sequence auto-regressively , pro- ducing per-token log-likelihoo ds. T okens with anoma- lously low likelihood ag unexpecte d eld values or missing protocol steps. (3) Plume generates the expected next packet given the conversation so far . Divergence between expected and actual packets localizes the failure point. (4) The planner receives a structured summary: “EAPOL Message 3 expected after Message 2 (seq=4), but AP 11 sent Deauthentication (reason=2, ‘previously authen- ticated ST A leaving’). Likely cause: PMKID mismatch or PMF policy conict. ” Because Plume exchanges explanations rather than raw packets, the planner never sees sensitive payload data, only protocol-level summaries. Our evaluation validates this pipeline’s foundation. A Random Forest on 19-dimensional likelihood features achieves 73.2% ve-class root cause accuracy , 3 . 7 × the random baseline, with per-categor y F1 reaching 0.85 for Rejected T emp orarily (§4.12). The confusion matrix (§4.12) and t-SNE pr ojection (Figure 13) conrm that the ve failure categories are distinguishable from repr esentations alone. 5.2 Proactive Anomaly Dete ction Plume ’s auto-regressive likeliho od provides a zero-shot anom- aly dete ctor without requiring labele d anomaly data. For each packet, we compute the average per-token log-likelihoo d; packets deviating signicantly from the model’s expecta- tions are agged as anomalous. Our evaluation (§4.11) con- rms this capability . Plume achieves AUROC ≥ 0.99 for all ve failure categories without any labeled anomaly training data (T able 11). The mean p er-token probability gap between healthy and failure captures is sucient for reliable zero-shot detection acr oss all failure modes. The p er-protocol-layer accuracy (Figure 9) conrms that anomalies in WLAN-layer are detected. 6 Related W ork W e organize related w ork into seven categories and position Plume with respect to each. Foundation models for network trac. Lens [ 17 ] pre- trains a transformer via knowledge-guided masked span prediction [ 31 ] for trac classication and generation. net- Found [ 10 ] pre-trains on unlabeled packet traces with self- supervise d multi-modal embeddings. DBF-PSR [ 7 ] employs dual-branch fusion with protocol semantic repr esentations for trac classication. These works operate on ow- or header-level features with standar d tokenization (BPE [ 32 ] or xed byte chunks) and do not address 802.11-specic data quality challenges, namely b eacon dominance, reac- tive capture bias, and missing pre-failure context. Plume complements them by centering on PDML disse ctions, eld- boundary tokenization, and curate d training data. T okenization for structured networking data. net- Found [ 10 ] uses eld-le vel tokens without preserving hierar- chy or timing; NetGPT [ 22 ] applies BPE [ 32 ] to raw packet text, fragmenting eld boundaries. The Byte Latent T rans- former [ 26 ] shows that dynamic, content-aware patching can rival xed vocabularies at scale; Plume aligns patches to protocol eld boundaries, yielding 7.61 bits of per-token entropy vs. 4.75 for byte-lev el based system (T able 3). Adapting LLMs for networking tasks. NetLLM [ 40 ] adapts pretrained LLMs to networking tasks ( viewport pre- diction, adaptive bit-rate, cluster scheduling) by converting multi-modal data into token sequences with task-specic heads. TracLLM [ 5 ] proposes dual-stage ne-tuning with trac- domain tokenization, reporting high F1 scores across 229 trac types. Both adapt text-nativ e LLMs; Plume instead builds a network-native mo del with a protocol-structure tok- enizer , yielding 6 . 2 × shorter sequences at 140M parameters. Generative pretrained models for network trac. NetGPT [ 22 ] tokenizes multi-pattern trac into unied text via header-eld shuing, packet segmentation, and prompt la- bels. TracGPT [ 29 ] extends this with a linear-attention transformer . NetDiusion [ 12 ] takes a diusion-based ap- proach, generating protocol-constrained synthetic traces for data augmentation. All rely on BPE [ 32 ] or byte-level tok- enization, inheriting the mismatch we quantify in §4.3: se- quences 6 . 2 × longer than Plume ’s protocol-aware tokenizer (T able 3). Plume ’s eld-value tokenization yields shorter , higher-entropy sequences that let the model see more pack- ets per context window and learn denser cross-packet pat- terns. LLMs applied to PCAP analysis. LLMcap [ 33 ] applies masked language modeling to PCAP data, learning the gram- mar , context, and structure of successful captures for unsu- pervise d failure detection, and is the closest work to Plume in spirit. Howe ver , its BERT -style [ 6 ] objective cannot gen- erate next packets or produce per-token likelihoods natively . Howev er , LLMcap operates without protocol layer hierar chy and does not address data curation challenges of 802.11 cap- tures. Plume ’s auto-regressiv e objective enables generation, anomaly scoring, and classication from a single mo del while preserving layer structure ( e.g., 802.11 L2 A CK from a TCP L4 A CK). Trac classication with pretrained transformers. ET -BERT [ 18 ] pre-trains deep contextualized datagram-level representations for encrypted trac classication. Y aTC [ 42 ] uses a masked auto-encoder with multi-level ow represen- tation for few-shot classication. NetMamba [ 35 ] replaces the transformer with a unidir ectional Mamba [ 8 ] state-space model for linear-time classication. All target encrypted trac at the ow/datagram level; Plume targets 802.11 man- agement and control frames using protocol structure . Data quality for pretraining. Compute-optimal scal- ing [ 11 ] shows that data quantity and model size should be balanced. Deduplication [ 14 ] curbs memorization and improves generalization. Rene dW eb [ 28 ] shows that rig- orous web-data ltering and de duplication alone can out- perform curated corpora. Plume applies these principles to packet traces: HDBSCAN [ 20 ] clustering identies redundant frames, and MMR [ 2 ] preserves diversity while eliminating repetition, reducing beacon fraction to 4.7% (T able 4). 12 7 Conclusion and Future W ork W e presented Plume , a compact 140M-parameter founda- tion model for 802.11 wireless traces built on three inter- locking ideas: a protocol-aware tokenizer that yields 6 . 2 × shorter sequences than BPE, curated training data that sup- presses beacon dominance while preserving rare events, and a decoder-only auto-regressiv e objective that unies genera- tion, anomaly detection, and classication in a single model. Across v e real-world failure categories, Plume achieves 74–97% next-packet token accuracy and AUROC ≥ 0.99 for zero-shot anomaly dete ction. Head-to-head, it matches or exceeds Claude Opus 4.6 [ 1 ] and GPT -5.4 [ 25 ] on the same prediction task (T able 8) with > 600 × fewer parameters and eectively zero marginal cost on a single GP U . Limitations and future work. Plume currently targets 802.11 management and control frames; extending to data- plane protocols is a natural next step . Root cause classica- tion from unsupervised features would benet from larger evaluation sets and task-specic feature engineering. The anomaly detector uses raw per-token probabilities without calibration [ 9 ], and we do not yet characterize condently wrong predictions, both important for safe automated RCA. All data come from a single enterprise deployment; multi-site evaluation and cross-domain benchmarking remain open. Plume demonstrates that repr esentation matters : a small model with the right tokenizer and training data can match or exceed much larger general-purpose models. W e expect this principle to hold broadly as foundation models expand into new structured-data domains. References [1] Anthropic. 2025. Claude Opus 4.6 Mo del Card. https://do cs.anthropic. com/en/docs/about- claude/mo dels. Accessed: 2026-03-09. [2] Jaime Carbonell and Jade Goldstein. 1998. The Use of MMR, Diversity- Based Reranking for Reordering Documents and Producing Summaries. In Proceedings of the 21st A nnual International A CM SIGIR Conference on Research and Development in Information Retrieval . ACM, 335–336. doi:10.1145/290941.291025 [3] Aakanksha Chowdhery , Sharan Narang, Jacob Devlin, Maarten Bosma, Gaurav Mishra, Adam Roberts, Paul Barham, Hyung W on Chung, Charles Sutton, Sebastian Gehrmann, et al . 2022. PaLM: Scaling Lan- guage Mo deling with Pathways. arXiv:2204.02311 (2022). https: //arxiv .org/abs/2204.02311 [4] Kevin Clark, Minh-Thang Luong, Quoc V . Le, and Christopher D. Manning. 2020. ELECTRA: Pre-training T ext Enco ders as Discrim- inators Rather Than Generators. In ICLR . arXiv:2003.10555 https: //arxiv .org/abs/2003.10555 [5] Tianyu Cui, Xinjie Lin, Sijia Li, Miao Chen, Qilei Yin, Qi Li, and Ke Xu. 2025. TracLLM: Enhancing Large Language Models for Network Trac Analysis with Generic Trac Representation. arXiv:2504.04222 [cs.LG] https://arxiv .org/abs/2504.04222 [6] Jacob Devlin, Ming- W ei Chang, Kenton Lee, and Kristina T outanova. 2019. BERT: Pre-training of Deep Bidirectional T ransformers for Language Understanding. In NAACL-HLT . arXiv:1810.04805 https: //arxiv .org/abs/1810.04805 [7] Y aojun Ding and W ei Chen. 2025. DBF-PSR: A Dual-Branch Fusion Approach to Network Trac Classication Using Protocol Seman- tic Representation. Journal of King Saud University – Computer and Information Sciences 37, 211 (2025). doi:10.1007/s44443- 025- 00233- w [8] Albert Gu and Tri Dao. 2023. Mamba: Linear- Time Se quence Modeling with Sele ctive State Spaces. arXiv:2312.00752 [cs.LG] https://ar xiv . org/abs/2312.00752 [9] Chuan Guo, Ge o P leiss, Yu Sun, and Kilian Q. W einberger . 2017. On Calibration of Modern Neural Networks. In Pr oceedings of the 34th International Conference on Machine Learning (ICML) . 1321–1330. https://arxiv .org/abs/1706.04599 [10] Satyandra Guthula, Roman Beltiukov , Navya Battula, W enbo Guo, Arpit Gupta, and Inder Monga. 2023. netFound: Foundation Model for Network Security . arXiv:2310.17025 [cs.NI] https://arxiv .org/abs/2310. 17025 [11] Jordan Homann, Sebastian Borgeaud, Arthur Mensch, Elena Buchatskaya, Trev or Cai, Eliza Rutherford, Diego de Las Casas, Lisa Anne Hendricks, Johannes W elbl, et al . 2022. Train- ing Compute-Optimal Large Language Mo dels. In NeurIPS . https://proceedings.neurips.cc/paper_les/paper/2022/le/ c1e2fa6f588870935f114ebe04a3e5- Paper- Conference.pdf [12] Xi Jiang, Shinan Liu, Aaron Gember-Jacobson, Arjun Nitin Bhagoji, Paul Schmitt, Francesco Bronzino , and Nick Feamster . 2024. NetDif- fusion: Network Data A ugmentation Through Pr otocol-Constrained Trac Generation. In Proc. ACM Meas. Anal. Comput. Syst. , V ol. 8. doi:10.1145/3639037 [13] Jared Kaplan, Sam McCandlish, T om Henighan, T om B. Brown, Ben- jamin Chess, Rewon Child, Scott Gray , Alec Radford, Jerey W u, and Dario Amodei. 2020. Scaling Laws for Neural Language Mod- els. arXiv:2001.08361 [cs.LG] https://ar xiv .org/abs/2001.08361 [14] Katherine Lee, Daphne Ippolito, Andrew Nystrom, Chiyuan Zhang, Douglas Eck, Chris Callison-Burch, and Nicholas Carlini. 2022. Dedu- plicating Training Data Makes Language Mo dels Better . In ACL . https://aclanthology .org/2022.acl- long.577/ [15] Patrick Lewis, Ethan Perez, Aleksandra Piktus, Fabio Petroni, Vladimir Karpukhin, Naman Goyal, Heinrich Küttler , Mike Lewis, W en-tau Yih, Tim Rocktäschel, Sebastian Riedel, and Douwe Kiela. 2020. Retrieval- A ugmented Generation for Knowledge-Intensive NLP Tasks. arXiv:2005.11401 https://ar xiv .org/abs/2005.11401 [16] Xianming Li and Jing Li. 2023. AnglE-optimized T ext Embe ddings. arXiv:2309.12871 [cs.CL] https://arxiv .org/abs/2309.12871 [17] Xiaochang Li, Chen Qian, Qineng Wang, Jiangtao Kong, Y uchen W ang, Ziyu Y ao, Bo Ji, Long Cheng, Gang Zhou, and Huajie Shao. 2024. Lens: A Knowledge-Guided Foundation Model for Network Trac. arXiv:2402.03646 [cs.LG] https://arxiv .org/abs/2402.03646 [18] Xinjie Lin, Gang Xiong, Gaop eng Gou, Zhen Li, Junzheng Shi, and Jing Y u. 2022. ET -BERT: A Contextualized Datagram Representation with Pre-training Transformers for Encrypted Trac Classication. In Proceedings of the ACM W eb Conference (W WW) . https://arxiv .org/abs/2202.06335 [19] Ilya Loshchilov and Frank Hutter . 2019. Decoupled W eight Decay Regularization. In International Conference on Learning Representations (ICLR) . arXiv:1711.05101 https://arxiv .org/abs/1711.05101 [20] Leland McInnes, John Healy , and Steve Astels. 2017. hdbscan: Hierar- chical density based clustering. Journal of Open Source Software 2, 11 (2017), 205. doi:10.21105/joss.00205 [21] Leland McInnes, John Healy , and James Melville. 2018. UMAP: Uniform Manifold Approximation and Projection for Dimension Reduction. arXiv preprint arXiv:1802.03426 (2018). arXiv:1802.03426 https://arxiv . org/abs/1802.03426 [22] Xuying Meng, Chungang Lin, Y equan W ang, and Yujun Zhang. 2023. NetGPT: Generative Pretrained Transformer for Network Trac. 13 arXiv:2304.09513 [cs.NI] https://arxiv .org/abs/2304.09513 [23] OpenAI. 2023. GPT -4 T echnical Report. arXiv:2303.08774 [cs.CL] https://arxiv .org/abs/2303.08774 [24] OpenAI. 2025. GPT -5.2 Mo del Card. https://platform.openai.com/docs/ models/gpt- 5.2. Accessed: 2026-02-13. Pricing: $1.75/1M input tokens, $14.00/1M output tokens. [25] OpenAI. 2025. GPT -5.4 Mo del Card. https://platform.openai.com/docs/ models/gpt- 5.4. Accessed: 2026-03-09. [26] Artidoro Pagnoni, Ram Pasunuru, Pe dro Rodriguez, John Nguyen, Ben- jamin Muller , Margaret Li, Chunting Zhou, Lili Yu, Jason W eston, Luke Zettlemoyer , Gargi Ghosh, Mike Lewis, Ari Holtzman, and Srinivasan Iyer . 2024. Byte Latent Transformer: Patches Scale Better Than T okens. arXiv:2412.09871 [cs.LG] https://arxiv .org/abs/2412.09871 [27] Adam Paszke, Sam Gross, Francisco Massa, Adam Lerer , James Bradbury , Gregor y Chanan, T revor Killeen, Zeming Lin, Na- talia Gimelshein, Luca Antiga, et al . 2019. Py T orch: An Imperative Style, High-Performance Deep Learning Librar y . In NeurIPS . https://proceedings.neurips.cc/pap er/2019/hash/ bdbca288fee7f92f2bfa9f7012727740- Abstract.html [28] Guilherme Penedo, Quentin Malartic, Daniel Hesslow , Ruxandra Cojo- caru, Alessandro Cappelli, Hamza Alobeidli, Baptiste Pannier , Ebtesam Almazrouei, and Julien Launay . 2023. The RenedW eb Dataset for Falcon LLM: Outperforming Curated Corpora with W eb Data, and W eb Data Only . arXiv:2306.01116 https://arxiv .org/abs/2306.01116 [29] Jian Qu, Xiaobo Ma, and Jianfeng Li. 2024. TracGPT: Breaking the T oken Barrier for Ecient Long Trac Analysis and Generation. arXiv:2403.05822 [cs.LG] https://arxiv .org/abs/2403.05822 [30] Alec Radford, Jerey W u, Rew on Child, David Luan, Dario Amodei, and Ilya Sutskever . 2019. Language Models are Unsupervised Multitask Learners. https://cdn.openai.com/b etter- language- models/language_ models_are_unsupervised_multitask_learners.p df [31] Colin Rael, Noam Shazeer , Adam Roberts, Katherine Le e, Sharan Narang, Michael Matena, Y anqi Zhou, W ei Li, and Peter J. Liu. 2020. Exploring the Limits of Transfer Learning with a Unied T ext-to- T ext Transformer . Journal of Machine Learning Research 21, 140 (2020), 1–67. https://jmlr .org/pap ers/v21/20- 074.html [32] Rico Sennrich, Barr y Haddow , and Alexandra Birch. 2016. Neural Machine Translation of Rare W ords with Subwor d Units. In Proceed- ings of the 54th A nnual Meeting of the Association for Computational Linguistics (ACL) . 1715–1725. doi:10.18653/v1/P16- 1162 [33] Łukasz T ulczyjew , Kinan Jarrah, Charles Abondo, Dina Bennett, and Nathanael W eill. 2024. LLMcap: Large Language Model for Unsu- pervised PCAP Failure Dete ction. arXiv:2407.06085 [cs.AI] https: //arxiv .org/abs/2407.06085 [34] Ashish V aswani, Noam Shazeer , Niki Parmar , Jakob Uszkoreit, Llion Jones, Aidan N. Gomez, Łukasz Kaiser , and Illia Polosukhin. 2017. Attention Is All Y ou Need. arXiv:1706.03762 [cs.CL] https://arxiv .org/ abs/1706.03762 [35] T ongze W ang, Xiaohui Xie, W enduo W ang, Chuyi W ang, Y oujian Zhao, and Y ong Cui. 2024. NetMamba: Ecient Network Trac Classica- tion via Pre-training Unidirectional Mamba. arXiv:2405.11449 [cs.LG] https://arxiv .org/abs/2405.11449 [36] Wireshark Foundation. 2024. PDML – Packet Description Markup Language. https://wiki.wireshark.org/PDML Accessed 2025-08-11. [37] Wireshark Foundation. 2025. tshark(1) Manual Page. https://w ww . wireshark.org/docs/man- pages/tshark.html Accessed 2025-08-11. [38] Wireshark Foundation. 2025. Wireshark User’s Guide: Export Packet Dissections ( JSON, etc.). https://ww w .wireshark.org/docs/wsug_ html_chunked/ChIOExportSection.html A ccessed 2025-08-11. [39] Wireshark Project. 2025. README.xml-output (PDML de- tails). https://github.com/wireshark/wireshark/blob/master/doc/ README.xml- output Accessed 2025-08-11. [40] Duo Wu, Xianda W ang, Y aqi Qiao, Zhi W ang, Junchen Jiang, Shuguang Cui, and Fangxin W ang. 2024. NetLLM: Adapting Large Language Models for Networking. In Proce edings of A CM SIGCOMM . doi:10.1145/ 3651890.3672268 [41] Sang Michael Xie, Hieu Pham, Xuanyi Dong, Nan Du, Hanxiao Liu, Yifeng Lu, Percy Liang, Quoc V . Le, T engyu Ma, and Adams W ei Y u. 2023. DoReMi: Optimizing Data Mixtures Spe eds Up Language Model Pretraining. In NeurIPS . arXiv:2305.10429 10429 [42] Ruijie Zhao, Mingwei Zhan, Xianwen Deng, Y anhao W ang, Yijun W ang, Guan Gui, and Zhi Xue. 2023. Y et Another Trac Classier: A Masked Autoencoder Based T rac Transformer with Multi-Lev el Flow Representation. In Proceedings of the AAAI Confer ence on A rticial Intelligence , V ol. 37. 5420–5427. doi:10.1609/aaai.v37i4.25674 14

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment