Ghosts of Softmax: Complex Singularities That Limit Safe Step Sizes in Cross-Entropy

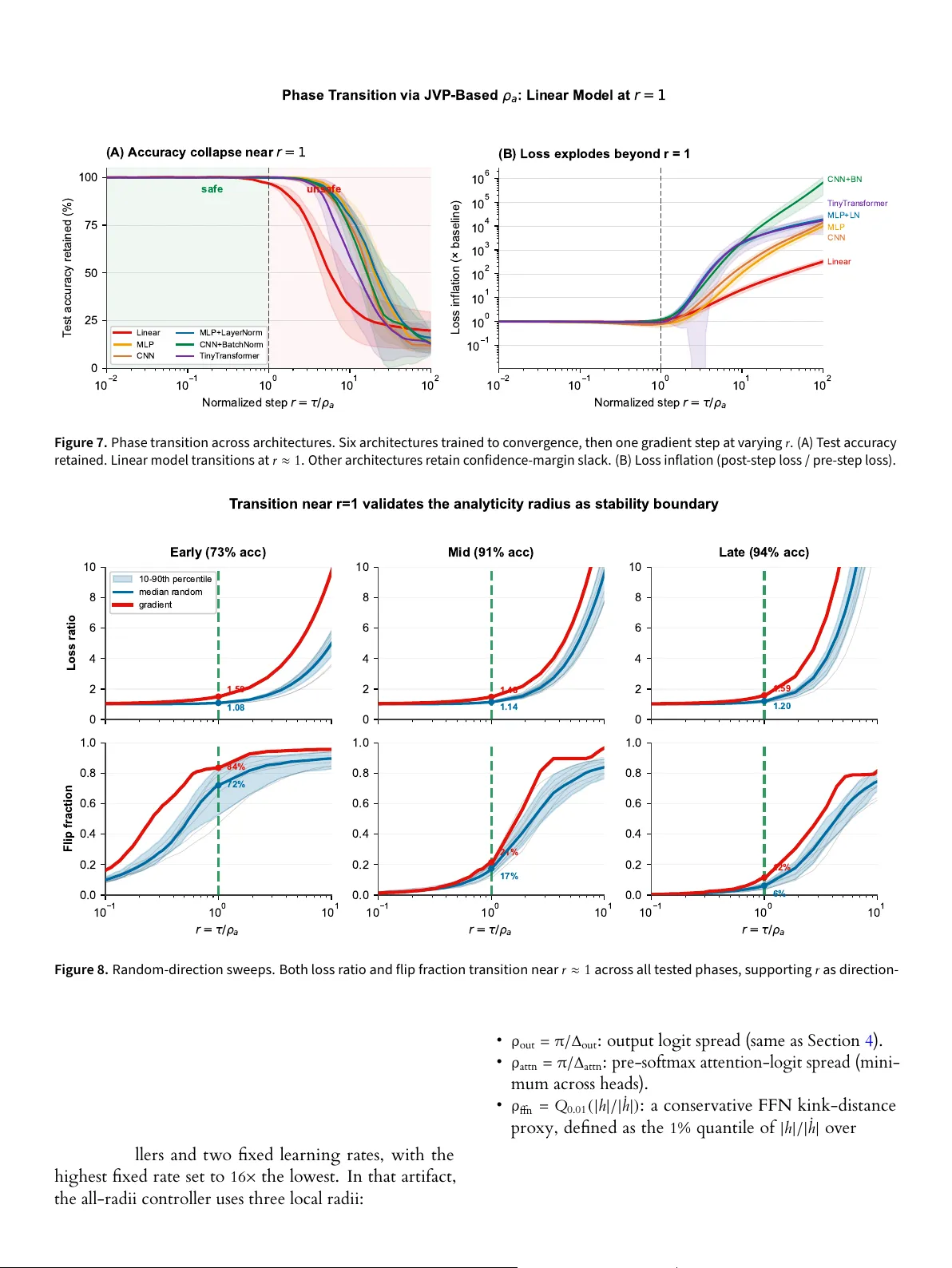

Optimization analyses for cross-entropy training rely on local Taylor models of the loss to predict whether a proposed step will decrease the objective. These surrogates are reliable only inside the Taylor convergence radius of the true loss along th…

Authors: Piyush Sao