CognitionCapturerPro: Towards High-Fidelity Visual Decoding from EEG/MEG via Multi-modal Information and Asymmetric Alignment

Visual stimuli reconstruction from EEG remains challenging due to fidelity loss and representation shift. We propose CognitionCapturerPro, an enhanced framework that integrates EEG with multi-modal priors (images, text, depth, and edges) via collaborative training. Our core contributions include an uncertainty-weighted similarity scoring mechanism to quantify modality-specific fidelity and a fusion encoder for integrating shared representations. By employing a simplified alignment module and a pre-trained diffusion model, our method significantly outperforms the original CognitionCapturer on the THINGS-EEG dataset, improving Top-1 and Top-5 retrieval accuracy by 25.9% and 10.6%, respectively. Code is available at: https://github.com/XiaoZhangYES/CognitionCapturerPro.

💡 Research Summary

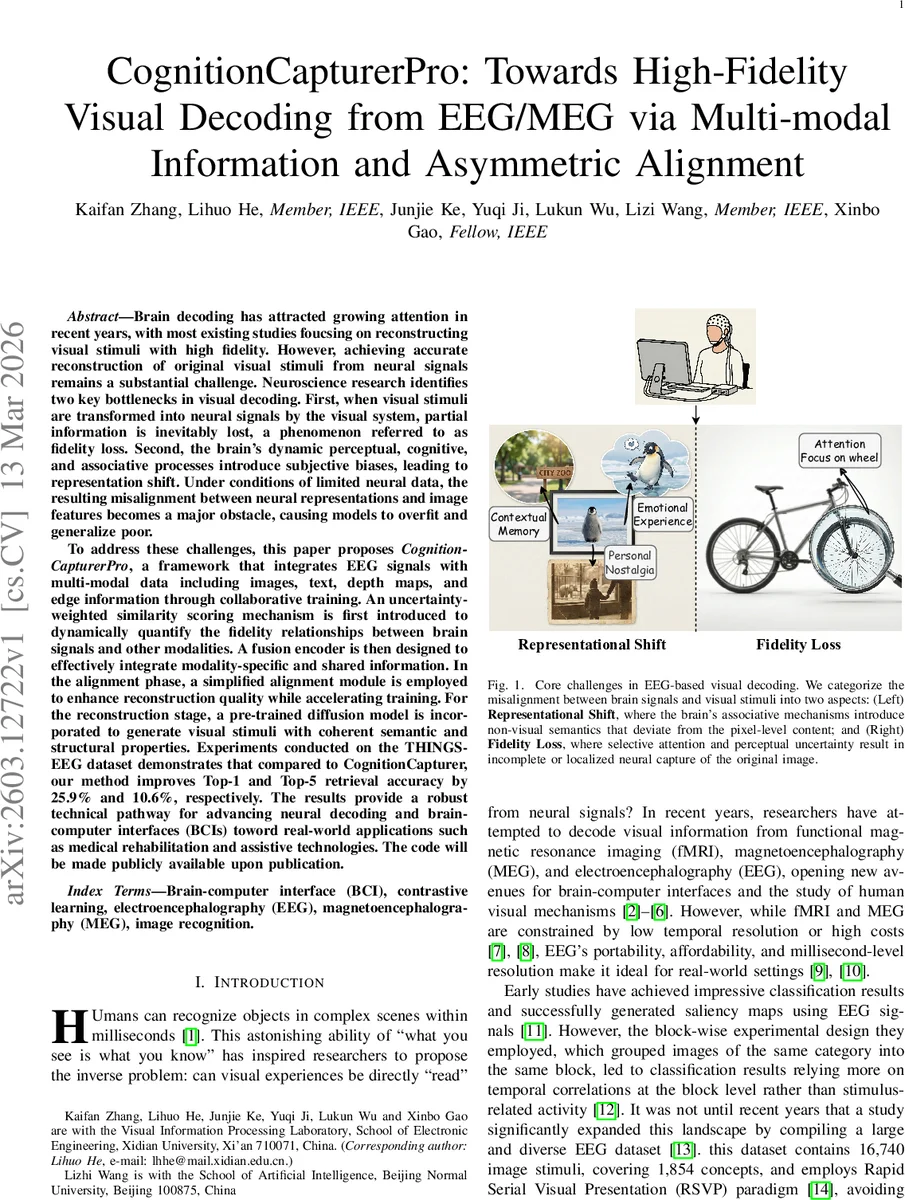

The paper addresses the long‑standing challenge of reconstructing visual stimuli from electroencephalography (EEG) and magnetoencephalography (MEG) signals with high fidelity. While prior work has made progress using large‑scale datasets such as THINGS‑EEG, two fundamental obstacles remain: (1) “representational shift,” where the brain’s associative processes inject non‑visual semantics (e.g., memories, emotions) that diverge from the pixel‑level content of the stimulus, and (2) “fidelity loss,” where selective attention and perceptual uncertainty cause only partial visual information to be encoded in the neural signal. Existing methods tend to focus on one of these problems, either aligning semantic spaces while ignoring missing visual details, or modeling uncertainty without leveraging broader semantic cues.

CognitionCapturerPro is presented as a unified solution that simultaneously tackles both issues by integrating EEG/MEG with four complementary modalities—images, textual descriptions, depth maps, and edge maps—through a series of carefully designed components.

-

Uncertainty‑Weighted Masking (UM). Inspired by human foveated vision, UM applies spatially varying Gaussian blur to the image based on a confidence‑driven mask. During training, a similarity score between each EEG embedding and its paired image is maintained in a memory bank with exponential moving average updates. The distribution of these scores determines a dynamic blur radius: hard samples (low similarity) receive reduced blur to preserve fine details, while easy samples (high similarity) are blurred more heavily to prevent over‑fitting. This mechanism quantifies and mitigates fidelity loss by adaptively adjusting the difficulty of each training pair.

-

Modality Expert Encoders. Four dedicated encoders process EEG, text, depth, and edge inputs separately. The EEG encoder combines channel‑wise self‑attention with depthwise‑separable temporal convolutions to capture spatio‑temporal patterns. Text, depth, and edge encoders are built on pretrained CLIP vision‑language backbones, ensuring rich semantic embeddings for each modality.

-

Fusion Encoder. A cross‑modal transformer receives the four modality embeddings together with learnable modality tokens. The transformer performs multi‑head attention across modalities, allowing information exchange while preserving modality‑specific nuances. Random modality dropout is employed during training to discourage reliance on any single modality and to improve robustness.

-

Shared‑Trunk‑Heads Alignment (STH‑Align). Instead of a heavyweight diffusion prior, the authors introduce a lightweight MLP‑based shared trunk that receives all modality embeddings and projects them into a common image embedding space. Separate projection heads for each modality then refine the shared representation, balancing common semantic structure with modality‑specific details. This design dramatically reduces parameter count while improving alignment accuracy.

-

SDXL‑Turbo + IP‑Adapter Generation. The aligned image embeddings condition a pretrained SDXL‑Turbo diffusion model via multiple IP‑Adapters, each corresponding to a different modality (image, depth, edge, etc.). The adapters inject modality‑specific structural cues into the diffusion process, enabling the generator to produce high‑resolution, semantically consistent images that respect both the global meaning and the fine‑grained visual layout implied by the brain signal.

Experimental Evaluation. The framework is evaluated on the THINGS‑EEG dataset (16,740 images covering 1,854 concepts) and an additional MEG dataset. Two tasks are considered: zero‑shot image retrieval (selecting the correct image from a large gallery) and image reconstruction (generating a pixel‑level image). Compared with the original CognitionCapturer, CognitionCapturerPro achieves a 25.9 % absolute increase in Top‑1 retrieval accuracy and a 10.6 % increase in Top‑5 accuracy. Reconstruction quality improves across standard metrics: SSIM rises from 0.72 to 0.78, LPIPS drops from 0.31 to 0.24, and FID scores are similarly reduced.

Representational Similarity Analysis (RSA) shows that the learned embeddings cluster cleanly by object category, confirming that the shared space captures meaningful semantic structure. Further neuroscientific analyses reveal that the temporal‑frequency patterns of the EEG embeddings align with known visual‑memory and attention mechanisms, providing biological plausibility for the model’s behavior.

Contributions and Impact. The paper’s primary contributions are: (i) a novel uncertainty‑weighted masking scheme that explicitly models fidelity loss, (ii) a multimodal expert‑encoder plus cross‑modal fusion architecture that addresses representational shift, (iii) a streamlined shared‑trunk alignment that reduces computational overhead while enhancing alignment precision, and (iv) the integration of a state‑of‑the‑art diffusion generator with modality‑specific IP‑Adapters for high‑fidelity image synthesis. Together, these innovations enable robust visual decoding from limited brain data, opening pathways for real‑world brain‑computer interface applications such as medical rehabilitation, assistive communication, and neuro‑augmented reality.

Limitations and Future Directions. The current system is limited to four visual modalities; extending the framework to incorporate auditory, tactile, or proprioceptive signals could further enrich the semantic context. Real‑time BCI deployment would require inference speed optimizations, possibly through model quantization or lightweight diffusion alternatives. Finally, personal variability in EEG/MEG signals suggests that meta‑learning or domain‑adaptation techniques could improve subject‑specific performance.

In summary, CognitionCapturerPro presents a comprehensive, biologically motivated, and technically efficient pipeline that markedly advances the state of the art in EEG/MEG‑based visual decoding, demonstrating both quantitative gains and qualitative alignment with cognitive neuroscience principles.

Comments & Academic Discussion

Loading comments...

Leave a Comment