LR-SGS: Robust LiDAR-Reflectance-Guided Salient Gaussian Splatting for Self-Driving Scene Reconstruction

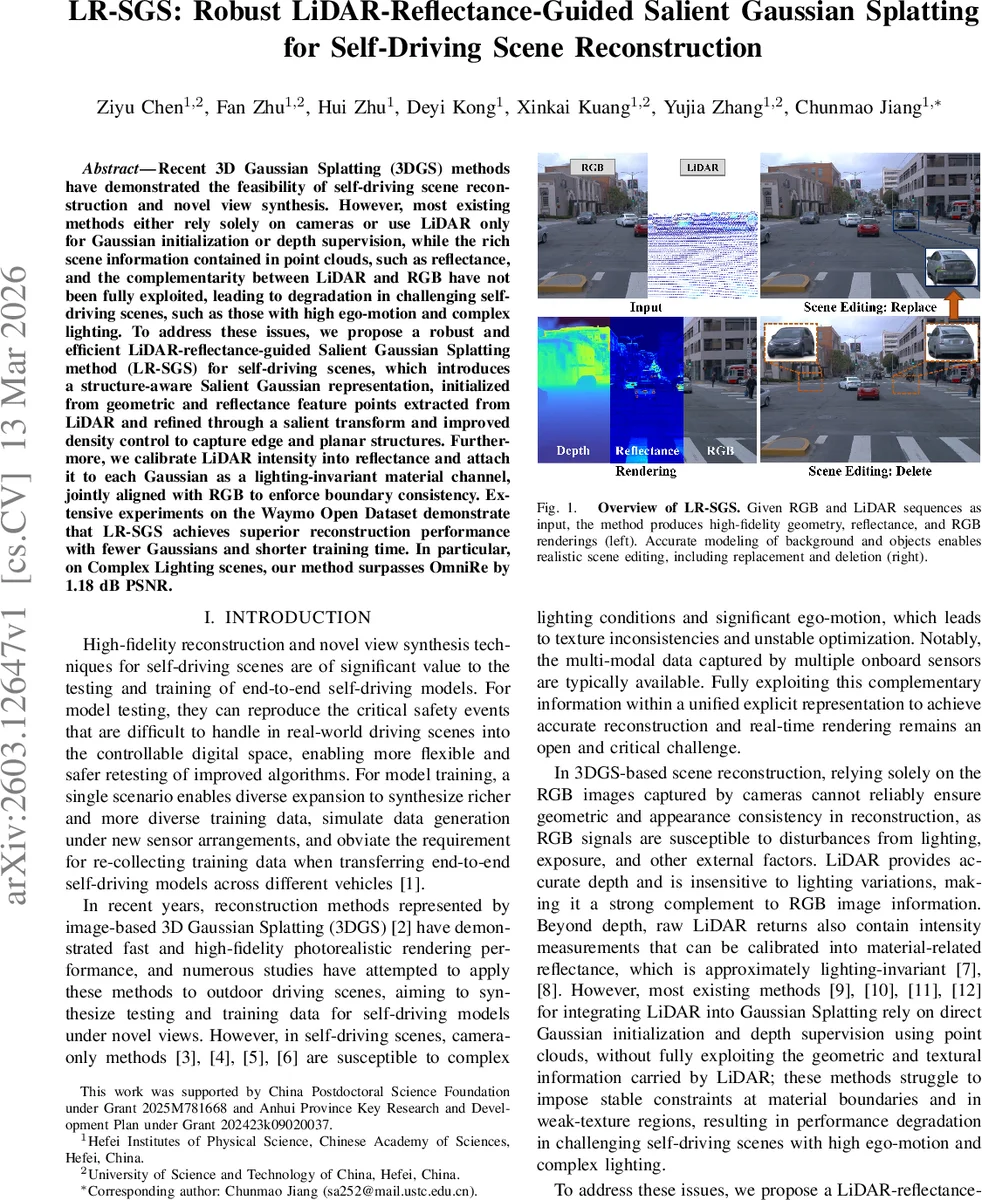

Recent 3D Gaussian Splatting (3DGS) methods have demonstrated the feasibility of self-driving scene reconstruction and novel view synthesis. However, most existing methods either rely solely on cameras or use LiDAR only for Gaussian initialization or depth supervision, while the rich scene information contained in point clouds, such as reflectance, and the complementarity between LiDAR and RGB have not been fully exploited, leading to degradation in challenging self-driving scenes, such as those with high ego-motion and complex lighting. To address these issues, we propose a robust and efficient LiDAR-reflectance-guided Salient Gaussian Splatting method (LR-SGS) for self-driving scenes, which introduces a structure-aware Salient Gaussian representation, initialized from geometric and reflectance feature points extracted from LiDAR and refined through a salient transform and improved density control to capture edge and planar structures. Furthermore, we calibrate LiDAR intensity into reflectance and attach it to each Gaussian as a lighting-invariant material channel, jointly aligned with RGB to enforce boundary consistency. Extensive experiments on the Waymo Open Dataset demonstrate that LR-SGS achieves superior reconstruction performance with fewer Gaussians and shorter training time. In particular, on Complex Lighting scenes, our method surpasses OmniRe by 1.18 dB PSNR.

💡 Research Summary

LR‑SGS (LiDAR‑Reflectance‑Guided Salient Gaussian Splatting) addresses two major shortcomings of existing 3D Gaussian Splatting (3DGS) approaches for autonomous‑driving scene reconstruction: (1) reliance on RGB images alone makes the pipeline vulnerable to illumination changes and rapid ego‑motion, and (2) current LiDAR integration strategies only use point clouds for initialization or depth supervision, ignoring the rich intensity (reflectance) information that can provide lighting‑invariant material cues.

The proposed method first calibrates raw LiDAR intensity to a reflectance value by correcting for distance and incident angle, then attaches this reflectance as an additional per‑Gaussian attribute channel. Because reflectance is largely independent of lighting, it serves as a robust material signal that can be jointly aligned with RGB gradients to enforce boundary consistency and reduce blur at material edges.
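The calibration step can be illustrated with a minimal sketch. The summary does not give the exact correction model, so the code below assumes a simple Lambertian model in which the received intensity falls off with the square of the range and with the cosine of the incidence angle. The function name `calibrate_reflectance` and the parameters `ref_range` and `min_cos` are hypothetical.

```python
import numpy as np

def calibrate_reflectance(intensity, ranges, cos_incidence, ref_range=10.0, min_cos=0.1):
    """Range- and angle-correct raw LiDAR intensity (assumed Lambertian model).

    Assumption: intensity ~ reflectance * cos(theta) / range^2, so the
    correction multiplies by (range / ref_range)^2 and divides by cos(theta).
    """
    intensity = np.asarray(intensity, dtype=np.float64)
    ranges = np.asarray(ranges, dtype=np.float64)
    # Clamp the cosine so grazing-angle returns do not blow up the estimate.
    cos_inc = np.clip(np.abs(np.asarray(cos_incidence, dtype=np.float64)), min_cos, 1.0)
    return intensity * (ranges / ref_range) ** 2 / cos_inc
```

Under this assumed model, a surface seen at twice the reference range returns a quarter of the intensity but maps to the same reflectance, which is the lighting- and geometry-independent behavior the paragraph describes.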

A novel “Salient Gaussian” representation is introduced to capture structural features more efficiently. Each Salient Gaussian has an anisotropic covariance matrix with a dominant direction (d_spec) and two equal non‑dominant scales, reducing the number of scale parameters to σ∥ (along the dominant axis) and σ⊥ (shared across the other two axes). This design enables the Gaussian to elongate along edges or flatten on planar surfaces while keeping parameter count low.
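One way to read this parameterization is as a covariance built from a unit direction and two scales. The sketch below is an assumption about the form, not the authors' code: it takes the dominant direction `d`, the scale along it (`sigma_par`, standing in for σ∥), and the shared scale across it (`sigma_perp`, standing in for σ⊥), and builds Σ = σ⊥² I + (σ∥² − σ⊥²) d dᵀ.

```python
import numpy as np

def salient_covariance(direction, sigma_par, sigma_perp):
    """Covariance with one dominant axis and two equal orthogonal scales.

    Sigma = sigma_perp^2 * I + (sigma_par^2 - sigma_perp^2) * d d^T,
    where d is the normalized dominant direction.
    """
    d = np.asarray(direction, dtype=np.float64)
    d = d / np.linalg.norm(d)
    return sigma_perp**2 * np.eye(3) + (sigma_par**2 - sigma_perp**2) * np.outer(d, d)

# Elongated along z: variance 4.0 along the axis, 0.25 across it.
edge_cov = salient_covariance([0.0, 0.0, 1.0], sigma_par=2.0, sigma_perp=0.5)
```

In this form, choosing `sigma_par` larger than `sigma_perp` gives a needle-like Gaussian, and choosing it smaller gives a disc whose normal is `d`, so a single direction and two scalars cover both the edge and planar cases.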

Salient Gaussians are initialized not from raw LiDAR points but from a high‑confidence feature set derived from LiDAR geometry and reflectance. Geometric feature points are extracted via a smoothness metric, separating edge and planar points; reflectance edge points are identified using a gradient computed along LiDAR scan rings. Edge Salient Gaussians are seeded at geometric and reflectance edges, while Planar Salient Gaussians are seeded on planar regions. Non‑salient Gaussians are still created from SfM points to fill gaps where LiDAR coverage is sparse.
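The feature extraction can be sketched per scan ring. The summary does not define the smoothness metric, so the code below assumes a LOAM-style curvature (norm of the summed offsets to neighbors on the same ring, normalized by range) and a plain finite-difference gradient for reflectance. The function names and the `half_window` parameter are hypothetical.

```python
import numpy as np

def ring_smoothness(points, half_window=5):
    """LOAM-style smoothness for points ordered along one scan ring (assumed metric).

    Low values suggest planar points, high values suggest edge points.
    Points too close to the ring ends are left as NaN.
    """
    pts = np.asarray(points, dtype=np.float64)
    n = len(pts)
    c = np.full(n, np.nan)
    for i in range(half_window, n - half_window):
        nbrs = pts[i - half_window:i + half_window + 1]
        # Sum of (neighbor - point) offsets; the point itself contributes zero.
        diff = nbrs.sum(axis=0) - len(nbrs) * pts[i]
        c[i] = np.linalg.norm(diff) / (2 * half_window * np.linalg.norm(pts[i]))
    return c

def ring_reflectance_gradient(reflectance):
    """Absolute finite-difference gradient of reflectance along one ring."""
    return np.abs(np.gradient(np.asarray(reflectance, dtype=np.float64)))
```

Thresholding `ring_smoothness` from above and below would separate edge and planar candidates, and thresholding `ring_reflectance_gradient` would pick out reflectance edges such as lane markings on an otherwise flat road; the thresholds themselves are not stated in the summary.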

Density control is extended to respect the salient type of each Gaussian. During a split operation, Edge Salient Gaussians are divided along their dominant axis, whereas Planar Salient Gaussians split within the plane orthogonal to the dominant axis. Newly created Gaussians inherit the type of their parent. A “Salient Transform” mechanism monitors each Gaussian’s ordered scales (s1 ≥ s2 ≥ s3) and computes linearity L = (s1 − s2)/s1 and planarity P = (s2 − s3)/s1. If a Non‑Salient Gaussian exhibits high L or P for two consecutive density‑control steps, it is promoted to a Salient Gaussian: edge if L ≥ P, planar otherwise. Conversely, if both L and P of a Salient Gaussian fall below a lower threshold for two consecutive steps, meaning its scales have become similar, it is demoted to Non‑Salient. This dynamic conversion keeps the representation focused on structurally important regions and improves both accuracy and efficiency.
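The promotion and demotion rule can be sketched as a small state update run once per density-control step. The formulas for L and P follow the text; the threshold values `hi` and `lo`, the `patience` parameter, and the string labels are hypothetical, since the summary does not state them.

```python
import numpy as np

def linearity_planarity(scales):
    """Return (L, P) from three scales, sorted so that s1 >= s2 >= s3."""
    s1, s2, s3 = np.sort(np.asarray(scales, dtype=np.float64))[::-1]
    return (s1 - s2) / s1, (s2 - s3) / s1

def salient_transform(kind, streak, scales, hi=0.6, lo=0.2, patience=2):
    """One density-control step of the type update (thresholds are assumptions).

    kind is "non_salient", "edge", or "planar"; streak counts consecutive
    steps on which the promotion or demotion condition held.
    """
    L, P = linearity_planarity(scales)
    if kind == "non_salient":
        hit = max(L, P) >= hi          # strongly linear or strongly planar
    else:
        hit = L < lo and P < lo        # scales have become similar
    streak = streak + 1 if hit else 0
    if streak >= patience:
        if kind == "non_salient":
            kind = "edge" if L >= P else "planar"
        else:
            kind = "non_salient"
        streak = 0
    return kind, streak
```

Using separate upper and lower thresholds together with the two-step requirement gives hysteresis, so a Gaussian whose shape hovers near a single threshold does not flip type on every step.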

Rendering follows the standard 3DGS α‑blended volume rendering pipeline, producing per‑pixel color C, depth D, and reflectance F. The final color is composited with a sky node; depth and reflectance are taken from the Gaussian contributions alone, because LiDAR returns no measurements from the sky.
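For a single pixel, this compositing can be sketched as standard front-to-back α-blending. The function name `composite_pixel` and the constant `sky_color` are illustrative assumptions; the sky node in the paper may be a learned model rather than a fixed color.

```python
import numpy as np

def composite_pixel(alphas, colors, depths, reflectances, sky_color):
    """Front-to-back alpha blending of depth-sorted Gaussians for one pixel.

    Color is filled in with the sky where opacity is missing; depth and
    reflectance use the Gaussian contributions only.
    """
    alphas = np.asarray(alphas, dtype=np.float64)
    # Transmittance before each Gaussian: product of (1 - alpha) of those in front.
    transmittance = np.concatenate(([1.0], np.cumprod(1.0 - alphas)[:-1]))
    weights = transmittance * alphas
    opacity = weights.sum()
    color = weights @ np.asarray(colors, dtype=np.float64)
    color = color + (1.0 - opacity) * np.asarray(sky_color, dtype=np.float64)
    depth = weights @ np.asarray(depths, dtype=np.float64)
    refl = weights @ np.asarray(reflectances, dtype=np.float64)
    return color, depth, refl, opacity
```

The same blending weights drive all three channels, so supervising depth and reflectance with LiDAR also constrains the opacities and ordering that determine the rendered color.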

The optimization loss combines three terms:

- Color loss (L_rgb) – an L2 + SSIM term enforcing photometric consistency between rendered and ground‑truth RGB images.

- LiDAR loss (L_lidar) – a depth term and a reflectance term that constrain geometry and material using calibrated LiDAR data.

- Joint loss (L_joint) – a cross‑modal consistency term that aligns the gradient direction and magnitude of the reflectance map with those of a grayscale‑converted RGB image, sharpening material boundaries.
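The joint term can be illustrated with a rough sketch. The summary does not give its exact form, so the code below is an assumed variant: a direction term that penalizes non-parallel gradients where both maps have an edge, plus a magnitude term on max-normalized gradient magnitudes. The function names, the grayscale weights (the common BT.601 luma coefficients), and the equal weighting of the two terms are all assumptions.

```python
import numpy as np

def image_gradients(img):
    """Finite-difference gradients (d/dx, d/dy) of a 2D map."""
    gy, gx = np.gradient(np.asarray(img, dtype=np.float64))
    return gx, gy

def joint_gradient_loss(reflectance, rgb, eps=1e-8):
    """Assumed cross-modal loss aligning reflectance and grayscale gradients."""
    gray = np.asarray(rgb, dtype=np.float64) @ np.array([0.299, 0.587, 0.114])
    fx, fy = image_gradients(reflectance)
    gx, gy = image_gradients(gray)
    f_mag, g_mag = np.hypot(fx, fy), np.hypot(gx, gy)
    # Direction: 1 - |cos| of the angle between gradients, only where both exist.
    mask = (f_mag > eps) & (g_mag > eps)
    cos = (fx * gx + fy * gy) / (f_mag * g_mag + eps)
    direction = np.sum(mask * (1.0 - np.abs(cos))) / max(int(mask.sum()), 1)
    # Magnitude: compare edge strength after normalizing each map by its maximum.
    magnitude = np.mean(np.abs(f_mag / (f_mag.max() + eps) - g_mag / (g_mag.max() + eps)))
    return direction + magnitude
```

Taking the absolute value of the cosine makes the direction term insensitive to contrast polarity, which seems appropriate here because a material boundary can be dark-to-bright in RGB and bright-to-dark in reflectance while still being the same edge.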

Experiments on the Waymo Open Dataset cover three challenging categories—Dense Traffic, High‑Speed, and Complex Lighting—as well as static scenes. LR‑SGS consistently outperforms prior methods such as OmniRe and StreetGS while using roughly 30 % fewer Gaussians and reducing training time by about 25 %. In the Complex Lighting benchmark, LR‑SGS achieves a 1.18 dB PSNR gain over OmniRe and a 0.012 SSIM improvement. Qualitative results demonstrate clearer edges, more accurate planar surfaces, and stable rendering of dynamic objects under severe illumination changes.

Beyond quantitative metrics, the authors showcase scene editing capabilities (object replacement and deletion) that preserve realistic lighting and geometry, highlighting the practical utility of a high‑fidelity, editable 3D representation for autonomous‑driving simulation and data augmentation.

In summary, LR‑SGS leverages the complementary strengths of LiDAR and RGB by converting LiDAR intensity into a lighting‑invariant reflectance channel, introduces a structure‑aware Salient Gaussian representation, and employs adaptive density control with a Salient‑Transform mechanism. These innovations enable robust, high‑quality reconstruction of self‑driving scenes with lower computational cost, paving the way for more efficient simulation pipelines and richer training data generation. Future work may explore finer modeling of dynamic deformations and robustness to real‑time sensor calibration errors.
