Towards unified brain-to-text decoding across speech production and perception

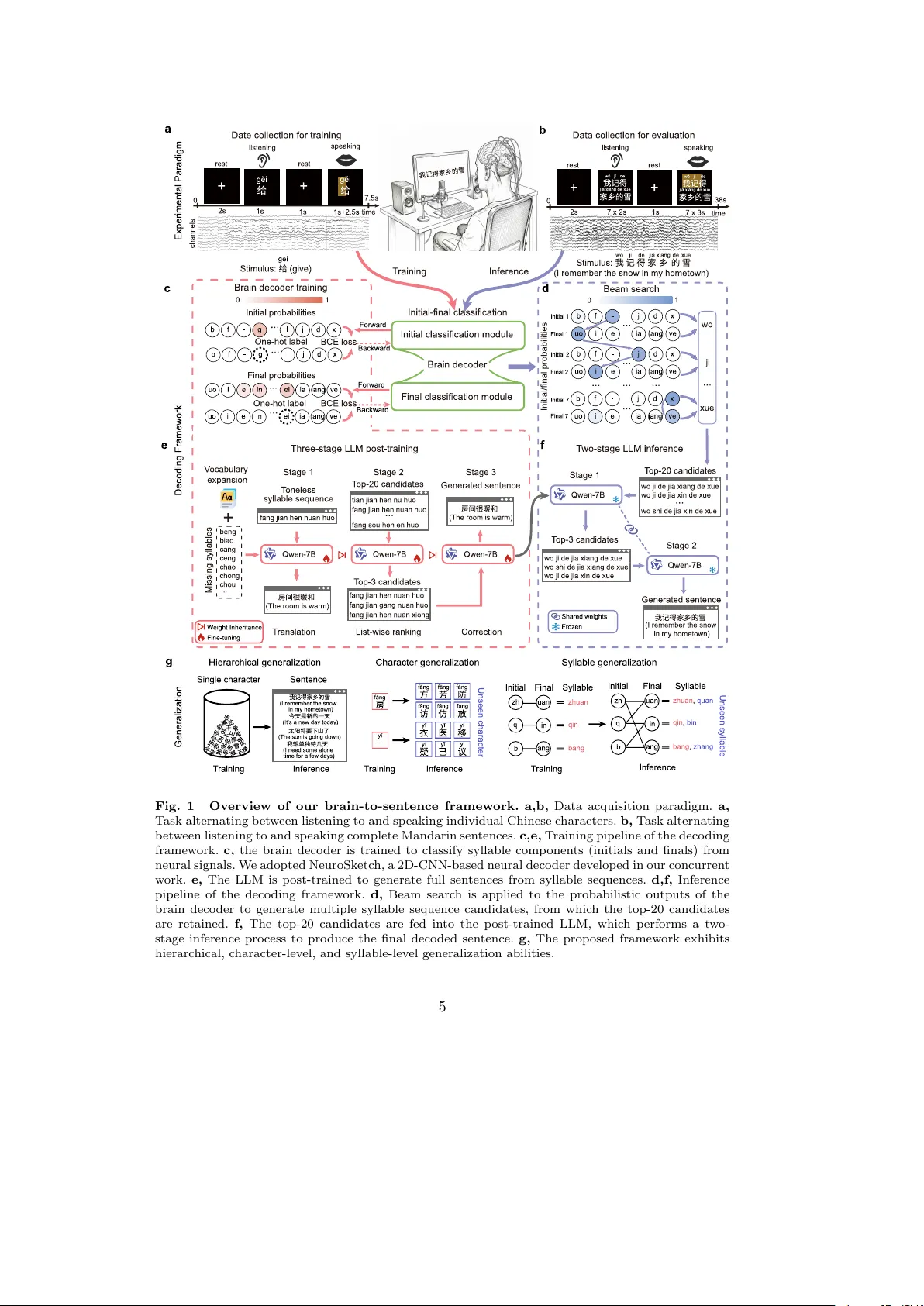

Speech production and perception are the primary modes of everyday human communication. Prior brain-to-text decoding studies have largely focused on a single modality and on alphabetic languages. Here, we present a unified brain-to-sentence decoding framework for …

Authors: Zhizhang Yuan, Yang Yang, Gaorui Zhang