Reinforcement Learning for Diffusion LLMs with Entropy-Guided Step Selection and Stepwise Advantages

Reinforcement learning (RL) has been effective for post-training autoregressive (AR) language models, but extending these methods to diffusion language models (DLMs) is challenging due to intractable sequence-level likelihoods. Existing approaches th…

Authors: Vishnu Teja Kunde, Fatemeh Doudi, Mahdi Farahbakhsh

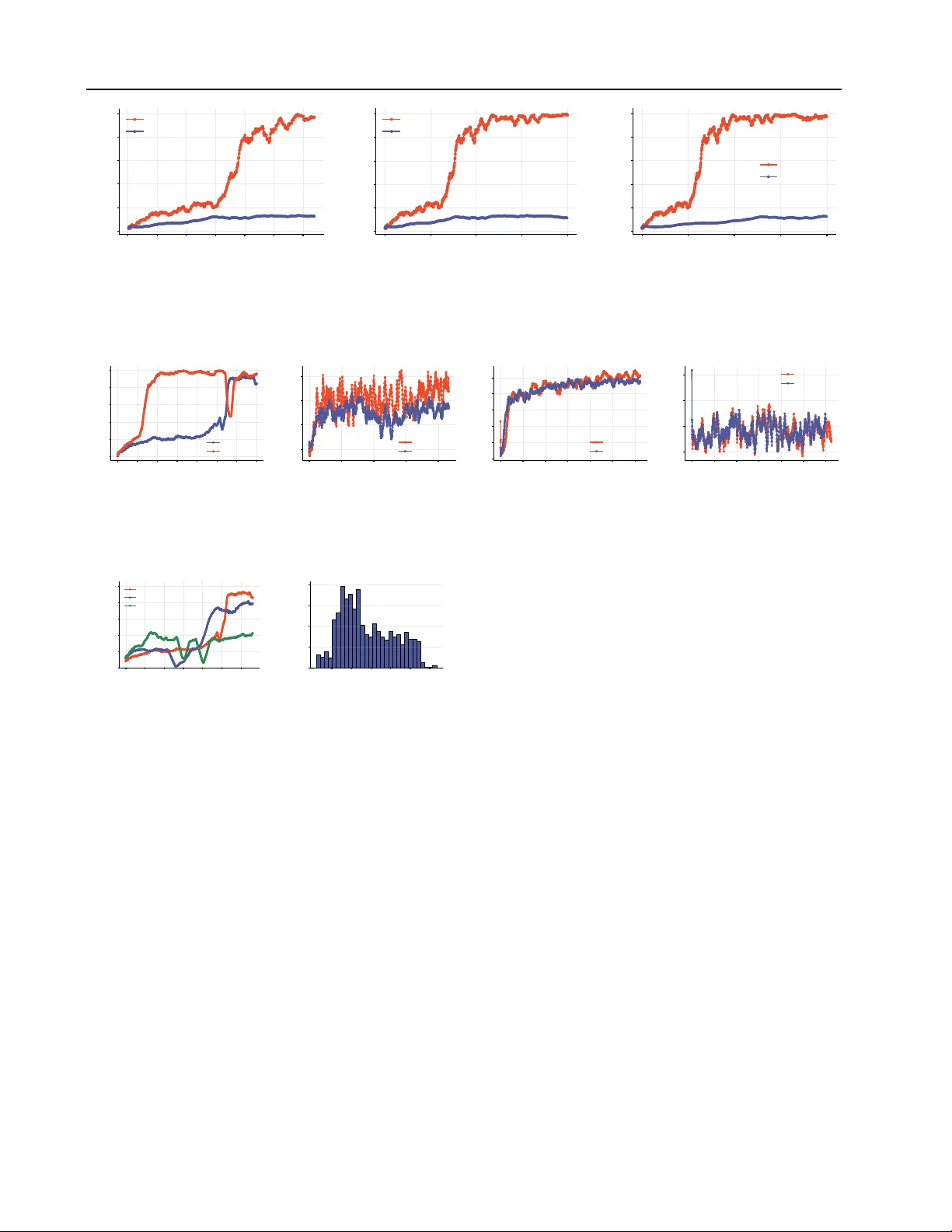

Reinf or cement Learning f or Diffusion LLMs with Entr opy-Guided Step Selection and Stepwise Advantages V ishnu T eja Kunde * 1 Fatemeh Doudi * 1 Mahdi Farahbakhsh 1 Dileep Kalathil 1 Krishna Narayanan 1 Jean-Francois Chamberland 1 Abstract Reinforcement learning (RL) has been effecti ve for post-training autoregressi ve (AR) language models, but e xtending these methods to diffusion language models (DLMs) is challenging due to intractable sequence-lev el likelihoods. Existing approaches therefore rely on surrogate likelihoods or heuristic approximations, which can introduce bias and obscure the sequential structure of de- noising. W e formulate dif fusion-based sequence generation as a finite-horizon Markov decision process ov er the denoising trajectory and derive an exact, unbiased policy gradient that decom- poses o ver denoising steps and is expressed in terms of intermediate advantages, without requir - ing explicit e valuation of the sequence likelihood. T o obtain a practical and compute-efficient esti- mator , we (i) select denoising steps for policy up- dates via an entropy-guided approximation bound, and (ii) estimate intermediate advantages using a one-step denoising reward naturally provided by the diffusion model, av oiding costly multi-step rollouts. Experiments on coding and logical rea- soning benchmarks demonstrate state-of-the-art results, with strong competiti ve performance on mathematical reasoning, outperforming existing RL post-training approaches for DLMs. Code is av ailable at Github. 1. Introduction Diffusion Language Models (DLMs) (Sahoo et al., 2024; Nie et al., 2025; Y e et al., 2025) have recently emer ged as a compelling alternative to autoregressiv e language models (ARLMs). Instead of generating tokens sequentially from left to right, DLMs produce text through an iterativ e de- noising process, typically via masked discrete diffusion, en- * Equal contribution 1 Department of Electrical and Computer Engineering, T exas A&M uni versity , College Station, T exas. Cor- respondence to: Dileep Kalathil < dileep.kalathil.tamu.edu > . Pr eprint. Mar ch 16, 2026. abling bidirectional context and multi-token parallelism for higher token throughput while maintaining competitiv e out- put quality . These advantages ha ve spurred rapid progress in DLM architectures and algorithms, including multimodal generation (Y ang et al., 2025; Li et al., 2025), long-context modeling (Liu et al., 2025), accelerated inference (W u et al., 2025), and code generation (Song et al., 2025). Moti vated by the transformativ e impact of reinforcement learning (RL) post-training on ARLMs (Guo et al., 2025), there is now growing interest in de veloping effecti ve and scalable RL algorithms tailored to DLMs. Despite the success of RL post-training for ARLMs, ex- tending these methods to DLMs is not a direct translation. RL for ARLMs relies on causal token-wise factorization, which yields a natural tok en-space Marko v decision process (MDP) and enables efficient computation of log-likelihoods and importance ratios. DLMs fundamentally break this structure: generation proceeds through a denoising trajec- tory in masked space, and the likelihood of the final output does not admit a readily usable tok en-wise decomposition. As a result, na ¨ ıvely porting standard polic y-gradient objec- tiv es leads to intractable or prohibitively expensiv e likeli- hood ev aluations. At the same time, diffusion generation offers opportunities largely absent in ARLMs. Model uncer- tainty ev olves non-uniformly along the denoising trajectory , motiv ating stepwise cr edit/advantage assignment and step- wise compute allocation across dif fusion steps. Moreover , masked DLMs output token distributions for all positions at each step, enabling a lightweight “full unmasking” that can serve as an increasingly accurate proxy of the e ventual out- put later in the trajectory , and thus provide partial learning signals for intermediate steps without an explicit pretrained value function. T ogether , these challenges and opportuni- ties motiv ate a principled approach to RL for DLMs that exploits dif fusion structure. A growing body of work has begun exploring RL post- training for diffusion LMs, largely via surrogate objec- tiv es and tractable likelihood approximations. d1 (Zhao et al., 2025b) adapts GRPO to DLMs using mean-field likelihood approximations; wd1 (T ang et al., 2025) mod- ifies the objectiv e to reduce instability while still relying 1 Entropy-Guided Stepwise P olicy Optimization with Stepwise Advantages on proxy likelihoods; SPG (W ang et al., 2025a) optimizes pessimistic/optimistic bound-based surrogates; d2 (W ang et al., 2025c) deri ves a trajectory-le vel formulation b ut em- ploys step-merging estimators to obtain tractable updates; T raceRL (W ang et al., 2025d) introduces trajectory-aware training with a dif fusion-based value model; and DiFFPO (Zhao et al., 2025a) proposes of f-policy training via surro- gate policies with improv ed likelihood approximations and importance sampling. While these methods are practically effecti ve, they typically begin with a chosen appr oximation (to the likelihood, objectiv e, or advantage) and optimize the resulting surrogate directly , leaving the connection to the true RL objecti ve less e xplicit and step-lev el optimization across the denoising trajectory largely implicit. W e take a complementary , first-principles approach that makes the diffusion structure explicit, rather than treating a DLM as a black-box sampler . Instead of beginning with a particular surrogate likelihood approximation, we ask the fundamental questions that should underlie an y principled RL method for dif fusion LMs: What is the right MDP for- malism for DLMs? What is the exact polic y gradient for the true RL objecti ve? How can it be approximated tractably at scale? And how can dif fusion-time structure enable step- wise advantage (credit) estimation and compute allocation across denoising steps? W e answer these questions in the affirmati ve, leading to the follo wing main contributions. • MDP formalism f or DLMs: W e formulate masked diffu- sion generation as a finite-horizon MDP ov er denoising steps, making the structure needed for RL explicit. • Exact policy gradient with stepwise advantage: Build- ing on the MDP formalism, we derive an exact policy- gradient theorem that decomposes ov er denoising steps, yielding a principled notion of stepwise advantages . • T ractable estimators exploiting diffusion structure: W e turn this exact theory into a practical algorithm by exploiting two DLM-nativ e capabilities absent in ARLMs. First, we allocate training compute acr oss denoising steps using the model’ s intrinsic uncertainty: higher-entropy steps are prioritized under a fixed budget. W e call this method E ntropy- G uided S tepwise P olicy O ptimization ( EGSPO ). Second, in addition to the entropy-guided step selection, we estimate stepwise advantages via a lightweight full-sequence “one-shot” completion from intermediate states, yielding intermediate learning sig- nals without an e xtra value network or costly multi-step rollouts. W e call this method E ntrop y- G uided S tepwise P olicy O ptimization with S tepwise A dv antages ( EGSPO- SA ). T ogether , these ideas make RL training scalable for DLMs while preserving the diffusion-time structure. • State-of-the-art results on coding/reasoning: Empiri- cally , we achie ve state-of-the-art results on standard cod- F igure 1. Overvie w of the performance on coding and reasoning tasks. Our approach outperforms the existing baselines in coding and logical reasoning tasks, while maintaining competitiv e perfor- mance in mathematical reasoning tasks. ing and logical reasoning benchmarks, outperforming existing RL post-training approaches for DLMs. 2. Related W ork Diffusion Language Models: Diffusion language mod- els (DLMs) (Sahoo et al., 2024; Nie et al., 2025; Y e et al., 2025) extend discrete diffusion frame works (Austin et al., 2021a; Lou et al., 2023; Song et al., 2025) to natural lan- guage, enabling parallel multi-token generation. Prior work has explored architectural simplifications (Shi et al., 2024), theoretical connections between discrete and continuous dif- fusion (Zhao et al., 2024), and multimodal e xtensions (Y ang et al., 2025; Li et al., 2025). Another line of research fo- cuses on inference efficienc y , including remasking schemes (W ang et al., 2025b), token reordering (Arriola et al., 2025; Sahoo et al., 2025), and entropy- or confidence-based sam- pling strategies (Ben-Hamu et al., 2025; W u et al., 2025). RL f or LLMs and Reasoning: Reinforcement learning has become central to enhancing the reasoning capabili- ties of auto-regressi ve language models (ARLMs) (OpenAI, 2024), with policy-gradient methods such as PPO (Schul- man et al., 2017) and GRPO (Shao et al., 2024) enabling effecti ve fine-tuning on mathematical reasoning, coding, and planning tasks (Guo et al., 2025; Zhang et al., 2025). RL for Diffusion Image Models: RL has also been ap- plied to diffusion models for image generation, where re- wards are commonly deri ved from pretrained classifiers, aesthetic metrics, or do wnstream task objecti ves. Prior work primarily adopts polic y-gradient methods (Black et al., 2024; Fan et al., 2023; Uehara et al., 2024) or preference- based direct policy optimization (W allace et al., 2024; Y ang et al., 2024). These methods operate in continuous diffusion 2 Entropy-Guided Stepwise P olicy Optimization with Stepwise Advantages steps Choose steps wisely Choose your steps wisely steps Choose (a) Entropy-Guided Step Selection steps Choose steps wisely Choose your steps wisely steps Choose Pick your steps random (b) Stepwise Advantage Estimation F igure 2. (a) Illustration of entropy-guided denoising step selection: At each denoising step t , the entropy H t of the unmasking policy distribution is computed and used to identify the K informativ e steps that have maximum entropy . In the figure, assuming H 3 > H 1 > H 2 > H 0 and K = 2 , the two highest-entropy steps 3 , 1 are selected for per-step policy gradient computation (mark ed by solid lines). (b) Illustration of stepwise advantage estimation: From state x t +1 , a greedy one-step completion ˆ x 0 | t +1 provides a baseline rew ard approximating the state value. The stepwise advantage A t measures the additional reward gained by taking the denoising action at step t and continuing to x 0 . settings with dense rewards, unlik e diffusion language mod- els with discrete decisions and long-horizon sparse credit assignment. 3. Preliminaries and Pr oblem Formulation In this section, we pro vide a brief o vervie w of the masked diffusion language model (MDLM) and reinforcement learn- ing for language models. Notations. Let x = x 0: T , 1: L denote a sequence of T + 1 steps of L -length sentences. Each of x t can take v alues from a finite set of vocabulary V ∪ { m } where m is a special token denoting mask. The state x 0 denotes the clean sentence and can take v alues only in V . 3.1. Masked Diffusion Language Models The MDLMs (Sahoo et al., 2024; Nie et al., 2025; Song et al., 2025; Y e et al., 2025) in volv e a forwar d masking (noising) process and re verse unmasking (denoising). During the training, the masking process is implemented by taking a clean sequence x 0 = x 0 , 1: L and obtaining a partially masked sequence x t as x t,ℓ ∼ q t | 0 ( · | x 0 ,ℓ ) = Cat( · | α t x 0 ,ℓ + (1 − α t ) m ) , where α t ∈ [0 , 1] is a strictly decreasing noise schedule typically chosen to be α t = 1 − t T for t ∈ [0 , T − 1] . The re verse unmasking process is parameterized by a neural network f θ that specifies the distribution over tokens at each position. Given a partially masked sequence at time t and for any s < t , the denoised sample is obtained as x s,ℓ ∼ π s | t θ ( · | x t ) = q s | t, 0 ( · | x t , x 0 = f θ ( · | x t )) . (1) In practice, a sample x s is obtained starting from x t iter- ativ ely as π θ s : t − 1 | t ( x s : t − 1 | x t ) ≜ Q s u = t − 1 π θ u | u +1 ( x u | x u +1 ) . The complete re verse sampling process starts from x T and samples iterati vely for t = T − 1 , . . . , 0 . Gi ven x t +1 , let M t = M t ( x t +1 ) ≜ { ℓ : x t +1 ,ℓ = m } denote the set of masked positions. The positions to unmask are typi- cally decided using some heuristic based on f θ ,ℓ ( · | x t +1 ) for ℓ ∈ M t ( x t +1 ) . The dif fusion LM model f θ is trained by minimizing the negati ve evidence lo wer bound (NELBO): − E x 0 ∼ p data , x t ∼ q t | 0 ( ·| x 0 ) [ | x t | X ℓ =1 I { x t,ℓ = m } log f θ ,ℓ ( x 0 ,ℓ | x t )] . This essentially means that f θ models a product distrib ution ov er the clean tokens in the masked position of the input sequence x t , which is equi valent to getting a probability distribution over x 0 giv en x t . W e denote this one-step denoising distribution as π θ 0 | t , giv en by π θ 0 | t ( x 0 | x t ) = Y ℓ : x t,ℓ = m f θ ,ℓ ( x 0 ,ℓ | x t ) . (2) In the following, we drop the superscript that denotes the time steps in the policy π θ to lighten the notation. 3.2. Reinfor cement Learning for LLMs Giv en a query q ∼ D , an LM generates an output x 0 and re- cei ves a rew ard r ( x 0 , q ) . RL fine-tuning seeks to maximize the expected re ward: J ( θ ) ≜ E q , x 0 ∼ π θ ( ·| q ) [ r ( x 0 , q )] . (3) Policy-gradient methods are standard tools for optimizing (3) . Among them, the Group Relati ve Policy Optimization (GRPO) (Shao et al., 2024) is widely popular for RL with V erifiable Re ward (RL VR) tasks, as it eliminates the need 3 Entropy-Guided Stepwise P olicy Optimization with Stepwise Advantages for a trained value model used in PPO-style algorithms (Schulman et al., 2017). The GRPO loss function for an auto-regressi ve (AR) LM is giv en by L AR ( θ ) = − E q , x 0 ∼ π θ old ( ·| q ) h 1 L L X ℓ =1 min( ρ ℓ A π θ old , clip( ρ ℓ , 1 − ϵ, 1 + ϵ ) A π θ old ) i + β D KL ( π θ ∥ π ref ) (4) where π θ old denotes the sampling policy , ρ ℓ = π θ ( x 0 ,ℓ | x 0 ,<ℓ , q ) π θ old ( x 0 ,ℓ | x 0 ,<ℓ , q ) denotes the per-token importance ratio, β controls the strength of the KL regularization w .r .t. the base model π ref , and the adv antage A π θ is defined for an y policy π θ as A π θ ( x 0 , q ) = r ( x 0 , q ) − E x 0 ∼ π θ ( ·| q ) [ r ( x 0 , q )] , (5) which is approximated by a Monte Carlo estimate. More precisely , for a given query q , we sample x j ∼ π θ old ( · | q ) , 1 ≤ j ≤ G , and estimate A π θ old ( x j 0 , q ) = r ( x j 0 , q ) − 1 G G X i =1 r ( x i 0 , q ) . (6) A well-known limitation of GRPO is that the same sequence- lev el advantage A π θ old is broadcast to all tokens, whereas PPO-style methods can (in principle) use token-specific advantages via a learned value function. This credit as- signment issue becomes substantially more pronounced for diffusion LMs, where decision-making occurs over denois- ing steps rather than token positions. 3.3. Problem F ormulation: RL for Diffusion LLMs RL finetuning of an AR-LLM exploits its auto-regressiv e structure by modeling the token generation as a tok en-space MDP , and the LLM as a polic y . Since x 0 = x 0 , 1: L , we can define the initial state as q , state at step ℓ as ( q , x 0 ,<ℓ ) and action at step ℓ as the next token x 0 ,ℓ ∼ π θ ( · | x 0 ,<ℓ , q ) . This MDP formalism offers a significant computational ad- vantage in training, because any policy gradient algorithm for solving (3) has to calculate the term π θ ( x 0 | q ) , and here it decomposes as π θ ( x 0 | q ) = Q L ℓ =1 π θ ( x 0 ,ℓ | x 0 ,<ℓ , q ) . Due to causal attention of AR-LLM, all these terms can be efficiently computed using only a single model forward pass. Unfortunately , diffusion LMs break these con ve- niences because there is no token-wise causal factorization of π θ ( x 0 | q ) av ailable at training time. As a consequence, naiv ely porting the RL training objecti ves for AR-LLM, such as Eq. (4), would require ev aluating (explicitly or im- plicitly) sequence-lev el likelihood terms that are intractable or prohibitiv ely expensiv e for diffusion models. Recent work has made progress on RL for diffusion LMs by introducing surrogate objecti ves and likelihood approxi- mations. Representativ e examples include: (i) mean-field or one-step likelihood approximations to enable GRPO-style ratios (Zhao et al., 2025b); (ii) ratio-free weighted updates that still rely on tractable proxy likelihoods (T ang et al., 2025); (iii) pessimistic bound-based objectiv es that opti- mize lower/upper bounds on likelihood quantities (W ang et al., 2025a); and (iv) trajectory-based formulations with biased step-merging estimators or additional structural as- sumptions for one-pass ev aluation (W ang et al., 2025c). These approaches are practical, b ut they necessarily make compromises: the optimized objecti ve may de viate from 3, the resulting gradients may be biased, and, crucially , the role of individual denoising steps in producing the final re ward is often ignored. In this work, we take a complementary approach. Rather than be ginning with a particular surrogate likelihood ap- proximation, we ask the foundational questions that should underlie any principled RL method for dif fusion LMs. ( i ) MDP formalism: What are the states, actions, and tran- sitions that faithfully represent dif fusion-based generation while remaining amenable to RL analysis? ( ii ) P olicy-gradient for Eq. (3) : Can we deriv e an unbiased gradient expression that respects the sequential structure of denoising? Ho w can we estimate that gradient tractably? ( iii ) Stepwise advantages: In AR decoding, actions are tied to token positions; in diffusion decoding, actions are tied to denoising step. Can diffusion structure yield improv ed stepwise advantages that do not ha ve direct AR analogues? W e provide positiv e answers to these questions in Section 4. 4. Methodology 4.1. Diffusion MDP and Policy Gradient Theor em W e formalize the unmasking process as a T -step MDP . The state and action at time t is defined as s t = ( x T − t , q ) and a t = x T − t − 1 . The transition probability function is deter- ministic by construction. The re ward at time t is giv en by r t = 0 for all t < T and r T ( s T ) = r ( x 0 , q ) . Notice that the time index moves forward in the MDP , but it moves backwards in the diffusion process. T o keep the notation consistent, we only use the time index corresponding to the diffusion in defining the quantities of interest. The value of a polic y π at time step t is gi ven by V π t ( x t , q ) = E x ...... • a strict format rew ard of +0 . 5 for e xact adherence to the expected structure, including correct line breaks; • an integer -answer reward of +0 . 5 if the predicted answer is a valid inte ger; • a correctness rew ard of +2 . 0 when the predicted answer matches the ground-truth solution. B.2. MA TH500. For MA TH500, we train on the training split and ev aluate on the test split. The reward function consists of formatting and correctness components: • a format reward of 1 . 0 if 4123324114322314 • EGSPO (Correct) To solve the 4x4 Sudoku puzzle, we need to fill in the empty cells with the digits 1-4 such that each row, column, and 2x2 box contains the digits 1-4 exactly once. We can start by analyzing the given puzzle and filling in the empty cells according to the rules. We need to ensure that each row, column, and 2x2 box contains the digits 1-4 exactly once. 4123324114322314 • d1 (Incorrect) To solve this 4x4 Sudoku puzzle, we need to fill in the empty cells with digits 1-4 such that each row, column, and 2x2 box contains all digits exactly once. We can start by placing the known digits and then filling in the empty cells guided by the rules of Sudoku. We will use a systematic approach to fill in the grid, ensuring that each row, column, and box conforms to the Sudoku rules. 1234314214322314 Sudoku Example Problem. Solve the following 4 × 4 Sudoku puzzle: 0400010002311340 Ground T ruth. 2413312442311342 Model Generations. • EGSPO-SA (Correct) To solve this Sudoku puzzle, we need to fill the empty cells with the digits 1-4 such that each row, each, and each 2x2 box contains all the digits exactly once. We can start by filling the first row with the digits 4 and 1. Then, we can fill the second row with the digits 3 and 3. Next, we can fill the remaining rows and columns with the remaining digits. 2413312442311342 • EGSPO (Correct) To solve the 4x4 Sudoku puzzle, we need to fill in the empty cells with the digits 1-4 such that each row, column, and 2x2 box contains the digits 1-4 exactly once. We can start by analyzing the given puzzle and filling in the empty cells according to the rules. We need to ensure that each row, column, and 2x2 box contains the digits 1-4 exactly once. 2413312442311342 • d1 (Incorrect) To solve this 4x4 Sudoku puzzle, we need to fill in the empty cells with digits 1-4 such that each row, column, and 2x2 box contains all digits exactly once. We can start by placing the known digits and then use logical deduction to fill in the remaining cells. We will use the process of and process of elimination to solve the puzzle. . . . . . 1234412323413412 14 Entropy-Guided Stepwise P olicy Optimization with Stepwise Advantages F . LLM Usage Large language models were used solely as an editorial aid to improve clarity and presentation. No scientific content, including methods, algorithms, formulas, experimental design, or results, was generated or suggested by LLMs. All technical contributions and conclusions are the original w ork of the authors. 15

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment