Spatial-TTT: Streaming Visual-based Spatial Intelligence with Test-Time Training

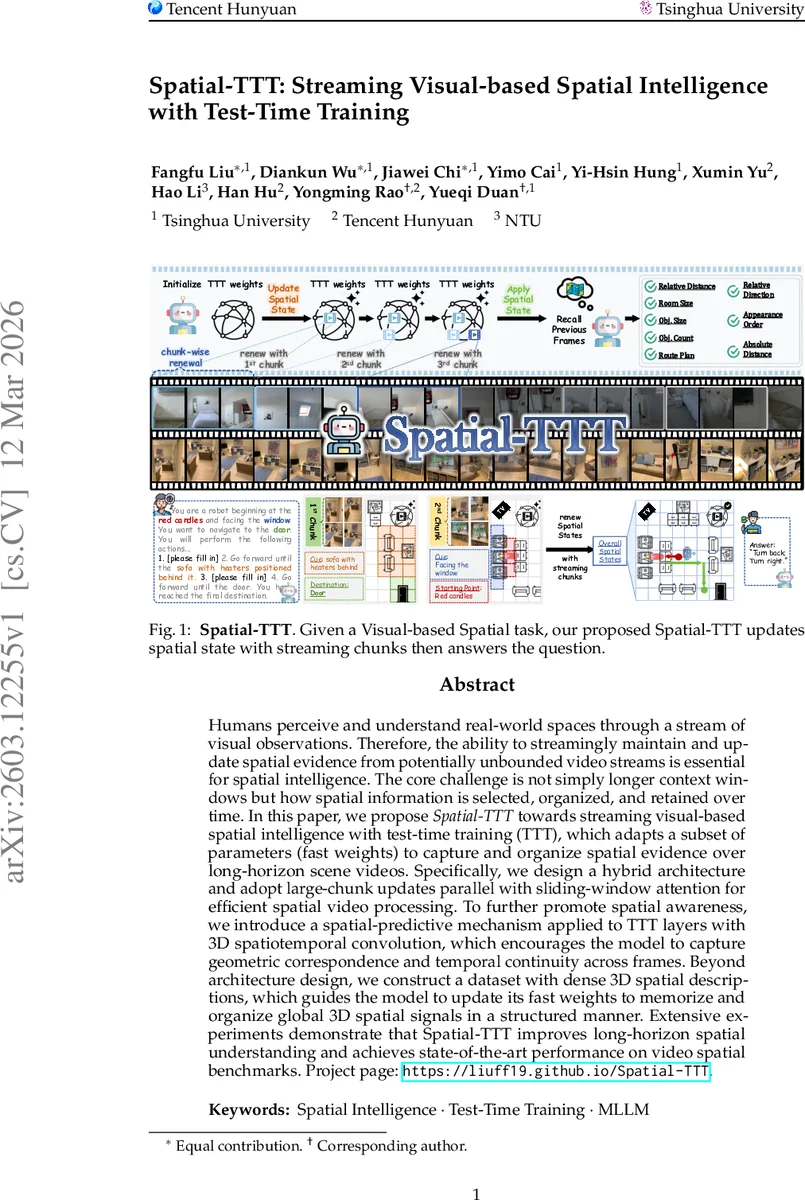

Humans perceive and understand real-world spaces through a stream of visual observations. Therefore, the ability to streamingly maintain and update spatial evidence from potentially unbounded video streams is essential for spatial intelligence. The core challenge is not simply longer context windows but how spatial information is selected, organized, and retained over time. In this paper, we propose Spatial-TTT towards streaming visual-based spatial intelligence with test-time training (TTT), which adapts a subset of parameters (fast weights) to capture and organize spatial evidence over long-horizon scene videos. Specifically, we design a hybrid architecture and adopt large-chunk updates parallel with sliding-window attention for efficient spatial video processing. To further promote spatial awareness, we introduce a spatial-predictive mechanism applied to TTT layers with 3D spatiotemporal convolution, which encourages the model to capture geometric correspondence and temporal continuity across frames. Beyond architecture design, we construct a dataset with dense 3D spatial descriptions, which guides the model to update its fast weights to memorize and organize global 3D spatial signals in a structured manner. Extensive experiments demonstrate that Spatial-TTT improves long-horizon spatial understanding and achieves state-of-the-art performance on video spatial benchmarks. Project page: https://liuff19.github.io/Spatial-TTT.

💡 Research Summary

Spatial‑TTT tackles the problem of maintaining and reasoning over 3‑D spatial information in unbounded video streams, a capability that current multimodal large language models (MLLMs) lack due to their training on static 2‑D image‑text pairs. The authors adopt the test‑time training (TTT) paradigm, which updates a small set of parameters—fast‑weights—online while processing each test frame. These fast‑weights act as a compact, non‑linear memory that continuously encodes key‑value associations from the visual stream, allowing the model to accumulate spatial evidence over long horizons without the quadratic cost of full‑attention Transformers.

The core architecture is a hybrid transformer decoder in which 75 % of the layers are TTT layers and the remaining 25 % are conventional self‑attention “anchor” layers. Anchor layers preserve the pretrained cross‑modal alignment and high‑level semantic reasoning, while TTT layers compress long‑range temporal dependencies into the fast‑weights, yielding sub‑linear memory growth. To make TTT practical for video, the authors replace the usual small token chunks (16–64 tokens) with large chunks that span multiple frames, dramatically improving GPU utilization and keeping spatially coherent content together. Because large chunks cannot attend to themselves during fast‑weight updates (to avoid causal leakage), the authors run a sliding‑window attention (SWA) branch in parallel with the TTT branch. The SWA window is at least as large as the chunk, guaranteeing that all intra‑chunk token interactions are covered while respecting causality.

A key innovation is the spatial‑predictive mechanism. Standard TTT uses point‑wise linear projections for Q/K/V, ignoring the neighborhood structure of visual tokens. Spatial‑TTT injects lightweight depth‑wise 3‑D spatiotemporal convolutions into the TTT branch, aggregating local spatiotemporal context before the fast‑weight network processes it. This encourages the fast‑weights to learn predictive mappings between neighboring frames, capturing geometric correspondences and temporal continuity, which stabilizes online updates and improves spatial reasoning.

Training fast‑weights requires supervision that tells the model what spatial information to retain. Existing spatial datasets are sparse, offering only local cues. The authors therefore construct a dense scene‑description dataset where each video is paired with a comprehensive textual description covering global layout, object counts, sizes, absolute and relative distances, and directional relations. This rich supervision provides strong gradient signals for learning effective fast‑weight dynamics that preserve structured 3‑D scene information across the stream.

Experiments on several video‑spatial benchmarks (VSI‑Bench, STI‑Bench, VSI‑Super, etc.) show that Spatial‑TTT outperforms prior state‑of‑the‑art methods by a large margin, especially on long‑horizon tasks involving thousands of frames. Ablation studies confirm the importance of each component: the hybrid 3:1 TTT‑to‑anchor ratio, large‑chunk updates, parallel SWA, and the 3‑D convolutional predictor. Memory usage scales as O(√T) rather than O(T²), and inference speed remains practical thanks to the parallel chunk processing.

In summary, Spatial‑TTT demonstrates that test‑time training, when combined with a hybrid architecture, large‑chunk processing, sliding‑window attention, and a spatial‑predictive convolutional module, can endow MLLMs with robust streaming visual‑based spatial intelligence. The work opens avenues for deploying such models in embodied robotics, autonomous navigation, and augmented reality systems where continuous spatial perception and reasoning are essential. Future directions include extending the approach to more complex embodied tasks, optimizing fast‑weight memory further, and integrating explicit 3‑D geometry representations.

Comments & Academic Discussion

Loading comments...

Leave a Comment