CreativeBench: Benchmarking and Enhancing Machine Creativity via Self-Evolving Challenges

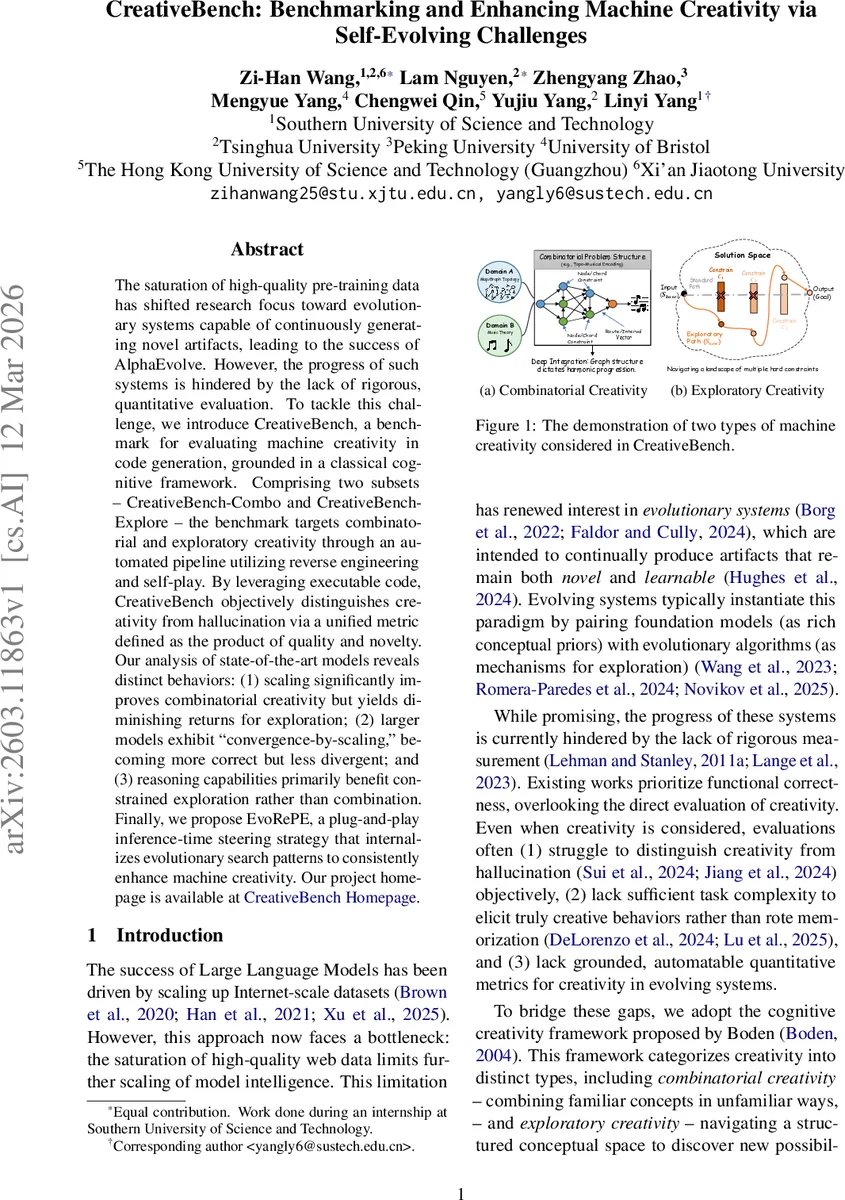

The saturation of high-quality pre-training data has shifted research focus toward evolutionary systems capable of continuously generating novel artifacts, leading to the success of AlphaEvolve. However, the progress of such systems is hindered by the lack of rigorous, quantitative evaluation. To tackle this challenge, we introduce CreativeBench, a benchmark for evaluating machine creativity in code generation, grounded in a classical cognitive framework. Comprising two subsets – CreativeBench-Combo and CreativeBench-Explore – the benchmark targets combinatorial and exploratory creativity through an automated pipeline utilizing reverse engineering and self-play. By leveraging executable code, CreativeBench objectively distinguishes creativity from hallucination via a unified metric defined as the product of quality and novelty. Our analysis of state-of-the-art models reveals distinct behaviors: (1) scaling significantly improves combinatorial creativity but yields diminishing returns for exploration; (2) larger models exhibit ``convergence-by-scaling,’’ becoming more correct but less divergent; and (3) reasoning capabilities primarily benefit constrained exploration rather than combination. Finally, we propose EvoRePE, a plug-and-play inference-time steering strategy that internalizes evolutionary search patterns to consistently enhance machine creativity.

💡 Research Summary

The paper introduces CreativeBench, a novel benchmark designed to quantitatively evaluate machine creativity in code generation. Drawing on Boden’s cognitive creativity framework, the benchmark splits into two complementary subsets: CreativeBench‑Combo, which measures combinatorial creativity (the ability to recombine familiar concepts in novel ways), and CreativeBench‑Explore, which assesses exploratory creativity (the capacity to navigate a structured conceptual space under increasingly restrictive constraints).

Data construction is fully automated. For Combo, the authors employ a “code‑first” reverse‑engineering pipeline: they first ask GPT‑4.1 to fuse code components from different domains into a single, executable solution, verify its correctness in a sandbox, generate corresponding test cases, and finally synthesize a clear problem statement that describes the solution. This guarantees that every benchmark instance has a verified reference implementation, allowing the metric to cleanly separate genuine creativity from hallucination.

Explore uses a self‑play mechanism where a Constraint Generator and a Solver interact iteratively. Starting from an unconstrained problem, the Generator adds a new negative constraint that invalidates a specific algorithmic choice made by the Solver. The Solver must then refine its solution to satisfy the accumulated constraint set, using a reference‑guided refinement loop with a limited number of attempts per level. The process continues until the Solver fails, producing a hierarchy of increasingly difficult, constraint‑rich tasks that push models toward structurally distinct algorithms.

The core evaluation metric is defined as Creativity = Quality × Novelty. Quality combines Pass@1 success rates with an LLM‑as‑judge evaluation, both executed in a sandboxed environment. Novelty is measured by a “logic distance” that blends embedding‑based similarity and n‑gram divergence between a candidate program and its baseline reference. Human expert validation confirms 89.1 % instance validity, and the automated metric correlates strongly with human judgments (Spearman ρ = 0.78).

Extensive experiments involve state‑of‑the‑art foundation models (GPT‑4, Gemini‑3‑Pro, Claude‑2, etc.) paired with various evolutionary algorithms (AlphaEvolve, FunSearch, GEP‑A). Three key insights emerge: (1) Scaling dramatically improves combinatorial creativity but yields diminishing returns for exploratory creativity, indicating that larger models excel at recombination but struggle to generate fundamentally new algorithmic strategies under tight constraints. (2) “Convergence‑by‑scaling” is observed: larger models become more correct yet less divergent, reducing the breadth of generated solutions. (3) Reasoning capabilities primarily benefit constrained exploration rather than combinatorial recombination.

To translate these findings into a practical improvement, the authors propose EvoRePE (Evolutionary Representation Engineering). EvoRePE extracts a “creativity vector” from the activation differences between high‑creativity and low‑creativity solutions observed during evolution. At inference time, this vector is injected into the model’s latent representation (e.g., added to the input embedding or intermediate hidden states), steering the model toward the creativity direction identified in the evolutionary trajectory. Empirical results show that EvoRePE consistently boosts the Creativity score by roughly 12 % across different models and evolutionary strategies, and the gains are orthogonal to the underlying search algorithm.

In summary, CreativeBench offers a rigorously constructed, automatically generated, and objectively measurable benchmark for code‑centric machine creativity, addressing prior shortcomings such as reliance on subjective human ratings, insufficient task difficulty, and lack of grounded metrics. The EvoRePE steering technique demonstrates that a portion of evolutionary search can be internalized as a latent‑space bias, providing a lightweight, plug‑and‑play method to enhance creativity at inference time. This work paves the way for more systematic development and evaluation of continuously creative AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment