One-Shot Individual Claims Reserving

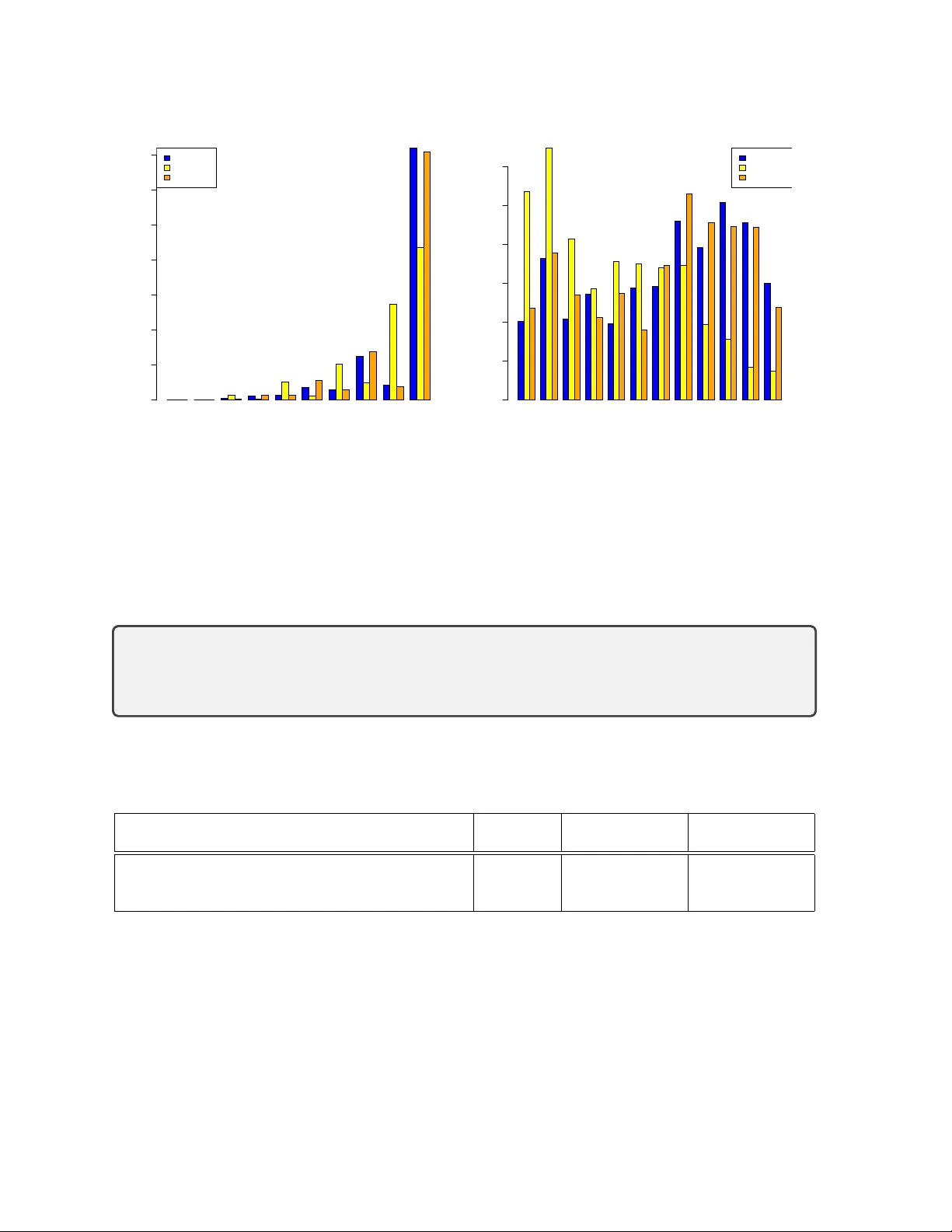

Individual claims reserving has not yet become established in actuarial practice. We attribute this to the absence of a satisfactory methodology: existing approaches tend to be either overly complex or insufficiently flexible and robust for practical…

Authors: Ronald Richman, Mario V. Wüthrich