EReCu: Pseudo-label Evolution Fusion and Refinement with Multi-Cue Learning for Unsupervised Camouflage Detection

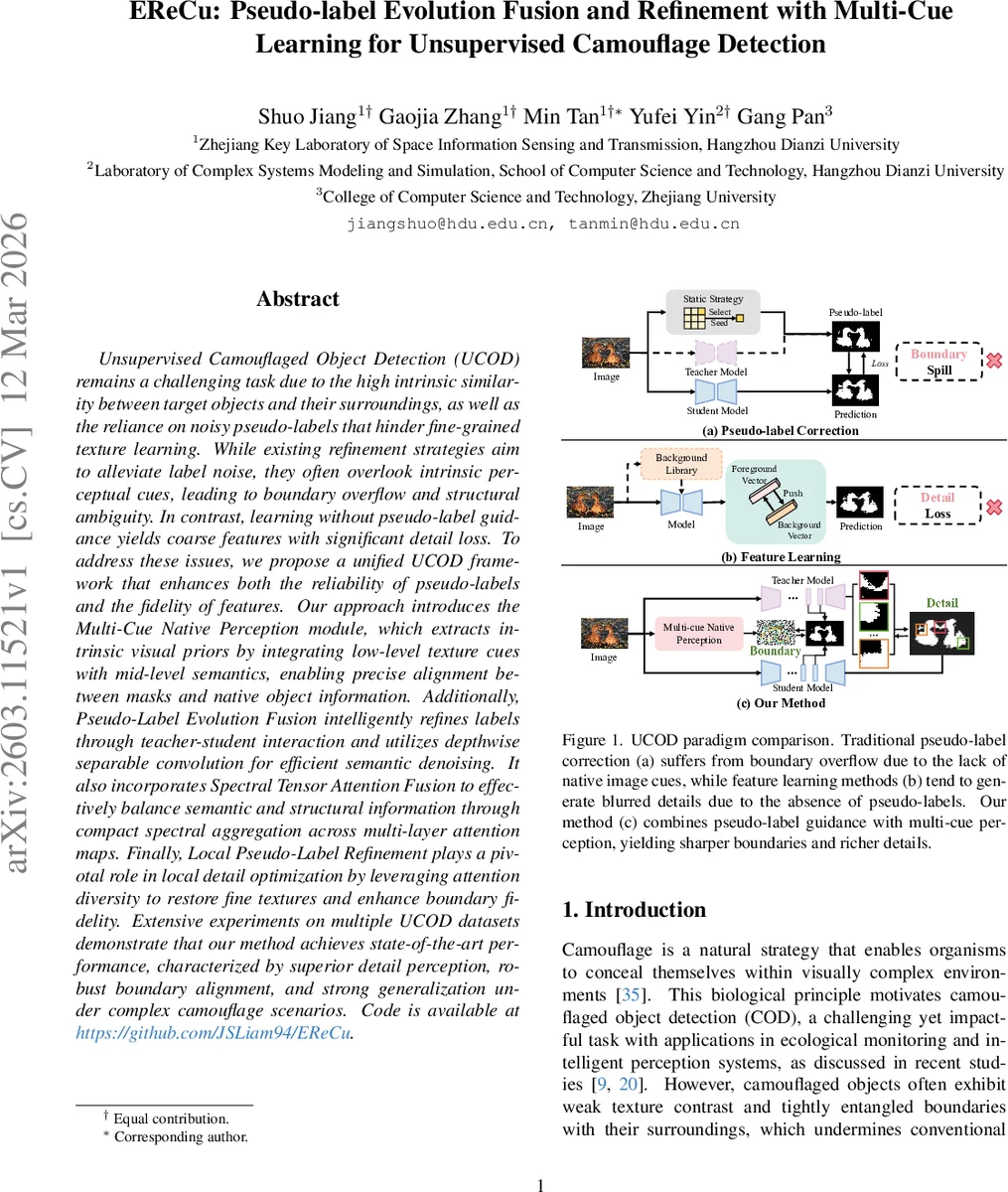

Unsupervised Camouflaged Object Detection (UCOD) remains a challenging task due to the high intrinsic similarity between target objects and their surroundings, as well as the reliance on noisy pseudo-labels that hinder fine-grained texture learning. While existing refinement strategies aim to alleviate label noise, they often overlook intrinsic perceptual cues, leading to boundary overflow and structural ambiguity. In contrast, learning without pseudo-label guidance yields coarse features with significant detail loss. To address these issues, we propose a unified UCOD framework that enhances both the reliability of pseudo-labels and the fidelity of features. Our approach introduces the Multi-Cue Native Perception module, which extracts intrinsic visual priors by integrating low-level texture cues with mid-level semantics, enabling precise alignment between masks and native object information. Additionally, Pseudo-Label Evolution Fusion intelligently refines labels through teacher-student interaction and utilizes depthwise separable convolution for efficient semantic denoising. It also incorporates Spectral Tensor Attention Fusion to effectively balance semantic and structural information through compact spectral aggregation across multi-layer attention maps. Finally, Local Pseudo-Label Refinement plays a pivotal role in local detail optimization by leveraging attention diversity to restore fine textures and enhance boundary fidelity. Extensive experiments on multiple UCOD datasets demonstrate that our method achieves state-of-the-art performance, characterized by superior detail perception, robust boundary alignment, and strong generalization under complex camouflage scenarios.

💡 Research Summary

The paper tackles two fundamental challenges in Unsupervised Camouflaged Object Detection (UCOD): (1) noisy pseudo‑labels that impede fine‑grained texture learning, and (2) the loss of detail when training without any label guidance. Existing refinement pipelines either ignore intrinsic perceptual cues, which causes boundary overflow, or rely solely on feature learning, which yields blurred edges. To bridge this semantic‑perceptual gap, the authors propose EReCu, a unified teacher‑student framework that tightly couples pseudo‑label evolution with multi‑cue native perception.

The method is built on three complementary modules:

- Multi‑Cue Native Perception (MNP) extracts intrinsic visual priors by concatenating low‑level texture descriptors (Local Binary Patterns, Difference‑of‑Gaussians) with mid‑level semantic features from a frozen ResNet‑18. Random patch sampling stabilizes cosine similarity estimates across three region types (interior, boundary, exterior). The resulting multi‑cue quality metric S_mc (a sum of interior‑exterior separation, interior‑boundary contrast, and boundary‑exterior similarity) quantifies foreground‑background separability; its complement (1‑S_mc) serves as a loss L_MNP that continuously aligns pseudo‑labels with native image cues.

- Pseudo‑Label Evolution Fusion (PEF) consists of Evolutionary Pseudo‑Label Learning (EPL) and Spectral Tensor Attention Fusion (STAF). EPL enables shallow student features to interact with deep teacher features via Depthwise Separable Convolution (DSC), which efficiently refines spatial and channel information while preserving texture. Both teacher and student produce coarse masks (M_p^t, M_p^s) and a DSC‑derived mask (M_dsc^s); these are iteratively refined under the guidance of L_MNP, forming a self‑evolving supervision signal. STAF aggregates multi‑layer attention maps into a tensor, then applies a compact spectral (FFT‑based) aggregation to fuse semantic structure with fine‑grained details, yielding a unified attention representation that is both discriminative and detail‑preserving.

- Local Pseudo‑Label Refinement (LPR) leverages the high‑confidence regions identified by STAF to generate Target‑Aware Local Pseudo‑Labels. By focusing on diverse, high‑confidence attention patches, LPR restores fine textures and sharp boundaries that global predictions may miss. The locally refined masks are merged with the global pseudo‑labels, completing a feedback loop where native cues guide both global evolution and local correction.
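The multi‑cue quality metric described for MNP can be illustrated with a minimal sketch. The summary does not give the exact formula, so everything below is an assumption made for illustration: patch features are mean‑pooled per region, each term is built from cosine similarity, and the three terms are averaged with equal weights so that S_mc stays in [0, 1] for non‑negative features. The function names (`cosine`, `mean_vector`, `multi_cue_score`, `mnp_loss`) are hypothetical and not taken from the paper.

```python
import math

def cosine(u, v):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)

def mean_vector(vectors):
    """Element-wise mean of a list of feature vectors (one vector per sampled patch)."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]

def multi_cue_score(interior, boundary, exterior):
    """Assumed form of S_mc: equal-weight average of three cosine-based terms."""
    f_in = mean_vector(interior)
    f_bd = mean_vector(boundary)
    f_ex = mean_vector(exterior)
    separation = 1.0 - cosine(f_in, f_ex)   # interior should differ from exterior
    contrast = 1.0 - cosine(f_in, f_bd)     # interior should differ from boundary
    similarity = cosine(f_bd, f_ex)         # boundary-exterior similarity term
    return (separation + contrast + similarity) / 3.0

def mnp_loss(interior, boundary, exterior):
    """L_MNP as the complement of S_mc, as stated in the summary."""
    return 1.0 - multi_cue_score(interior, boundary, exterior)
```

Under these assumptions, a mask whose interior features are orthogonal to both boundary and exterior features scores S_mc = 1 (zero loss), while a mask whose three regions look identical scores 1/3.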

Efficiency is a design priority: DSC dramatically reduces convolutional cost, and the spectral tensor fusion operates on compact frequency representations, enabling near‑real‑time inference. The backbone teacher network is a DINO‑pretrained Vision Transformer, providing strong self‑supervised visual representations without extra annotation.
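To make the cost argument for DSC concrete, the weight counts of a standard convolution and a depthwise separable one can be compared using the textbook formulas. The layer sizes in the usage note (3×3 kernel, 256 input and output channels) are illustrative assumptions, not values reported for EReCu, and biases are ignored.

```python
def standard_conv_params(k, c_in, c_out):
    """Weights in a standard k x k convolution: every output channel sees every input channel."""
    return k * k * c_in * c_out

def depthwise_separable_params(k, c_in, c_out):
    """Weights in a depthwise k x k convolution followed by a 1 x 1 pointwise convolution."""
    depthwise = k * k * c_in      # one k x k filter per input channel
    pointwise = c_in * c_out      # 1 x 1 convolution that mixes channels
    return depthwise + pointwise

def cost_ratio(k, c_in, c_out):
    """Depthwise separable cost divided by standard cost (equals 1/c_out + 1/k**2)."""
    return depthwise_separable_params(k, c_in, c_out) / standard_conv_params(k, c_in, c_out)
```

For `k=3, c_in=256, c_out=256` the standard layer has 589,824 weights and the separable one has 67,840, a ratio of about 0.115 (roughly 8.7 times fewer). The same ratio holds for multiply‑accumulate operations at a fixed output resolution.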

Extensive experiments on standard UCOD benchmarks (COD10K, CHAMELEON, CAMO) and related unsupervised segmentation datasets demonstrate that EReCu outperforms prior state‑of‑the‑art methods such as UCOS‑DA, UCOD‑DPL, Sdal‑sNet, and EASE across multiple metrics (mIoU, F‑measure, E‑measure). Notably, the method achieves 5–8 percentage‑point gains in boundary accuracy and texture recovery, and shows robust generalization to complex camouflage scenarios with highly entangled foreground‑background textures.
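For readers unfamiliar with the reported metrics, the sketch below computes IoU and F‑measure for a single pair of binary masks. It assumes masks are nested lists of 0/1 values and uses β² = 0.3, a common convention in salient and camouflaged object detection; the summary does not state which β the authors use, and the E‑measure is omitted. mIoU would then be the mean of `iou` over all test images.

```python
def confusion_counts(pred, gt):
    """Count true positives, false positives, and false negatives over two binary masks."""
    tp = fp = fn = 0
    for pred_row, gt_row in zip(pred, gt):
        for p, g in zip(pred_row, gt_row):
            if p and g:
                tp += 1
            elif p and not g:
                fp += 1
            elif g and not p:
                fn += 1
    return tp, fp, fn

def iou(pred, gt):
    """Intersection over union; defined as 1.0 when both masks are empty."""
    tp, fp, fn = confusion_counts(pred, gt)
    union = tp + fp + fn
    return tp / union if union else 1.0

def f_measure(pred, gt, beta_sq=0.3):
    """Weighted harmonic mean of precision and recall (beta_sq = 0.3 is an assumed default)."""
    tp, fp, fn = confusion_counts(pred, gt)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = beta_sq * precision + recall
    return (1.0 + beta_sq) * precision * recall / denom if denom else 0.0
```

As a quick check, a prediction `[[1, 1], [0, 0]]` against ground truth `[[1, 0], [1, 0]]` has one true positive, one false positive, and one false negative, giving an IoU of 1/3 and an F‑measure of 0.5.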

In summary, the contributions are: (i) a unified UCOD framework that fuses pseudo‑label evolution with native perceptual cues via a self‑evolving teacher‑student loop; (ii) the novel MNP metric and loss that quantify and enforce foreground‑background separability; (iii) an efficient evolution‑fusion pipeline (EPL + STAF) that denoises pseudo‑labels while preserving fine details; and (iv) a local refinement stage (LPR) that restores high‑frequency structures. The approach not only sets a new performance baseline for unsupervised camouflage detection but also offers a transferable paradigm for other segmentation tasks where labeled data are scarce.
