Evaluating Zero-Shot and One-Shot Adaptation of Small Language Models in Leader-Follower Interaction

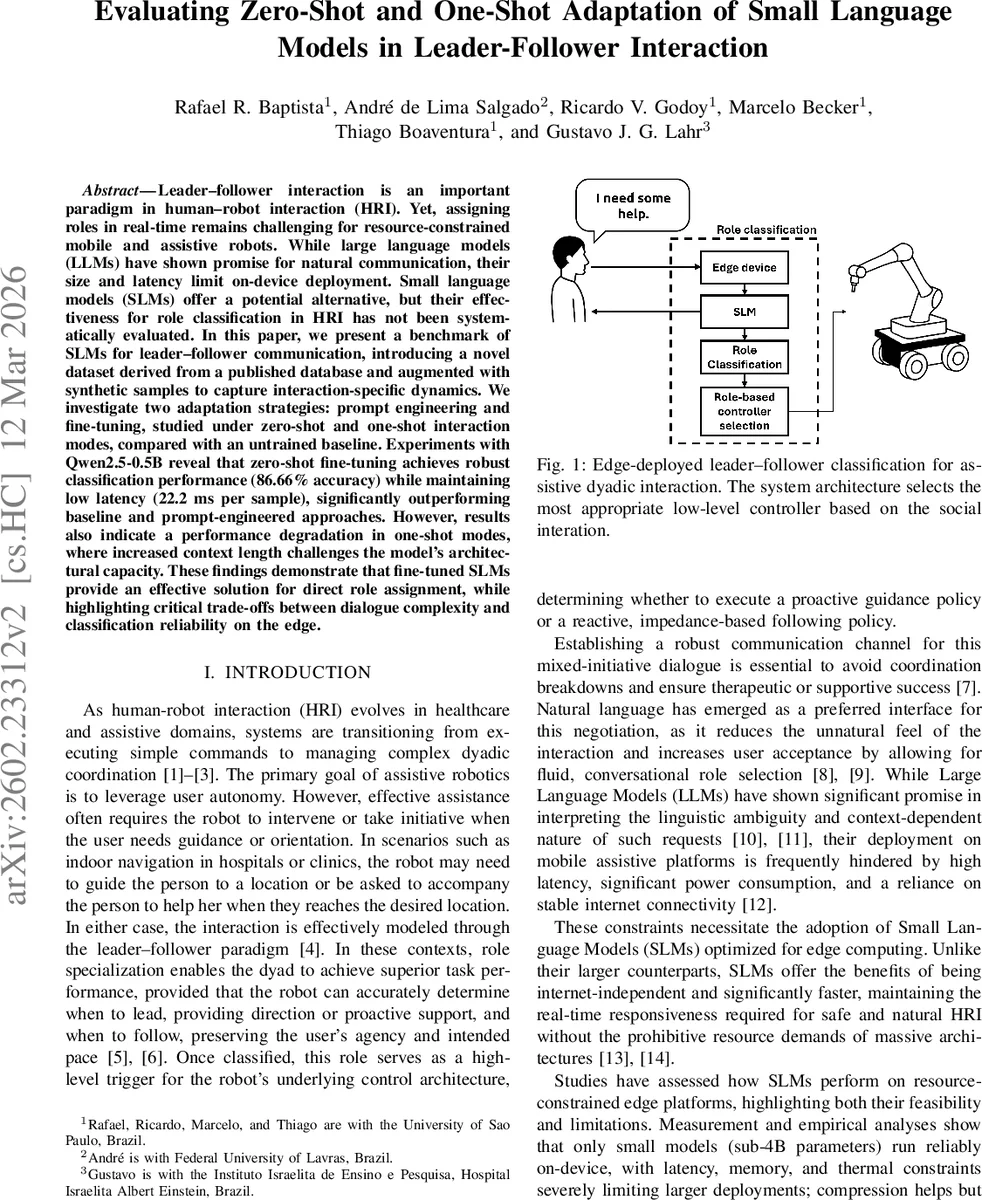

Leader-follower interaction is an important paradigm in human-robot interaction (HRI). Yet, assigning roles in real time remains challenging for resource-constrained mobile and assistive robots. While large language models (LLMs) have shown promise for natural communication, their size and latency limit on-device deployment. Small language models (SLMs) offer a potential alternative, but their effectiveness for role classification in HRI has not been systematically evaluated. In this paper, we present a benchmark of SLMs for leader-follower communication, introducing a novel dataset derived from a published database and augmented with synthetic samples to capture interaction-specific dynamics. We investigate two adaptation strategies: prompt engineering and fine-tuning, studied under zero-shot and one-shot interaction modes, compared with an untrained baseline. Experiments with Qwen2.5-0.5B reveal that zero-shot fine-tuning achieves robust classification performance (86.66% accuracy) while maintaining low latency (22.2 ms per sample), significantly outperforming baseline and prompt-engineered approaches. However, results also indicate a performance degradation in one-shot modes, where increased context length challenges the model’s architectural capacity. These findings demonstrate that fine-tuned SLMs provide an effective solution for direct role assignment, while highlighting critical trade-offs between dialogue complexity and classification reliability on the edge.

💡 Research Summary

The paper addresses the challenge of real‑time leader‑follower role assignment in human‑robot interaction (HRI) on resource‑constrained platforms. While large language models (LLMs) have demonstrated strong natural‑language understanding, their size, latency, and reliance on cloud connectivity make them unsuitable for on‑device deployment in assistive robots. The authors therefore investigate whether a small language model (SLM), specifically Qwen2.5‑0.5B (≈0.5 B parameters), can reliably classify whether a user request implies a “leader” or “follower” role, and how different adaptation strategies affect performance under two interaction paradigms: zero‑shot (single user utterance) and one‑shot (model asks one clarifying question before deciding).

Dataset creation – Because no public leader‑follower HRI corpus exists, the authors built one from DailyDialog. They manually selected 415 questions that naturally map to leader or follower intent, labeling them accordingly. To enlarge the data, three state‑of‑the‑art LLMs (DeepSeek, Gemini, GPT‑4) generated six paraphrases per question, yielding 5,400 synthetic samples (1,800 per generator). Semantic fidelity was measured with Sentence‑BERT embeddings; GPT‑4‑augmented data achieved the highest cosine similarity (≈0.84), indicating good preservation of meaning. Two dataset variants were produced: (1) a zero‑shot set containing only the user utterance and its label, and (2) a one‑shot set that adds a synthetic clarifying question and an intentionally ambiguous user response, emulating a “scarecrow” validation pipeline that avoids early human‑subject exposure.

Model and adaptation strategies – Qwen2.5‑0.5B was chosen due to its proven parameter efficiency and low inference latency on edge hardware (e.g., NVIDIA Jetson). Two adaptation approaches were compared:

-

Prompt engineering – Hand‑crafted system prompts. For zero‑shot, a single prompt encodes the robot’s persona and task instructions. For one‑shot, two prompts are used: one to elicit a clarifying question, another to combine the original utterance and the user’s answer for final classification. This decomposition aims to mitigate the limited capacity of small models.

-

Fine‑tuning – The base model was fine‑tuned on the synthetic dataset using the Autotrain framework (10 epochs, batch size 16, FP16 mixed precision, weight decay 0.01). In zero‑shot, a single fine‑tuned model directly predicts the role. In one‑shot, two separate fine‑tuned models were trained: one specialized in generating the clarifying question, the other in final role classification using both inputs.

Evaluation protocol – Monte‑Carlo cross‑validation with 30 independent runs was employed to obtain robust statistics. Metrics reported include accuracy, precision, recall, F1‑score, tokens per second, and latency (ms). The test set comprised 100 human‑sourced questions (50 leader, 50 follower) held out from training.

Results –

-

Zero‑shot: The fine‑tuned model achieved 86.66 % ± 6.77 accuracy, 84.81 % precision, 90.05 % recall, and an F1 of 86.72 %, with an average latency of 22.2 ms ± 4.2 and throughput of 432 tokens/s. Prompt‑engineered zero‑shot performed poorly (≈53 % accuracy) and was slower (≈112 ms). The untrained baseline was near chance (55 % accuracy).

-

One‑shot: Fine‑tuned accuracy dropped to 51.65 % ± 13.40, with a similar latency (22.2 ms) but a dramatically higher token count (≈1,851 tokens per interaction) due to the added clarifying exchange. Prompt‑engineered one‑shot also lagged (≈45 % accuracy) and exhibited high variance in latency. The performance degradation is attributed to the model’s limited context window; the longer combined input exceeds the 0.5 B model’s capacity to maintain attention over all tokens, leading to information loss.

Key insights –

- Fine‑tuning small models can match or exceed large‑model performance for direct, single‑turn role classification, delivering sub‑30 ms latency suitable for real‑time robot control.

- Prompt engineering alone is insufficient for this task; the model’s pre‑training distribution does not align well with the specialized leader‑follower semantics.

- One‑shot interaction introduces a context‑length bottleneck. Even though the model can generate a clarifying question, the subsequent combined input overwhelms its attention mechanism, causing a steep accuracy drop despite unchanged inference speed.

- Synthetic data quality matters: higher semantic similarity (as seen with GPT‑4) correlates with better downstream performance, suggesting that careful LLM‑based augmentation can compensate for limited real‑world data.

- Trade‑offs emerge between dialogue richness (multiple turns) and edge feasibility; future work should explore context compression, lightweight attention variants, or hierarchical models to retain multi‑turn capabilities without sacrificing accuracy or latency.

Conclusion – The study demonstrates that a well‑fine‑tuned 0.5 B parameter language model can reliably perform leader‑follower role classification on edge devices, achieving high accuracy and low latency in a zero‑shot setting. However, extending to one‑shot or multi‑turn interactions currently exceeds the model’s architectural limits, highlighting a critical area for future research in efficient context handling for small on‑device language models in HRI.

Comments & Academic Discussion

Loading comments...

Leave a Comment