OpenVision 3: A Family of Unified Visual Encoder for Both Understanding and Generation

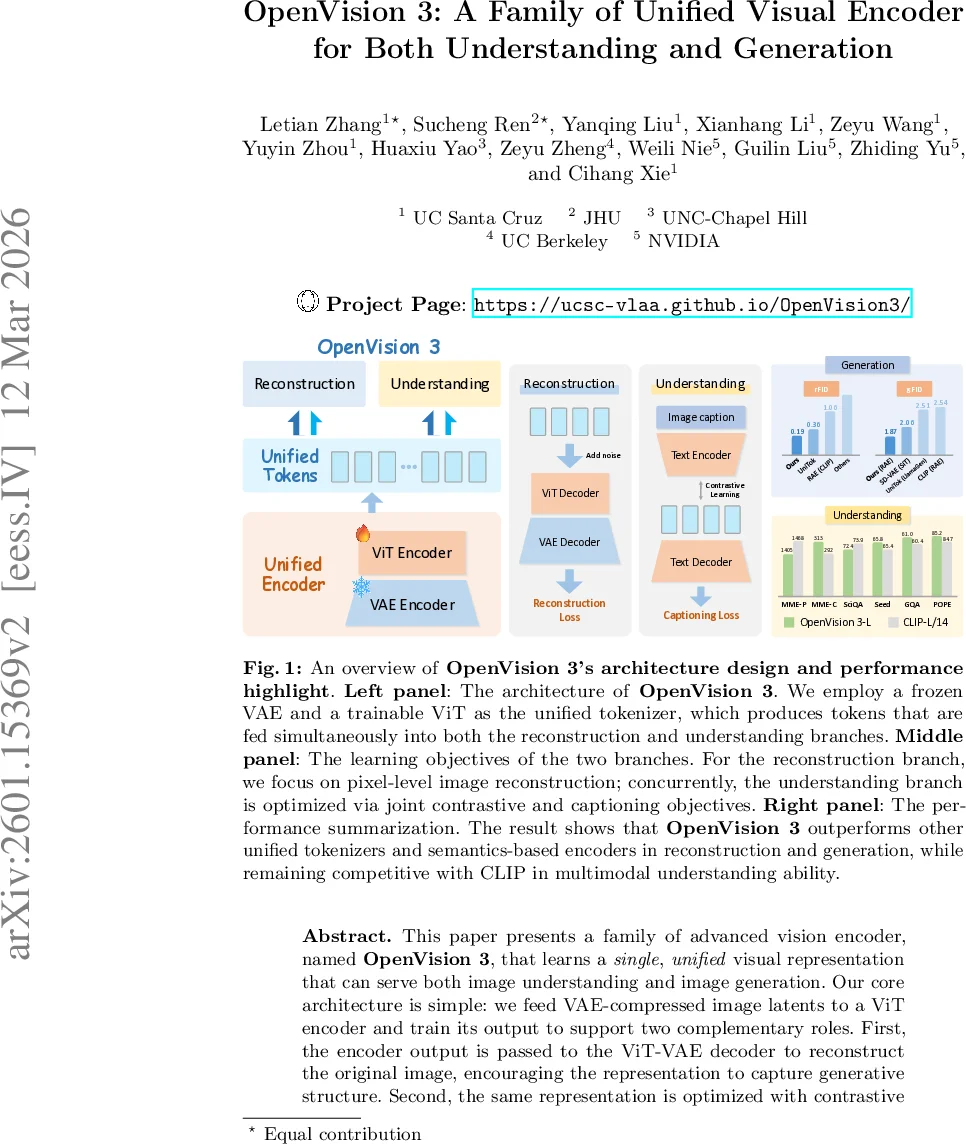

This paper presents a family of advanced vision encoder, named OpenVision 3, that learns a single, unified visual representation that can serve both image understanding and image generation. Our core architecture is simple: we feed VAE-compressed image latents to a ViT encoder and train its output to support two complementary roles. First, the encoder output is passed to the ViT-VAE decoder to reconstruct the original image, encouraging the representation to capture generative structure. Second, the same representation is optimized with contrastive learning and image-captioning objectives, strengthening semantic features. By jointly optimizing reconstruction- and semantics-driven signals in a shared latent space, the encoder learns representations that synergize and generalize well across both regimes. We validate this unified design through extensive downstream evaluations with the encoder frozen. For generation, we test it under the RAE framework: ours substantially surpasses the standard CLIP-based encoder (e.g., gFID: 1.87 vs. 2.54 on ImageNet). For multimodal understanding, we plug the encoder into the LLaVA-1.5 and LLaVA-NeXT framework: it performs comparably with a standard CLIP vision encoder (e.g., 63.3 vs. 61.2 on SeedBench, and 59.2 vs. 58.1 on GQA). We provide empirical evidence that generation and understanding are mutually beneficial in our architecture, while further underscoring the critical role of the VAE latent space. We hope this work can spur future research on unified modeling.

💡 Research Summary

OpenVision 3 introduces a unified visual encoder that simultaneously serves image understanding and image generation tasks. The core idea is to build a continuous visual tokenizer by stacking a Vision Transformer (ViT) on top of a frozen, high‑quality VAE (the FLUX‑1 VAE). An input image is first encoded by the VAE into latent vectors z_vae, which are then processed by a ViT with a 2×2 patch size, yielding unified tokens z_u at a 16× spatial compression. This design preserves fine‑grained spatial detail while avoiding the quantization errors inherent in discrete tokenizers.

The unified tokens are fed into two separate branches that share the same representation. The reconstruction branch adds Gaussian noise to z_u, decodes it back to VAE latents with a ViT decoder (1×1 patches) and a linear layer, and finally reconstructs the image using the VAE decoder. Its loss L_rec combines pixel‑wise L1, latent‑space L1, and a perceptual LPIPS term, encouraging the tokens to retain low‑level visual fidelity.

The understanding branch follows a contrastive‑plus‑captioning paradigm. A text encoder extracts caption embeddings z_txt, which are aligned with z_u via a contrastive loss L_contrastive. In parallel, a text decoder predicts synthetic captions autoregressively from z_u, yielding a captioning loss L_caption. The overall objective is L_overall = ω_rec·L_rec + ω_und·L_und, with the understanding weight set twice as high as the reconstruction weight to prioritize semantic alignment while still preserving generative quality.

Training proceeds in two stages. First, a low‑resolution (128×128) pre‑training phase runs for 4000 epochs on a batch of 8192 samples with a cosine‑decayed learning rate of 8e‑6; LPIPS is disabled to avoid resolution‑related conflicts. Second, a high‑resolution (224/256) fine‑tuning phase runs for 400 epochs on a batch of 4096 with a learning rate of 4e‑7. The VAE remains frozen throughout, while all other components (ViT encoder/decoder, text encoder/decoder, linear layers) are trained from scratch.

Extensive evaluation covers three dimensions: reconstruction, generation, and multimodal understanding. In reconstruction, OpenVision 3 achieves PSNR ≈ 30.9 dB, SSIM ≈ 0.90, LPIPS ≈ 0.05, and rFID ≈ 0.187 on ImageNet and COCO, substantially outperforming prior unified tokenizers such as UniToken, OmniTokenizer, and Vila‑U. For generation, the model is plugged into the RAE framework with a DiT generator; it yields a generative FID (gFID) of 1.87 on ImageNet, a 30 % improvement over the CLIP‑based encoder (gFID 2.54). In multimodal understanding, integrating the encoder into LLaVA‑1.5 and LLaVA‑NeXT yields SeedBench 63.3 and GQA 59.2, comparable to CLIP’s 61.2 and 58.1 respectively, while keeping the encoder frozen to demonstrate true transferability.

A notable finding is the mutual promotion between the two branches: training with only contrastive and captioning losses already improves reconstruction quality, and conversely, reconstruction‑only training enhances semantic alignment. This synergy suggests that semantic and generative signals can be harmonized in a single latent space without the need for separate encoders. Moreover, leveraging the VAE latent space directly enables high‑resolution generation with faithful pixel‑level details, sidestepping the degradation typical of discrete tokenizers.

In summary, OpenVision 3 presents a simple yet powerful paradigm for unified visual tokenization: a continuous ViT‑based tokenizer built on top of a frozen VAE, jointly optimized for reconstruction and semantic objectives. It delivers state‑of‑the‑art performance across reconstruction, generation, and understanding while dramatically simplifying architecture compared to prior dual‑tokenizer systems. The work establishes a strong baseline for future unified multimodal models and demonstrates that continuous tokenization can effectively bridge the gap between low‑level generative fidelity and high‑level semantic understanding.

Comments & Academic Discussion

Loading comments...

Leave a Comment