SimulU: Training-free Policy for Long-form Simultaneous Speech-to-Speech Translation

Simultaneous speech-to-speech translation (SimulS2S) is essential for real-time multilingual communication, with increasing integration into meeting and streaming platforms. Despite this, SimulS2S remains underexplored in research, where current solu…

Authors: Amirbek Djanibekov, Luisa Bentivogli, Matteo Negri, Sara Papi

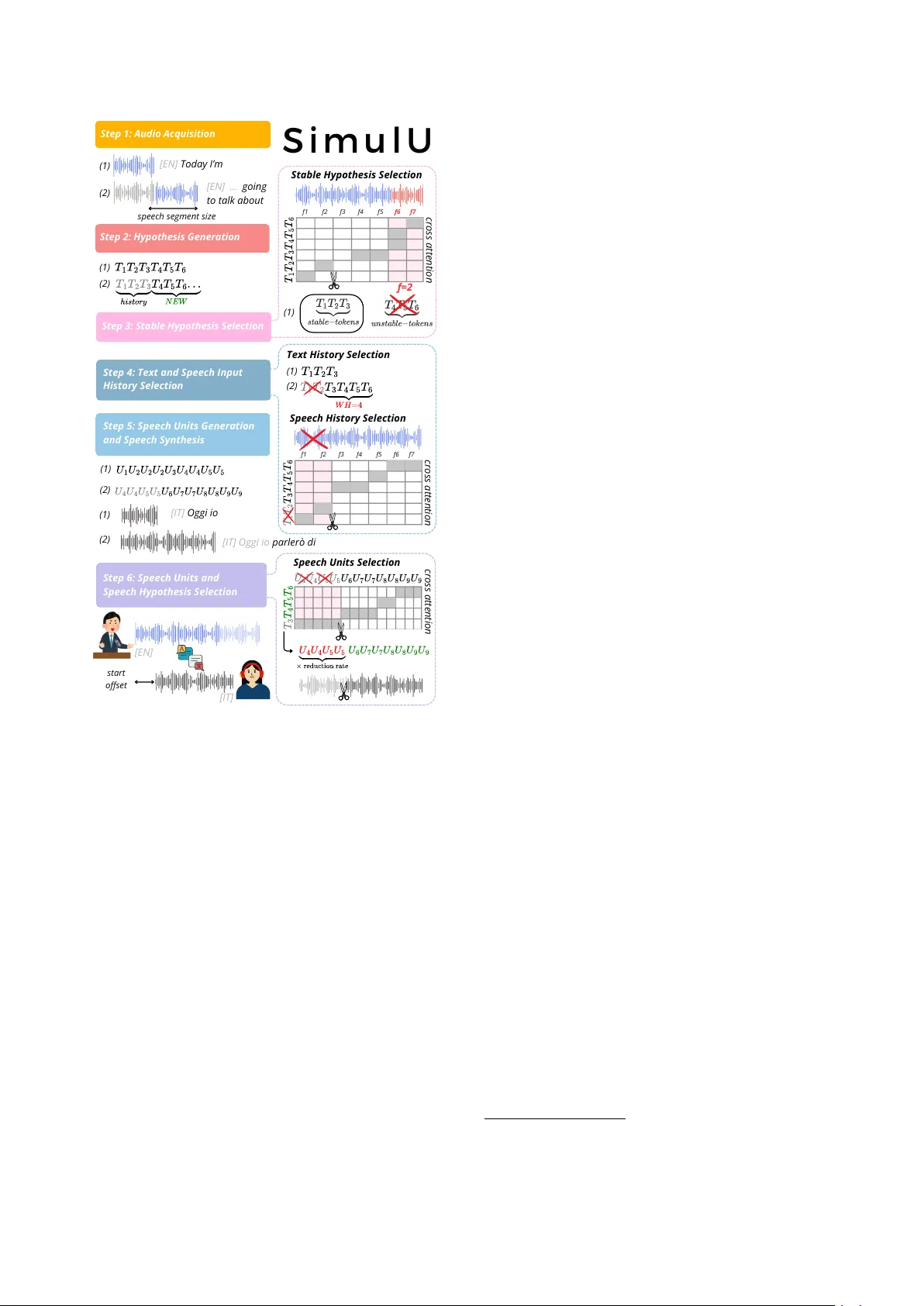

Amirbek Djanibekov (1), Luisa Bentivogli (2), Matteo Negri (2), Sara Papi (2)

(1) MBZUAI, United Arab Emirates; (2) FBK, Italy

amirbek.djanibekov@mbzuai.ac.ae, spapi@fbk.eu

Abstract

Simultaneous speech-to-speech translation (SimulS2S) is essential for real-time multilingual communication, with increasing integration into meeting and streaming platforms. Despite this, SimulS2S remains underexplored in research, where current solutions often rely on resource-intensive training procedures and operate on short-form, pre-segmented utterances, failing to generalize to continuous speech. To bridge this gap, we propose SimulU, the first training-free policy for long-form SimulS2S. SimulU adopts history management and speech output selection strategies that exploit cross-attention in pre-trained end-to-end models to regulate both input history and output generation. Evaluations on MuST-C across 8 languages show that SimulU achieves a better or comparable quality-latency trade-off against strong cascaded models. By eliminating the need for ad-hoc training, SimulU offers a promising path to end-to-end SimulS2S in realistic, long-form scenarios.

Index Terms: spoken translation, simultaneous processing, simultaneous speech-to-text, long-form

1. Introduction

Speech-to-speech (S2S) technology represents a high-potential direction for enhancing natural and seamless human-computer interaction [1, 2], enabling end-to-end spoken communication across languages and modalities [3]. Within this context, simultaneous translation (a.k.a. simultaneous interpretation) extends the S2S paradigm by requiring a system to generate translated speech incrementally as the input stream is received.
This setup mandates a real-time decision-making policy to balance the trade-off between reading new input and writing output based on partial information, often under acoustic and linguistic uncertainty. The optimal design of such a simultaneous policy is further complicated for long-form inputs, where simultaneous S2S translation (SimulS2ST) operates on continuous, unsegmented speech streams rather than pre-segmented utterances.

The SimulS2ST policy is usually learned through complex training pipelines. For instance, [4] jointly optimizes four distinct objectives, while [5] adopts a two-stage procedure incorporating a large language model [6]. More recent approaches, such as [7] and [8], further introduce reinforcement learning to refine policy learning. Additionally, the training process is complicated by the need for large-scale speech data [9]. The limited availability of word-level aligned corpora necessitates the use of synthetic datasets, where alignments are automatically generated through hand-crafted heuristics [9, 8] (e.g., natural pauses).

Standard approaches typically use cascaded pipelines that include speech-to-text translation (S2TT) and text-to-speech (TTS) components [10, 11]. However, cascaded systems have several problems. First, they are subject to compounding errors due to combining separately trained models [12]. Second, as input speech goes through the text bottleneck, the non-linguistic information it carries (e.g., speaker identity, prosody) is lost and cannot be transferred to the output speech [13]. Finally, cascaded pipelines are inherently disadvantaged in latency-critical settings, as each component must complete its processing before the next can begin, making such systems less suited for simultaneous translation.
In addition, most existing works evaluate SimulS2ST in a short-form setting [14, 5], relying on test sets inherited from offline scenarios where input audio is pre-segmented (often manually) into fixed-length chunks (typically up to 30 seconds). This setup constrains systems to operate within predefined boundaries, limiting their ability to handle long-form, continuous speech and diverging from more realistic deployment conditions [15].

To fill these gaps, we propose SimulU, the first training-free simultaneous policy for long-form end-to-end speech-to-speech translation. Building on the recent success of exploiting attention scores for guiding simultaneous S2TT inference [16], we propose leveraging cross-attention not only to decide what and when to emit a partial spoken translation, but also to determine which contextual history (from both the received speech input and the generated output) to retain, thereby enabling long-form speech generation. These decisions are made solely based on the internal knowledge of pre-existing models that natively incorporate attention mechanisms, without requiring retraining or adaptation. SimulU eliminates the need for costly ad-hoc training procedures while addressing the read/write decision problem directly in an end-to-end setting. We showcase our proposed policy by applying it to a strong offline pretrained model, SeamlessM4T [17], and comparing it against strong baselines built on state-of-the-art ASR, S2TT, and TTS components across all 8 languages of MuST-C v1.0 [18]. Our method achieves the best quality-latency trade-off in most settings, providing the first promising step in the training-free policy research for long-form speech-to-speech translation.

2. Methodology

SimulU is a simultaneous policy that implements history management and speech output selection for direct SimulS2ST based on internal knowledge of the system, in particular, the cross-attention scores.
The policy is applied to the SeamlessM4T S2ST model, which employs a speech-to-text module, a text-to-unit module, and a vocoder (more details on the SimulU backbone can be found in Section 3.3) that were jointly trained for offline end-to-end S2ST. Without requiring additional training or adaptation, SimulU repurposes attention-based offline models (here SeamlessM4T) for simultaneous generation, a process frequently referred to as onlinization [19, 20]. Its workflow follows a six-step policy, illustrated in Figure 1 and detailed below:

1. Audio Acquisition: the incoming speech input, divided into chunks of size speech segment size, is incrementally added to the speech history.
2. Hypothesis Generation: the content of the speech history is provided to the speech-to-text module to generate intermediate textual representations.
3. Stable Hypothesis Selection: the part of the intermediate textual representation to be given as context to Step 5 is selected based on speech-text cross-attention scores following [21], which stops the hypothesis emission as soon as a token is aligned (i.e., has its maximum attention score) with one of the unstable audio frames (i.e., the most recently received speech frames); the number of unstable audio frames, hereinafter the cut-off frame, is set by the hyperparameter f (equal to 2 in Figure 1) and controls the latency of the system.
4. Text and Speech Input History Selection: to allow for long-form speech processing, both the text history and the speech history (at input only) have to be selected to maintain a context that is manageable by the model. To this aim, we preserve a fixed number of words, hereinafter WH (equal to 4 in Figure 1), in the text history, following [16], and exploit speech-text cross-attention scores again to select the audio frames corresponding to the discarded text history (equal to 2 in Figure 1), which are removed from the speech history to always keep the text and speech content aligned.
5. Speech Units Generation and Speech Synthesis: the intermediate textual representation is provided to the text-to-unit module and, consequently, to the vocoder to generate the output speech; the whole text history is provided in this phase, as we found it dramatically improves the synthesized speech.
6. Speech Units and Speech Hypothesis Selection: the text-unit cross-attention is leveraged in the final step, which is in charge of selecting the part of the output speech that corresponds to the newly generated hypothesis; by taking the maximum attention scores (similar to Steps 3 and 4), the intermediate textual representation is aligned with the corresponding units, and the units assigned to the text history rather than to the new hypothesis (T3 in Figure 2) are discarded; the number of discarded units is then multiplied by the reduction rate of SeamlessM4T (i.e., 320) to retrieve the part of the synthesized waveform to be cut from the output speech. The resulting partial waveform is emitted.

Figure 1: SimulU Overview. The incoming speech input, here in English, is represented by the blue waveform, while the speech output, here in Italian, is represented by the black waveform.

3. Experimental Settings

3.1. Data

To be comparable with previous S2TT works [16, 22], we evaluate on all language directions of MuST-C v1.0 [18]: English (en) to Dutch (nl), French (fr), German (de), Italian (it), Portuguese (pt), Russian (ru), Romanian (ro), and Spanish (es). We simulate streaming conditions by providing the entire TED Talks from the MuST-C dev and tst-COMMON sets as input. The average durations for the development and test sets are 948 seconds (15.7 minutes) and 650 seconds (10.8 minutes), respectively.

3.2. Metrics

We follow the settings from the IWSLT 2023 evaluation campaign on simultaneous speech-to-speech translation [23], which measures both quality and latency using SimulEval [24]. For quality, the BLEU score [25] is computed on the transcripts obtained with the Canary [26] model on the translated output speech. We use Canary over Whisper [27] because we empirically found that Canary handles long speech better than Whisper. Additionally, we applied the Whisper text normalizer before computing the BLEU score. For latency, we report StartOffset following IWSLT 2023 settings (negative results are excluded, as they are due to misalignment).
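The attention-based selections in Steps 3, 4, and 6 of the policy (Section 2) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: attention is given as plain nested lists (rows are output positions, columns are input positions), the text history is counted in tokens rather than words, the discarded frames and units are assumed to form a leading block, and all function names are hypothetical.

```python
from typing import List, Sequence

# Rows: output positions (tokens or units); columns: input positions.
Matrix = Sequence[Sequence[float]]


def argmax_alignment(attn: Matrix) -> List[int]:
    """Align each row to the column holding its maximum attention score."""
    return [max(range(len(row)), key=row.__getitem__) for row in attn]


def stable_prefix_length(token_frame_attn: Matrix, cutoff_frames: int) -> int:
    """Step 3: number of leading tokens that can be emitted.

    Emission stops at the first token aligned with one of the last
    `cutoff_frames` (unstable) audio frames.
    """
    if not token_frame_attn:
        return 0
    n_frames = len(token_frame_attn[0])
    first_unstable = n_frames - cutoff_frames
    for i, frame in enumerate(argmax_alignment(token_frame_attn)):
        if frame >= first_unstable:
            return i
    return len(token_frame_attn)


def frames_to_drop(token_frame_attn: Matrix, n_discarded_tokens: int) -> int:
    """Step 4: leading audio frames aligned with the discarded text history.

    Assumption: the cut falls right after the last frame aligned with any
    discarded token.
    """
    aligned = argmax_alignment(token_frame_attn)[:n_discarded_tokens]
    return max(aligned) + 1 if aligned else 0


def samples_to_cut(unit_token_attn: Matrix, n_history_tokens: int,
                   reduction_rate: int = 320) -> int:
    """Step 6: waveform samples that belong to the text history.

    Units aligned with history tokens are discarded; their count times the
    reduction rate (320 in the paper) gives the samples to cut.
    """
    aligned = argmax_alignment(unit_token_attn)
    n_discarded = sum(1 for token in aligned if token < n_history_tokens)
    return n_discarded * reduction_rate


# Toy example: 4 tokens over 5 audio frames, 6 units over 3 tokens.
token_frame_attn = [
    [0.7, 0.2, 0.1, 0.0, 0.0],
    [0.1, 0.6, 0.2, 0.1, 0.0],
    [0.0, 0.1, 0.2, 0.6, 0.1],
    [0.0, 0.0, 0.1, 0.2, 0.7],
]
unit_token_attn = [
    [0.9, 0.1, 0.0], [0.8, 0.2, 0.0],
    [0.3, 0.6, 0.1], [0.1, 0.7, 0.2],
    [0.0, 0.3, 0.7], [0.0, 0.1, 0.9],
]
print(stable_prefix_length(token_frame_attn, cutoff_frames=2))  # 2
print(frames_to_drop(token_frame_attn, n_discarded_tokens=2))   # 2
print(samples_to_cut(unit_token_attn, n_history_tokens=2))      # 1280
```

In the toy example, the third token is aligned with one of the last two frames, so only the first two tokens are treated as stable; four of the six units are aligned with the two history tokens, so 4 × 320 = 1280 samples would be cut from the synthesized waveform.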
StartOffset is measured in seconds and represents the minimum amount of source input that must be observed before the system emits the first target output. A graphical representation is provided in Figure 1.

3.3. Models

| f | ASR-BLEU (WH=5) | Start Offset (WH=5) | End Offset (WH=5) | ASR-BLEU (WH=10) | Start Offset (WH=10) | End Offset (WH=10) | ASR-BLEU (WH=15) | Start Offset (WH=15) | End Offset (WH=15) | ASR-BLEU (WH=20) | Start Offset (WH=20) | End Offset (WH=20) | ASR-BLEU (WH=25) | Start Offset (WH=25) | End Offset (WH=25) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 2 | 17.97 | 0.90 | 77.24 | 18.56 | 0.90 | 128.68 | 19.38 | 0.90 | 161.47 | 16.60 | 0.90 | 187.27 | 13.63 | 0.90 | 183.51 |
| 4 | 16.93 | 1.36 | 51.18 | 23.04 | 1.24 | 147.60 | 18.65 | 1.36 | 151.84 | 18.15 | 1.36 | 240.88 | 12.73 | 1.36 | 202.10 |
| 6 | 16.54 | 1.72 | 55.95 | 18.85 | 1.72 | 131.39 | 20.32 | 1.72 | 179.22 | 17.87 | 1.72 | 256.14 | 11.37 | 1.72 | 184.63 |
| 8 | 18.38 | 1.77 | 46.78 | 17.64 | 1.77 | 121.12 | 20.12 | 1.77 | 189.09 | 18.25 | 1.77 | 263.51 | 11.37 | 1.77 | 173.28 |

Table 1: ASR-BLEU scores for different Word History (WH) configurations (5-25) across combinations of cut-off frame f (2, 4, 6, 8).

SimulU Backbone. We employ the SeamlessM4T model (SeamlessM4T-medium-v1, available at https://huggingface.co/facebook/hf-seamless-m4t-medium), a series of transformer encoder-decoder architectures [17] for translation. The S2T model uses 2 frame stacking, 256k word-piece tokenization, and supports around 100 languages, resulting in ~1B total parameters. The model takes a mel-spectrogram with a hop size of 160 as input and produces tokenized text as output. The speech encoder follows the w2v-BERT [28] framework and consists of 12 Conformer layers, totaling approximately 300 million parameters. For the text decoder, SeamlessM4T adopts the NLLB [29] decoder trained on around 100 languages, rather than the 200 languages used in the original NLLB study. Additionally, the NLLB text encoder is employed for knowledge distillation, aligning the speech encoder's representations with the text embedding space using the SONAR [30] alignment score.
The TTS employs a transformer encoder-decoder (170M parameters) that produces speech units at 50Hz. It outputs discrete speech units derived from XLS-R-1B 35th layer representations [31] using k-means clustering, and uses a specially designed interleaving between speech encoded representation and decoded translation text. Finally, the generated speech units are sent to a unit-vocoder, which is a multilingual HiFi-GAN [32] unit that synthesizes speech from those units.

To determine the optimal parameter setting for the word history of SimulU, we ran preliminary experiments on the MuST-C dev set in the en-de direction. The results are shown in Table 1. Overall, the SimulU configuration using a word history of 10 yields results that are better than or on par with the others, while keeping the history relatively short, and is therefore used throughout the rest of the paper.

Baselines. We developed four strong cascade approaches based on state-of-the-art training-free S2TT policies, StreamAtt [16] and LocalAgreement (LA [33]), which are then coupled with existing TTS models for the speech generation part:

• StreamAtt+Seam.TTS is based on StreamAtt [16], which enables long-form S2TT by leveraging cross-attention between speech and generated text to guide both simultaneous inference and history management; the partial generated text is then given to the SeamlessM4T TTS model (Seam.TTS), which is based on the unit-generation architecture of UnitY [34] for the speech generation. This baseline directly compares the cascaded approach to the end-to-end SimulU approach within the same model, highlighting the performance difference under the same data and architecture.
• StreamAtt+XTTS-v2 is a cascade approach made of the state-of-the-art S2TT policy, StreamAtt, and the strongest multilingual TTS system that supports 17 languages, except Romanian, achieving the best result on the TTS Arena (https://huggingface.co/spaces/TTS-AGI/TTS-Arena; best multilingual TTS results after monolingual-English KokoroTTS [35] and Fish Speech [36]). This system is considered an upper bound of the TTS performance, as shown in Table 2, where XTTS-v2 achieves WER and CER scores from 4 to 10 times lower (hence, better) than Seam.TTS, while being comparable or slightly worse regarding naturalness (UTMOS [37] from the VoiceMOS challenge).

• LA+Seam.TTS is a baseline system derived from the IWSLT 2025 simultaneous Speech-to-Text evaluation campaign [38]. It uses Local Agreement (LA) for the STT part, which compares consecutive chunk outputs and emits only the longest common prefix between the current chunk and the previous chunk's output, ensuring stable hypothesis emission. To allow for long-form processing, Silero VAD [39] is used to segment the continuous stream of audio into shorter segments of about 15-30s, with a maximum unvoiced interval of 20 seconds and a voice threshold of 0.1, suitable for standard S2TT models, and the memory is reset between segments. In this version, Seam.TTS is used as the TTS component.

• LA+XTTS-v2, similar to LA+Seam.TTS, uses the LA policy but replaces Seam.TTS with the XTTS-v2 model for the TTS component.

For the StreamAtt-based cascades, the latency is controlled by the cut-off frame (spanning 2, 4, 6, and 8), while for the LA-based cascades, by the speech segment size (spanning 0.5, 1.0, 1.5, and 2.0 seconds). Default decoding parameters are used for all models (e.g., num. beams for Seam.TTS is 1).

| TTS sys. | Metric | de | fr | it | nl | pt | es | ru | ro |
|---|---|---|---|---|---|---|---|---|---|
| Seam.TTS | WER (%) | 41.77 | 35.28 | 39.03 | 40.81 | 43.29 | 34.59 | 46.89 | 40.15 |
| Seam.TTS | CER (%) | 31.76 | 28.75 | 31.29 | 31.33 | 34.41 | 29.14 | 35.56 | 26.29 |
| Seam.TTS | UTMOS (± std) | 2.82 (0.32) | 2.60 (0.35) | 3.63 (0.34) | 3.50 (0.27) | 3.87 (0.33) | 3.60 (0.33) | 3.32 (0.41) | 3.83 (0.29) |
| XTTS-v2 | WER (%) | 9.789 | 14.02 | 10.25 | 10.19 | 6.24 | 3.93 | 9.78 | n/a |
| XTTS-v2 | CER (%) | 7.70 | 10.60 | 7.85 | 7.00 | 3.17 | 2.38 | 6.49 | n/a |
| XTTS-v2 | UTMOS (± std) | 2.77 (0.40) | 2.53 (0.40) | 2.71 (0.38) | 2.94 (0.34) | 2.83 (0.37) | 2.66 (0.36) | 2.87 (0.33) | n/a |

Table 2: TTS results for the Seam.TTS and XTTS-v2 systems.

4. Results

Figure 2 reports test-set performance of the proposed method, SimulU, alongside the previously defined strong cascade systems (Section 3.3). Detailed results for each language pair suggest that SimulU consistently achieves the highest ASR-BLEU scores across six language directions (de, fr, it, es, pt, and ro), while maintaining competitive performance in the other ones (ru and nl), always with a reasonable latency (between 1 and 2 seconds in most cases) as measured by the start offset (limits of acceptability have been set at ~2 seconds for the ear-voice span depending on different conditions and language pairs [40]). As shown, both SimulU and StreamAtt+XTTS-v2 outperform both LA-based systems by a large margin (at least 4-5 ASR-BLEU points at the same latency), particularly in fr, pt, ro, and nl. The LA-based cascades span a wide range of start offsets (often between 1 and 3 seconds), and increasing the speech segment size (and hence the available context) always yields similar performance (e.g., in fr, it, pt, ro, nl). Replacing the strong XTTS-v2 model (as attested by results in Table 2) with Seam.TTS as the TTS component in the cascades leads to substantial quality degradation, with ASR-BLEU scores oscillating between 5 and 10 with the StreamAtt policy, indicating near-complete failure, and between 10 and 15 with the LA policy. A manual inspection suggests that this degradation largely stems from Seam.TTS's sensitivity to limited context: when conditioned on partial sentences rather than complete ones (as is typical in SimulS2ST settings), its synthesis quality deteriorates markedly, while this is not the case for XTTS-v2.

Figure 2: ASR-BLEU and Start Offset scores across different systems for each language pair of MuST-C v1 tst-COMMON. Panels: (a) en-de, (b) en-fr, (c) en-it, (d) en-es, (e) en-pt, (f) en-ru, (g) en-ro, (h) en-nl; x-axis: Start Offset (s); y-axis: ASR-BLEU; systems: SimulU, StreamAtt+XTTS-v2, StreamAtt+Seam.TTS, LA+Seam.TTS, LA+XTTS-v2.

We further examine the end-offset latency, defined as the time delay between the end of the input speech stream and the generation of the final speech output by the system. Table 3 reports the results for the two best-performing systems, SimulU and StreamAtt+XTTS-v2 (SimulU and StreamAtt+Seam.TTS for Romanian). Considering the average performance across four cut-off frame settings for SimulU and across segment step configurations for StreamAtt+XTTS-v2, we observe that systems with comparable overall performance exhibit similar end-offset latency in de and fr. However, for the other languages, SimulU achieves lower end-offset latency values.
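As a small illustration of the two latency measures discussed in this paper, the sketch below computes a start offset and an end offset from timestamps and aggregates values as a mean and standard deviation. It follows the prose definitions given in Section 3.2 and in the paragraph above, not SimulEval's code; the function names are hypothetical, all times are assumed to be in seconds, and the use of the population standard deviation is an assumption.

```python
from statistics import mean, pstdev
from typing import Sequence, Tuple


def start_offset(emission_times: Sequence[float]) -> float:
    """Amount of source audio (s) consumed when the first output is emitted."""
    return min(emission_times)


def end_offset(source_duration: float, final_output_time: float) -> float:
    """Delay (s) between the end of the input stream and the final output."""
    return final_output_time - source_duration


def summarize(values: Sequence[float]) -> Tuple[float, float]:
    """Mean and (population) standard deviation over several configurations."""
    return mean(values), pstdev(values)


# Hypothetical timestamps for one talk, for illustration only.
emissions = [1.36, 2.0, 3.5]
print(start_offset(emissions))
print(end_offset(source_duration=650.0, final_output_time=650.25))
print(summarize([1.0, 3.0]))
```

Averaging such per-configuration values, for example over the four cut-off frame settings, gives a single mean and spread per language, which is the kind of aggregate reported for end-offset latency.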
Furthermore, the standard deviation under the SimulU policy is smaller, indicating more stable latency behavior.

All in all, we can conclude that SimulU achieves the best quality overall across the analyzed languages while maintaining the latency between 1 and 2 seconds.

| System | de | fr | it | nl | pt | es | ru | ro |
|---|---|---|---|---|---|---|---|---|
| SimulU | 247 (137) | 224 (132) | 100 (6) | 82 (9) | 146 (6) | 106 (5) | 106 (13) | 34 (2) |
| Top Cascade | 246 (137) | 224 (132) | 262 (122) | 40 (44) | 251 (123) | 217 (124) | 68 (62) | 58 (52) |

Table 3: End Offset in milliseconds, mean (std), for SimulU and the top cascade for each language, averaged across cut-off frame f and speech segment size, respectively.

5. Conclusions

In this work, we propose SimulU, the first training-free long-form simultaneous speech-to-speech translation policy that exploits the internal cross-attention of pre-trained end-to-end models to regulate both input history and output generation dynamically. We evaluated its performance across eight language pairs against strong cascade systems combining top-performing existing solutions for streaming translation and TTS systems. In these settings, SimulU yields strong results even when compared with state-of-the-art cascades, demonstrating the effectiveness of the proposed approach without requiring any additional training, therefore offering a practical and competitive solution for real-world simultaneous speech translation.

6. Acknowledgements

This work has received funding from the European Union's Horizon Europe programme under grant agreement No. 101213369 (DVPS). The research was conducted during an internship at FBK, which was facilitated by MBZUAI Career Services, whose support we gratefully acknowledge. We also thank Dr. Hanan Aldarmaki for supporting the main author through valuable feedback and guidance.

7. References

[1] C. Munteanu, M. Jones, S. Oviatt, S. Brewster, G. Penn, S. Whittaker, N. Rajput, and A. Nanavati, "We need to talk: HCI and the delicate topic of spoken language interaction," in CHI'13 Extended Abstracts on Human Factors in Computing Systems, 2013, pp. 2459–2464.

[2] C. Munteanu and G. Penn, "Speech-based interaction: Myths, challenges, and opportunities," in Proceedings of the 2017 CHI Conference Extended Abstracts on Human Factors in Computing Systems, 2017, pp. 1196–1199.

[3] Y. Jia, R. J. Weiss, F. Biadsy, W. Macherey, M. Johnson, Z. Chen, and Y. Wu, "Direct Speech-to-Speech Translation with a Sequence-to-Sequence Model," in Interspeech 2019, 2019, pp. 1123–1127.

[4] S. Zhang, Q. Fang, S. Guo, Z. Ma, M. Zhang, and Y. Feng, "Streamspeech: Simultaneous speech-to-speech translation with multi-task learning," in Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), 2024, pp. 8964–8986.

[5] K. Deng, W. Chen, X. Chen, and P. Woodland, "SimulS2S-LLM: Unlocking simultaneous inference of speech LLMs for speech-to-speech translation," in Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), W. Che, J. Nabende, E. Shutova, and M. T. Pilehvar, Eds. Vienna, Austria: Association for Computational Linguistics, Jul. 2025, pp. 16718–16734. [Online]. Available: https://aclanthology.org/2025.acl-long.817/

[6] A. Grattafiori, A. Dubey, A. Jauhri, A. Pandey, A. Kadian, A. Al-Dahle, A. Letman, A. Mathur, A. Schelten, A. Vaughan, and A. Yang, "The llama 3 herd of models," 2024. [Online]. Available: https://arxiv.org/abs/2407.21783

[7] S. Cheng, Y. Bao, Z. Huang, Y. Lu, N. Peng, L. Xu, R. Yu, R. Cao, Y. Du, T. Han, Y. Hu, Z. Li, S. Liu, S. Ma, S. Pan, J. Xiao, N. Xu, M. Yang, R. Ye, Y. Yu, J. Zhang, R. Zhang, W. Zhang, W. Zhu, L. Zou, L. Lu, Y. Wang, and Y. Wu, "Seed liveinterpret 2.0: End-to-end simultaneous speech-to-speech translation with your voice," 2025. [Online]. Available: https://arxiv.org/abs/2507.17527

[8] T. Labiausse, R. Fabre, Y. Estève, A. Défossez, and N. Zeghidour, "Simultaneous speech-to-speech translation without aligned data," 2026. [Online]. Available: https://arxiv.org/abs/2602.11072

[9] T. Labiausse, L. Mazaré, E. Grave, A. Défossez, and N. Zeghidour, "High-fidelity simultaneous speech-to-speech translation," in Forty-second International Conference on Machine Learning, 2025. [Online]. Available: https://openreview.net/forum?id=fgjN8B6xVX

[10] A. Lavie, A. Waibel, L. Levin, M. Finke, D. Gates, M. Gavalda, T. Zeppenfeld, and P. Zhan, "JANUS-III: Speech-to-speech translation in multiple languages," in 1997 IEEE International Conference on Acoustics, Speech, and Signal Processing, vol. 1. IEEE, 1997, pp. 99–102.

[11] S. Nakamura, K. Markov, H. Nakaiwa, G.-i. Kikui, H. Kawai, T. Jitsuhiro, J.-S. Zhang, H. Yamamoto, E. Sumita, and S. Yamamoto, "The ATR multilingual speech-to-speech translation system," IEEE Transactions on Audio, Speech, and Language Processing, vol. 14, no. 2, pp. 365–376, 2006.

[12] M. Sperber and M. Paulik, "Speech translation and the end-to-end promise: Taking stock of where we are," in Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, D. Jurafsky, J. Chai, N. Schluter, and J. Tetreault, Eds. Online: Association for Computational Linguistics, Jul. 2020, pp. 7409–7421. [Online]. Available: https://aclanthology.org/2020.acl-main.661/

[13] I. Tsiamas, M. Sperber, A. Finch, and S. Garg, "Speech is more than words: Do speech-to-text translation systems leverage prosody?" in Proceedings of the Ninth Conference on Machine Translation, B. Haddow, T. Kocmi, P. Koehn, and C. Monz, Eds. Miami, Florida, USA: Association for Computational Linguistics, Nov. 2024, pp. 1235–1257. [Online]. Available: https://aclanthology.org/2024.wmt-1.119/

[14] J. Zhao, N. Moritz, E. Lakomkin, R. Xie, Z. Xiu, K. Zmolikova, Z. Ahmed, Y. Gaur, D. Le, and C. Fuegen, "Textless streaming speech-to-speech translation using semantic speech tokens," in ICASSP 2025 - 2025 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2025, pp. 1–5.

[15] S. Papi, P. Polák, D. Macháček, and O. Bojar, "How "real" is your real-time simultaneous speech-to-text translation system?" Transactions of the Association for Computational Linguistics, vol. 13, pp. 281–313, 2025. [Online]. Available: https://aclanthology.org/2025.tacl-1.14/

[16] S. Papi, M. Gaido, M. Negri, and L. Bentivogli, "StreamAtt: Direct streaming speech-to-text translation with attention-based audio history selection," in Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), L.-W. Ku, A. Martins, and V. Srikumar, Eds. Bangkok, Thailand: Association for Computational Linguistics, Aug. 2024, pp. 3692–3707. [Online]. Available: https://aclanthology.org/2024.acl-long.202/

[17] S. Communication, L. Barrault, Y.-A. Chung, M. C. Meglioli, D. Dale, N. Dong, P.-A. Duquenne, H. Elsahar, H. Gong, K. Heffernan, J. Hoffman, C. Klaiber, P. Li, D. Licht, J. Maillard, A. Rakotoarison, K. R. Sadagopan, G. Wenzek, E. Ye, B. Akula, P.-J. Chen, N. E. Hachem, B. Ellis, G. M. Gonzalez, J. Haaheim, P. Hansanti, R. Howes, B. Huang, M.-J. Hwang, H. Inaguma, S. Jain, E. Kalbassi, A. Kallet, I. Kulikov, J. Lam, D. Li, X. Ma, R. Mavlyutov, B. Peloquin, M. Ramadan, A. Ramakrishnan, A. Sun, K. Tran, T. Tran, I. Tufanov, V. Vogeti, C. Wood, Y. Yang, B. Yu, P. Andrews, C. Balioglu, M. R. Costa-jussà, O. Celebi, M. Elbayad, C. Gao, F. Guzmán, J. Kao, A. Lee, A. Mourachko, J. Pino, S. Popuri, C. Ropers, S. Saleem, H. Schwenk, P. Tomasello, C. Wang, J. Wang, and S. Wang, "Seamlessm4t: Massively multilingual & multimodal machine translation," 2023. [Online]. Available: https://arxiv.org/abs/2308.11596

[18] M. A. Di Gangi, R. Cattoni, L. Bentivogli, M. Negri, and M. Turchi, "MuST-C: a Multilingual Speech Translation Corpus," in Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers), J. Burstein, C. Doran, and T. Solorio, Eds. Minneapolis, Minnesota: Association for Computational Linguistics, Jun. 2019, pp. 2012–2017. [Online]. Available: https://aclanthology.org/N19-1202/

[19] D. Macháček and P. Polák, "Simultaneous translation with offline speech and LLM models in CUNI submission to IWSLT 2025," in Proceedings of the 22nd International Conference on Spoken Language Translation (IWSLT 2025), E. Salesky, M. Federico, and A. Anastasopoulos, Eds. Vienna, Austria (in-person and online): Association for Computational Linguistics, Jul. 2025, pp. 389–398. [Online]. Available: https://aclanthology.org/2025.iwslt-1.41/

[20] P. Polák, N.-Q. Pham, T. N. Nguyen, D. Liu, C. Mullov, J. Niehues, O. Bojar, and A. Waibel, "CUNI-KIT system for simultaneous speech translation task at IWSLT 2022," in Proceedings of the 19th International Conference on Spoken Language Translation (IWSLT 2022), E. Salesky, M. Federico, and M. Costa-jussà, Eds. Dublin, Ireland (in-person and online): Association for Computational Linguistics, May 2022, pp. 277–285. [Online]. Available: https://aclanthology.org/2022.iwslt-1.24/

[21] S. Papi, M. Turchi, and M. Negri, "AlignAtt: Using Attention-based Audio-Translation Alignments as a Guide for Simultaneous Speech Translation," in Interspeech 2023, 2023, pp. 3974–3978.

[22] S. Ouyang, X. Xu, and L. Li, "InfiniSST: Simultaneous translation of unbounded speech with large language model," in Findings of the Association for Computational Linguistics: ACL 2025, W. Che, J. Nabende, E. Shutova, and M. T. Pilehvar, Eds. Vienna, Austria: Association for Computational Linguistics, Jul. 2025, pp. 3032–3046. [Online]. Available: https://aclanthology.org/2025.findings-acl.157/

[23] M. Agarwal, S. Agrawal, A. Anastasopoulos, L. Bentivogli, O. Bojar, C. Borg, M. Carpuat, R. Cattoni, M. Cettolo, M. Chen, W. Chen, K. Choukri, A. Chronopoulou, A. Currey, T. Declerck, Q. Dong, K. Duh, Y. Estève, M. Federico, S. Gahbiche, B. Haddow, B. Hsu, P. Mon Htut, H. Inaguma, D. Javorský, J. Judge, Y. Kano, T. Ko, R. Kumar, P. Li, X. Ma, P. Mathur, E. Matusov, P. McNamee, J. P. McCrae, K. Murray, M. Nadejde, S. Nakamura, M. Negri, H. Nguyen, J. Niehues, X. Niu, A. Kr. Ojha, J. E. Ortega, P. Pal, J. Pino, L. van der Plas, P. Polák, E. Rippeth, E. Salesky, J. Shi, M. Sperber, S. Stüker, K. Sudoh, Y. Tang, B. Thompson, K. Tran, M. Turchi, A. Waibel, M. Wang, S. Watanabe, and R. Zevallos, "Findings of the IWSLT 2023 Evaluation Campaign," in Proceedings of the 20th International Conference on Spoken Language Translation (IWSLT 2023), E. Salesky, M. Federico, and M. Carpuat, Eds. Toronto, Canada (in-person and online): Association for Computational Linguistics, Jul. 2023, pp. 1–61. [Online]. Available: https://aclanthology.org/2023.iwslt-1.1/

[24] X. Ma, M. J. Dousti, C. Wang, J. Gu, and J. Pino, "SIMULEVAL: An evaluation toolkit for simultaneous translation," in Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing: System Demonstrations, Q. Liu and D. Schlangen, Eds. Online: Association for Computational Linguistics, Oct. 2020, pp. 144–150. [Online]. Available: https://aclanthology.org/2020.emnlp-demos.19/

[25] K. Papineni, S. Roukos, T. Ward, and W.-J. Zhu, "Bleu: a method for automatic evaluation of machine translation," in Proceedings of the 40th Annual Meeting of the Association for Computational Linguistics, 2002, pp. 311–318.

[26] M. Sekoyan, N. R. Koluguri, N. Tadevosyan, P. Zelasko, T. Bartley, N. Karpov, J. Balam, and B. Ginsburg, "Canary-1b-v2 & parakeet-tdt-0.6b-v3: Efficient and high-performance models for multilingual asr and ast," arXiv preprint arXiv:2509.14128, 2025.

[27] A. Radford, J. W. Kim, T. Xu, G. Brockman, C. McLeavey, and I. Sutskever, "Robust speech recognition via large-scale weak supervision," in International Conference on Machine Learning. PMLR, 2023, pp. 28492–28518.

[28] Y.-A. Chung, Y. Zhang, W. Han, C.-C. Chiu, J. Qin, R. Pang, and Y. Wu, "W2v-bert: Combining contrastive learning and masked language modeling for self-supervised speech pre-training," in 2021 IEEE Automatic Speech Recognition and Understanding Workshop (ASRU). IEEE, 2021, pp. 244–250.

[29] M. R. Costa-Jussà, J. Cross, O. Çelebi, M. Elbayad, K. Heafield, K. Heffernan, E. Kalbassi, J. Lam, D. Licht, J. Maillard et al., "No language left behind: Scaling human-centered machine translation," arXiv preprint, 2022.

[30] P.-A. Duquenne, H. Schwenk, and B. Sagot, "Sonar: sentence-level multimodal and language-agnostic representations," arXiv preprint arXiv:2308.11466, 2023.

[31] A. Babu, C. Wang, A. Tjandra, K. Lakhotia, Q. Xu, N. Goyal, K. Singh, P. von Platen, Y. Saraf, J. Pino et al., "Xls-r: Self-supervised cross-lingual speech representation learning at scale," Interspeech 2022, 2022.

[32] J. Kong, J. Kim, and J. Bae, "Hifi-gan: Generative adversarial networks for efficient and high fidelity speech synthesis," Advances in Neural Information Processing Systems, vol. 33, pp. 17022–17033, 2020.

[33] D. Liu, G. Spanakis, and J. Niehues, "Low-latency sequence-to-sequence speech recognition and translation by partial hypothesis selection," Interspeech 2020, pp. 3620–3624, 2020.

[34] H. Inaguma, S. Popuri, I. Kulikov, P.-J. Chen, C. Wang, Y.-A. Chung, Y. Tang, A. Lee, S. Watanabe, and J. Pino, "UnitY: Two-pass direct speech-to-speech translation with discrete units," in Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), A. Rogers, J. Boyd-Graber, and N. Okazaki, Eds. Toronto, Canada: Association for Computational Linguistics, Jul. 2023, pp. 15655–15680. [Online]. Available: https://aclanthology.org/2023.acl-long.872/

[35] Hexgrad, "Kokoro-82m (revision d8b4fc7)," 2025. [Online]. Available: https://huggingface.co/hexgrad/Kokoro-82M

[36] S. Liao, Y. Wang, T. Li, Y. Cheng, R. Zhang, R. Zhou, and Y. Xing, "Fish-speech: Leveraging large language models for advanced multilingual text-to-speech synthesis," 2024. [Online]. Available: https://arxiv.org/abs/2411.01156

[37] T. Saeki, D. Xin, W. Nakata, T. Koriyama, S. Takamichi, and H. Saruwatari, "Utmos: Utokyo-sarulab system for voicemos challenge 2022," Interspeech 2022, 2022.

[38] I. Abdulmumin, V. Agostinelli, T. Alumäe, A. Anastasopoulos, L. Bentivogli, O. Bojar, C. Borg, F. Bougares, R. Cattoni, M. Cettolo, L. Chen, W. Chen, R. Dabre, Y. Estève, M. Federico, M. Fishel, M. Gaido, D. Javorský, M. Kasztelnik, F. Kponou, M. Krubiński, T. Kin Lam, D. Liu, E. Matusov, C. Kumar Maurya, J. P. McCrae, S. Mdhaffar, Y. Moslem, K. Murray, S. Nakamura, M. Negri, J. Niehues, A. Kr. Ojha, J. E. Ortega, S. Papi, P. Pecina, P. Polák, P. Połeć, A. Sankar, B. Savoldi, N. Sethiya, C. Sikasote, M. Sperber, S. Stüker, K. Sudoh, B. Thompson, M. Turchi, A. Waibel, P. Wilken, R. Zevallos, V. Zouhar, and M. Züfle, "Findings of the IWSLT 2025 evaluation campaign," in Proceedings of the 22nd International Conference on Spoken Language Translation (IWSLT 2025), E. Salesky, M. Federico, and A. Anastasopoulos, Eds. Vienna, Austria (in-person and online): Association for Computational Linguistics, Jul. 2025, pp. 412–481. [Online].
A vailable: https://aclanthology .or g/2025.iwslt- 1.44/ [39] S. T eam, “Silero vad: pre-trained enterprise-grade voice activity detector (vad), number detector and language classifier , ” https:// github .com/snakers4/silero- v ad, 2024. [40] A. Chmiel, A. Szarkowska, D. K or ˇ zinek, A. Lijewska, Ł. Dutka, Ł. Brocki, and K. Marasek, “Ear–voice span and pauses in intra- and interlingual respeaking: An exploratory study into tempo- ral aspects of the respeaking process, ” Applied Psycholinguistics , vol. 38, pp. 1–27, 05 2017.
