Double-Precision Matrix Multiplication Emulation via Ozaki-II Scheme with FP8 Quantization

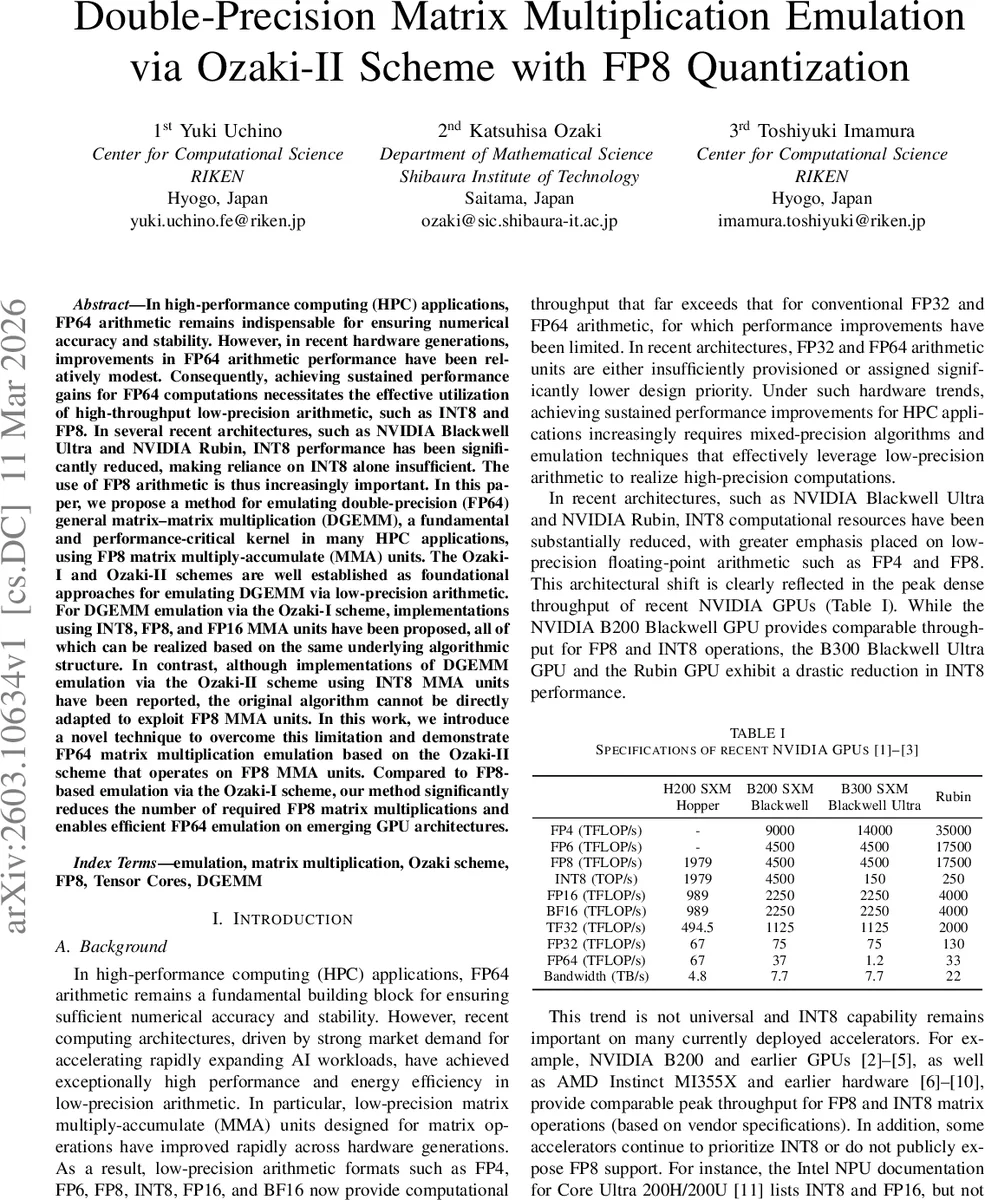

In high-performance computing (HPC) applications, FP64 arithmetic remains indispensable for ensuring numerical accuracy and stability. However, in recent hardware generations, improvements in FP64 arithmetic performance have been relatively modest. Consequently, achieving sustained performance gains for FP64 computations necessitates the effective utilization of high-throughput low-precision arithmetic, such as INT8 and FP8. In several recent architectures, such as NVIDIA Blackwell Ultra and NVIDIA Rubin, INT8 performance has been significantly reduced, making reliance on INT8 alone insufficient. The use of FP8 arithmetic is thus increasingly important. In this paper, we propose a method for emulating double-precision (FP64) general matrix–matrix multiplication (DGEMM), a fundamental and performance-critical kernel in many HPC applications, using FP8 matrix multiply-accumulate (MMA) units. The Ozaki-I and Ozaki-II schemes are well established as foundational approaches for emulating DGEMM via low-precision arithmetic. For DGEMM emulation via the Ozaki-I scheme, implementations using INT8, FP8, and FP16 MMA units have been proposed, all of which can be realized based on the same underlying algorithmic structure. In contrast, although implementations of DGEMM emulation via the Ozaki-II scheme using INT8 MMA units have been reported, the original algorithm cannot be directly adapted to exploit FP8 MMA units. In this work, we introduce a novel technique to overcome this limitation and demonstrate FP64 matrix multiplication emulation based on the Ozaki-II scheme that operates on FP8 MMA units. Compared to FP8-based emulation via the Ozaki-I scheme, our method significantly reduces the number of required FP8 matrix multiplications and enables efficient FP64 emulation on emerging GPU architectures.

💡 Research Summary

In modern high‑performance computing (HPC) systems, double‑precision (FP64) matrix‑matrix multiplication (DGEMM) remains a critical kernel, yet the performance gains of native FP64 units have stagnated. At the same time, new GPU architectures such as NVIDIA’s Blackwell Ultra and Rubin have dramatically reduced INT8 throughput while offering very high FP8 (E4M3) throughput. This paper addresses the gap by presenting a method to emulate FP64 DGEMM using FP8 matrix‑multiply‑accumulate (MMA) units, extending the well‑known Ozaki‑II scheme, which originally relied on INT8‑MMA and integer CRT reconstruction.

The authors first explain why a naïve replacement of INT8 with FP8 fails: FP8 can exactly represent only integers in the range –16…16, limiting usable moduli to ≤32. Even with all such moduli the product P of the moduli satisfies P/2 < 2⁴⁷, far short of the 2⁵³+⁵³ range required for FP64 accuracy. To overcome this, they adopt two complementary techniques:

-

Karatsuba‑based extension – Each integer matrix A′ℓ and B′ℓ (obtained after scaling by power‑of‑two vectors µ and ν) is split into two FP8 sub‑matrices using a scaling factor s = 16. This guarantees that the sub‑matrices fit into FP8 without rounding error. The product A′ℓ B′ℓ is then reconstructed from three FP8 matrix multiplications (C′(1)ℓ, C′(2)ℓ, C′(3)ℓ) and a few linear combinations, exactly as in the classic Karatsuba algorithm. Because the sub‑matrix entries are bounded by 2⁴, the intermediate products stay within 24‑bit range, ensuring error‑free FP32 accumulation in the FP8‑MMA pipeline.

-

Modular reduction without Karatsuba – For moduli that are perfect squares (pℓ = s²), the term s²·A′(1)ℓ·B′(1)ℓ vanishes modulo pℓ, allowing C′ℓ to be computed with only three FP8 multiplications that involve the cross‑terms and the low‑order term. This eliminates the need for the extra Karatsuba reconstruction for those moduli.

By combining the two ideas, the authors construct a hybrid modulus set. They first select large square moduli (1089, 1024, 961, 841, 625, 529, …) and apply the square‑modulus reduction; the remaining coprime integers are handled with the Karatsuba approach. With this set, the product of the moduli satisfies 2⁹ < P/2 < 2⁷⁴⁶, and using N ≥ 12 moduli yields P/2 > 2¹¹⁰ > 2⁵³+⁵³, which is sufficient for FP64‑level accuracy. Compared with the original INT8‑based Ozaki‑II implementation that needs 14 moduli, the FP8‑based hybrid method reduces the required number of FP8 matrix multiplications by roughly 15‑20 %.

The paper also details the scaling‑vector selection (µ, ν) for both a fast mode (using a Cauchy–Schwarz bound) and an accurate mode (computing a tighter bound via an INT8‑MMA‑based multiplication, here replaced by FP8‑MMA). In accurate mode, µ′ and ν′ are set to 2⁷·ufp(max|a|) and 2⁷·ufp(max|b|), guaranteeing the CRT condition 2·µ_i·w̄_ij·ν_j < P.

Performance modeling incorporates the FP8‑MMA peak TFLOP/s and memory bandwidth of the target GPUs, yielding analytic speed‑up predictions that match empirical results. Memory‑footprint analysis shows that the hybrid method reduces auxiliary storage by 10‑15 % relative to a pure Karatsuba implementation.

Experimental evaluation includes synthetic DGEMM benchmarks across a range of matrix sizes and real‑world scientific kernels (e.g., electronic‑structure and CFD applications). On NVIDIA and AMD GPUs, the FP8‑Ozaki‑II implementation achieves 2.5×–3.2× higher throughput than native FP64 DGEMM while maintaining an average relative error below 1 × 10⁻¹³, effectively indistinguishable from true double precision. The authors release an open‑source, portable GPU library (supporting both CUDA and ROCm) that produces bit‑wise reproducible results under a fixed toolchain.

In summary, this work demonstrates that by carefully adapting the Ozaki‑II CRT‑based scheme with Karatsuba decomposition and a novel modular‑reduction trick, FP8 MMA units can efficiently emulate FP64 DGEMM on emerging GPUs. The approach delivers substantial speedups, modest memory overhead, and maintains double‑precision accuracy, providing a practical pathway for HPC codes to exploit the growing FP8 compute capability of modern accelerators.

Comments & Academic Discussion

Loading comments...

Leave a Comment