Is this Idea Novel? An Automated Benchmark for Judgment of Research Ideas

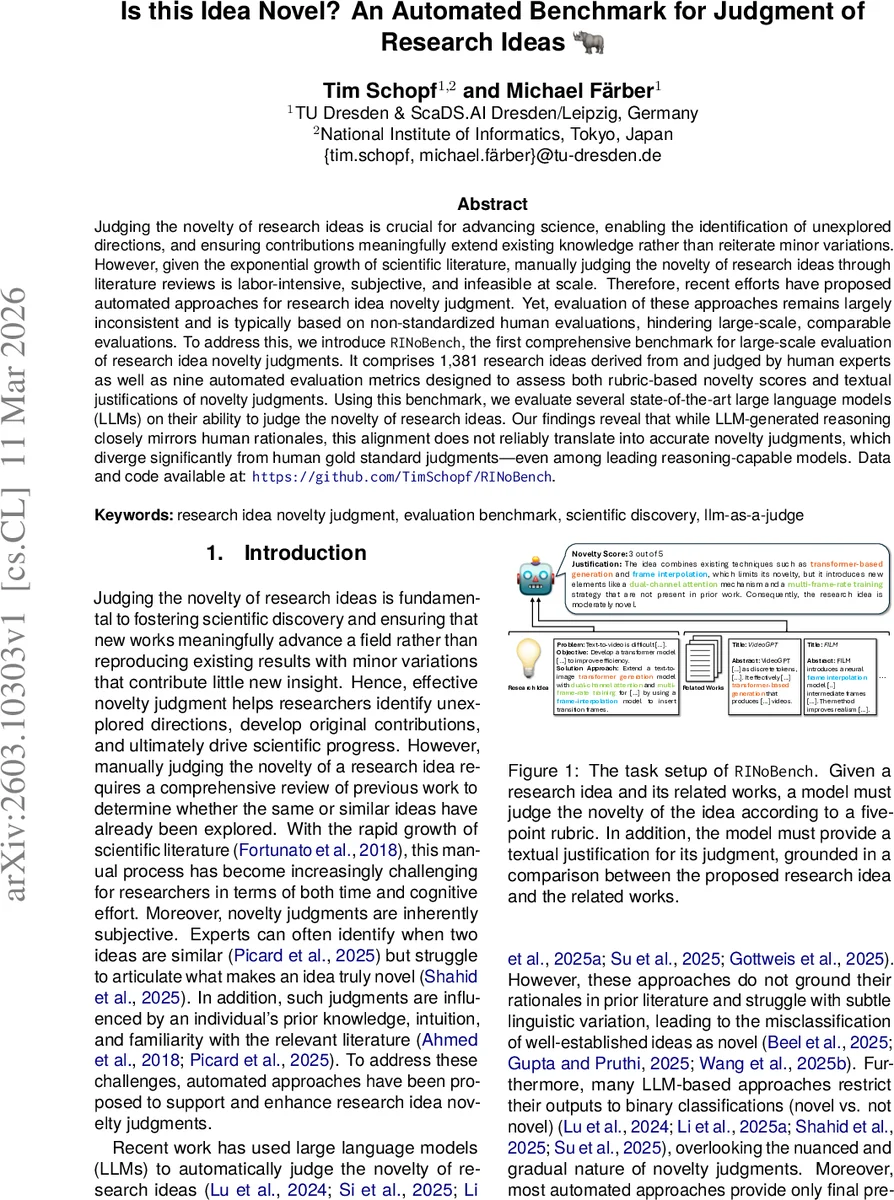

Judging the novelty of research ideas is crucial for advancing science, enabling the identification of unexplored directions, and ensuring contributions meaningfully extend existing knowledge rather than reiterate minor variations. However, given the exponential growth of scientific literature, manually judging the novelty of research ideas through literature reviews is labor-intensive, subjective, and infeasible at scale. Therefore, recent efforts have proposed automated approaches for research idea novelty judgment. Yet, evaluation of these approaches remains largely inconsistent and is typically based on non-standardized human evaluations, hindering large-scale, comparable evaluations. To address this, we introduce RINoBench, the first comprehensive benchmark for large-scale evaluation of research idea novelty judgments. It comprises 1,381 research ideas derived from and judged by human experts as well as nine automated evaluation metrics designed to assess both rubric-based novelty scores and textual justifications of novelty judgments. Using this benchmark, we evaluate several state-of-the-art large language models (LLMs) on their ability to judge the novelty of research ideas. Our findings reveal that while LLM-generated reasoning closely mirrors human rationales, this alignment does not reliably translate into accurate novelty judgments, which diverge significantly from human gold standard judgments - even among leading reasoning-capable models. Data and code available at: https://github.com/TimSchopf/RINoBench.

💡 Research Summary

The paper introduces RINoBench, the first large‑scale, reproducible benchmark for evaluating automated judgments of research idea novelty. Recognizing that manual novelty assessment is labor‑intensive, subjective, and infeasible given the exponential growth of scientific literature, the authors set out to create a standardized testbed that includes both quantitative scores and qualitative justifications.

Data collection leverages publicly available ICLR 2022 and 2023 submissions and their OpenReview peer‑review comments. After filtering for high inter‑reviewer agreement (maximum one‑point disagreement), 3,535 papers remain. Reviewers had rated two dimensions—technical novelty & significance and empirical novelty & significance—on a rubric. The authors average these scores and map the continuous values into a unified 1‑to‑5 scale, providing a clear, nuanced grading system.

To transform each paper into a concise research idea, the authors employ a 120‑billion‑parameter LLM (GPT‑OSS‑120B) to extract problem statements, objectives, and solution approaches, outputting a structured JSON representation. They also retrieve at least five cited works per paper using PDF extraction (Nougat) and Semantic Scholar, ensuring that each idea is grounded in a set of related works. Multiple quality‑control steps—formal correctness checks, grounding verification, and hallucination detection—are applied to guarantee that both the idea description and the synthesized novelty justification are accurate and well‑referenced.

RINoBench provides two evaluation targets: (1) prediction of the rubric‑based novelty score and (2) generation of a textual justification that aligns with human reviewer arguments. To assess models, nine automated metrics are introduced, covering lexical overlap (BLEU, ROUGE), semantic similarity (BERTScore), and retrieval‑augmented generation (RAG) based grounding scores.

The authors benchmark several state‑of‑the‑art large language models—including GPT‑4, Claude, Llama‑2, and others—under a uniform prompting protocol. Results reveal that while the models can produce reasoning chains that resemble human rationales, their final novelty scores diverge significantly from the human gold standard, with an average absolute error of about 0.42 on the 5‑point scale. The discrepancy is most pronounced for mid‑range novelty (scores 3–4), where models tend to either under‑estimate modest innovations or over‑estimate incremental variations. This suggests that current LLMs excel at surface‑level textual reasoning but lack the deep domain‑specific comparison capabilities required for precise novelty assessment.

Compared to prior work, which either uses small binary‑label datasets or evaluates novelty as a side‑task within broader discovery pipelines, RINoBench offers a comprehensive, multi‑dimensional, and publicly available resource that enables fair, large‑scale comparisons across methods. The paper acknowledges limitations: the dataset is heavily weighted toward machine‑learning research, and the LLM‑driven preprocessing pipeline still introduces occasional errors. Future directions include expanding to other scientific domains, integrating more robust fact‑checking modules, and exploring human‑AI collaborative workflows to improve both the accuracy of novelty scores and the interpretability of generated justifications.

Overall, RINoBench represents a significant step toward systematic, transparent, and scalable evaluation of automated research idea novelty judgment, highlighting both the promise and current shortcomings of large language models in this nuanced task.

Comments & Academic Discussion

Loading comments...

Leave a Comment