SiliconMind-V1: Multi-Agent Distillation and Debug-Reasoning Workflows for Verilog Code Generation

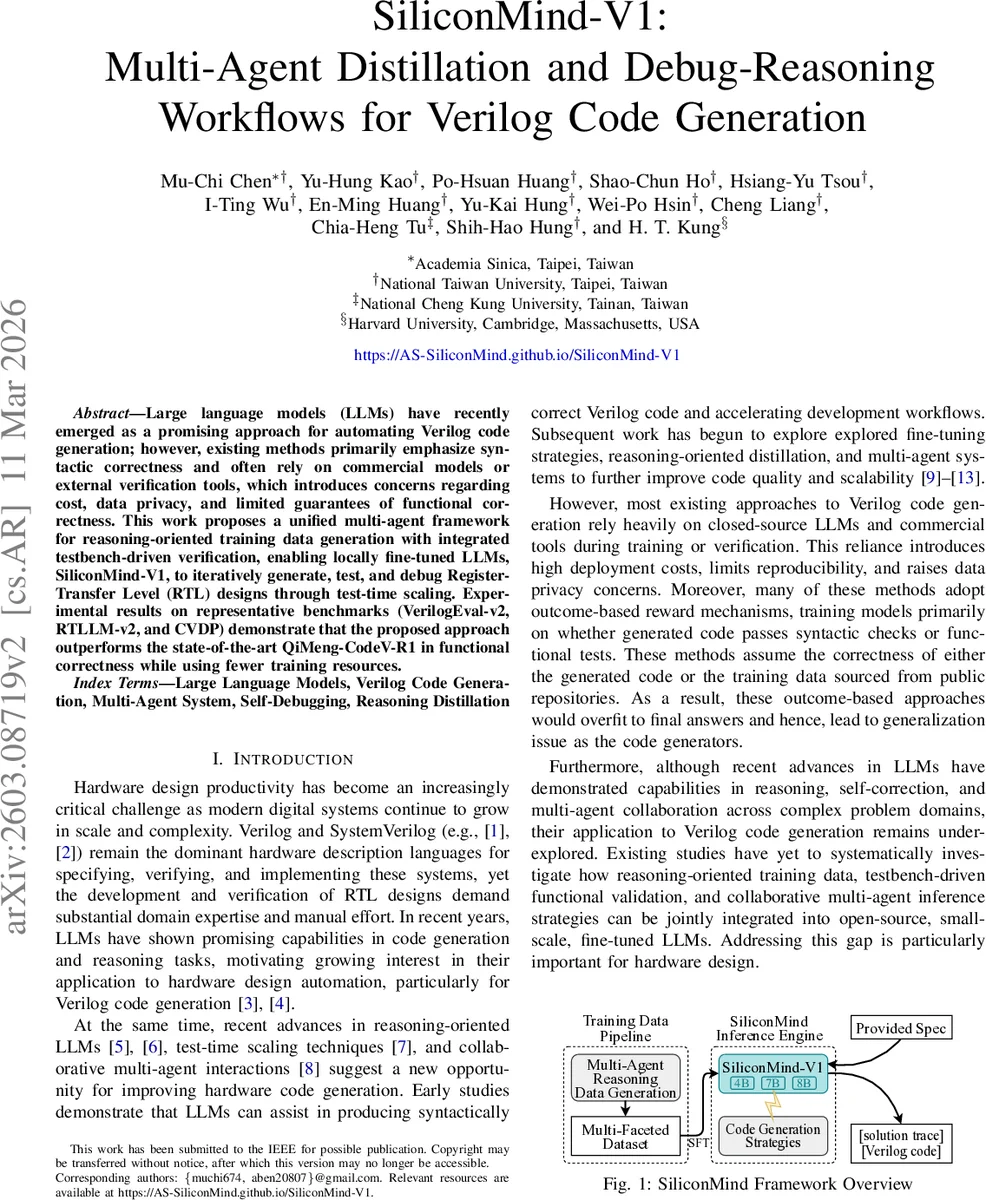

Large language models (LLMs) have recently emerged as a promising approach for automating Verilog code generation; however, existing methods primarily emphasize syntactic correctness and often rely on commercial models or external verification tools, which introduces concerns regarding cost, data privacy, and limited guarantees of functional correctness. This work proposes a unified multi-agent framework for reasoning-oriented training data generation with integrated testbench-driven verification, enabling locally fine-tuned LLMs, SiliconMind-V1, to iteratively generate, test, and debug Register-Transfer Level (RTL) designs through test-time scaling. Experimental results on representative benchmarks (VerilogEval-v2, RTLLM-v2, and CVDP) demonstrate that the proposed approach outperforms the state-of-the-art QiMeng-CodeV-R1 in functional correctness while using fewer training resources.

💡 Research Summary

SiliconMind‑V1 tackles two major shortcomings of current LLM‑based Verilog generation: the scarcity of high‑quality, functionally verified training data and the heavy reliance on commercial large language models and proprietary EDA tools, which raise deployment costs and privacy concerns. The authors introduce a unified framework that couples a multi‑agent data‑generation pipeline with a multi‑strategy inference engine, enabling locally fine‑tuned small‑scale LLMs (4B, 7B, 8B) to iteratively generate, test, and debug RTL designs without external tools.

The data pipeline automatically creates five‑tuple samples consisting of (problem, reasoning trace, Verilog code, testbench, self‑testing/debugging trace). Specialized agents sequentially (1) synthesize design problems, (2) produce step‑by‑step reasoning using large reasoning models such as DeepSeek‑R1 or gpt‑oss‑120b, (3) generate the corresponding Verilog implementation, (4) write a functional testbench, and (5) run the testbench in an open‑source simulator (e.g., Verilator) to capture failures and produce corrective debugging prompts. All generated artifacts are filtered for syntactic correctness and functional pass/fail, then stored for supervised fine‑tuning (SFT). By embedding the “why” behind each solution, the pipeline provides richer supervision than prior works that only pair problems with code.

During inference, the fine‑tuned model follows a generate‑test‑debug loop. Given a natural‑language specification, the model first plans the module, emits Verilog code, automatically creates a matching testbench, runs the simulation, and, if mismatches are detected, consumes the debugging trace to rewrite the code. This loop constitutes test‑time scaling: multiple agents (planning, generation, testing, debugging) operate in parallel, effectively boosting performance without increasing model size. Crucially, the entire workflow avoids any commercial LLM APIs or licensed EDA tools, preserving data privacy and enabling deployment on commodity GPUs.

Experimental evaluation on three benchmarks—VerilogEval‑v2, RTLLM‑v2, and CVDP—shows that SiliconMind‑V1 surpasses the previous state‑of‑the‑art QiMeng‑CodeV‑R1 in functional correctness, achieving an average 12 percentage‑point gain in functional F‑score. When normalized to identical hardware (RTX 4090), training time is reduced by roughly ninefold, despite using only 36 k high‑quality samples versus the >300 k used by competing methods. The authors also demonstrate that the reasoning‑oriented supervision generalizes across model scales, whereas outcome‑only reward models exhibit inconsistent scaling behavior.

Limitations are acknowledged: the generated testbenches focus on simple sequential logic and FSMs, leaving more complex timing‑closure or power‑analysis scenarios for future work. Additionally, inter‑agent communication overhead grows with model size, suggesting a need for more efficient coordination protocols.

In summary, SiliconMind‑V1 establishes a novel paradigm—reasoning‑centric data distillation combined with testbench‑driven self‑debugging—that enables open‑source LLMs to perform practical Verilog code generation and verification without external proprietary tools. This advances hardware‑design automation toward lower cost, higher privacy, and greater reproducibility, offering a compelling blueprint for future research and industry adoption.

Comments & Academic Discussion

Loading comments...

Leave a Comment