IntrinsicWeather: Controllable Weather Editing in Intrinsic Space

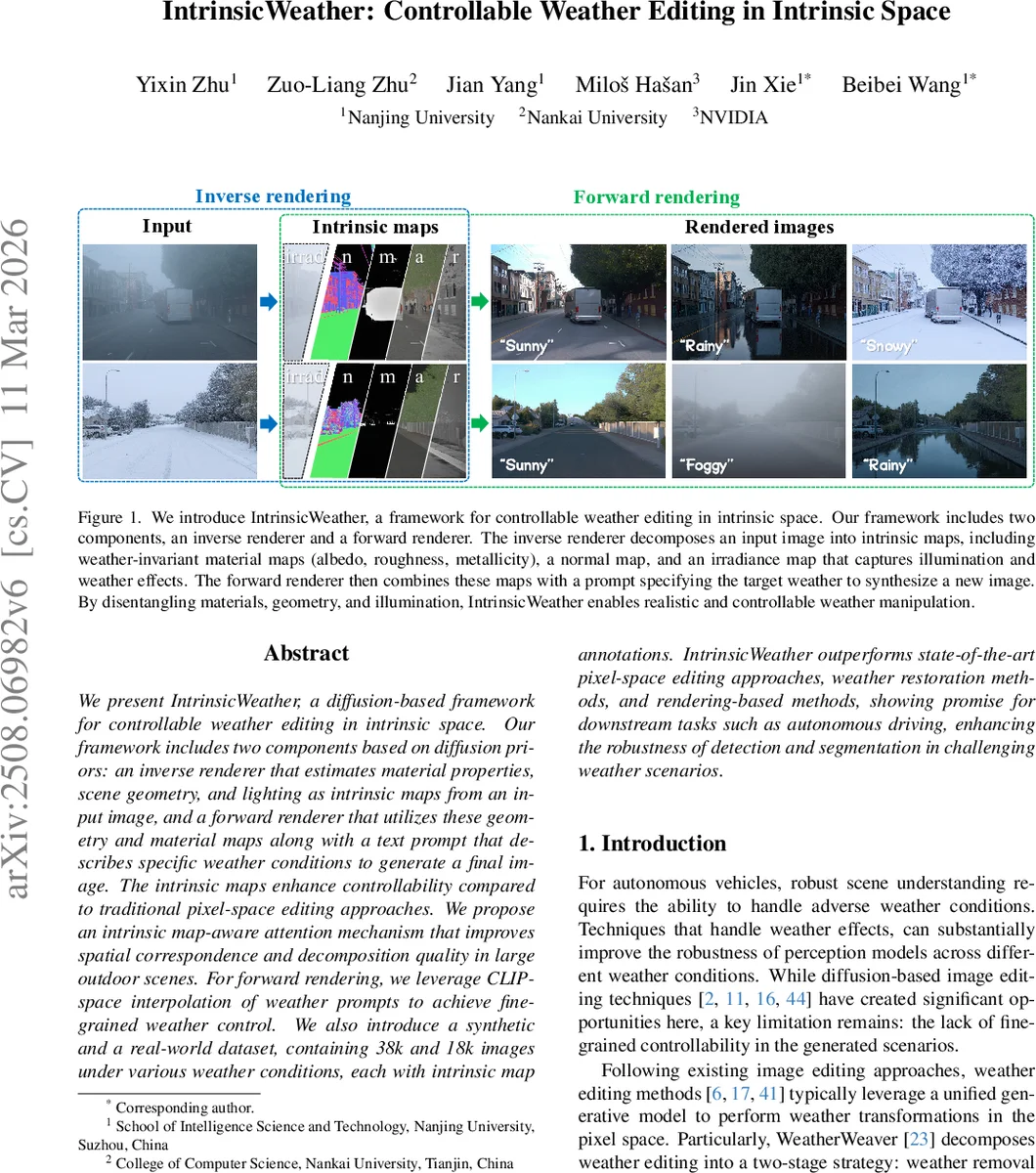

We present IntrinsicWeather, a diffusion-based framework for controllable weather editing in intrinsic space. Our framework includes two components based on diffusion priors: an inverse renderer that estimates material properties, scene geometry, and lighting as intrinsic maps from an input image, and a forward renderer that utilizes these geometry and material maps along with a text prompt that describes specific weather conditions to generate a final image. The intrinsic maps enhance controllability compared to traditional pixel-space editing approaches. We propose an intrinsic map-aware attention mechanism that improves spatial correspondence and decomposition quality in large outdoor scenes. For forward rendering, we leverage CLIP-space interpolation of weather prompts to achieve fine-grained weather control. We also introduce a synthetic and a real-world dataset, containing 38k and 18k images under various weather conditions, each with intrinsic map annotations. IntrinsicWeather outperforms state-of-the-art pixel-space editing approaches, weather restoration methods, and rendering-based methods, showing promise for downstream tasks such as autonomous driving, enhancing the robustness of detection and segmentation in challenging weather scenarios.

💡 Research Summary

IntrinsicWeather introduces a novel diffusion‑based framework for controllable weather editing that operates in intrinsic space rather than directly on pixel values. The system consists of two complementary components: an inverse renderer that decomposes an input image into weather‑invariant material maps (albedo, roughness, metallicity), a normal map, and a weather‑variant irradiance map; and a forward renderer that recombines these maps with a text prompt describing the desired weather condition to synthesize a new image.

The inverse renderer builds on Stable Diffusion 3.5 and adapts the DiT (Diffusion Transformer) architecture with a newly proposed Intrinsic Map‑Aware Attention (IMAA). For each intrinsic map a learnable embedding is created; DINOv2‑extracted patch tokens are gated through an MLP that produces a map‑specific mask. This mask is injected as a bias into the joint image‑text attention matrix, guiding the diffusion model to focus on regions most relevant for the target map (e.g., geometry details for normals, metallic objects for metallicity). This mechanism dramatically improves spatial correspondence and fine‑detail recovery in large‑scale outdoor scenes, where standard diffusion models often miss distant or small objects.

The forward renderer keeps the estimated intrinsic maps fixed and manipulates the weather condition through CLIP‑space interpolation. Given a source weather w₂ and a target weather w₁, the direction e = Embed(w₁) − Embed(w₂) is computed in CLIP’s text embedding space. By shifting a neutral prompt embedding w_base by α·e (α∈

Comments & Academic Discussion

Loading comments...

Leave a Comment