From Prior to Pro: Efficient Skill Mastery via Distribution Contractive RL Finetuning

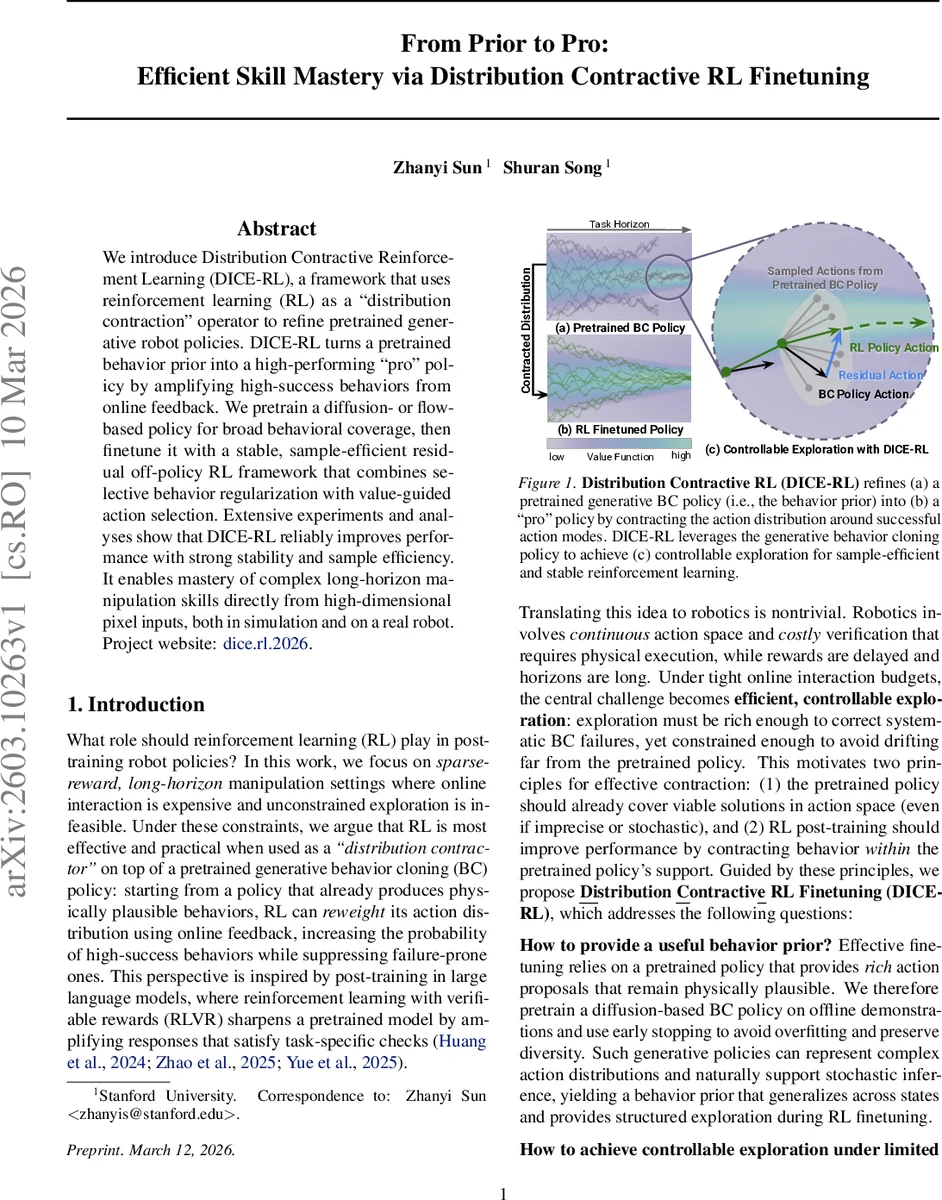

We introduce Distribution Contractive Reinforcement Learning (DICE-RL), a framework that uses reinforcement learning (RL) as a “distribution contraction” operator to refine pretrained generative robot policies. DICE-RL turns a pretrained behavior prior into a high-performing “pro” policy by amplifying high-success behaviors from online feedback. We pretrain a diffusion- or flow-based policy for broad behavioral coverage, then finetune it with a stable, sample-efficient residual off-policy RL framework that combines selective behavior regularization with value-guided action selection. Extensive experiments and analyses show that DICE-RL reliably improves performance with strong stability and sample efficiency. It enables mastery of complex long-horizon manipulation skills directly from high-dimensional pixel inputs, both in simulation and on a real robot. Project website: https://zhanyisun.github.io/dice.rl.2026/.

💡 Research Summary

The paper introduces Distribution Contractive Reinforcement Learning (DICE‑RL), a framework that treats reinforcement learning as a “distribution‑contraction” operator applied to a pretrained generative robot policy. The authors first pretrain a diffusion‑ or flow‑based behavior cloning (BC) policy on a large offline demonstration dataset. This pretrained policy, denoted π_pre, is kept frozen during downstream learning and serves as a stochastic proposal distribution: given a state s and a latent noise vector z∼N(0,I), π_pre(s,z) generates an action (or an h‑step action chunk).

Instead of fine‑tuning the entire generative model—a process that would require back‑propagation through iterative denoising or ODE solvers—the authors attach a lightweight residual network s_θ(s,z) to the frozen prior. The final policy is a simple additive correction: a = π_pre(s,z) + s_θ(s,z). Because the residual is conditioned on the same latent z, it can make precise, local edits to the specific base action proposed by the prior while preserving the prior’s expressive stochasticity.

Training proceeds with an off‑policy TD3+BC‑style objective. The residual actor maximizes the critic’s Q‑value while being penalized by an L2 term ‖s_θ(s,z)‖² that pulls the policy toward the prior. This regularization ensures that exploration stays within the support of the demonstrations, preventing the policy from drifting into unsafe regions. To avoid over‑constraining the policy, a “BC‑loss filter” disables or attenuates the regularization whenever the critic predicts that the corrected action yields a higher value than the uncorrected prior action (i.e., when Q(s,π_pre(s,z)+s_θ) – Q(s,π_pre(s,z)) > ε).

A key efficiency trick is multi‑sample expectation training. For each sampled state, K latent noises are drawn, producing K candidate action chunks. The critic is updated using a TD target that averages over the next‑state values of these K candidates, and the actor maximizes the average Q‑value across the K candidates. This reduces variance, encourages the residual to improve the entire latent‑induced distribution rather than a single sample, and yields better sample efficiency.

During online interaction, the policy performs a “best‑of‑N” selection: K candidates are generated, their Q‑values are evaluated, and the highest‑valued candidate is executed. This value‑guided action selection further stabilizes learning by preventing low‑value stochastic samples from being deployed on the robot.

The framework also incorporates action chunking (h‑step actions) to address sparse‑reward, long‑horizon tasks. Chunking reduces the effective decision frequency, improves temporal consistency, and makes credit assignment more tractable. Additionally, the authors adopt an RLPD‑style data mixing schedule: early training batches are weighted heavily toward offline demonstrations (ratio r_offline), then linearly decay to favor online experience as the residual improves. This schedule provides early stability while allowing the policy to eventually rely on its own experience.

Experiments span both simulation and real‑world robot platforms. In simulation, DICE‑RL solves complex manipulation sequences (e.g., multi‑object stacking, assembly) from raw pixel observations with dramatically fewer online samples than baseline on‑policy RL, direct fine‑tuning of diffusion models, or distillation‑based methods. On a real robot arm, the method learns a five‑step assembly task within ~30 minutes of interaction, achieving >70 % success, whereas competing approaches plateau below 30 % under the same budget. Ablations demonstrate that each component—residual architecture, multi‑sample training, BC‑loss filter, and best‑of‑N selection—contributes measurably to performance and stability.

The authors claim three main contributions: (1) a practical RL fine‑tuning pipeline for generative BC policies that preserves the prior’s expressiveness while enabling sample‑efficient correction; (2) strong empirical validation on high‑dimensional visual tasks in both simulation and the real world; and (3) an analytical study of how distribution contraction manifests in policy space, offering guidance on pre‑training data quality, model choice, and hyper‑parameter settings.

Limitations include a focus on flow‑matching priors (diffusion extensions are left for future work), reliance on manually set thresholds (ε, β) for the regularization filter, and the need to explore multi‑agent or collaborative scenarios. Nonetheless, DICE‑RL presents a compelling synthesis of behavior cloning, residual policy correction, and value‑guided exploration, establishing a new paradigm for turning large offline priors into high‑performing, safe robot policies with minimal online interaction.

Comments & Academic Discussion

Loading comments...

Leave a Comment