ParTY: Part-Guidance for Expressive Text-to-Motion Synthesis

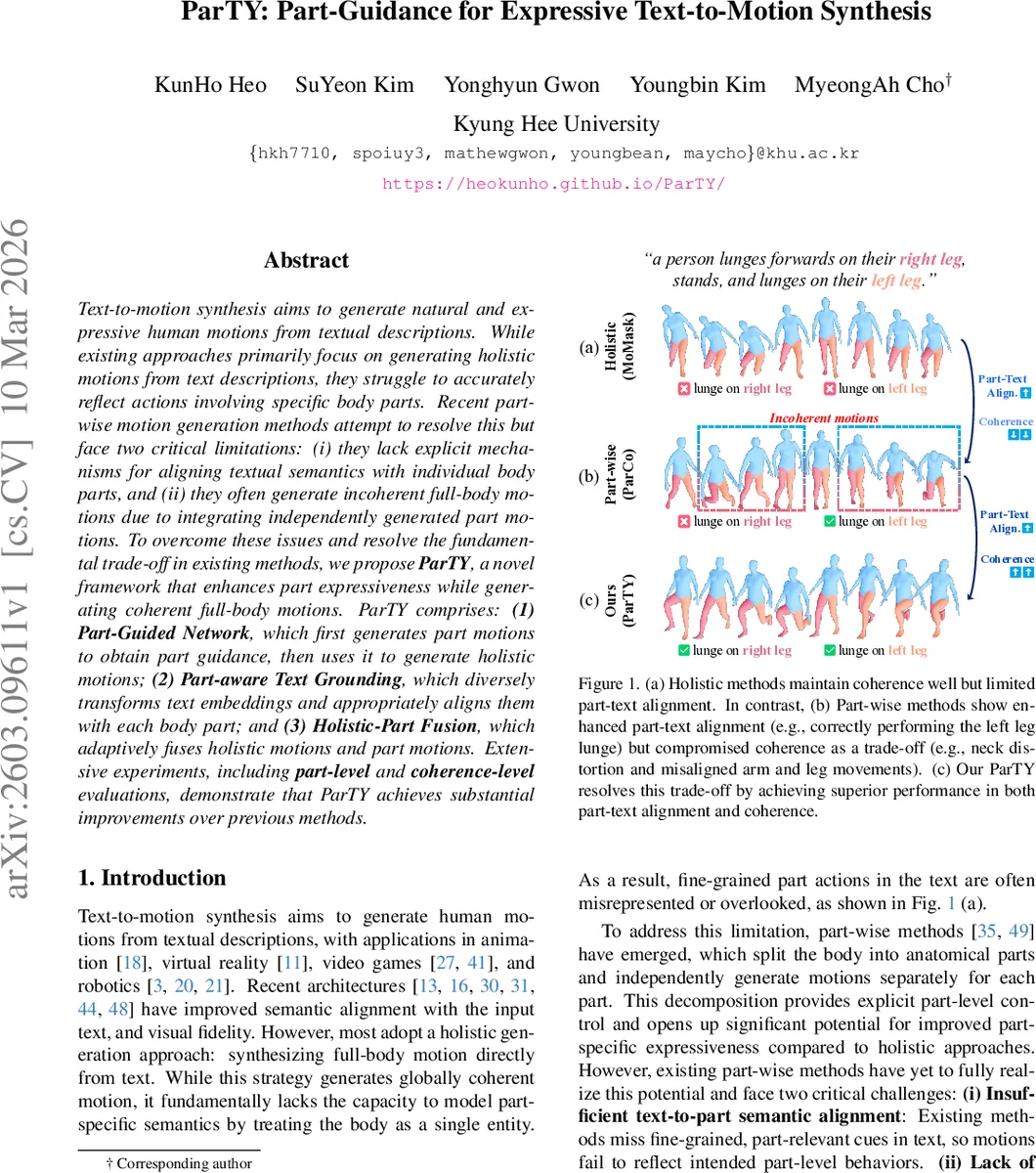

Text-to-motion synthesis aims to generate natural and expressive human motions from textual descriptions. While existing approaches primarily focus on generating holistic motions from text descriptions, they struggle to accurately reflect actions involving specific body parts. Recent part-wise motion generation methods attempt to resolve this but face two critical limitations: (i) they lack explicit mechanisms for aligning textual semantics with individual body parts, and (ii) they often generate incoherent full-body motions due to integrating independently generated part motions. To overcome these issues and resolve the fundamental trade-off in existing methods, we propose ParTY, a novel framework that enhances part expressiveness while generating coherent full-body motions. ParTY comprises: (1) Part-Guided Network, which first generates part motions to obtain part guidance, then uses it to generate holistic motions; (2) Part-aware Text Grounding, which diversely transforms text embeddings and appropriately aligns them with each body part; and (3) Holistic-Part Fusion, which adaptively fuses holistic motions and part motions. Extensive experiments, including part-level and coherence-level evaluations, demonstrate that ParTY achieves substantial improvements over previous methods.

💡 Research Summary

ParTY tackles a long‑standing trade‑off in text‑to‑motion synthesis: the need for fine‑grained control of individual body parts while preserving the overall coherence of the full‑body motion. Existing holistic approaches generate globally consistent motions but cannot faithfully render part‑specific actions described in the text. Conversely, recent part‑wise methods split the body into anatomical components and generate motions for each part independently; however, they suffer from two critical drawbacks: (i) insufficient alignment between textual semantics and body parts, and (ii) incoherent full‑body motions caused by naïve concatenation of independently generated parts.

The proposed framework consists of three tightly coupled modules. First, the Part‑Guided Network generates short sequences of part‑level motion tokens (for arms and legs) and treats them as “part guidance”. This guidance is fed into a holistic transformer that produces the full‑body motion tokens. By interleaving cycles of part‑token generation and holistic token generation, the model continuously injects future part information into the global motion stream, thereby ensuring that the final motion respects both local part dynamics and global temporal consistency.

Second, the Part‑aware Text Grounding (PTG) module bridges the semantic gap between the input sentence and each body part. A single CLIP‑derived sentence embedding is passed through K distinct MLPs, yielding K diverse part‑specific embeddings. Contrastive learning forces each embedding to stay close to the original sentence while diverging from the others, encouraging the capture of different semantic aspects. During training, an LLM is used to generate auxiliary part‑descriptions (e.g., “left arm lifts the cup”) which are also embedded with CLIP; an auxiliary L1 loss aligns the PTG outputs with these part‑specific embeddings. The LLM‑generated texts are discarded at inference, keeping the model lightweight.

Third, the Holistic‑Part Fusion (HPF) module fuses the holistic token stream with the part token streams at every time step. It concatenates the three token sequences, applies self‑attention, then splits the result back into separate streams. Cross‑attention is performed with the holistic tokens as queries and each part stream as key/value, allowing the holistic representation to be directly informed by part dynamics. The fused representation is fed back into the holistic transformer, creating a tight feedback loop that preserves inter‑part coordination.

A supporting technical contribution is the Temporal‑aware VQ‑VAE used for motion quantization. Standard VQ‑VAEs suffer from temporal information loss due to fixed‑size windows. The authors introduce Local Temporal Enhancement (LTE), which aggregates frame‑level features within a sliding window using learned attention weights, and Global Temporal Enhancement (GTE), which employs a graph convolutional network over the window‑level nodes to capture long‑range dependencies. This enriched representation is then quantized into codebook entries, reducing information loss while keeping the codebook size manageable.

Training involves separate cross‑entropy losses for the holistic and part transformers, contrastive loss for PTG, and auxiliary L1 loss from LLM‑generated part texts. The overall loss is a weighted sum of these components.

Evaluation is performed on the standard HumanML3D and KIT‑ML datasets. In addition to conventional metrics (FID, R‑Precision, Diversity), the authors introduce part‑level precision/recall and spatial‑temporal coherence metrics to directly assess how well the generated motion matches part‑specific textual cues and how coherent the full‑body motion remains. ParTY outperforms state‑of‑the‑art holistic models (e.g., MotionDiffuse) and part‑wise baselines (ParCo, LGTM) across all metrics. Notably, part‑level precision improves by roughly 15‑20 % over the best prior part‑wise method, while coherence scores rise substantially, demonstrating that the model does not sacrifice global consistency for part expressiveness.

Ablation studies confirm the importance of each component: removing PTG degrades part‑alignment dramatically; disabling HPF leads to a marked drop in coherence; varying the length of part guidance (the number of frames T) shows that moderate values (e.g., T = 8) balance detail and stability best.

Limitations include the fixed guidance length, which may be sub‑optimal for very long or highly interactive motions, and reliance on LLM‑generated part descriptions only during training, which could limit generalization to highly complex sentences at inference. Future work could explore adaptive guidance lengths, multi‑modal grounding (e.g., video‑text), and real‑time interactive control.

In summary, ParTY presents a coherent, well‑engineered solution that simultaneously advances part‑specific expressiveness and full‑body motion consistency in text‑to‑motion synthesis, and introduces new evaluation protocols that will likely become benchmarks for subsequent research.

Comments & Academic Discussion

Loading comments...

Leave a Comment