From Representation to Clusters: A Contrastive Learning Approach for Attributed Hypergraph Clustering

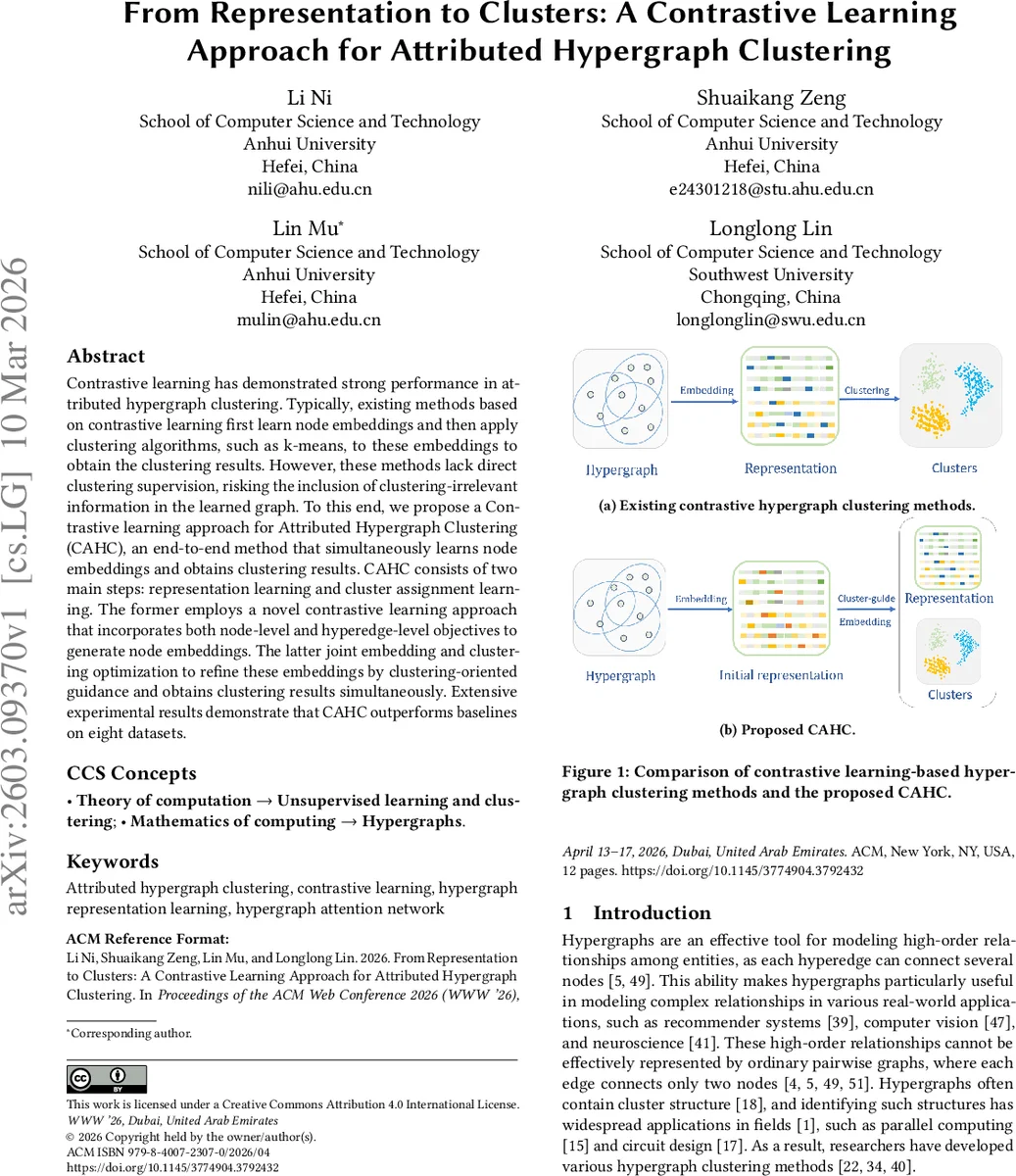

Contrastive learning has demonstrated strong performance in attributed hypergraph clustering. Typically, existing methods based on contrastive learning first learn node embeddings and then apply clustering algorithms, such as k-means, to these embeddings to obtain the clustering results.However, these methods lack direct clustering supervision, risking the inclusion of clustering-irrelevant information in the learned graph.To this end, we propose a Contrastive learning approach for Attributed Hypergraph Clustering (CAHC), an end-to-end method that simultaneously learns node embeddings and obtains clustering results. CAHC consists of two main steps: representation learning and cluster assignment learning. The former employs a novel contrastive learning approach that incorporates both node-level and hyperedge-level objectives to generate node embeddings.The latter joint embedding and clustering optimization to refine these embeddings by clustering-oriented guidance and obtains clustering results simultaneously.Extensive experimental results demonstrate that CAHC outperforms baselines on eight datasets.

💡 Research Summary

The paper introduces CAHC (Contrastive learning approach for Attributed Hypergraph Clustering), an end‑to‑end framework that jointly learns node embeddings and produces cluster assignments for attributed hypergraphs. Traditional contrastive hypergraph clustering pipelines first obtain embeddings via contrastive learning and then apply a separate clustering algorithm such as k‑means. This two‑stage process lacks direct clustering supervision, allowing irrelevant information to be encoded in the embeddings and potentially degrading the final clustering quality.

CAHC addresses this limitation by integrating representation learning and clustering into a single optimization loop. The method consists of two main phases: (1) representation learning and (2) cluster assignment learning.

Representation learning employs two augmentation strategies: (i) node feature masking, which randomly zeros out a proportion p_f of feature entries, and (ii) membership relation masking, which randomly removes or adds node‑hyperedge connections with probability p_m. These augmentations generate two correlated views of the hypergraph, H₁ and H₂. A shared hypergraph neural network (HGNN) with a multi‑head attention mechanism processes both views. The attention mechanism computes separate node‑to‑hyperedge and hyperedge‑to‑node aggregations, allowing the model to weigh the importance of individual nodes within each hyperedge rather than using simple averaging.

Two contrastive objectives are defined:

- Node‑level loss (L_node) follows the InfoNCE formulation. For each node i, the representation from view 1 (z_i^{(1)}) is treated as a positive pair with its counterpart from view 2 (z_i^{(2)}), while all other nodes in view 2 serve as negatives. Cosine similarity and a temperature τ_n are used to compute the loss.

- Hyperedge‑level loss (L_hyper) distinguishes real hyperedges from synthetically generated negative hyperedges. Real hyperedge representations y_e are obtained by mean‑pooling the embeddings of their constituent nodes; negative hyperedges are created by randomly swapping nodes. A sigmoid‑based binary cross‑entropy loss encourages high scores for real hyperedges and low scores for negatives.

The total representation loss is L_rep = L_node + L_hyper, encouraging embeddings to capture both high‑order structural patterns (via L_hyper) and discriminative node‑level information (via L_node).

Cluster assignment learning begins by initializing cluster centroids c_k with k‑means on the embeddings Z_init obtained after the representation phase. Soft assignments μ_{ik} are computed using a temperature‑scaled softmax over cosine similarities between node embeddings z_i and centroids c_k (τ_c controls the sharpness). Hard pseudo‑labels l_i are derived by taking the arg‑max of μ_{ik}. The clustering loss L_clus is a cross‑entropy term that penalizes divergence between the soft assignments and the hard pseudo‑labels:

L_clus = - (1/N) Σ_i Σ_k I(l_i = k) log μ_{ik}.

The overall objective combines both components: L = L_rep + L_clus. During the second training stage, gradients from L_clus flow back to the encoder, allowing the embeddings to be refined under direct clustering guidance, while centroids are updated jointly. This eliminates the need for a separate post‑hoc clustering step and aligns the learned representation space with the desired cluster structure.

Algorithmic flow:

- For T₁ epochs, generate augmented views, construct negative hyperedges, compute L_rep, and update the encoder and projection head.

- Obtain Z_init, run k‑means to get initial centroids.

- For T₂ epochs, compute L_rep, soft assignments μ, hard pseudo‑labels l, clustering loss L_clus, and update encoder, projection head, and centroids jointly.

- Return final hard assignments as the clustering result.

Experimental evaluation spans eight real‑world attributed hypergraph datasets covering text, citation networks, and biological data. Metrics include Normalized Mutual Information (NMI), Adjusted Rand Index (ARI), and clustering accuracy. CAHC consistently outperforms state‑of‑the‑art baselines such as SE‑HSSL, Hyper‑GCL, and TriCL, achieving average improvements of 4–7% in NMI and ARI. Ablation studies demonstrate that each component—hyperedge‑level contrast, multi‑head attention, and the clustering loss—contributes significantly: removing any of them leads to noticeable performance drops (3–9%).

Contributions and significance:

- First end‑to‑end contrastive framework that directly incorporates clustering supervision for attributed hypergraph clustering.

- Novel hyperedge‑level contrastive objective that explicitly captures high‑order relationships.

- Multi‑head attention‑augmented HGNN that learns adaptive importance weights for nodes within hyperedges.

- Joint embedding‑clustering loss that aligns representation learning with the clustering objective, removing reliance on external k‑means after training.

- Open‑source implementation and reproducibility package.

In summary, CAHC provides a powerful, unified solution for unsupervised clustering of attributed hypergraphs, leveraging contrastive learning at both node and hyperedge levels while simultaneously steering embeddings toward meaningful cluster structures.

Comments & Academic Discussion

Loading comments...

Leave a Comment