Reading the Mood Behind Words: Integrating Prosody-Derived Emotional Context into Socially Responsive VR Agents

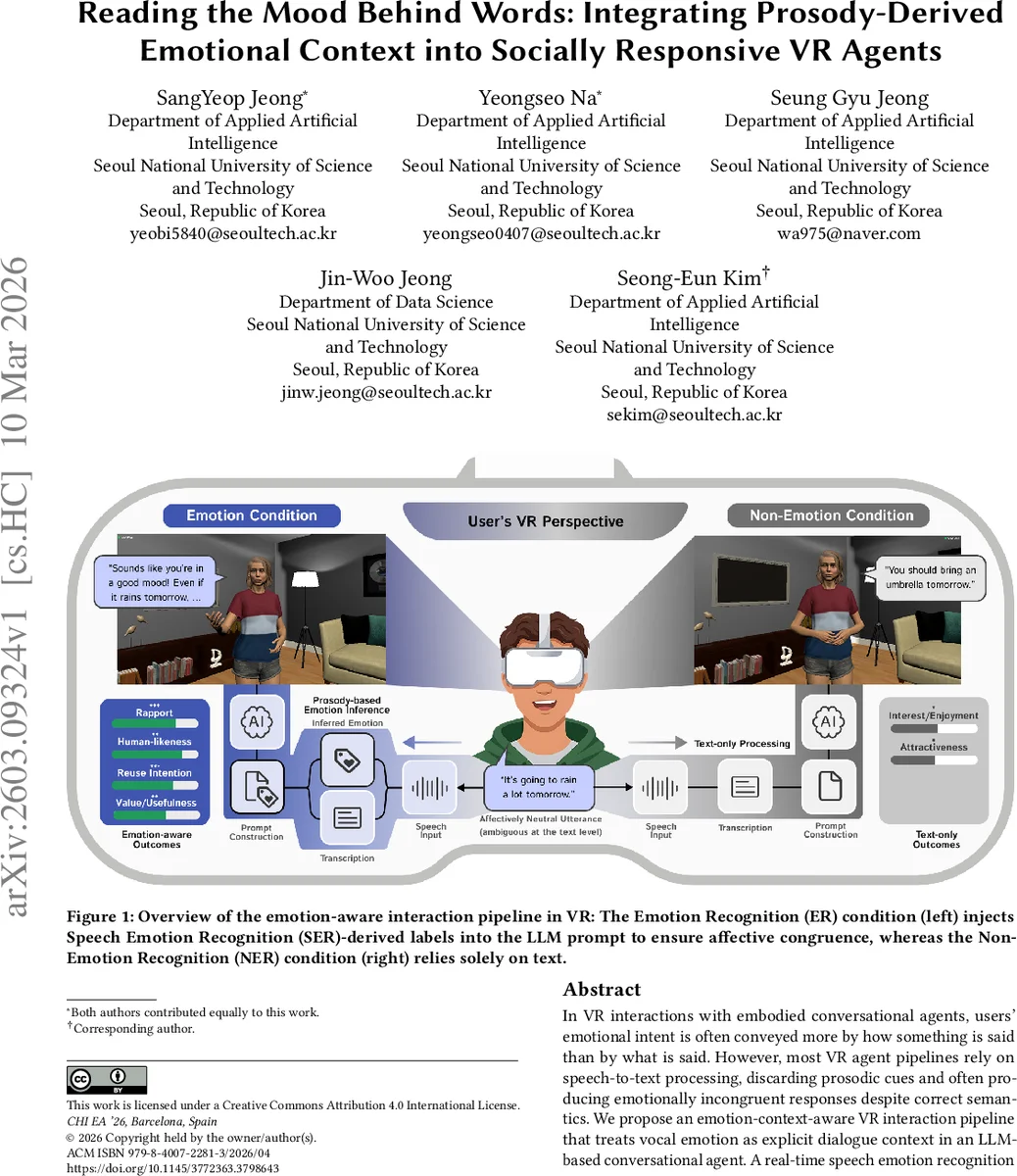

In VR interactions with embodied conversational agents, users’ emotional intent is often conveyed more by how something is said than by what is said. However, most VR agent pipelines rely on speech-to-text processing, discarding prosodic cues and often producing emotionally incongruent responses despite correct semantics. We propose an emotion-context-aware VR interaction pipeline that treats vocal emotion as explicit dialogue context in an LLM-based conversational agent. A real-time speech emotion recognition model infers users’ emotional states from prosody, and the resulting emotion labels are injected into the agent’s dialogue context to shape response tone and style. Results from a within-subjects VR study (N=30) show significant improvements in dialogue quality, naturalness, engagement, rapport, and human-likeness, with 93.3% of participants preferring the emotion-aware agent.

💡 Research Summary

The paper addresses a fundamental limitation in current virtual‑reality (VR) conversational agents: the reliance on a speech‑to‑text (STT) pipeline that discards prosodic information, resulting in agents that understand what users say but not how they say it. To overcome this, the authors propose an emotion‑context‑aware interaction pipeline that treats vocal emotion as explicit dialogue context for a large language model (LLM).

A real‑time speech emotion recognition (SER) component based on a HuBERT‑base model assigns one of four discrete emotion labels (Happy, Sad, Angry, Neutral) to the user’s voice. On the IEMOCAP benchmark the model achieves 67.62 % accuracy; during the user study it reaches 72 % accuracy overall, with high per‑class performance for Happy (92.2 %) and Sad (95.4 %) but low performance for Angry (19.3 %). The weak Angry result is attributed to a cross‑lingual acoustic gap: the model was pre‑trained on English, while participants spoke Korean.
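An overall accuracy near 72 % can coexist with a very low score on one class, because overall accuracy weights each class by how often it occurs. The sketch below illustrates this with made-up toy labels (not the study's data); the function names `per_class_accuracy` and `overall_accuracy` are illustrative and do not come from the paper.

```python
from collections import Counter


def per_class_accuracy(y_true, y_pred):
    """Return {label: fraction of that label's samples predicted correctly}."""
    total = Counter(y_true)
    correct = Counter(t for t, p in zip(y_true, y_pred) if t == p)
    return {label: correct[label] / total[label] for label in total}


def overall_accuracy(y_true, y_pred):
    """Return the fraction of all samples predicted correctly."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


# Toy data (assumption, for illustration only): Angry is rare and always missed.
y_true = ["Happy"] * 4 + ["Sad"] * 4 + ["Angry"] * 2
y_pred = ["Happy"] * 4 + ["Sad"] * 4 + ["Neutral", "Sad"]

print(per_class_accuracy(y_true, y_pred))
print(overall_accuracy(y_true, y_pred))
```

In this toy case the rare class is misclassified every time, yet the overall figure stays high because the frequent classes dominate the average.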

The extracted label is injected directly into the LLM prompt in the form “
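The source text is cut off before the authors' prompt format appears, so that format is not reproduced here. As a rough illustration of the general idea only, the sketch below prepends a recognized emotion label to the user's transcript before it is sent to a chat-style LLM. The `[emotion: ...]` tag, the system instruction, and the `build_messages` helper are all hypothetical and should not be read as the paper's implementation.

```python
# The four labels named in the summary.
EMOTIONS = ("Happy", "Sad", "Angry", "Neutral")

# Hypothetical system instruction; the paper's wording is not available here.
SYSTEM_PROMPT = (
    "You are a conversational agent in a VR scene. Each user turn is "
    "prefixed with the emotion detected from the user's voice. "
    "Adapt the tone and style of your reply to that emotion."
)


def build_messages(transcript, emotion, history=None):
    """Build a chat-style message list with the emotion label injected.

    transcript: text returned by the speech-to-text step.
    emotion:    label returned by the SER step (one of EMOTIONS).
    history:    optional list of earlier {"role", "content"} messages.
    """
    if emotion not in EMOTIONS:
        raise ValueError(f"unknown emotion label: {emotion!r}")
    # Hypothetical tag format: "[emotion: Sad] <transcript>"
    user_turn = f"[emotion: {emotion}] {transcript}"
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        *(history or []),
        {"role": "user", "content": user_turn},
    ]


messages = build_messages("I failed the exam again.", "Sad")
print(messages[-1]["content"])
```

The resulting list uses the common role/content message shape, so it could be handed to whichever chat-completion client the agent uses. Validating the label up front keeps an unexpected SER output from silently reaching the model.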
