Synthesizing Interpretable Control Policies through Large Language Model Guided Search

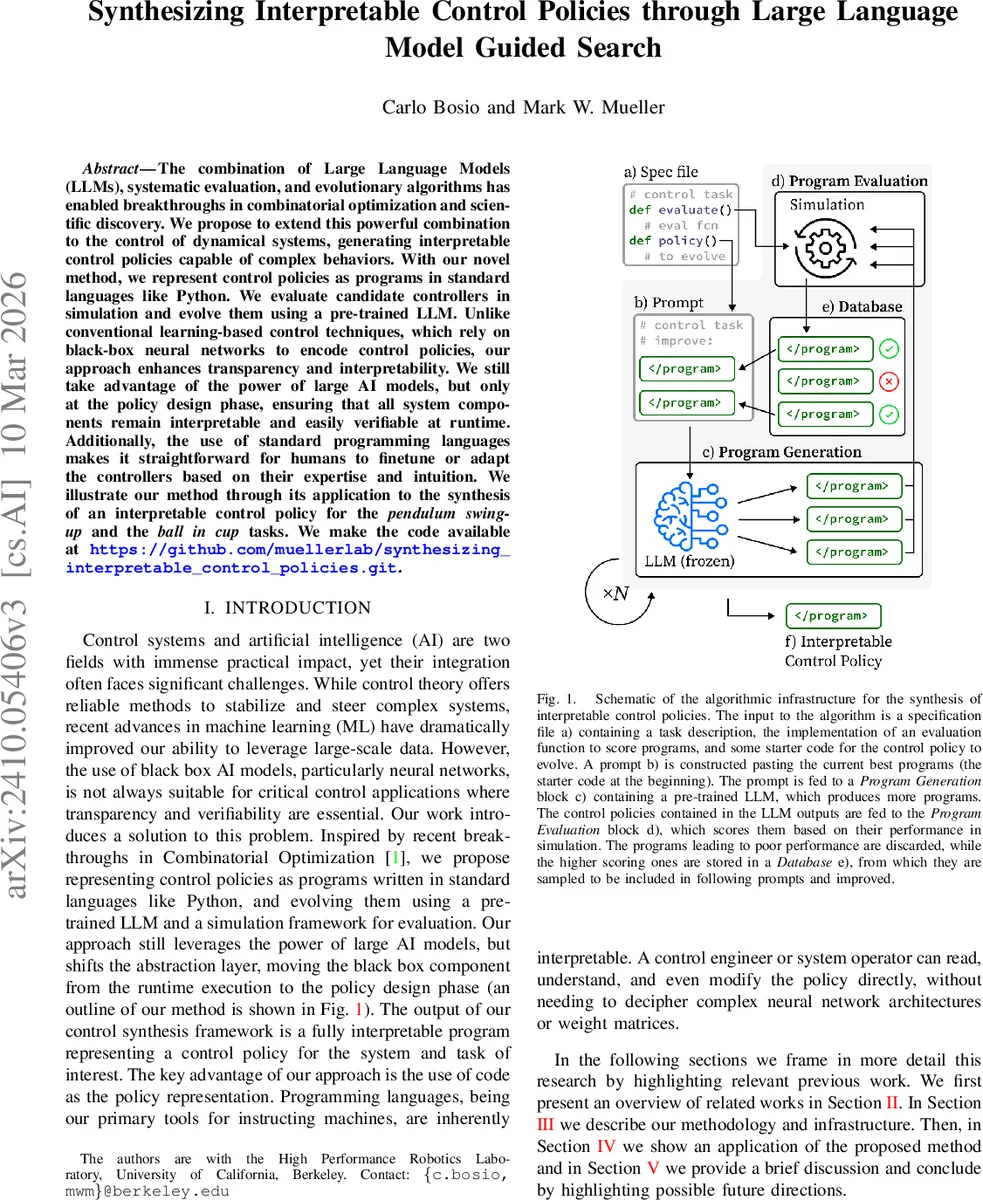

The combination of Large Language Models (LLMs), systematic evaluation, and evolutionary algorithms has enabled breakthroughs in combinatorial optimization and scientific discovery. We propose to extend this powerful combination to the control of dynamical systems, generating interpretable control policies capable of complex behaviors. With our novel method, we represent control policies as programs in standard languages like Python. We evaluate candidate controllers in simulation and evolve them using a pre-trained LLM. Unlike conventional learning-based control techniques, which rely on black-box neural networks to encode control policies, our approach enhances transparency and interpretability. We still take advantage of the power of large AI models, but only at the policy design phase, ensuring that all system components remain interpretable and easily verifiable at runtime. Additionally, the use of standard programming languages makes it straightforward for humans to finetune or adapt the controllers based on their expertise and intuition. We illustrate our method through its application to the synthesis of an interpretable control policy for the \textit{pendulum swing-up} and the \textit{ball in cup} tasks. We make the code available at https://github.com/muellerlab/synthesizing_interpretable_control_policies.git.

💡 Research Summary

The paper introduces a novel framework that synthesizes interpretable control policies by leveraging large language models (LLMs) together with evolutionary search and simulation‑based evaluation. Traditional learning‑based control methods often rely on black‑box neural networks, which, despite achieving high performance, lack transparency and are difficult to verify in safety‑critical applications. To address this, the authors represent a control policy directly as a Python function, treating the source code itself as the optimization variable rather than a set of tunable parameters.

The problem is formalized as a discrete‑time dynamical system xₜ₊₁ = f(xₜ, uₜ) with stage reward rₜ = g(xₜ, uₜ). The objective is to maximize the cumulative reward R = Σₜ rₜ by finding a policy uₜ = h(xₜ). Instead of restricting h(·) to a predefined functional family, the authors encode h as a program policy(x) written in Python. This transforms the search into an exploration of an infinite‑dimensional program space, which is intractable by random token sampling alone.

To make the search feasible, a pre‑trained code‑generation LLM serves as the program generator. The input to the LLM is a “specification file” containing a textual task description, allowed libraries, starter code, and an evaluation function. At each iteration, the two highest‑scoring programs discovered so far are concatenated with an instruction to “improve upon these programs.” The LLM then generates a new function body, effectively performing a crossover‑like operation on the existing solutions. Token sampling is controlled by temperature T = 1, top‑p = 0.95, and a repeat‑last‑n = 15 window to balance diversity and avoid repetitive output.

Generated programs are parsed and executed inside a sandboxed simulation environment using the provided evaluate() routine. Programs that fail to compile or cause runtime errors are discarded. Successful runs receive a numerical score equal to the cumulative reward, and the (program, score) pair is stored in a database. For the next iteration, two programs are randomly sampled from this database and fed back into the prompt, creating a closed loop of generation, evaluation, and selection.

To mitigate premature convergence, the authors adopt an island model: multiple independent populations evolve in parallel. Periodically, islands with poor performance are emptied and repopulated with elite programs from more successful islands, encouraging migration of high‑quality genetic material across the search space.

The framework is validated on two classic nonlinear control benchmarks: (1) pendulum swing‑up, where the goal is to swing a pendulum from the downward equilibrium to the upright position, and (2) ball‑in‑cup, which requires guiding a ball into a moving cup. For each task, the initial starter code simply returns a random action, and the evaluation function returns the total reward over 1000 simulation steps. After roughly 30–50 evolutionary cycles, the method discovers compact Python controllers (typically 10–20 lines) that achieve success rates above 90 % on both tasks. The resulting policies are human‑readable, with clear variable names and logical flow, allowing engineers to inspect, modify, or formally verify the code without dealing with opaque weight matrices.

Key contributions include: (1) redefining control synthesis as program synthesis, thereby guaranteeing interpretability at the source‑code level; (2) exploiting the generative capabilities of LLMs as a powerful, domain‑agnostic search operator while still grounding the search in task‑specific simulation feedback; (3) introducing a parallel island‑based evolutionary scheme that improves exploration efficiency and reduces the risk of local optima; and (4) demonstrating that the final policies are pure Python code, which can be seamlessly integrated into existing verification pipelines for safety‑critical systems.

The paper also acknowledges limitations. The quality of generated code depends heavily on the size and training data of the LLM; more complex systems may produce excessively long programs, inflating the search cost. Moreover, the current approach relies exclusively on simulated evaluation, so transferring the learned policies to real hardware may encounter model‑reality gaps that require additional domain adaptation techniques. Future work is suggested in areas such as real‑world robot deployment, multi‑objective optimization, and the incorporation of formal methods to verify the correctness of the synthesized code.

In summary, this work presents a compelling hybrid of modern AI language models and classic evolutionary optimization to produce transparent, high‑performing controllers, opening a promising pathway toward trustworthy, AI‑augmented control system design.

Comments & Academic Discussion

Loading comments...

Leave a Comment