Why Channel-Centric Models are not Enough to Predict End-to-End Performance in Private 5G: A Measurement Campaign and Case Study

Communication-aware robot planning requires accurate predictions of wireless network performance. Current approaches rely on channel-level metrics such as received signal strength and signal-to-noise ratio, assuming these translate reliably into end-to-end throughput. We challenge this assumption through a measurement campaign in a private 5G industrial environment. We evaluate throughput predictions from a commercial ray-tracing simulator as well as data-driven Gaussian process regression models against measurements collected using a mobile robot. The study uses off-the-shelf user equipment in an underground, radio-shielded facility with detailed 3D modeling, representing a best-case scenario for prediction accuracy. The ray-tracing simulator captures the spatial structure of indoor propagation and predicts channel-level metrics with reasonable fidelity. However, it systematically over-predicts throughput, even in line-of-sight regions. The dominant error source is shown to be over-estimation of sustainable MIMO spatial layers: the simulator assumes near-uniform four-layer transmission while measurements reveal substantial adaptation between one and three layers. This mismatch inflates predicted throughput even when channel metrics appear accurate. In contrast, a Gaussian process model with a rational quadratic kernel achieves approximately two-thirds reduction in prediction error with near-zero bias by learning end-to-end throughput directly from measurements. These findings demonstrate that favorable channel conditions do not guarantee high throughput; communication-aware planners relying solely on channel-centric predictions risk overly optimistic trajectories that violate reliability requirements. Accurate throughput prediction for 5G systems requires either extensive calibration of link-layer models or data-driven approaches that capture real system behavior.

💡 Research Summary

This paper investigates whether channel‑centric models are sufficient for predicting the end‑to‑end downlink throughput that mobile robots experience in a private 5G industrial environment. The authors conduct a comprehensive measurement campaign in the KTH Reactor Hall, an underground, radio‑shielded facility equipped with an Ericsson Private 5G (EP5G) network. A mobile robot equipped with commercial user equipment traverses the site, collecting dense spatial samples of downlink throughput, latency, and link‑layer metrics (e.g., PRB allocation, MIMO rank, SINR), and repeating measurements to quantify variability.

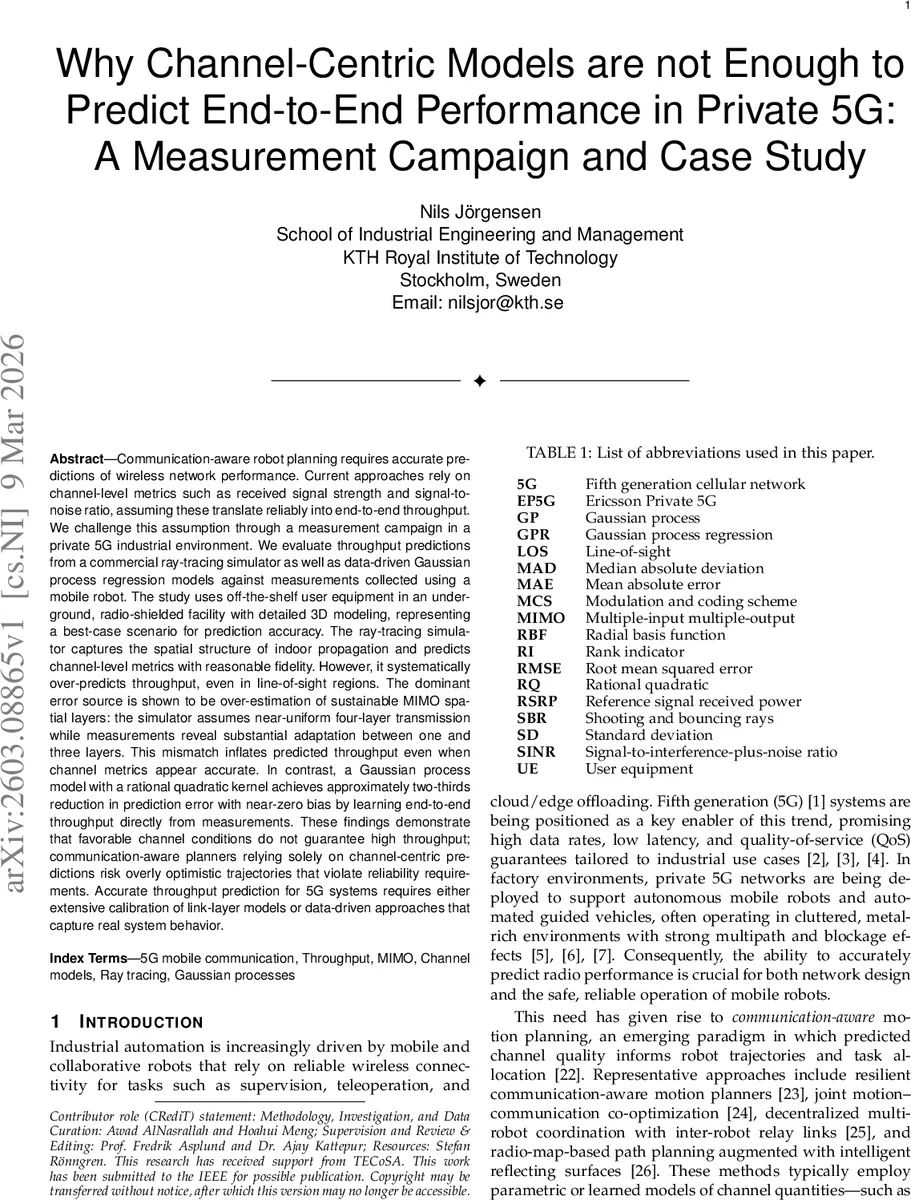

Two prediction approaches are evaluated. First, a commercial deterministic ray‑tracing simulator is configured with a 3GPP‑compliant indoor‑factory channel model and a detailed 3‑D building model. The simulator outputs channel‑level quantities (RSRP, SINR), which are fed into a standard 3GPP link‑adaptation model that assumes uniform four‑layer MIMO transmission. Second, a data‑driven Gaussian Process Regression (GPR) model is trained directly on the measured throughput data. The GPR takes spatial coordinates as inputs and predicts both mean throughput and predictive variance. Three kernel families are examined: Radial Basis Function (RBF), Matérn (ν = 1), and Rational Quadratic (RQ). Each composite kernel combines the base kernel with a constant signal‑variance term and a white‑noise term that captures measurement uncertainty; hyper‑parameters are optimized by maximizing the marginal log‑likelihood.
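To make the GPR setup concrete, the following is a minimal pure‑Python sketch of a Gaussian process with a rational quadratic kernel, a constant signal‑variance factor, and a white‑noise term on the diagonal. It is not the authors' implementation: the positions and throughput values are synthetic, the constant mean is simply the sample mean, and the marginal log‑likelihood is maximized by a coarse grid search over the length scale only (all other hyper‑parameter values are assumptions chosen for illustration).

```python
import math

def rq_kernel(p, q, variance, length, alpha):
    """Rational quadratic kernel scaled by a constant signal variance."""
    d2 = sum((a - b) ** 2 for a, b in zip(p, q))
    return variance * (1.0 + d2 / (2.0 * alpha * length ** 2)) ** (-alpha)

def cholesky(A):
    """Lower-triangular Cholesky factor of a symmetric positive-definite matrix."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = A[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    return L

def forward_sub(L, b):
    """Solve L y = b for lower-triangular L."""
    y = []
    for i, bi in enumerate(b):
        y.append((bi - sum(L[i][k] * y[k] for k in range(i))) / L[i][i])
    return y

def backward_sub(L, y):
    """Solve L^T x = y for lower-triangular L."""
    n = len(y)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x

def fit(X, y, variance, length, alpha, noise):
    """Fit a GP with a constant (sample) mean; return the model and its log marginal likelihood."""
    n = len(X)
    mean = sum(y) / n
    # White-noise term: noise variance added on the diagonal only.
    K = [[rq_kernel(X[i], X[j], variance, length, alpha) + (noise if i == j else 0.0)
          for j in range(n)] for i in range(n)]
    L = cholesky(K)
    resid = [v - mean for v in y]
    weights = backward_sub(L, forward_sub(L, resid))
    lml = (-0.5 * sum(r * w for r, w in zip(resid, weights))
           - sum(math.log(L[i][i]) for i in range(n))
           - 0.5 * n * math.log(2.0 * math.pi))
    return {"X": X, "mean": mean, "L": L, "weights": weights,
            "lml": lml, "params": (variance, length, alpha)}

def predict(model, x_star):
    """Predictive mean and (noise-free) variance at a query position."""
    k_star = [rq_kernel(x_star, xi, *model["params"]) for xi in model["X"]]
    mu = model["mean"] + sum(k * w for k, w in zip(k_star, model["weights"]))
    v = forward_sub(model["L"], k_star)
    var = rq_kernel(x_star, x_star, *model["params"]) - sum(vi * vi for vi in v)
    return mu, max(var, 0.0)

# Synthetic robot positions (metres) and throughput (Mbps); not measured data.
X = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0), (4.0, 0.0)]
y = [40.0, 38.0, 30.0, 22.0, 20.0]

# Coarse grid search over the length scale, keeping the highest marginal log-likelihood.
models = [fit(X, y, variance=100.0, length=l, alpha=1.0, noise=1.0)
          for l in (0.5, 1.0, 2.0, 4.0)]
best = max(models, key=lambda m: m["lml"])

mu_near, var_near = predict(best, (2.0, 0.0))   # at a sampled position
mu_far, var_far = predict(best, (20.0, 0.0))    # far from any sample
print(best["params"], mu_near, var_near, var_far)
```

The predictive variance grows away from the sampled positions, which is the property the summary refers to when it describes uncertainty in sparsely measured regions. In practice a library implementation with gradient‑based hyper‑parameter optimization would replace the grid search used here.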

The evaluation framework quantifies bias, root‑mean‑square error (RMSE), mean absolute error (MAE), and median absolute deviation (MAD) for both approaches. Results show that the ray‑tracing simulator reproduces channel‑level metrics with modest errors (MAE ≈ 1.2 dB for RSRP, 1.8 dB for SINR) but systematically over‑predicts throughput. The average bias is +12 Mbps (≈ 30 % overestimation) and RMSE reaches 15 Mbps. Detailed analysis attributes this discrepancy primarily to the simulator’s assumption of a constant four‑layer spatial multiplexing rank, whereas measurements reveal adaptive rank selection ranging from one to three layers depending on local multipath conditions and scheduler load. Consequently, the simulated MCS and PRB allocation are overly optimistic even when SINR is accurately modeled.
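The four error metrics named above can be computed as in the sketch below. The sample values are made up for illustration, and the MAD definition used here (median of absolute deviations of the errors from their median) is an assumption, since the summary does not spell out which variant the paper uses.

```python
import math
from statistics import mean, median

def error_metrics(predicted, measured):
    """Bias, RMSE, MAE and MAD of prediction errors (predicted minus measured)."""
    errors = [p - m for p, m in zip(predicted, measured)]
    med = median(errors)
    return {
        "bias": mean(errors),                                # signed mean error
        "rmse": math.sqrt(mean([e * e for e in errors])),    # root-mean-square error
        "mae": mean([abs(e) for e in errors]),               # mean absolute error
        "mad": median([abs(e - med) for e in errors]),       # median absolute deviation
    }

# Hypothetical throughput values in Mbps, not taken from the paper's dataset.
predicted = [52.0, 48.0, 45.0, 40.0]
measured = [40.0, 36.0, 35.0, 30.0]
metrics = error_metrics(predicted, measured)
print(metrics)
```

A positive bias indicates over‑prediction, which is the sign convention implied by the "+12 Mbps" figure reported for the ray‑tracing simulator.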

In contrast, the GPR model with the Rational Quadratic kernel achieves near‑zero bias (+0.3 Mbps) and reduces RMSE to 9 Mbps, compared with 15 Mbps for the ray‑tracing approach; the abstract reports an approximately two‑thirds reduction in prediction error. MAE drops to 6 Mbps, and the model’s predictive variance highlights regions with sparse measurements, offering a principled uncertainty estimate useful for risk‑aware planning. The authors note that the RQ kernel’s ability to capture multi‑scale spatial correlations is key to its superior performance.
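One way a planner might use the predictive variance is to compare a lower confidence bound on throughput against a requirement, rather than the mean alone. The sketch below is an illustration of that idea, not a method described in the paper; the function names, the Gaussian assumption, the one‑sided 95 % factor `z = 1.645`, and the numeric inputs are all hypothetical.

```python
import math

def lower_confidence_bound(mean_mbps, variance, z=1.645):
    """One-sided lower bound on throughput, assuming a Gaussian predictive distribution."""
    return mean_mbps - z * math.sqrt(variance)

def waypoint_is_acceptable(mean_mbps, variance, required_mbps, z=1.645):
    """Accept a waypoint only if the lower bound still meets the requirement."""
    return lower_confidence_bound(mean_mbps, variance, z) >= required_mbps

# Same predicted mean, different uncertainty (hypothetical values).
well_sampled = waypoint_is_acceptable(30.0, 4.0, required_mbps=25.0)
sparsely_sampled = waypoint_is_acceptable(30.0, 100.0, required_mbps=25.0)
print(well_sampled, sparsely_sampled)
```

With these inputs the well‑sampled waypoint passes and the sparsely sampled one is rejected, even though both have the same predicted mean.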

The paper’s contributions are fourfold: (1) a publicly released dataset of spatially dense 5G throughput and link‑layer measurements in a realistic industrial setting; (2) a calibrated ray‑tracing‑based radio‑network planning workflow applied to the same site; (3) a data‑driven GPR throughput model that directly learns end‑to‑end performance; and (4) a comparative analysis framework quantifying bias, accuracy, and variance of channel‑centric versus data‑driven predictions.

From a broader perspective, the findings challenge the prevalent assumption in communication‑aware motion planning that favorable channel metrics (high RSRP, SINR) guarantee sufficient throughput. In environments with complex metal structures and dynamic scheduling, link‑layer adaptations such as MIMO rank selection dominate throughput outcomes. Planners that rely solely on channel‑centric predictions risk generating overly optimistic trajectories that may violate reliability or latency constraints. Incorporating data‑driven throughput maps, or at least calibrating channel‑centric models with extensive link‑layer measurements, emerges as a necessary step for safe and efficient robot navigation in private 5G networks.
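The effect of an over‑estimated MIMO rank can be illustrated with a deliberately simplified first‑order model in which throughput scales linearly with the number of spatial layers at a fixed per‑layer rate. This is an assumption made for illustration only: it ignores that a lower rank can support a different MCS per layer, and the per‑layer rate and rank sequence below are hypothetical rather than measured.

```python
def layer_scaled_throughput(per_layer_mbps, layers):
    """First-order throughput model: linear in the number of spatial layers."""
    return per_layer_mbps * layers

def overprediction_ratio(assumed_layers, used_layers_per_location, per_layer_mbps=10.0):
    """Ratio of total predicted to total achieved throughput along a route."""
    predicted = sum(layer_scaled_throughput(per_layer_mbps, assumed_layers)
                    for _ in used_layers_per_location)
    achieved = sum(layer_scaled_throughput(per_layer_mbps, r)
                   for r in used_layers_per_location)
    return predicted / achieved

# Simulator assumes rank 4 everywhere; the device adapts between 1 and 3 layers.
ratio = overprediction_ratio(4, [1, 2, 3, 2])
print(ratio)
```

Under this simplified model the prediction error depends only on the rank mismatch, which is why accurate RSRP and SINR predictions alone cannot remove it.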

The authors conclude by outlining future work: extending the study to multi‑robot scenarios, exploring online model updating for dynamic environments, and evaluating other machine‑learning regressors (e.g., deep neural networks) for scalability. The paper thus provides both a methodological benchmark and practical guidance for integrating realistic wireless performance models into next‑generation industrial robotics.
