Scale Space Diffusion

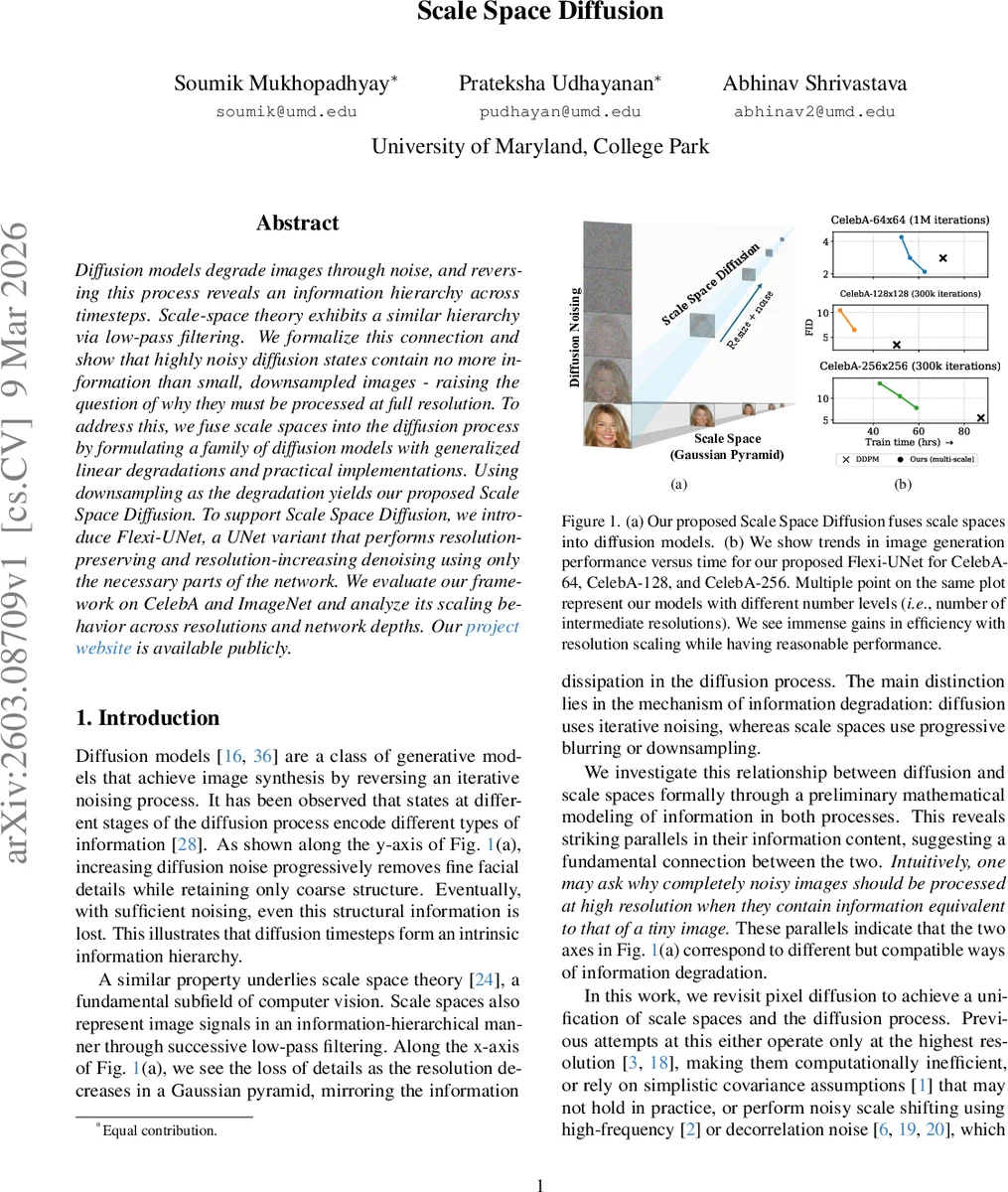

Diffusion models degrade images through noise, and reversing this process reveals an information hierarchy across timesteps. Scale-space theory exhibits a similar hierarchy via low-pass filtering. We formalize this connection and show that highly noisy diffusion states contain no more information than small, downsampled images - raising the question of why they must be processed at full resolution. To address this, we fuse scale spaces into the diffusion process by formulating a family of diffusion models with generalized linear degradations and practical implementations. Using downsampling as the degradation yields our proposed Scale Space Diffusion. To support Scale Space Diffusion, we introduce Flexi-UNet, a UNet variant that performs resolution-preserving and resolution-increasing denoising using only the necessary parts of the network. We evaluate our framework on CelebA and ImageNet and analyze its scaling behavior across resolutions and network depths. Our project website ( https://prateksha.github.io/projects/scale-space-diffusion/ ) is available publicly.

💡 Research Summary

The paper investigates a deep connection between diffusion models and classic scale‑space theory, showing that the information hierarchy present across diffusion timesteps mirrors that of a Gaussian pyramid. By analytically quantifying information loss in both processes—using a Gaussian‑CDF‑based metric for diffusion and a simple area‑proportional model for scale—the authors demonstrate that highly noisy diffusion states contain essentially the same amount of information as heavily down‑sampled images. This observation motivates a unified formulation that augments the standard diffusion forward process with a linear degradation operator (M_t) (e.g., blurring or down‑sampling) in addition to additive Gaussian noise.

Mathematically, the forward transition becomes (x_t = M_t x_{t-1} + \eta_t) with (\eta_t \sim \mathcal N(0,\Sigma_{t|t-1})). By enforcing isotropic marginal covariances ((\Sigma_t = \sigma_t^2 I)) and deriving conditions for positive‑semidefinite transition covariances, the authors obtain a closed‑form expression for the marginal distribution (q(x_t|x_0) = \mathcal N(M_{1:t} x_0, \sigma_t^2 I)). Crucially, when (M_t) is a down‑sampling operator, (M_{1:t}) corresponds to successive reductions in resolution, meaning that at large (t) the latent is effectively a low‑resolution version of the original image plus noise. The posterior (q(x_{t-1}|x_t,x_0)) is also derived analytically, yielding a simple update rule (Eq. 6) that generalises the DDPM reverse step while accounting for the non‑isotropic effect of down‑sampling.

To make this “Scale Space Diffusion” (SSD) practical, the authors introduce Flexi‑UNet, a variant of the classic UNet that dynamically activates only the network levels required for the current resolution. Small‑scale inputs bypass deep encoder/decoder blocks, saving computation, while up‑sampling steps re‑activate higher‑resolution pathways as needed. This design enables a single model to handle both resolution‑preserving diffusion steps and resolution‑increasing transitions without the overhead of training multiple separate models.

Empirical evaluation is performed on CelebA (64×64, 128×128, 256×256) and ImageNet‑256. Compared to a baseline DDPM with the same FLOPs, SSD‑Flexi‑UNet reduces training time by roughly 2–3× and sampling time by 3–4×, while achieving competitive Fréchet Inception Distance (FID) scores (only 5–12 % higher than DDPM). Ablation studies show that increasing the number of resolution levels (e.g., 3‑5 levels) yields the best trade‑off between speed and quality, especially for the highest resolution CelebA‑256. The paper also contrasts SSD with prior multi‑scale diffusion approaches such as cascaded diffusion, Matryoshka Diffusion, and Pyramidal Flow Matching, highlighting that SSD integrates scale changes directly into the diffusion Markov chain rather than treating them as post‑hoc up‑sampling steps or separate models. Moreover, unlike UDPM, SSD explicitly handles the non‑isotropic posterior induced by down‑sampling, avoiding the simplifying isotropic covariance assumption.

In summary, the contributions are threefold: (1) a theoretical analysis linking diffusion timesteps to scale‑space resolutions; (2) a generalized linear diffusion framework that accommodates arbitrary linear degradations, with a concrete implementation using down‑sampling (SSD); and (3) the Flexi‑UNet architecture that efficiently realizes SSD by conditionally using network depth. The work demonstrates that incorporating scale‑space concepts into diffusion models yields substantial computational savings while preserving generation quality, opening avenues for further extensions such as more complex degradations, latent‑space variants, or transformer‑based backbones.

Comments & Academic Discussion

Loading comments...

Leave a Comment