Scalable multitask Gaussian processes for complex mechanical systems with functional covariates

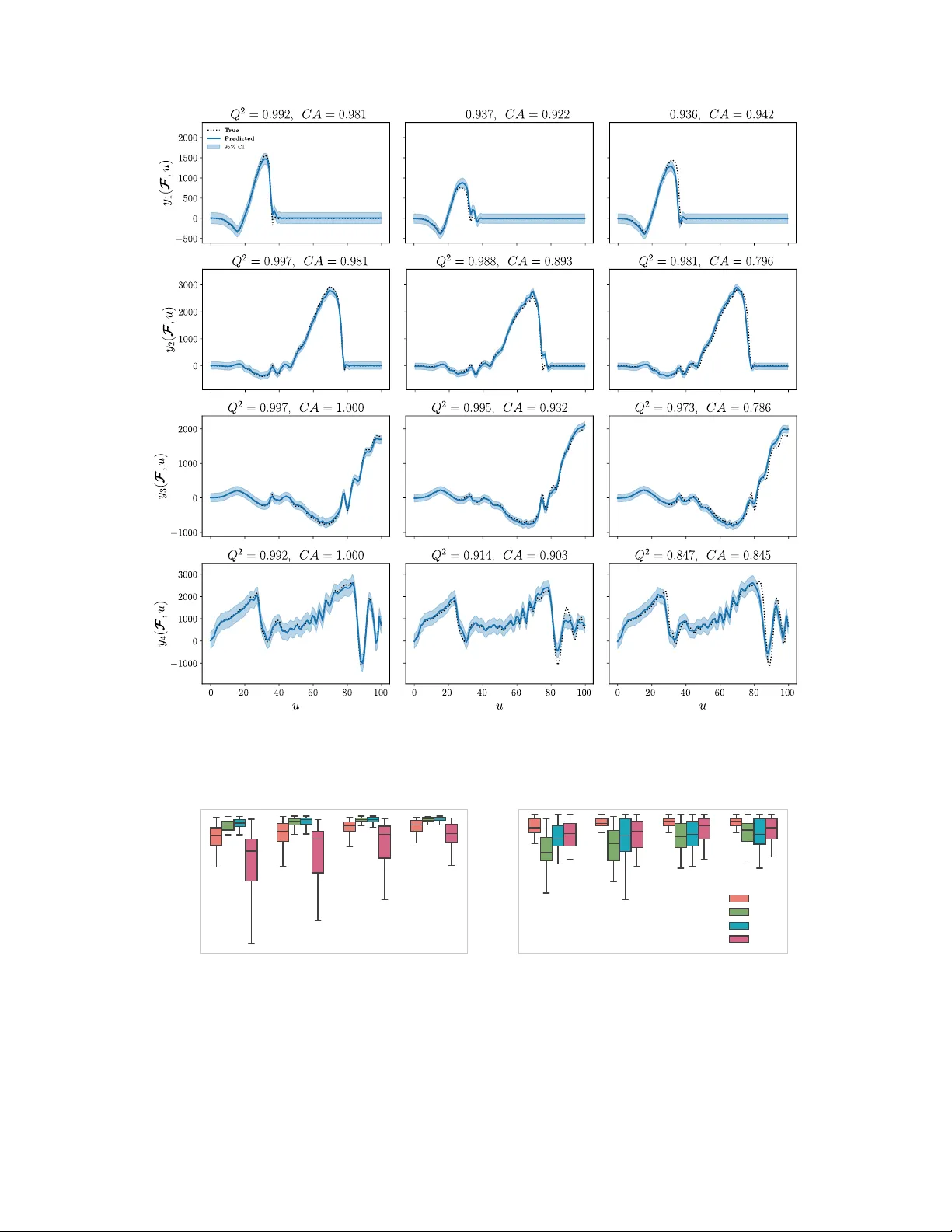

Functional covariates arise in many scientific and engineering applications when model inputs take the form of time-dependent or spatially distributed profiles, such as varying boundary conditions or changing material behaviours. In addition, new pra…

Authors: Razak Christophe Sabi Gninkou, Andrés F. López-Lopera, Franck Massa