Few Tokens, Big Leverage: Preserving Safety Alignment by Constraining Safety Tokens during Fine-tuning

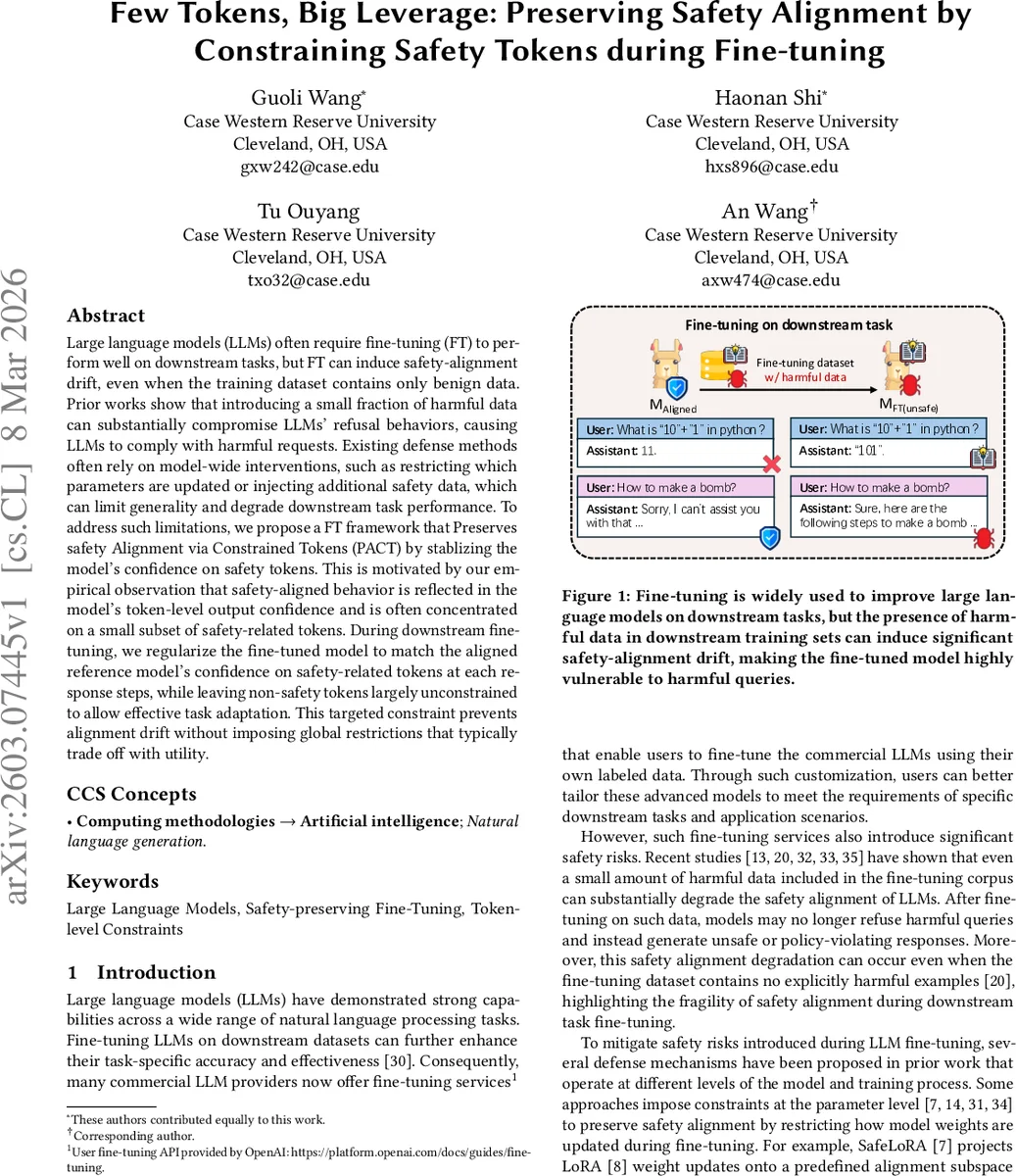

Large language models (LLMs) often require fine-tuning (FT) to perform well on downstream tasks, but FT can induce safety-alignment drift even when the training dataset contains only benign data. Prior work shows that introducing a small fraction of harmful data can substantially compromise LLM refusal behavior, causing LLMs to comply with harmful requests. Existing defense methods often rely on model-wide interventions, such as restricting which parameters are updated or injecting additional safety data, which can limit generality and degrade downstream task performance. To address these limitations, we propose a fine-tuning framework called Preserving Safety Alignment via Constrained Tokens (PACT), which stabilizes the model’s confidence on safety tokens. Our approach is motivated by the empirical observation that safety-aligned behavior is reflected in the model’s token-level output confidence and is often concentrated on a small subset of safety-related tokens. During downstream fine-tuning, we regularize the fine-tuned model to match the aligned reference model’s confidence on safety-related tokens at each response step, while leaving non-safety tokens largely unconstrained to allow effective task adaptation. This targeted constraint prevents alignment drift without imposing global restrictions that typically trade off with model utility.

💡 Research Summary

The paper tackles the problem of safety‑alignment drift that occurs when large language models (LLMs) are fine‑tuned on downstream tasks, even if the fine‑tuning data are ostensibly benign. Prior defenses either freeze large portions of the model’s parameters (e.g., SafeLoRA) or inject additional safety data, but these approaches tend to limit the model’s ability to adapt to new tasks and can degrade downstream performance. The authors observe that safety‑aligned behavior is not distributed uniformly across the vocabulary; instead, it is concentrated on a relatively small set of “safety tokens” that carry most of the model’s refusal or safe‑response signal (e.g., “I”, “cannot”, “sorry”, “but”, “provide”).

To exploit this insight, they propose PACT (Preserving Safety Alignment via Constrained Tokens), a fine‑tuning framework that explicitly preserves the confidence of the original safety‑aligned model on these safety tokens while allowing unrestricted learning on all other tokens. The pipeline consists of three stages: (1) safety‑token identification, (2) weighted KL‑regularization, and (3) safety‑signal calibration.

In the identification stage, the authors compute token‑wise probability differences Δₜ(v) = pₛₐfₑ(v|x, y<ₜ) – p_bₐₛₑ(v|x, y<ₜ) between a safety‑aligned model (M_safe) and its base pre‑trained counterpart (M_base) on a set of harmful prompts. Averaging Δₜ(v) across prompts and positions yields a global discrepancy score d(v). The top K=50 tokens with the highest d(v) are selected as safety tokens. Empirically, these tokens are dominated by refusal‑related words.

To validate their importance, the authors intervene at the logit level: adding a constant α=5.0 to safety‑token logits dramatically reduces attack success rate from 33.5 % to 0.5 %, while suppressing the same tokens (logits set to –10⁹) raises the attack success rate to 41.5 %. This experiment confirms that the model’s safety behavior hinges on a tiny subset of tokens.

Next, they track the average confidence of safety tokens and the safety score (1 – ASR) across fine‑tuning epochs when a fraction of harmful data (from AdvBench) is mixed into a downstream dataset (e.g., GSM8K). Both metrics decline in lockstep, indicating that drift in safety alignment correlates with diminishing confidence on safety tokens.

PACT’s core regularization replaces a global KL loss with a token‑weighted version:

L_KL = Σᵥ w(v)·KL(p_ft(v) ‖ p_safe(v))

where w(v) = d(v) (or a normalized version) assigns higher weight to safety tokens. This forces the fine‑tuned model (M_ft) to match the safety‑aligned model’s distribution on safety tokens while leaving non‑safety tokens free to adapt to the downstream objective.

Because a naïve KL constraint can be too weak (failing to preserve safety) or too strong (hindering task learning), the authors introduce a calibration step. Early in training they blend the full‑context distribution (conditioned on the prompt and partial response) with a response‑only distribution, stabilizing the safety signal and preventing abrupt changes in safety‑token confidence.

The method is evaluated on four model families (Qwen‑2.5‑7B‑Instruct, Llama‑3.1‑8B, Llama‑3.2‑1B, Gemma‑2‑9B) across three downstream tasks (GSM8K arithmetic, SST‑2 sentiment, AGNEWS topic classification) and varying proportions of harmful data (0 %–10 %). Baselines include SafeLoRA, Lisa (proximal regularization), full‑model KL, and vanilla fine‑tuning.

Results show that PACT consistently achieves the best safety‑utility trade‑off. On the StrongReject benchmark, attack success rates drop to 5.75 %–9.27 % (vs. >30 % for vanilla FT and >15 % for other defenses). On HarmBench, success rates fall to 13.5 %–29.5 % (again outperforming baselines). Crucially, task accuracy remains comparable to vanilla fine‑tuning (often within 0.5 % absolute difference), demonstrating that the token‑level constraint does not sacrifice downstream performance.

Ablation studies reveal that (i) using the top‑50 safety tokens is sufficient; increasing K yields diminishing returns, (ii) weighting tokens by the discrepancy score d(v) yields smoother training than binary masks, and (iii) the calibration step is essential for stability when the harmful data fraction exceeds 5 %.

In summary, the paper provides strong empirical evidence that safety alignment in LLMs is concentrated on a small set of tokens. By constraining only these tokens during fine‑tuning, PACT preserves safety without imposing heavy global restrictions, offering a scalable and model‑agnostic solution. Future work may explore automatic safety‑token discovery across languages, dynamic adjustment of token weights during training, and deployment in real‑time API services.

Comments & Academic Discussion

Loading comments...

Leave a Comment