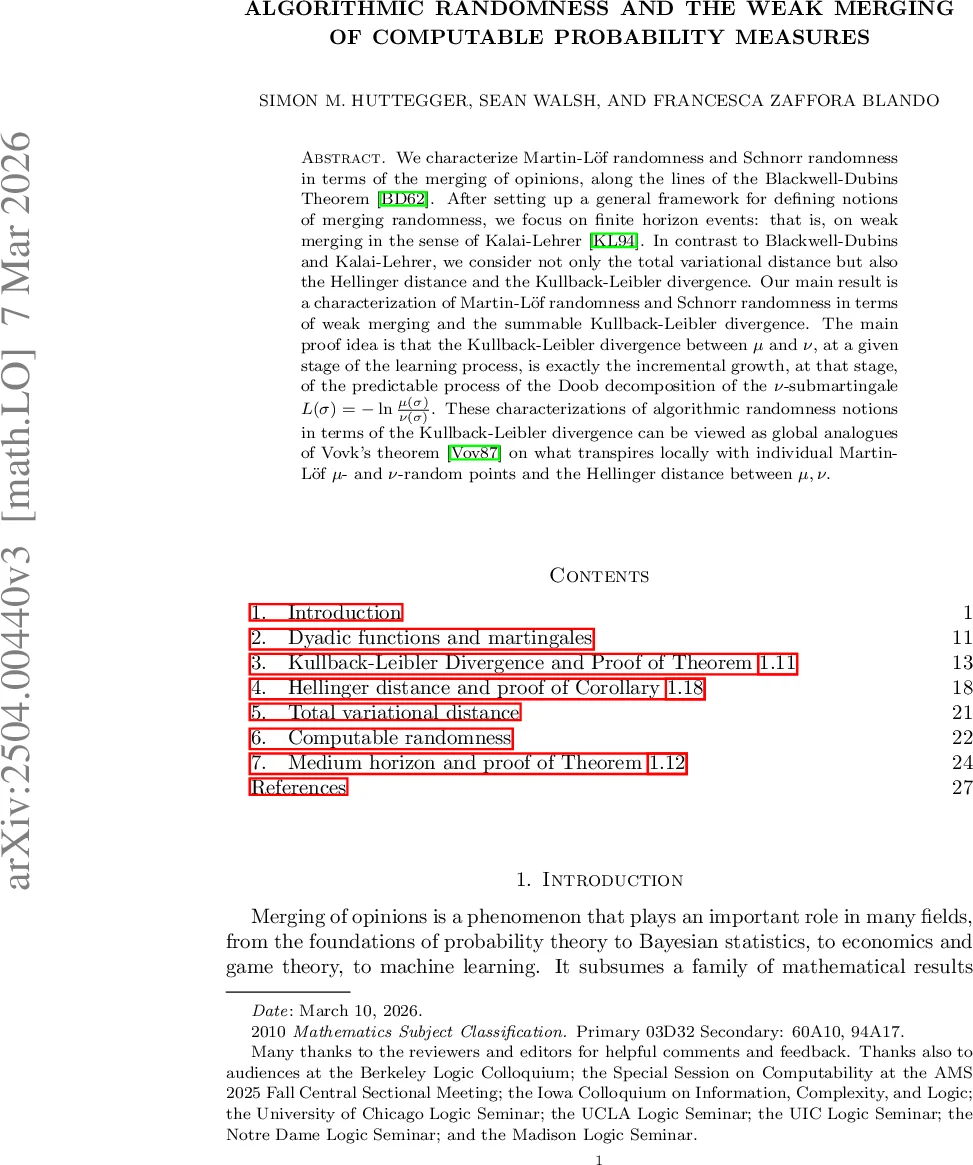

Algorithmic randomness and the weak merging of computable probability measures

We characterize Martin-Löf randomness and Schnorr randomness in terms of the merging of opinions, along the lines of the Blackwell-Dubins Theorem. After setting up a general framework for defining notions of merging randomness, we focus on finite horizon events, that is, on weak merging in the sense of Kalai-Lehrer. In contrast to Blackwell-Dubins and Kalai-Lehrer, we consider not only the total variational distance but also the Hellinger distance and the Kullback-Leibler divergence. Our main result is a characterization of Martin-Löf randomness and Schnorr randomness in terms of weak merging and the summable Kullback-Leibler divergence. The main proof idea is that the Kullback-Leibler divergence between $μ$ and $ν$, at a given stage of the learning process, is exactly the incremental growth, at that stage, of the predictable process of the Doob decomposition of the $ν$-submartingale $L(σ)=-\ln \frac{μ(σ)}{ν(σ)}$. These characterizations of algorithmic randomness notions in terms of the Kullback-Leibler divergence can be viewed as global analogues of Vovk’s theorem on what transpires locally with individual Martin-Löf $μ$- and $ν$-random points and the Hellinger distance between $μ,ν$.
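The identity behind the main proof idea follows from a one-line computation. The sketch below assumes μ(σ), ν(σ) > 0. It writes ν(i | σ) = ν(σi)/ν(σ) for the conditional probability that the next bit is i, and this notation is introduced here rather than taken from the paper.

```latex
\begin{aligned}
L(\sigma) &= -\ln\frac{\mu(\sigma)}{\nu(\sigma)}, \qquad
\nu(i\mid\sigma) = \frac{\nu(\sigma i)}{\nu(\sigma)}, \qquad
\mu(i\mid\sigma) = \frac{\mu(\sigma i)}{\mu(\sigma)}, \\
L(\sigma i) - L(\sigma)
  &= \ln\frac{\nu(\sigma i)}{\nu(\sigma)} - \ln\frac{\mu(\sigma i)}{\mu(\sigma)}
   = \ln\frac{\nu(i\mid\sigma)}{\mu(i\mid\sigma)}, \\
\mathbb{E}_\nu\!\left[\,L(\sigma i) - L(\sigma) \;\middle|\; \sigma\,\right]
  &= \sum_{i\in\{0,1\}} \nu(i\mid\sigma)\,\ln\frac{\nu(i\mid\sigma)}{\mu(i\mid\sigma)}
   = \mathrm{KL}\big(\nu(\cdot\mid\sigma)\,\big\|\,\mu(\cdot\mid\sigma)\big) \;\ge\; 0 .
\end{aligned}
```

Gibbs' inequality gives the final ≥ 0, which is why L is a ν-submartingale. Summing these conditional increments at σ = ω↾0, ω↾1, …, ω↾(n−1) gives the predictable part Aₙ(ω) of its Doob decomposition. Terms with ν(i | σ) = 0 are taken to be 0.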

💡 Research Summary

The paper investigates the relationship between algorithmic randomness and the merging of probabilistic forecasts on Cantor space. It introduces a formal “merging quadruple” with four components: a merging exponent p, a merging relation ⪯ (e.g., absolute continuity, KL‑boundedness), a sequence of σ‑algebras Gₙ (weak merging uses Gₙ = Fₙ₊₁, strong merging uses the full Borel σ‑algebra), and a distance or divergence ρ (total variation T, Hellinger H, or Kullback‑Leibler D). For a computable measure ν and any computable μ with ν ⪯ μ, the random variable ρ_{Gₙ}(ν, μ)(ω) quantifies the distance between the conditional forecasts of ν and μ on Gₙ at stage n.
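For weak merging (Gₙ = Fₙ₊₁), only the forecast for the next bit matters. The minimal Python sketch below makes the stage-n quantities concrete under several assumptions. Measures are given by their values on cylinders, through a hypothetical `Measure` callable from bit strings to probabilities, and i.i.d. Bernoulli measures are used purely as examples. The Hellinger normalization H² = ½∑(√p − √q)² is also an assumption, because conventions differ. `kl(p, q)` computes KL(p ‖ q) with the expectation taken under ν's forecast.

```python
import math
from typing import Callable, Tuple

# Hypothetical representation: a measure on Cantor space is given by its value
# on cylinders, i.e. measure(sigma) = probability that a sequence extends sigma.
Measure = Callable[[str], float]
Forecast = Tuple[float, float]  # (P(next bit = 0), P(next bit = 1))


def bernoulli(theta: float) -> Measure:
    """i.i.d. example measure in which each bit is 1 with probability theta."""
    def m(sigma: str) -> float:
        ones = sigma.count("1")
        return theta ** ones * (1 - theta) ** (len(sigma) - ones)
    return m


def next_bit_forecast(m: Measure, sigma: str) -> Forecast:
    """Conditional forecast of the next bit given prefix sigma (needs m(sigma) > 0)."""
    base = m(sigma)
    return m(sigma + "0") / base, m(sigma + "1") / base


def total_variation(p: Forecast, q: Forecast) -> float:
    return 0.5 * sum(abs(a - b) for a, b in zip(p, q))


def hellinger(p: Forecast, q: Forecast) -> float:
    # Normalised so that 0 <= H <= 1 (an assumed convention).
    return math.sqrt(0.5 * sum((math.sqrt(a) - math.sqrt(b)) ** 2 for a, b in zip(p, q)))


def kl(p: Forecast, q: Forecast) -> float:
    # KL(p || q) in nats; terms with a == 0 contribute 0; q must be > 0 where p > 0.
    return sum(a * math.log(a / b) for a, b in zip(p, q) if a > 0)


def one_step_distances(nu: Measure, mu: Measure, sigma: str) -> dict:
    """T, H and KL between the next-bit forecasts of nu and mu after prefix sigma."""
    p, q = next_bit_forecast(nu, sigma), next_bit_forecast(mu, sigma)
    return {"T": total_variation(p, q), "H": hellinger(p, q), "D": kl(p, q)}
```

For example, `one_step_distances(bernoulli(0.5), bernoulli(0.6), "0110")` compares the forecasts (0.5, 0.5) and (0.4, 0.6). It gives T ≈ 0.1 and D ≈ 0.0204. Because the example measures are i.i.d., these values do not depend on the prefix. For non-i.i.d. measures they vary with ω.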

The main contribution is a pair of characterizations of Martin‑Löf randomness (MLR) and Schnorr randomness (SR) in terms of weak merging and the summability of the KL divergence. Specifically, a sequence ω is ν‑MLR if and only if the series ∑ₙ D_{Fₙ₊₁}(μ | ν)(ω) converges (ℓ¹‑summability) for every computable μ satisfying ν ≪_{kl} μ. Here ν ≪_{kl} μ means supₙ E_ν[ln(ν(ω↾n)/μ(ω↾n))] < ∞, so the KL divergences between ν and μ on Fₙ are uniformly bounded in n. The analogous statement for Schnorr randomness replaces the KL relation with its computable version ν ≪_{klc} μ and requires ℓ²‑summability.
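The series in the main theorem can be tracked numerically along a finite prefix of ω. The sketch below is illustrative only, since finite computations cannot decide randomness or convergence. The measures are hypothetical examples, and `kl_partial_sums` is a name introduced here. The sketch contrasts two choices of μ against ν = Bernoulli(½), with λ = Bernoulli(0.6):

- The mixture μ = ½ν + ½λ satisfies ν ≪_{kl} μ. Because ν/μ ≤ 2, every KL term in that relation is at most ln 2.
- The i.i.d. μ = λ does not satisfy it. Its one-step divergences never shrink, so its partial sums grow linearly.

```python
import math
from typing import Callable, List

Measure = Callable[[str], float]  # hypothetical: the measure's value on a cylinder


def bernoulli(theta: float) -> Measure:
    """i.i.d. example measure in which each bit is 1 with probability theta."""
    def m(sigma: str) -> float:
        ones = sigma.count("1")
        return theta ** ones * (1 - theta) ** (len(sigma) - ones)
    return m


def mixture(m1: Measure, m2: Measure, w: float = 0.5) -> Measure:
    """Convex combination w * m1 + (1 - w) * m2."""
    return lambda sigma: w * m1(sigma) + (1 - w) * m2(sigma)


def one_step_kl(nu: Measure, mu: Measure, sigma: str) -> float:
    """KL between nu's and mu's next-bit forecasts after sigma, expectation under nu."""
    total = 0.0
    for b in "01":
        p = nu(sigma + b) / nu(sigma)
        q = mu(sigma + b) / mu(sigma)
        if p > 0:
            total += p * math.log(p / q)
    return total


def kl_partial_sums(nu: Measure, mu: Measure, omega: str) -> List[float]:
    """S_n = sum over k < n of the stage-k divergence along omega, for n = 0..len(omega).

    Cylinder probabilities are computed directly, so very long prefixes would
    underflow; a few hundred bits are fine for these examples.
    """
    sums = [0.0]
    for k in range(len(omega)):
        sums.append(sums[-1] + one_step_kl(nu, mu, omega[:k]))
    return sums
```

A usage example is `nu = bernoulli(0.5)` and `omega = "01" * 100`. This prefix is a balanced stand-in for a ν-typical prefix, not a random sequence. Along it, `kl_partial_sums(nu, mixture(nu, bernoulli(0.6)), omega)` levels off as the posterior weight on ν tends to 1. By contrast, `kl_partial_sums(nu, bernoulli(0.6), omega)[n]` equals n·KL((½, ½) ‖ (0.4, 0.6)) ≈ 0.0204·n.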

The proof hinges on the ν‑submartingale L(σ) = −ln(μ(σ)/ν(σ)). Its Doob decomposition L = M + A separates a ν‑martingale M from a predictable, nondecreasing process A. The increment of A at stage n is exactly the incremental KL divergence D_{Fₙ₊₁}(μ | ν). The characterization requires ∑ₙ D_{Fₙ₊₁}(μ | ν)(ω) to converge. That condition therefore says that the total predictable increase A_∞(ω) = limₙ Aₙ(ω) of L along ω is finite.
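The Doob-decomposition identity can also be checked numerically. The sketch below uses hypothetical example measures and helper names introduced here. At every node of length below 6, it computes the conditional ν-expectation of the increment of L(σ) = −ln(μ(σ)/ν(σ)) and compares it with the one-step KL divergence. It then splits L along a prefix into A, the running sum of those divergences, and M = L − A. The example ν is a mixture, to show that the identity does not rely on i.i.d. measures.

```python
import itertools
import math
from typing import Callable, List, Tuple

Measure = Callable[[str], float]  # hypothetical: the measure's value on a cylinder


def bernoulli(theta: float) -> Measure:
    def m(sigma: str) -> float:
        ones = sigma.count("1")
        return theta ** ones * (1 - theta) ** (len(sigma) - ones)
    return m


def mixture(m1: Measure, m2: Measure, w: float = 0.5) -> Measure:
    return lambda sigma: w * m1(sigma) + (1 - w) * m2(sigma)


def L(mu: Measure, nu: Measure, sigma: str) -> float:
    """The nu-submartingale L(sigma) = -ln(mu(sigma) / nu(sigma))."""
    return -math.log(mu(sigma) / nu(sigma))


def one_step_kl(nu: Measure, mu: Measure, sigma: str) -> float:
    """KL between the next-bit forecasts of nu and mu after sigma, expectation under nu."""
    total = 0.0
    for b in "01":
        p, q = nu(sigma + b) / nu(sigma), mu(sigma + b) / mu(sigma)
        if p > 0:
            total += p * math.log(p / q)
    return total


def expected_increment(nu: Measure, mu: Measure, sigma: str) -> float:
    """E_nu[L(sigma b) - L(sigma) | sigma], the predictable increment of L at sigma."""
    total = 0.0
    for b in "01":
        p = nu(sigma + b) / nu(sigma)
        if p > 0:
            total += p * (L(mu, nu, sigma + b) - L(mu, nu, sigma))
    return total


def max_discrepancy(nu: Measure, mu: Measure, depth: int = 6) -> float:
    """Largest |expected increment - one-step KL| over all nodes of length < depth."""
    worst = 0.0
    for n in range(depth):
        for bits in itertools.product("01", repeat=n):
            sigma = "".join(bits)
            worst = max(worst, abs(expected_increment(nu, mu, sigma) - one_step_kl(nu, mu, sigma)))
    return worst


def doob_decomposition(nu: Measure, mu: Measure, omega: str) -> Tuple[List[float], List[float], List[float]]:
    """Split L along omega into A (running sum of one-step KL) and M = L - A."""
    Ls = [L(mu, nu, omega[:n]) for n in range(len(omega) + 1)]
    As = [0.0]
    for k in range(len(omega)):
        As.append(As[-1] + one_step_kl(nu, mu, omega[:k]))
    Ms = [l - a for l, a in zip(Ls, As)]
    return Ls, As, Ms
```

With `nu = mixture(bernoulli(0.3), bernoulli(0.8))` and `mu = bernoulli(0.5)`, `max_discrepancy(nu, mu)` should be at the level of floating-point rounding. The check runs over every node, not a sampled path, because the identity is a statement about conditional expectations at each σ.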

The paper also revisits two classical results, the Blackwell‑Dubins theorem (strong merging in total variation) and the Kalai‑Lehrer weak merging theorem. It extends both to the Hellinger distance and the KL divergence. It shows that weak Hellinger merging with ℓ²‑summable distances implies absolute continuity, and that strong merging holds ν‑almost surely for any computable ν.
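A standard inequality, not stated in the summary, links the KL and Hellinger conditions. Under the normalization H² = 1 − ∑√(pᵢqᵢ) (an assumption, since conventions differ), KL(p ‖ q) ≥ 2H(p, q)² holds for every pair of forecasts. This follows from KL ≥ −2 ln ∑√(pᵢqᵢ) together with −ln x ≥ 1 − x. Summed over stages, it gives ∑ₙ Hₙ² ≤ ½ ∑ₙ KLₙ, so summable KL divergences force square-summable Hellinger distances. The minimal Python check below tests the inequality on random next-bit forecasts, and its helper names are hypothetical.

```python
import math
import random
from typing import Sequence


def hellinger_sq(p: Sequence[float], q: Sequence[float]) -> float:
    """Squared Hellinger distance, normalised as 1 - sum(sqrt(p_i * q_i)) (assumed)."""
    return 1.0 - sum(math.sqrt(a * b) for a, b in zip(p, q))


def kl(p: Sequence[float], q: Sequence[float]) -> float:
    """KL(p || q) in nats; requires q_i > 0 wherever p_i > 0."""
    return sum(a * math.log(a / b) for a, b in zip(p, q) if a > 0)


def min_kl_minus_2h2(trials: int = 10_000, seed: int = 0) -> float:
    """Smallest observed KL(p || q) - 2 H(p, q)^2 over random pairs of next-bit forecasts."""
    rng = random.Random(seed)
    worst = math.inf
    for _ in range(trials):
        x, y = rng.uniform(0.01, 0.99), rng.uniform(0.01, 0.99)
        p, q = (1 - x, x), (1 - y, y)
        worst = min(worst, kl(p, q) - 2 * hellinger_sq(p, q))
    return worst
```

A nonnegative result, up to rounding, is consistent with the inequality. The random search only illustrates it and does not prove it.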

Additional sections discuss various merging relations (kl, klc, bd, bdc, comp) and their inclusion diagram, as well as intermediate horizons between weak and strong merging. Strong merging results are deferred to a companion article. Overall, the work bridges algorithmic randomness, martingale theory, and information‑theoretic divergences, providing a unified global perspective on when and how probabilistic forecasts converge on random sequences.
