Multi-modal, Multi-task, Multi-criteria Automatic Evaluation with Vision Language Models

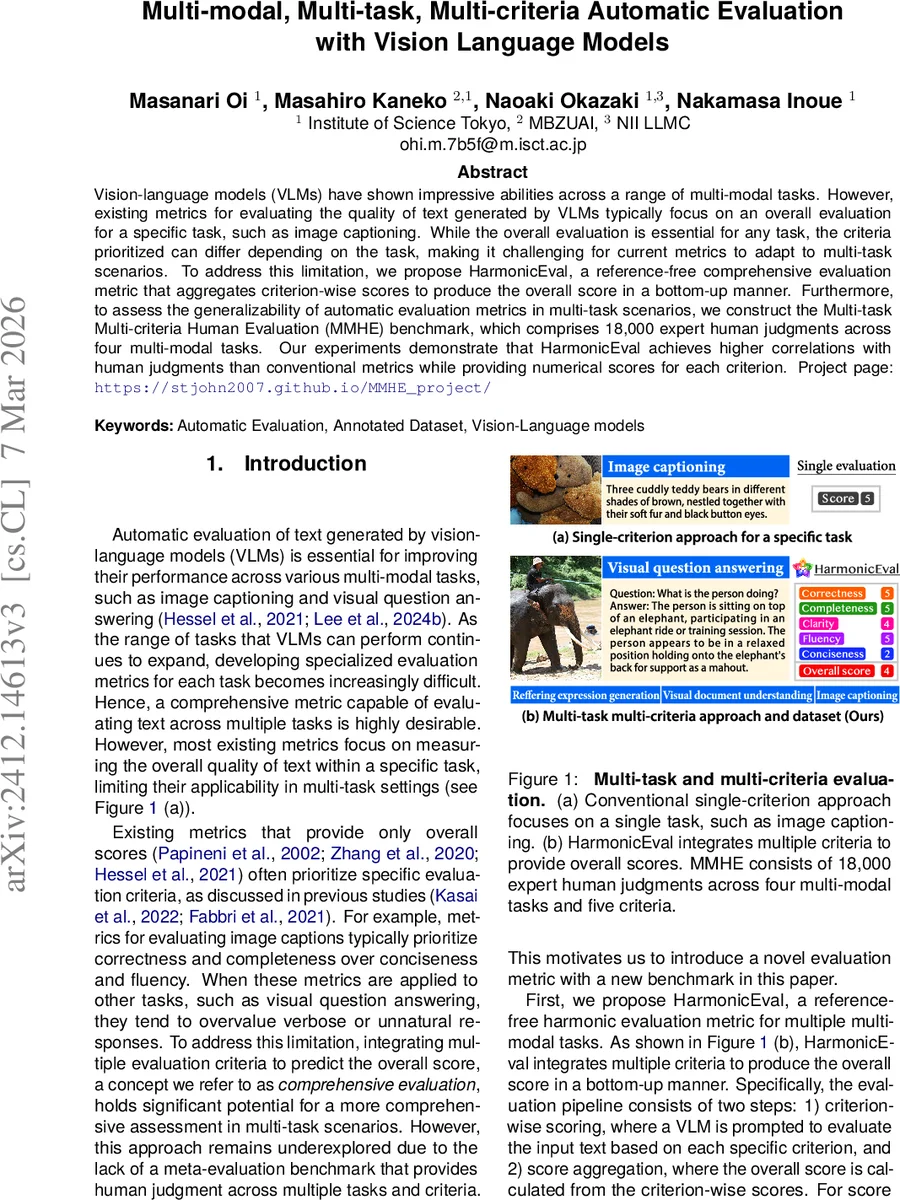

Vision-language models (VLMs) have shown impressive abilities across a range of multi-modal tasks. However, existing metrics for evaluating the quality of text generated by VLMs typically focus on an overall evaluation for a specific task, such as image captioning. While the overall evaluation is essential for any task, the criteria prioritized can differ depending on the task, making it challenging for current metrics to adapt to multi-task scenarios. To address this limitation, we propose HarmonicEval, a reference-free comprehensive evaluation metric that aggregates criterion-wise scores to produce the overall score in a bottom-up manner. Furthermore, to assess the generalizability of automatic evaluation metrics in multi-task scenarios, we construct the Multi-task Multi-criteria Human Evaluation (MMHE) benchmark, which comprises 18,000 expert human judgments across four multi-modal tasks. Our experiments demonstrate that HarmonicEval achieves higher correlations with human judgments than conventional metrics while providing numerical scores for each criterion. Project page: https://stjohn2007.github.io/MMHE_project/

💡 Research Summary

This paper tackles the problem of evaluating the textual outputs of vision‑language models (VLMs) in a way that is both reference‑free and adaptable to multiple tasks and evaluation criteria. Existing automatic metrics either focus on a single task (e.g., image captioning) or provide only an overall score that implicitly emphasizes certain criteria (such as correctness or completeness) while neglecting others. As VLMs are increasingly applied to a diverse set of multimodal tasks—referring expression generation (REG), visual question answering (VQA), visual document understanding (VDU), and image captioning (IC)—a more comprehensive evaluation framework is needed.

HarmonicEval is introduced as a two‑stage pipeline. In the first stage, a VLM is used as an evaluator: for each of five predefined criteria (Correctness, Completeness, Fluency, Conciseness, and Clarity), a task‑specific prompt asks the model to rate the generated text on a five‑point scale. Rather than taking the single most likely rating, the score is smoothed by taking the expectation (first‑order statistic) of the model’s token‑level probability distribution over the rating tokens, yielding a criterion‑wise score $\tilde{s}_c$. In the second stage, the variance (second‑order statistic) of the same distribution is computed; the standard deviation $\sigma_c$ serves as a confidence indicator. HarmonicEval then assigns a weight $w_c$ to each criterion based on a harmonic weighting formula.
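The first- and second-order statistics described above can be illustrated with a minimal Python sketch. It assumes the evaluator's probabilities for the five rating tokens have already been extracted; the function name `criterion_stats` and the example probabilities are hypothetical and not taken from the paper. The weighting step is left out because its formula is not given here.

```python
from math import sqrt

# Five-point rating scale used by the evaluator prompt.
RATINGS = (1, 2, 3, 4, 5)


def criterion_stats(probs):
    """Return (expected score, standard deviation) for one criterion.

    `probs` holds the evaluator's probabilities for the rating tokens
    "1".."5", in that order. They are renormalised here because the
    probability mass on the rating tokens may not sum exactly to 1.
    (Hypothetical helper, for illustration only.)
    """
    if len(probs) != len(RATINGS):
        raise ValueError("expected one probability per rating")
    total = sum(probs)
    if total <= 0:
        raise ValueError("probabilities must have positive mass")
    p = [x / total for x in probs]

    # First-order statistic: the expectation gives the smoothed score.
    mean = sum(r * q for r, q in zip(RATINGS, p))
    # Second-order statistic: the variance around that expectation.
    var = sum(q * (r - mean) ** 2 for r, q in zip(RATINGS, p))
    return mean, sqrt(var)


if __name__ == "__main__":
    # Made-up probabilities for two criteria, purely illustrative.
    example = {
        "Correctness": [0.02, 0.03, 0.10, 0.35, 0.50],
        "Conciseness": [0.20, 0.20, 0.20, 0.20, 0.20],
    }
    for name, probs in example.items():
        score, sigma = criterion_stats(probs)
        print(f"{name}: score={score:.2f}, sigma={sigma:.2f}")
```

A peaked distribution gives a small standard deviation (the evaluator is confident), while a flat distribution gives a large one, which is the signal the second stage uses when weighting criteria.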
