EffectMaker: Unifying Reasoning and Generation for Customized Visual Effect Creation

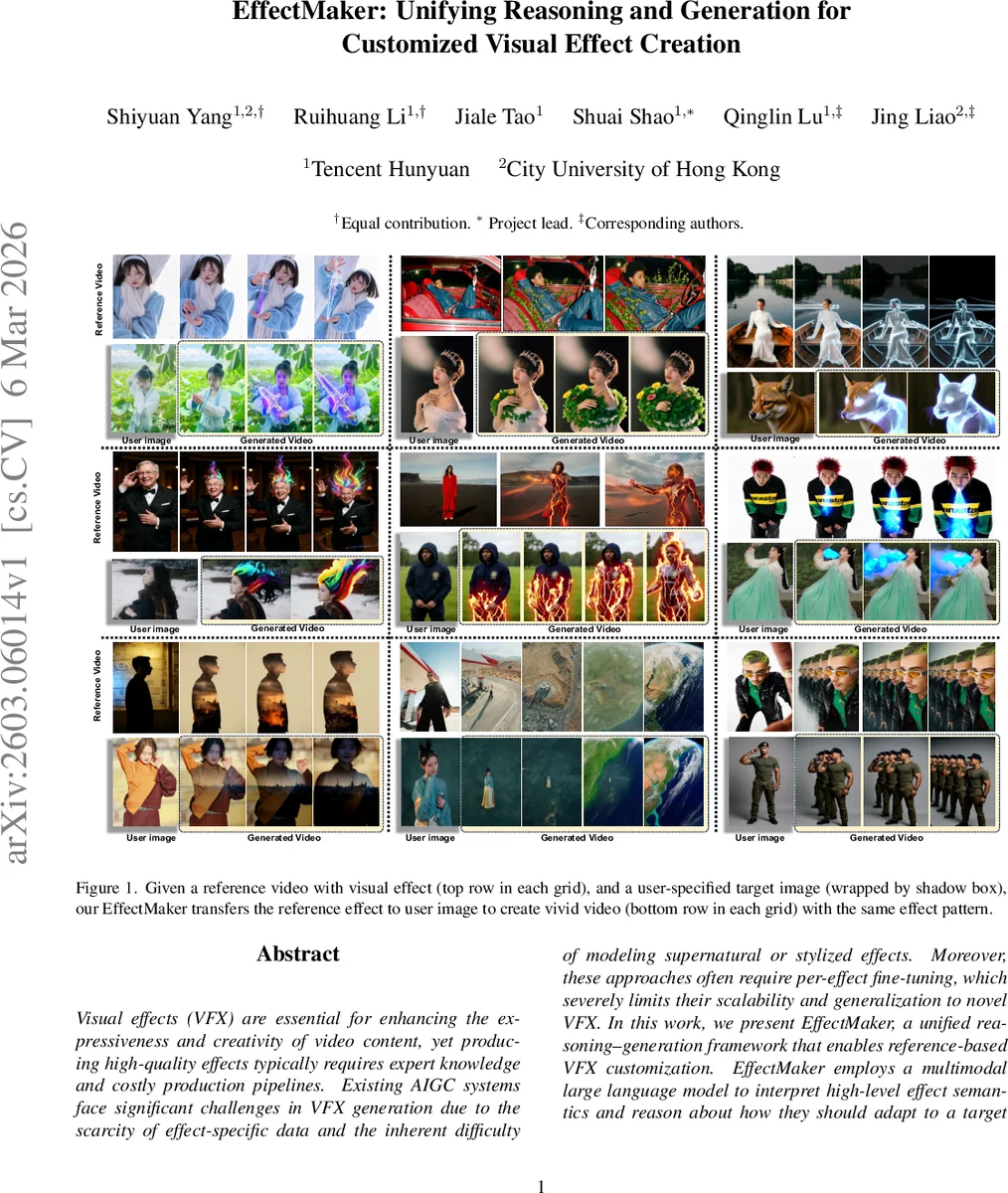

Visual effects (VFX) are essential for enhancing the expressiveness and creativity of video content, yet producing high-quality effects typically requires expert knowledge and costly production pipelines. Existing AIGC systems face significant challenges in VFX generation due to the scarcity of effect-specific data and the inherent difficulty of modeling supernatural or stylized effects. Moreover, these approaches often require per-effect fine-tuning, which severely limits their scalability and generalization to novel VFX. In this work, we present EffectMaker, a unified reasoning-generation framework that enables reference-based VFX customization. EffectMaker employs a multimodal large language model to interpret high-level effect semantics and reason about how they should adapt to a target subject, while a diffusion transformer leverages in-context learning to capture fine-grained visual cues from reference videos. These two components form a semantic-visual dual-path guidance mechanism that enables accurate, controllable, and effect-consistent synthesis without per-effect fine-tuning. Furthermore, we construct EffectData, the largest high-quality synthetic dataset containing 130k videos across 3k VFX categories, to improve generalization and scalability. Experiments show that EffectMaker achieves superior visual quality and effect consistency over state-of-the-art baselines, offering a scalable and flexible paradigm for customized VFX generation. Project page: https://effectmaker.github.io

💡 Research Summary

EffectMaker addresses the long‑standing challenge of generating high‑quality visual effects (VFX) without the need for costly expert pipelines or per‑effect fine‑tuning. The authors observe that existing generative AI models excel at realistic video synthesis but struggle with supernatural, stylized, or highly abstract effects, largely because VFX data are scarce and out‑of‑distribution. Moreover, prior solutions either rely on small closed‑set datasets or require a separate LoRA (Low‑Rank Adaptation) model for each effect, which does not scale to the thousands of effect types used in modern media.

To overcome these limitations, EffectMaker combines two complementary modules: a multimodal large language model (MLLM) for high‑level semantic understanding and reasoning, and a diffusion transformer (DiT) for fine‑grained visual generation. The MLLM (initialized from Qwen3‑VL‑8B) receives a system prompt, user instruction, the reference VFX video, and the target image. It first extracts a high‑level description of the effect, then reasons about how the effect should be adapted to the target subject, and finally produces a textual plan describing the expected appearance after transfer. From this process the authors extract two kinds of features: (1) semantic‑understanding features from the final hidden states (rich multimodal embeddings) and (2) semantic‑reasoning features from the auto‑regressive token sequence (capturing the model’s internal reasoning).

The DiT backbone (Wan2.2‑TI2V‑5B) is used as an image‑to‑video generator. The authors introduce a dual‑conditioning strategy: semantic conditioning via decoupled cross‑attention and visual conditioning via in‑context learning. For semantic conditioning, the reasoning features are encoded with DiT’s native text encoder and injected through a standard cross‑attention layer, while the understanding features are processed by an additional cross‑attention branch with separate key/value projections. The outputs of both branches are summed, preserving modality‑specific information while providing a unified guidance signal. For visual conditioning, the reference video latent is concatenated with the target latent along the token dimension, and a dual‑stream self‑attention mechanism processes them with separate query, key, and value projections for each stream. This design allows the model to attend across reference and target tokens without mixing their internal representations. A biased 3‑D Rotary Positional Embedding (RoPE) further separates the temporal positional encodings of reference and target streams, preventing interference.

A critical contribution is EffectData, a synthetic dataset containing 130 k videos across 3 k effect categories—an order of magnitude larger than prior VFX datasets. Each video is paired with a label, a natural‑language caption, and an editing instruction, providing the rich supervision needed for both the MLLM and DiT components. The dataset is generated through a pipeline that first collects subject images (human and animal portraits), applies a library of effect generators, and annotates each result automatically.

Experiments compare EffectMaker against state‑of‑the‑art text‑to‑video models, LoRA‑based VFX creators, and recent reference‑based baselines (Video‑as‑Prompt, VFXMaster). Quantitative metrics (FID, CLIP‑Score) and user studies show that EffectMaker consistently yields higher visual fidelity and stronger effect consistency, especially when the reference and target scenes differ significantly in shape or background. Ablation studies confirm that both the semantic‑visual dual‑path guidance and the dual‑stream attention are essential for performance gains.

In summary, the paper’s main contributions are: (1) a reference‑based VFX creation framework that unifies multimodal reasoning and controllable generation, eliminating per‑effect fine‑tuning; (2) a novel dual‑path guidance mechanism that fuses high‑level semantic reasoning with low‑level visual cues; and (3) the largest publicly released VFX dataset to date, EffectData, which will facilitate future research in effect generation and editing. Limitations include the current focus on single‑subject, single‑effect transfer and the lack of real‑world video evaluations; future work may explore multi‑effect compositing, real‑time interaction, and broader domain adaptation.

Comments & Academic Discussion

Loading comments...

Leave a Comment