Layer-wise Instance Binding for Regional and Occlusion Control in Text-to-Image Diffusion Transformers

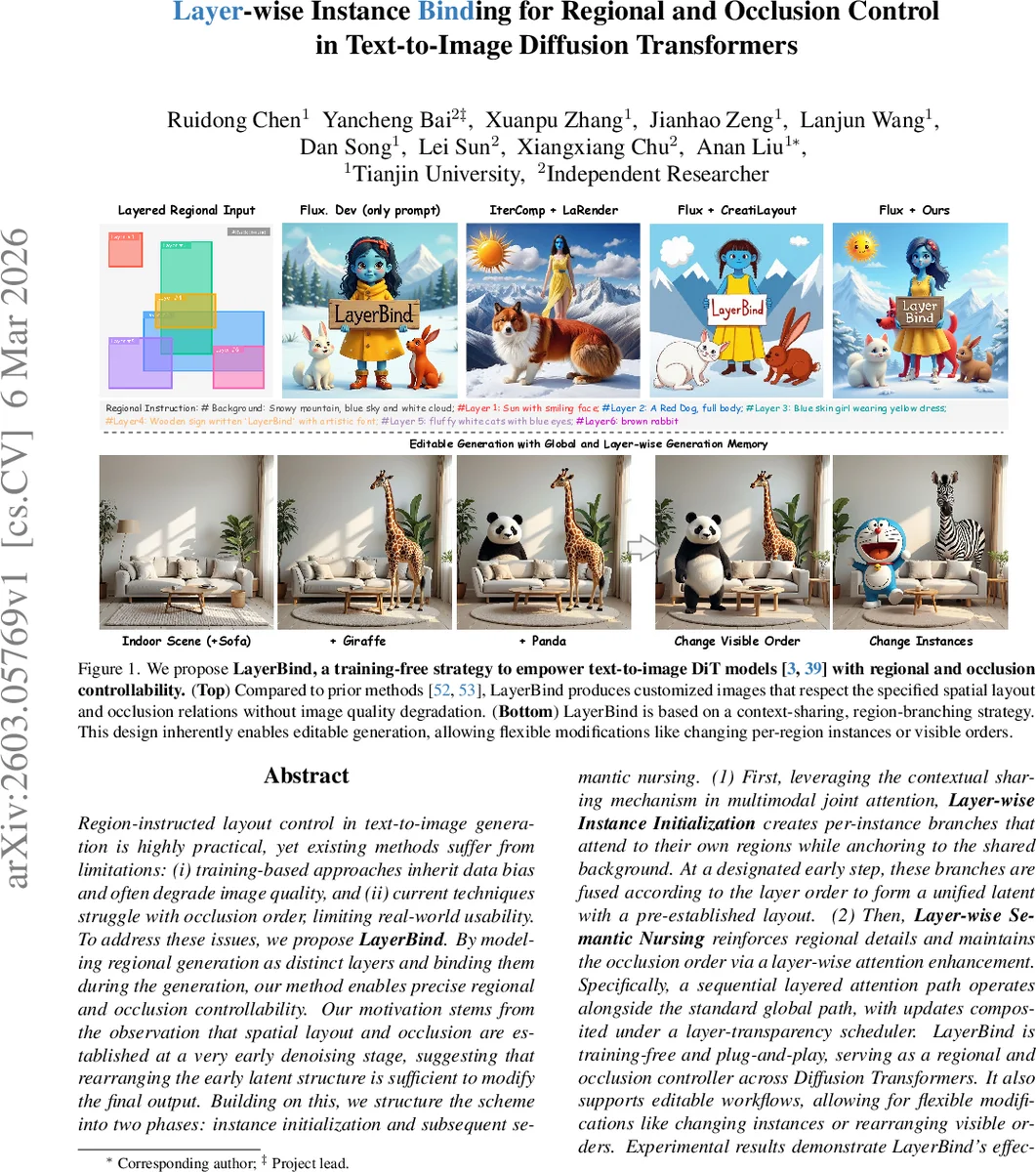

Region-instructed layout control in text-to-image generation is highly practical, yet existing methods suffer from limitations: (i) training-based approaches inherit data bias and often degrade image quality, and (ii) current techniques struggle with occlusion order, limiting real-world usability. To address these issues, we propose LayerBind. By modeling regional generation as distinct layers and binding them during the generation, our method enables precise regional and occlusion controllability. Our motivation stems from the observation that spatial layout and occlusion are established at a very early denoising stage, suggesting that rearranging the early latent structure is sufficient to modify the final output. Building on this, we structure the scheme into two phases: instance initialization and subsequent semantic nursing. (1) First, leveraging the contextual sharing mechanism in multimodal joint attention, Layer-wise Instance Initialization creates per-instance branches that attend to their own regions while anchoring to the shared background. At a designated early step, these branches are fused according to the layer order to form a unified latent with a pre-established layout. (2) Then, Layer-wise Semantic Nursing reinforces regional details and maintains the occlusion order via a layer-wise attention enhancement. Specifically, a sequential layered attention path operates alongside the standard global path, with updates composited under a layer-transparency scheduler. LayerBind is training-free and plug-and-play, serving as a regional and occlusion controller across Diffusion Transformers. Beyond generation, it natively supports editable workflows, allowing for flexible modifications like changing instances or rearranging visible orders. Both qualitative and quantitative results demonstrate LayerBind’s effectiveness, highlighting its strong potential for creative applications.

💡 Research Summary

LayerBind addresses the practical need for precise region‑guided layout and occlusion control in text‑to‑image diffusion models, specifically targeting Diffusion Transformers (DiTs). Existing approaches either fine‑tune the model or add adapters, which inherit dataset bias and often degrade visual fidelity, or they use training‑free regional prompting that preserves quality but cannot enforce occlusion order, leading to concept blending. Observing that the spatial arrangement of objects is largely determined in the very early denoising steps of a diffusion process, the authors propose a two‑stage, training‑free controller that aligns with the intrinsic dynamics of DiTs.

In the first stage, Layer‑wise Instance Initialization, the method creates a separate token branch for each user‑specified region (layer). At the initial diffusion timestep, the tokens corresponding to a region’s spatial cue are copied from the global latent to form an instance branch. Using the joint‑attention framework of DiTs, a specialized “Contextual Attention” operation updates each branch by attending to (i) the shared background image tokens (all tokens except those belonging to the current region) and (ii) the region‑specific textual prompt. This bidirectional binding allows each instance to incorporate its semantic description while staying grounded in the common background context, preserving global coherence. After a short early‑denoising interval (e.g., the first 10 % of steps), all instance branches are fused into a single latent according to a user‑defined layer order; the order directly encodes the occlusion hierarchy (farther layers are merged first, nearer layers later).

The second stage, Layer‑wise Semantic Nursing, refines the fused latent throughout the remaining diffusion steps. Each DiT attention block now runs two parallel paths: the standard global attention path and a sequential per‑layer contextual attention path. For each layer, the same contextual attention update is applied, but a Layer‑Transparency Scheduler modulates the strength of these updates over time. Early in the nursing phase the scheduler assigns high transparency, allowing strong per‑layer corrections; later it gradually reduces the influence, ensuring that the layout and occlusion established in the first stage remain stable while fine details (textures, lighting, color) are enhanced.

Key technical contributions include:

- Contextual Attention – a lightweight adaptation of joint attention that selectively masks queries, keys, and values to enable independent region updates without breaking the shared background context.

- Early‑stage latent rearrangement – leveraging the ODE‑based diffusion trajectory, a simple reordering of latent tokens at an early timestep deterministically propagates through the entire generation, providing an efficient control lever.

- Layer‑Transparency Scheduler – a time‑dependent scalar that balances per‑layer refinement against global consistency, preventing over‑correction that could disturb the established occlusion order.

- Plug‑and‑play design – LayerBind requires no additional training; it can be inserted into any pre‑trained DiT (e.g., FLUX, Stable Diffusion 2‑DiT) and operates purely at inference time.

Experiments on benchmark layout datasets (COCO‑Layout, ADE20K‑Layout) demonstrate that LayerBind achieves higher Intersection‑over‑Union for region placement and significantly better occlusion‑order accuracy compared to both training‑based adapters and prior training‑free methods. Image quality metrics (FID, PSNR, SSIM) remain on par with the original DiT, confirming that the controller does not sacrifice fidelity. Human preference studies show a strong preference for LayerBind outputs, especially in scenes with complex overlapping objects where competing methods often produce blended or misplaced elements.

An additional advantage is editable generation: because the early latent encodes a layered structure, users can later add, remove, or reorder layers without re‑running the entire pipeline, enabling interactive design workflows.

Limitations include increased memory consumption proportional to the number of layers and challenges with semi‑transparent or reflective occlusions, which the authors suggest could be addressed by integrating depth or neural‑radiance‑field cues in future work.

In summary, LayerBind provides a novel, training‑free mechanism that aligns region‑wise instance initialization with the early diffusion dynamics of DiTs and reinforces those structures via layer‑wise attention during later denoising. This yields high‑quality, layout‑accurate, and occlusion‑aware image synthesis while preserving the flexibility and speed of pre‑trained diffusion models.

Comments & Academic Discussion

Loading comments...

Leave a Comment