K-Gen: A Multimodal Language-Conditioned Approach for Interpretable Keypoint-Guided Trajectory Generation

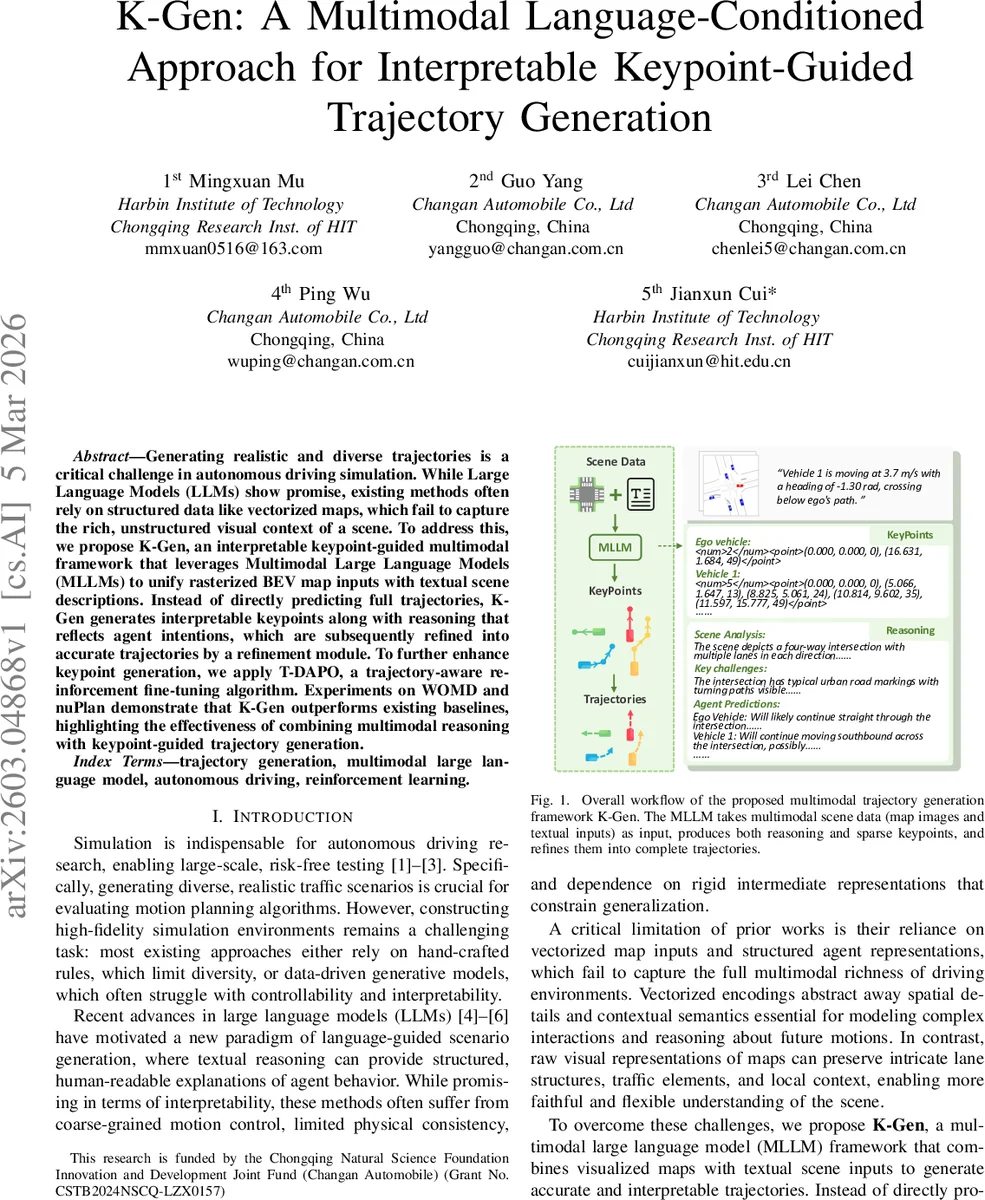

Generating realistic and diverse trajectories is a critical challenge in autonomous driving simulation. While Large Language Models (LLMs) show promise, existing methods often rely on structured data like vectorized maps, which fail to capture the rich, unstructured visual context of a scene. To address this, we propose K-Gen, an interpretable keypoint-guided multimodal framework that leverages Multimodal Large Language Models (MLLMs) to unify rasterized BEV map inputs with textual scene descriptions. Instead of directly predicting full trajectories, K-Gen generates interpretable keypoints along with reasoning that reflects agent intentions, which are subsequently refined into accurate trajectories by a refinement module. To further enhance keypoint generation, we apply T-DAPO, a trajectory-aware reinforcement fine-tuning algorithm. Experiments on WOMD and nuPlan demonstrate that K-Gen outperforms existing baselines, highlighting the effectiveness of combining multimodal reasoning with keypoint-guided trajectory generation.

💡 Research Summary

K‑Gen introduces a novel multimodal framework for generating realistic and diverse vehicle trajectories in autonomous driving simulation. Unlike prior works that rely on vectorized map representations and structured agent data, K‑Gen feeds rasterized bird‑eye‑view (BEV) map images together with natural‑language scene descriptions into a multimodal large language model (MLLM). The MLLM simultaneously produces chain‑of‑thought (CoT) reasoning and a sparse set of keypoints that encode the agent’s intended motion.

Keypoints are extracted using two complementary criteria: (1) geometric simplification via the Douglas‑Peucker algorithm to capture high‑curvature segments (K_g) and (2) dynamic events where the velocity change exceeds a threshold (K_v). The union K = K_g ∪ K_v preserves both shape and kinematic information. These keypoints are linearly interpolated to obtain a coarse trajectory (˜Y), which is then refined by the TrajRefiner module. TrajRefiner is a transformer‑based residual network that takes historical trajectories, current agent states, and the keypoints as inputs, applies cross‑attention, and predicts a residual correction ΔY. The final trajectory is ˆY = ˜Y + ΔY. Training of TrajRefiner combines a motion loss (position MSE), a kinematic consistency loss (angle and speed MSE), and a final‑point loss (endpoint MSE) to ensure physical feasibility.

The learning pipeline for the MLLM consists of two stages. First, supervised fine‑tuning (SFT) aligns the model with generated CoT and keypoint annotations. These annotations are produced by prompting Claude 3.7 Sonnet to analyze each scenario from three perspectives: road geometry, collision risk, and intention prediction. The model is trained to maximize the conditional likelihood of the concatenated reasoning and keypoint token sequence.

Second, reinforcement fine‑tuning (RFT) employs the proposed Trajectory‑aware Decoupled Clip and Dynamic Sampling Policy Optimization (T‑DAPO). T‑DAPO builds on Direct Preference Optimization (DPO) but introduces trajectory‑specific rewards: (a) an accuracy reward that exponentially penalizes average displacement error (ADE) and final displacement error (FDE), (b) a CoT length reward that discourages overly verbose reasoning, and (c) a format reward that checks for correct tag ordering (

Experiments are conducted on two large‑scale datasets: the Waymo Open Motion Dataset (WOMD) and nuPlan. Using InternVL‑3‑8B as the backbone, K‑Gen achieves mADE = 0.915, mFDE = 2.422, and scenario collision rate (SCR) = 0.006 on WOMD, outperforming prior language‑guided methods such as LCTGen and InteractTraj, as well as larger vision‑language models (InternVL‑2.5/3, Qwen‑VL). On nuPlan, K‑Gen records mADE = 0.591, mFDE = 1.478, SCR = 0.027, again surpassing baselines. The approach scales with model size, but the keypoint‑guided design yields a larger gain than raw model capacity alone. Inference runs at 1.63 seconds per 5‑second scenario (50 frames) on a single NVIDIA A100, demonstrating practical feasibility for simulation pipelines.

Key contributions of K‑Gen are: (1) a multimodal MLLM that fuses raster map imagery and textual context, enabling richer scene understanding; (2) a two‑stage generation process that first produces interpretable keypoints guided by CoT reasoning and then refines them into full trajectories; (3) the T‑DAPO reinforcement algorithm that emphasizes difficult samples and balances accuracy, reasoning brevity, and output format; and (4) extensive empirical validation showing superior trajectory fidelity and safety compared to state‑of‑the‑art methods. By integrating interpretability, multimodal perception, and targeted reinforcement learning, K‑Gen sets a new direction for controllable, safe, and explainable trajectory generation in autonomous driving simulation.

Comments & Academic Discussion

Loading comments...

Leave a Comment