A Large-Scale Probing Analysis of Speaker-Specific Attributes in Self-Supervised Speech Representations

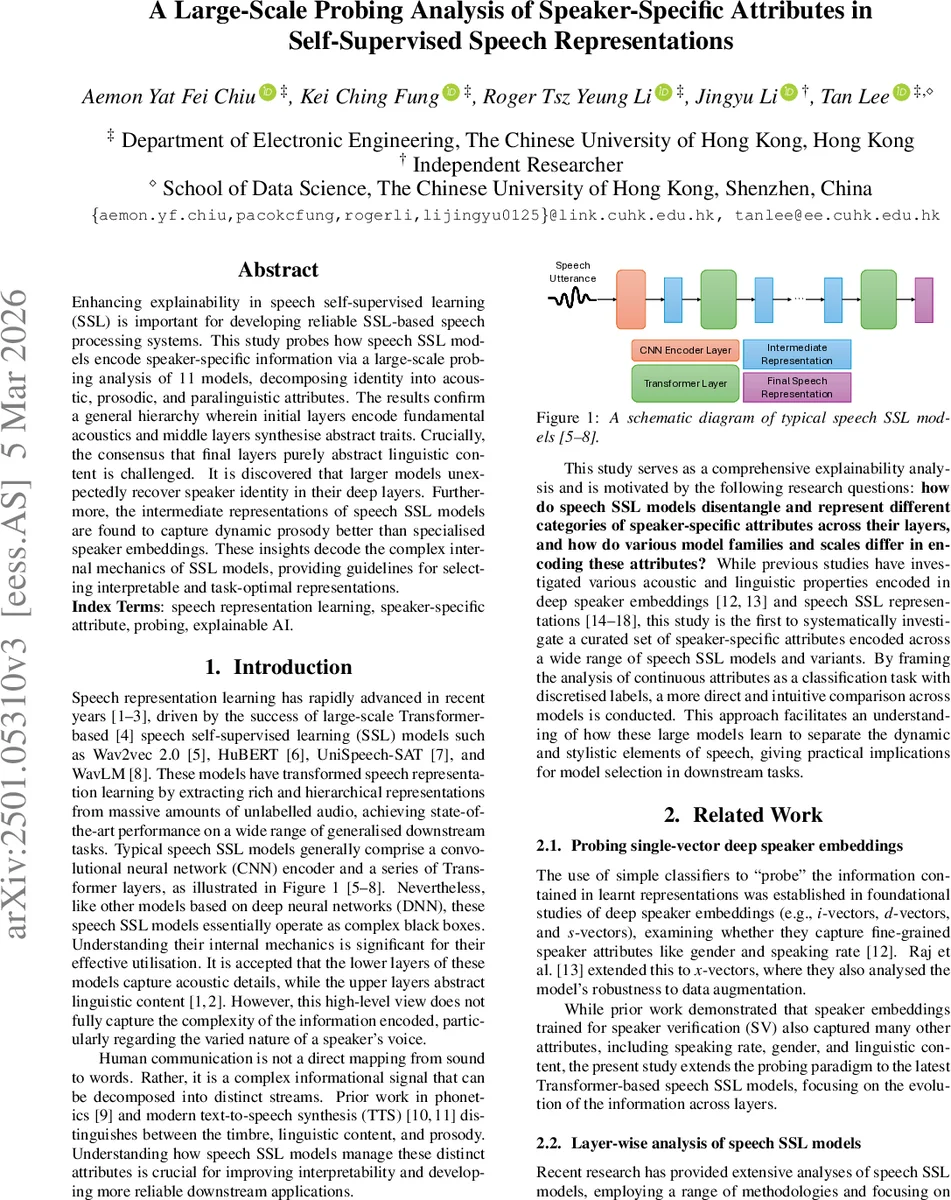

Enhancing explainability in speech self-supervised learning (SSL) is important for developing reliable SSL-based speech processing systems. This study investigates how speech SSL models encode speaker-specific information through a large-scale probing analysis of 11 models, decomposing speaker identity into acoustic, prosodic, and paralinguistic attributes. The results confirm a general hierarchy in which initial layers encode fundamental acoustics and middle layers synthesise abstract traits. Crucially, the findings challenge the consensus that final layers purely abstract linguistic content: larger models unexpectedly recover speaker identity in their deep layers. Furthermore, the intermediate representations of speech SSL models are found to capture dynamic prosody better than specialised speaker embeddings. These insights clarify the internal mechanics of SSL models and provide guidelines for selecting interpretable, task-optimal representations.

💡 Research Summary

This paper conducts a large-scale probing study of how modern self-supervised learning (SSL) speech models encode speaker-specific attributes. Eleven pretrained models from four families (Wav2vec 2.0, HuBERT, UniSpeech-SAT, and WavLM) are examined in base, base-plus, large, and extra-large configurations where available. The authors decompose speaker identity into five attributes: gender (a stable timbre cue); pitch, tempo, and energy (dynamic prosodic cues); and emotion (a paralinguistic cue). Using the TextrolSpeech corpus (≈330 h, 1,327 speakers), they label each utterance for these attributes: pitch, tempo, and energy are discretized into high/normal/low, while gender and emotion are manually annotated.
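The summary does not say how the high/normal/low thresholds were chosen, and TextrolSpeech's own labeling procedure may differ. The sketch below is minimal and rests on one assumption: corpus-level tercile cut points over a scalar per-utterance measurement, such as mean F0 for pitch. `discretize_terciles` is a hypothetical helper, not part of any released code.

```python
from statistics import quantiles


def discretize_terciles(values):
    """Map scalar per-utterance measurements (e.g. mean F0 in Hz) to
    'low' / 'normal' / 'high' labels.

    Hypothetical helper: the tercile rule is an illustrative assumption,
    not the labeling procedure used by the paper or by TextrolSpeech.
    """
    # Two cut points that split the (internally sorted) data into thirds.
    low_cut, high_cut = quantiles(values, n=3)
    labels = []
    for v in values:
        if v < low_cut:
            labels.append("low")
        elif v > high_cut:
            labels.append("high")
        else:
            labels.append("normal")  # values on a cut point count as normal
    return labels
```

For instance, `discretize_terciles([100, 110, 120, 200, 210, 220, 300, 310, 320])` returns three `"low"`, three `"normal"`, and three `"high"` labels, in input order.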

Probing follows the classic “simple classifier” paradigm. The hidden states of each layer are averaged over frames and fed to a single-hidden-layer MLP (500 units, ReLU) trained with cross-entropy loss. The probe is deliberately lightweight, so high classification accuracy can be attributed to information already encoded in the representation rather than to the capacity of the classifier.
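The layer-wise protocol can be sketched in NumPy. Only the probe architecture follows the description above: one hidden layer of 500 ReLU units trained with cross-entropy. Everything else is an illustrative assumption rather than the paper's reported setup:

- hidden states are already extracted as one `(num_layers, num_frames, dim)` array per utterance;
- the probe is trained with full-batch gradient descent, using the learning rate and epoch count shown;
- inputs are standardized;
- the data uses an 80/20 train/test split.

```python
import numpy as np


def mean_pool(hidden_states):
    """Frame-average one utterance: (num_layers, num_frames, dim) -> (num_layers, dim)."""
    return hidden_states.mean(axis=1)


class MLPProbe:
    """One-hidden-layer MLP probe (ReLU) trained with softmax cross-entropy.

    Full-batch gradient descent, lr and epochs are illustrative assumptions.
    """

    def __init__(self, in_dim, num_classes, hidden=500, lr=0.01, epochs=500, seed=0):
        rng = np.random.default_rng(seed)
        self.W1 = rng.normal(0.0, np.sqrt(2.0 / in_dim), (in_dim, hidden))
        self.b1 = np.zeros(hidden)
        self.W2 = rng.normal(0.0, np.sqrt(1.0 / hidden), (hidden, num_classes))
        self.b2 = np.zeros(num_classes)
        self.num_classes, self.lr, self.epochs = num_classes, lr, epochs

    def _forward(self, X):
        H = np.maximum(0.0, X @ self.W1 + self.b1)  # ReLU hidden layer
        return H, H @ self.W2 + self.b2              # hidden activations, logits

    def fit(self, X, y):
        Y = np.eye(self.num_classes)[y]  # one-hot targets
        n = X.shape[0]
        for _ in range(self.epochs):
            H, logits = self._forward(X)
            logits -= logits.max(axis=1, keepdims=True)  # numerical stability
            P = np.exp(logits)
            P /= P.sum(axis=1, keepdims=True)            # softmax probabilities
            d_logits = (P - Y) / n                        # grad of mean cross-entropy
            d_H = d_logits @ self.W2.T
            d_H[H <= 0] = 0.0                             # backprop through ReLU
            self.W2 -= self.lr * (H.T @ d_logits)
            self.b2 -= self.lr * d_logits.sum(axis=0)
            self.W1 -= self.lr * (X.T @ d_H)
            self.b1 -= self.lr * d_H.sum(axis=0)
        return self

    def predict(self, X):
        return self._forward(X)[1].argmax(axis=1)


def probe_all_layers(features, labels, num_classes, train_frac=0.8, seed=0):
    """Train one probe per layer and return the held-out accuracy of each.

    features: (num_utts, num_layers, dim) frame-averaged vectors; labels: int array.
    """
    rng = np.random.default_rng(seed)
    order = rng.permutation(len(labels))
    cut = int(train_frac * len(labels))
    train, test = order[:cut], order[cut:]
    accuracies = []
    for layer in range(features.shape[1]):
        X = features[:, layer, :]
        mu, sd = X[train].mean(axis=0), X[train].std(axis=0) + 1e-8
        X = (X - mu) / sd  # standardize with training statistics only
        probe = MLPProbe(X.shape[1], num_classes, seed=seed).fit(X[train], labels[train])
        accuracies.append(float((probe.predict(X[test]) == labels[test]).mean()))
    return accuracies
```

Calling `probe_all_layers` on a `(num_utts, num_layers, dim)` array of frame-averaged features returns one held-out accuracy per layer. This is the kind of layer-wise accuracy curve on which the findings are based.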

Key findings:

- Hierarchical encoding – Across all models, the earliest layers (CNN encoder and first few Transformer blocks) excel at pitch and energy, confirming they act as acoustic feature extractors. Gender remains highly predictable throughout the network, reflecting its static spectral nature. Mid-range layers (roughly layers 4–12) see a rise in tempo and emotion accuracy, suggesting these layers abstract rhythmic and affective information while still retaining acoustic cues. The deepest layers generally suppress speaker identity and emphasize linguistic content, aligning with the conventional view of SSL hierarchies.

- Scale-dependent reversal – Contrary to the prevailing belief that speaker information vanishes in deep layers, several models recover high speaker-identification accuracy in their final layers. These include the large-scale WavLM-Large and HuBERT-XLarge, as well as WavLM-Base-Plus. The authors attribute this to model scale and to pre-training on noisy, multi-speaker data, which appears to encourage the preservation of speaker-specific cues even after extensive abstraction.

- Attribute-specific trends – Pitch and gender are the dominant cues behind speaker-identification performance. Energy contributes less, indicating that energy fluctuations are less discriminative for distinguishing speakers. Tempo, which is tightly linked to linguistic rhythm, peaks in the middle layers. Emotion shows a relatively flat curve, with modest gains in the same region.

- Impact of model size – Large and extra-large variants consistently outperform their base counterparts on high-level attributes (speaker identity, emotion) by 3–5 percentage points, whereas low-level prosodic features (pitch, energy) see marginal gains. This suggests a trade-off: for tasks requiring fine-grained prosodic control, smaller models are cost-effective; for tasks demanding robust speaker or affective modeling, larger models are advantageous.

- Comparison with dedicated speaker embeddings – Deep speaker embedding models (ECAPA-TDNN, ResNet r-vector, CAM++) achieve near-perfect accuracy on speaker and gender but lag behind SSL models on pitch, tempo, energy, and emotion. This indicates that general-purpose SSL models learn richer, more multidimensional speaker representations. Their intermediate layers are therefore especially suitable for style transfer, expressive TTS, or emotion-aware ASR.
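Trends like these can be read off per-layer accuracy curves, for example an early or mid-network peak, or the late recovery of speaker identity reported for some models. The helpers below are a hypothetical sketch. `peak_layer` and `late_recovery` are illustrative names. The 0.05 threshold for calling a dip or a recovery meaningful is an assumption, not a criterion from the paper.

```python
def peak_layer(accs):
    """Index of the layer with the highest probing accuracy (earliest on ties)."""
    return max(range(len(accs)), key=lambda i: accs[i])


def late_recovery(accs, min_change=0.05):
    """Hypothetical check for a U-shaped tail in a per-layer accuracy curve.

    Needs at least two layers. Finds the peak while ignoring the final layer,
    then the lowest point from that peak onward. Returns True only if accuracy
    drops by at least min_change after the peak AND the final layer climbs back
    by at least min_change from that dip. The threshold is an assumption.
    """
    peak = peak_layer(accs[:-1])
    trough = min(range(peak, len(accs)), key=lambda i: accs[i])
    dipped = accs[peak] - accs[trough] >= min_change
    recovered = accs[-1] - accs[trough] >= min_change
    return dipped and recovered
```

Run on each model's speaker-identity curve, `late_recovery` would flag the kind of final-layer rebound described for the larger models. Run on the pitch, tempo, or emotion curves, `peak_layer` locates where each attribute is most decodable.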

The paper concludes with practical guidelines: select early layers for low‑level acoustic manipulation, middle layers for prosody and emotion control, and consider large‑scale models when high‑level speaker or affective fidelity is required. Limitations include the use of frame‑averaged vectors (which discard temporal dynamics) and the focus on English‑centric data; future work could explore sequence‑level probing, multilingual corpora, and direct analysis of attention patterns. Overall, the study provides a comprehensive map of where and how speaker‑specific information lives inside modern speech SSL architectures, bridging the gap between black‑box performance and interpretability.
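A toy example shows the frame-averaging limitation. Averaging over time ignores frame order, so a rising and a falling contour built from the same frames produce identical utterance vectors. Even a crude temporal descriptor tells them apart. The frame values are hypothetical. `mean_delta` is just one illustrative temporal statistic, not something the paper proposes.

```python
def frame_average(frames):
    """Mean over time of per-frame feature vectors (a list of equal-length lists)."""
    n = len(frames)
    return [sum(dim) / n for dim in zip(*frames)]


def mean_delta(frames):
    """Mean frame-to-frame change per dimension: an illustrative temporal
    statistic that frame averaging does not retain."""
    deltas = [[b - a for a, b in zip(prev, cur)] for prev, cur in zip(frames, frames[1:])]
    return frame_average(deltas)


rising = [[100.0], [150.0], [200.0]]  # hypothetical per-frame F0-like values
falling = rising[::-1]                # the same frames, reversed in time
print(frame_average(rising) == frame_average(falling))  # True: the order is lost
print(mean_delta(rising), mean_delta(falling))          # [50.0] [-50.0]
```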
