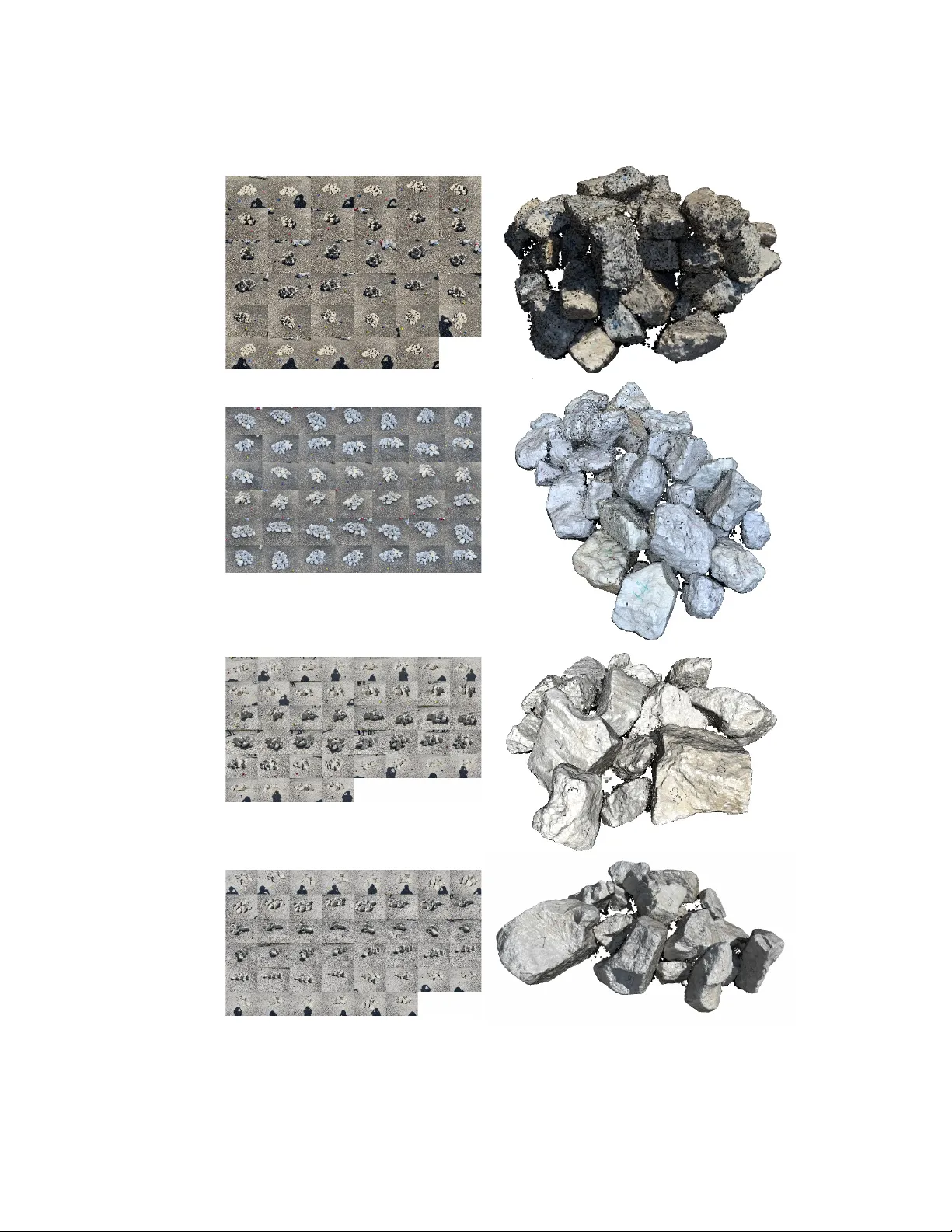

Field imaging framework for morphological characterization of aggregates with computer vision: Algorithms and applications

Construction aggregates, including sand and gravel, crushed stone, and riprap, are the core building blocks of the construction industry. State-of-the-practice characterization methods rely mainly on visual inspection and manual measurement. State-o…

Authors: Haohang Huang