Secure Semantic Communications via AI Defenses: Fundamentals, Solutions, and Future Directions

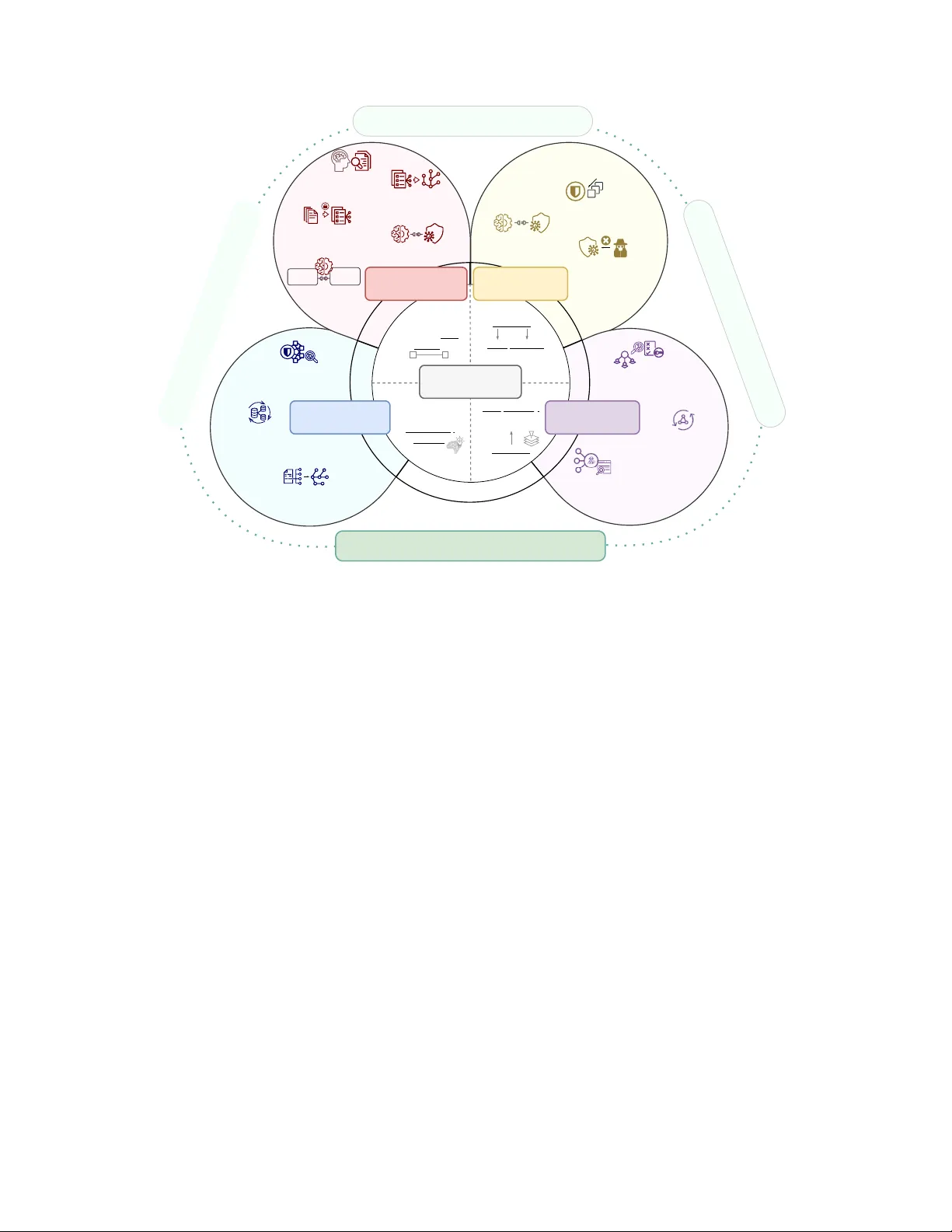

Semantic communication (SemCom) redefines wireless communication from reproducing symbols to transmitting task-relevant semantics. However, this AI-native architecture also introduces new vulnerabilities, as semantic failures may arise from adversari…

Authors: Lan Zhang, Chengsi Liang, Zeming Zhuang