CAPT: Confusion-Aware Prompt Tuning for Reducing Vision-Language Misalignment

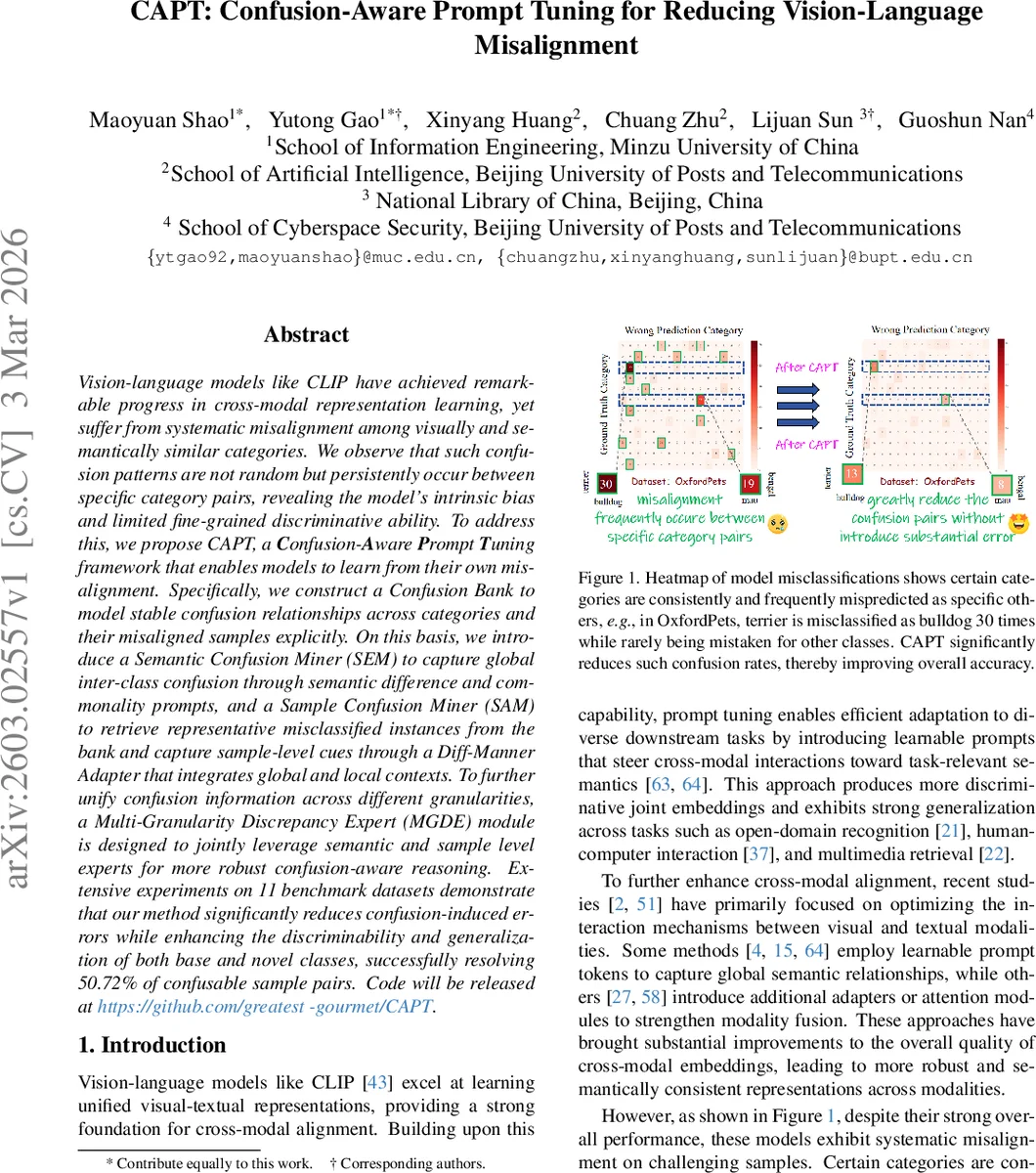

Vision-language models like CLIP have achieved remarkable progress in cross-modal representation learning, yet suffer from systematic misclassifications among visually and semantically similar categories. We observe that such confusion patterns are not random but persistently occur between specific category pairs, revealing the model’s intrinsic bias and limited fine-grained discriminative ability. To address this, we propose CAPT, a Confusion-Aware Prompt Tuning framework that enables models to learn from their own misalignment. Specifically, we construct a Confusion Bank to explicitly model stable confusion relationships across categories and misclassified samples. On this basis, we introduce a Semantic Confusion Miner (SEM) to capture global inter-class confusion through semantic difference and commonality prompts, and a Sample Confusion Miner (SAM) to retrieve representative misclassified instances from the bank and capture sample-level cues through a Diff-Manner Adapter that integrates global and local contexts. To further unify confusion information across different granularities, a Multi-Granularity Difference Expert (MGDE) module is designed to jointly leverage semantic- and sample-level experts for more robust confusion-aware reasoning. Extensive experiments on 11 benchmark datasets demonstrate that our method significantly reduces confusion-induced errors while enhancing the discriminability and generalization of both base and novel classes, successfully resolving 50.72 percent of confusable sample pairs. Code will be released at https://github.com/greatest-gourmet/CAPT.

💡 Research Summary

The paper tackles a persistent problem in large‑scale vision‑language models (VLMs) such as CLIP: systematic misclassifications between visually and semantically similar categories (e.g., “terrier” being repeatedly predicted as “bulldog”). Existing prompt‑tuning methods improve overall alignment but ignore these fixed confusion patterns, limiting fine‑grained discrimination.

CAPT (Confusion‑Aware Prompt Tuning) introduces a three‑stage framework to let the model learn from its own misalignments. First, a Confusion Bank records every misclassified sample together with the class it was mistakenly assigned to, thereby quantifying stable inter‑class confusion relationships.

Second, two miners extract complementary signals from this bank:

-

Semantic Confusion Miner (SEM) computes a pseudo‑ground‑truth (the model’s top‑confidence prediction) for each image and combines it with the bank’s confusion frequencies to produce a confusion score. Using large‑language‑model generated “commonality” and “difference” textual prompts for each high‑confusion class pair, SEM injects explicit semantic cues that highlight what the classes share and where they diverge.

-

Sample Confusion Miner (SAM) retrieves, for each semantic confusion pair, the most representative misclassified instance from the bank (selected by cosine similarity). A novel Diff‑Manner Adapter then fuses global context (via CLS‑token attention) and local detail (via depthwise 2‑D convolutions) with a learnable weight α, yielding a sample‑level confusion feature that captures both overall class‑level differences and fine‑grained visual cues.

Third, the Multi‑Granularity Discrepancy Expert (MGDE) integrates the semantic and sample features through separate expert modules and combines their outputs into a unified, confusion‑aware prompt. Training uses the standard cross‑entropy loss for ordinary classification plus an InfoNCE‑style confusion loss (L_confuse) that explicitly pushes apart positive image‑text pairs from their confusing counterparts. Additional tricks include clustering semantic prompt tokens to reduce redundancy and dynamically adjusting α to balance global versus local information.

Extensive experiments on eleven benchmark datasets (including OxfordPets, ImageNet‑R, CIFAR‑100, etc.) demonstrate that CAPT consistently outperforms prior prompt‑tuning approaches (e.g., CoOp, MaPLe) by 2–4 percentage points in accuracy. More importantly, CAPT resolves 50.72 % of the identified confusable sample pairs, substantially lowering the misalignment rate. Both base and novel categories benefit, achieving a harmonic‑mean accuracy of 83.90 %, the highest reported among comparable methods. Ablation studies confirm that each component—Confusion Bank, SEM, SAM, Diff‑Manner Adapter, and MGDE—contributes meaningfully to the overall gain.

In summary, CAPT provides a systematic way to capture, model, and correct the fixed confusion patterns inherent in VLMs. By turning misclassifications into a learning signal at both semantic and instance levels, it markedly improves fine‑grained discriminability without sacrificing the efficiency of prompt tuning. The framework is model‑agnostic and could be extended to other vision‑language architectures or to continual‑learning scenarios where confusion patterns evolve over time.

Comments & Academic Discussion

Loading comments...

Leave a Comment