SigmaQuant: Hardware-Aware Heterogeneous Quantization Method for Edge DNN Inference

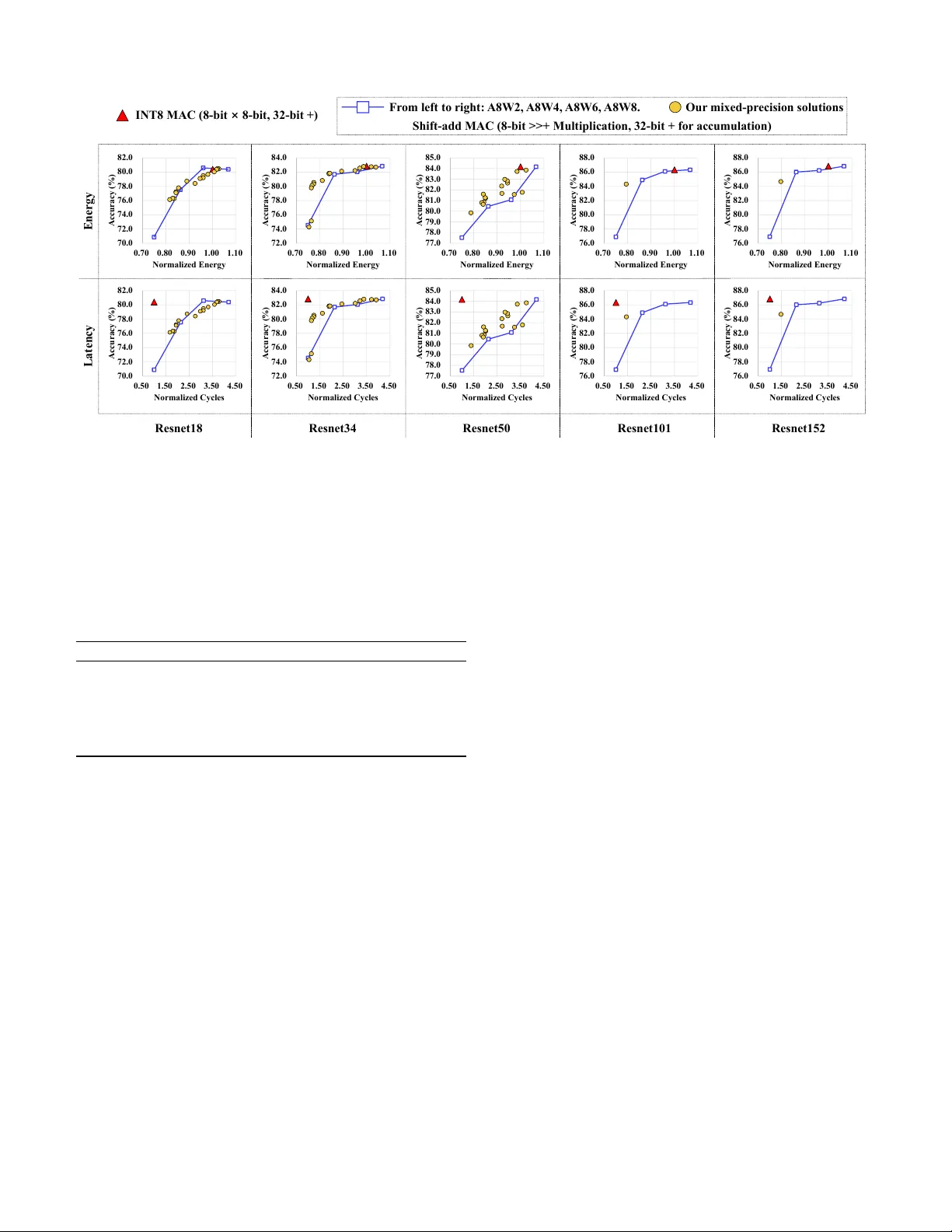

Deep neural networks (DNNs) are essential for performing advanced tasks on edge or mobile devices, yet their deployment is often hindered by severe resource constraints, including limited memory, energy, and computational power. While uniform quantiz…

Authors: Qunyou Liu, Pengbo Yu, Marina Zapater