Temporal Imbalance of Positive and Negative Supervision in Class-Incremental Learning

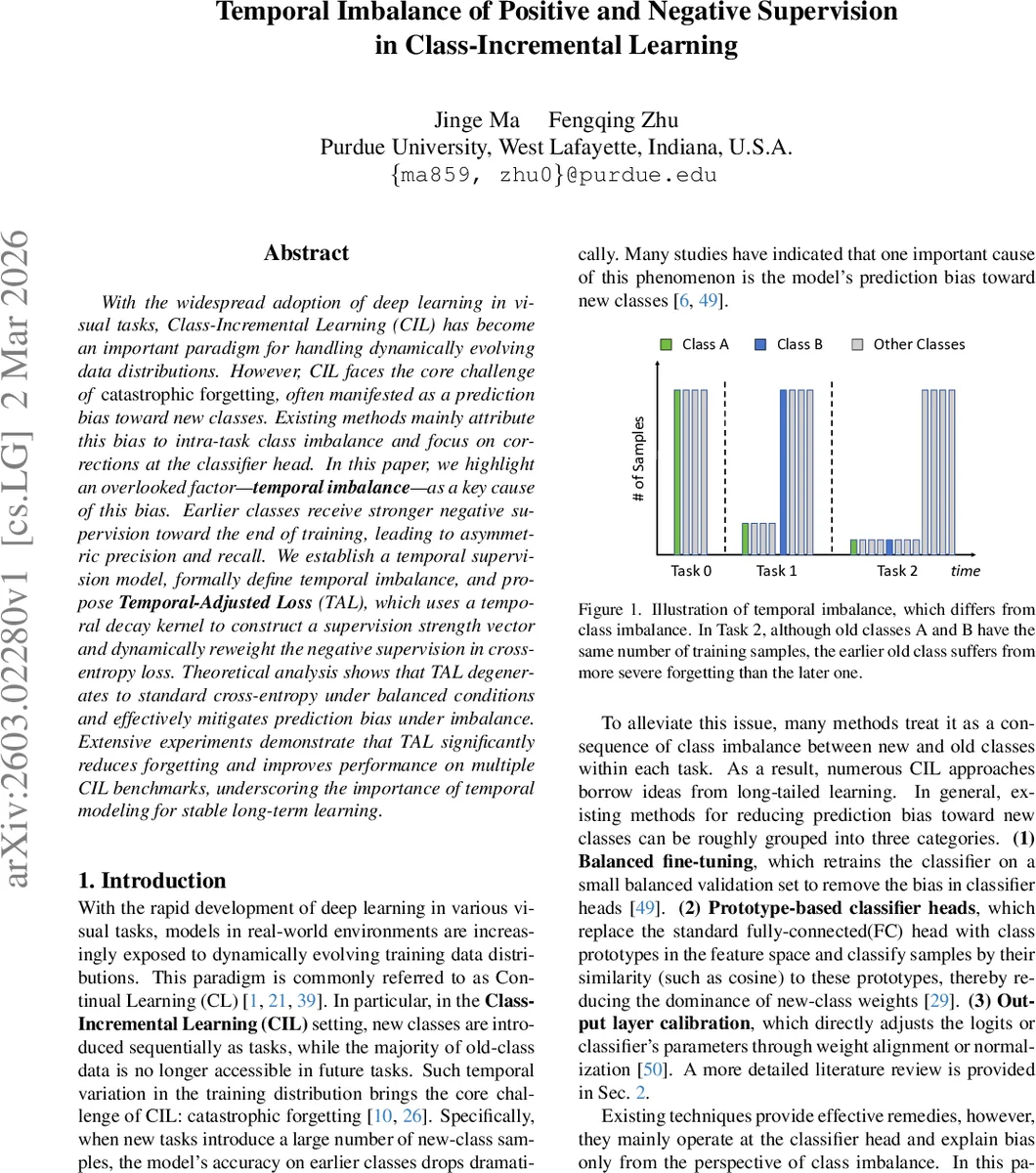

With the widespread adoption of deep learning in visual tasks, Class-Incremental Learning (CIL) has become an important paradigm for handling dynamically evolving data distributions. However, CIL faces the core challenge of catastrophic forgetting, often manifested as a prediction bias toward new classes. Existing methods mainly attribute this bias to intra-task class imbalance and focus on corrections at the classifier head. In this paper, we highlight an overlooked factor, temporal imbalance, as a key cause of this bias: classes whose positive samples appear earlier receive stronger negative supervision toward the end of training, leading to asymmetric precision and recall. We establish a temporal supervision model, formally define temporal imbalance, and propose Temporal-Adjusted Loss (TAL), which uses a temporal decay kernel to construct a supervision strength vector and dynamically reweight the negative supervision in the cross-entropy loss. Theoretical analysis shows that TAL degenerates to standard cross-entropy under balanced conditions and effectively mitigates prediction bias under imbalance. Extensive experiments demonstrate that TAL significantly reduces forgetting and improves performance on multiple CIL benchmarks, underscoring the importance of temporal modeling for stable long-term learning.
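The abstract names three ingredients of TAL: a temporal decay kernel, a supervision strength vector built from it, and reweighted negative supervision in cross-entropy that reduces to the standard loss when supervision is balanced. The following is a minimal sketch of how such pieces could fit together, not the paper's formula. The exponential kernel `exp(-lam * age)`, the decay rate `lam`, the exponent `p`, the strength-to-weight mapping `min(1, s_j / s_y) ** p` (borrowed from the mitigation factor of Seesaw Loss), and all function names are hypothetical choices made for illustration.

```python
import math


def supervision_strength(pos_counts, lam):
    """Hypothetical supervision strength vector s.

    pos_counts[t][k] is the number of positive samples of class k at step t.
    Each s[k] is an exponentially decayed sum of class k's positives,
    evaluated at the latest step with the assumed kernel exp(-lam * age).
    """
    T = len(pos_counts)
    K = len(pos_counts[0])
    s = [0.0] * K
    for t, row in enumerate(pos_counts):
        weight = math.exp(-lam * (T - 1 - t))  # older steps count less
        for k in range(K):
            s[k] += weight * row[k]
    return s


def standard_ce(logits, y):
    """Plain softmax cross-entropy for one sample (max-shifted for stability)."""
    m = max(logits)
    denom = sum(math.exp(z - m) for z in logits)
    return -(logits[y] - m) + math.log(denom)


def reweighted_ce(logits, y, s, p=1.0):
    """Cross-entropy whose negative (non-target) terms are reweighted by s.

    Negative class j is scaled by w_j = min(1, s[j] / s[y]) ** p, a
    Seesaw-style mitigation factor (assumption, not TAL's exact rule):
    classes with weaker recent positive supervision than the target are
    pushed down less. If all s[j] are equal, every w_j is 1 and the loss
    equals standard_ce.
    """
    m = max(logits)
    denom = 0.0
    for j, z in enumerate(logits):
        if j == y:
            w = 1.0  # the target term is never reweighted
        else:
            ratio = s[j] / s[y] if s[y] > 0 else 1.0
            w = min(1.0, ratio) ** p
        denom += w * math.exp(z - m)
    return -(logits[y] - m) + math.log(denom)


# Example: classes 0, 1, 2 are introduced in that order, 4 positives per step.
counts = [[4, 0, 0], [0, 4, 0], [0, 0, 4]]
s = supervision_strength(counts, lam=0.5)
logits = [0.2, 0.1, 1.5]
print(standard_ce(logits, 2), reweighted_ce(logits, 2, s))
```

With equal strengths every weight is exactly 1, so `reweighted_ce` coincides with the standard loss, mirroring the degeneration property stated in the abstract. With unequal strengths, only classes weaker than the target are softened, so a replayed old-class sample still pushes new-class logits down at full strength.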

💡 Research Summary

Class‑incremental learning (CIL) suffers from catastrophic forgetting, which often appears as a bias toward newly introduced classes. Existing remedies mainly attribute this bias to intra‑task class imbalance and focus on correcting the classifier head (balanced fine‑tuning, prototype‑based heads, logit calibration). This paper argues that such explanations are incomplete because they ignore a temporal dimension of supervision. The authors introduce the notion of “temporal imbalance”: even when two old classes receive the same total number of positive samples, the class whose positives appear earlier in the training stream experiences stronger negative supervision later on, leading to higher precision but lower recall, while the later class shows the opposite pattern.
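A toy simulation can make the temporal-imbalance argument concrete. It rests on hypothetical assumptions: single-class batches of four samples, an exponential decay with rate `lam`, and the rule that every non-target sample contributes one unit of negative supervision to class k (as in softmax cross-entropy, where every non-target logit is pushed down). Classes 0 and 1 then receive identical totals of positive and negative supervision. However, class 0, which is seen first, accumulates more recent (less decayed) negative supervision and less recent positive supervision. The names `decayed_sum` and `class_signals` are illustrative, not from the paper.

```python
import math


def decayed_sum(signal, lam):
    """Exponentially decayed sum of a per-step signal, seen from the last step."""
    T = len(signal)
    return sum(math.exp(-lam * (T - 1 - t)) * a for t, a in enumerate(signal))


def class_signals(stream, k):
    """Per-step positive and negative supervision counts for class k.

    Under softmax cross-entropy, a sample of class k pushes logit k up
    (positive) and every other sample pushes it down (negative).
    """
    pos = [sum(1 for y in batch if y == k) for batch in stream]
    neg = [len(batch) - n for batch, n in zip(stream, pos)]
    return pos, neg


# Hypothetical stream: class 0 is seen first, then class 1, then a new
# class 2 for twice as long. Each batch holds 4 samples of a single class.
stream = (
    [[0] * 4 for _ in range(5)]
    + [[1] * 4 for _ in range(5)]
    + [[2] * 4 for _ in range(10)]
)

pos_a, neg_a = class_signals(stream, 0)
pos_b, neg_b = class_signals(stream, 1)
lam = 0.1
print("totals  A:", sum(pos_a), sum(neg_a), " B:", sum(pos_b), sum(neg_b))
print("decayed A:", round(decayed_sum(pos_a, lam), 2), round(decayed_sum(neg_a, lam), 2))
print("decayed B:", round(decayed_sum(pos_b, lam), 2), round(decayed_sum(neg_b, lam), 2))
```

Both classes have 20 positives and 60 negatives in total. Only the decayed strengths differ, and they differ in the direction the summary describes: the earlier class faces heavier recent negative pressure.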

To formalize this, each training step n contributes a supervision signal a_k
