Demonstrating ViviDoc: Generating Interactive Documents through Human-Agent Collaboration

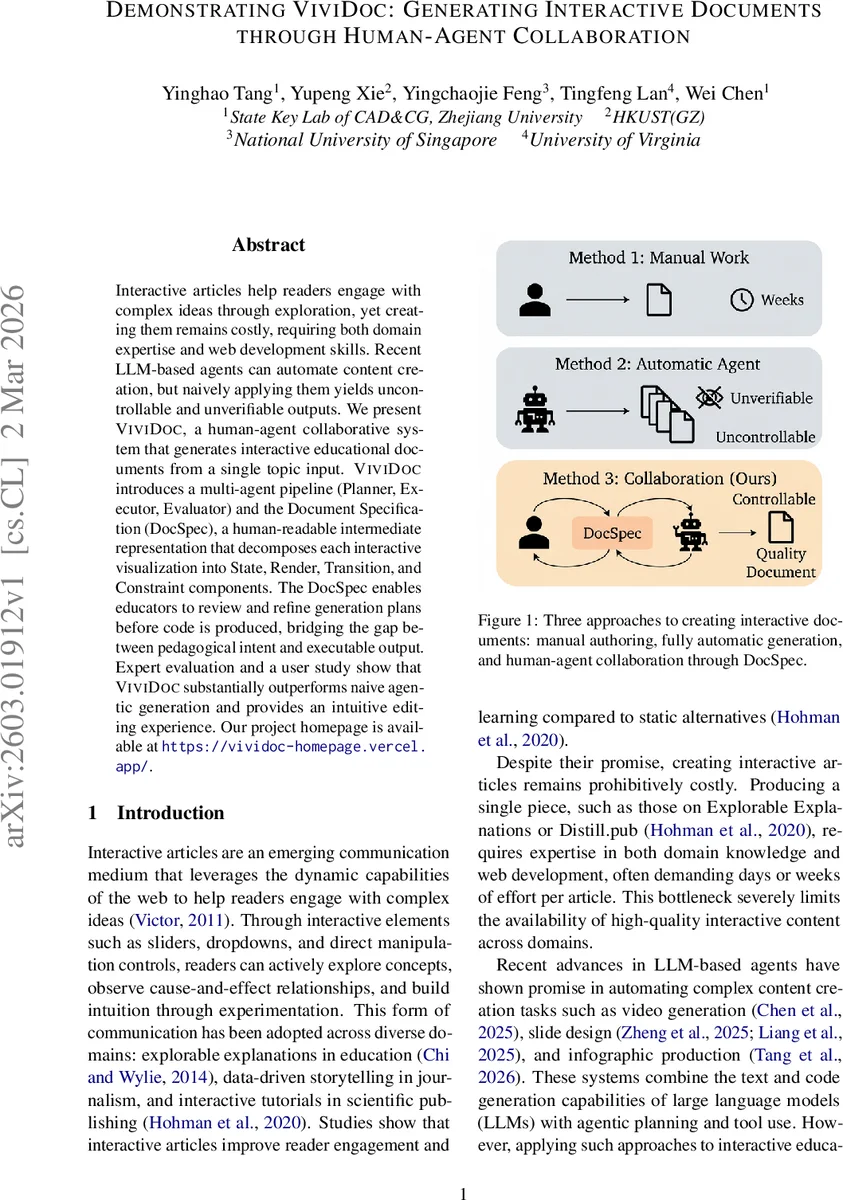

Interactive articles help readers engage with complex ideas through exploration, yet creating them remains costly, requiring both domain expertise and web development skills. Recent LLM-based agents can automate content creation, but naively applying them yields uncontrollable and unverifiable outputs. We present ViviDoc, a human-agent collaborative system that generates interactive educational documents from a single topic input. ViviDoc introduces a multi-agent pipeline (Planner, Executor, Evaluator) and the Document Specification (DocSpec), a human-readable intermediate representation that decomposes each interactive visualization into State, Render, Transition, and Constraint components. The DocSpec enables educators to review and refine generation plans before code is produced, bridging the gap between pedagogical intent and executable output. Expert evaluation and a user study show that ViviDoc substantially outperforms naive agentic generation and provides an intuitive editing experience. Our project homepage is available at https://vividoc-homepage.vercel.app/.

💡 Research Summary

The paper introduces ViviDoc, a human‑agent collaborative system that automates the creation of interactive educational documents from a single topic input. The authors identify three major obstacles that have prevented large‑scale generation of such content: (1) lack of controllability—LLM agents generate code based on implicit preferences that may not align with pedagogical goals; (2) opacity—educators have no meaningful way to intervene in the generation process; and (3) scarcity of evaluation datasets. To address these issues, ViviDoc combines a three‑agent pipeline (Planner, Executor, Evaluator) with a structured intermediate representation called the Document Specification (DocSpec).

The Planner receives the topic and decomposes it into an ordered list of knowledge units. Each unit contains a concise summary, a text description that guides natural‑language generation, and an Interaction Specification. The Interaction Specification follows an adapted Munzner “What‑Why‑How” framework, expanded into four explicit components: State (variables, types, ranges, defaults), Render (visual mapping), Transition (how user actions modify state), and Constraint (the pedagogical invariant the learner should discover). This SRTC schema forces the Planner to produce well‑formed, machine‑readable plans while remaining human‑readable.

The Executor takes the (optionally edited) DocSpec and generates the final HTML document in two stages. Stage 1 produces the explanatory text for each knowledge unit, using the text description as a prompt and maintaining contextual consistency across sections. Stage 2 synthesizes the interactive visualization code (HTML, CSS, JavaScript) guided by the SRTC specification. The Executor includes a retry mechanism that re‑generates a fragment up to k times if HTML validation fails, improving robustness.

The Evaluator checks the completed document for overall coherence using an LLM and validates each knowledge unit’s HTML output. It also verifies that the Constraint component of each interaction is correctly realized, providing feedback that can trigger selective re‑execution.

Human review is integrated at two points: after the Planner produces the DocSpec and after the Executor outputs the final document. Because DocSpec is a structured, editable artifact, educators can reorder units, modify text descriptions, adjust variable ranges, or add/remove interactions without writing code. The interface also supports natural‑language chat‑based edits, which are translated into DocSpec updates. This design gives users high‑impact control before code generation and a final quality‑check after rendering.

For evaluation, the authors assembled a dataset of 101 real‑world interactive documents spanning 11 domains from over 60 websites. They randomly sampled 10 topics and generated documents using two methods: (a) ViviDoc’s full pipeline (with human review disabled for a fair comparison) and (b) a “Naïve Agent” that prompts a single LLM call to produce the entire HTML document directly, without a DocSpec. Both methods used the same Gemini 3.0 Flash model. Three domain experts performed blind assessments on the 20 resulting documents, rating Content Richness, Interaction Quality, and Visual Quality on a 5‑point Likert scale. ViviDoc achieved substantially higher scores (average ≈ 4.2) compared to the Naïve Agent (≈ 2.8) across all dimensions, demonstrating superior depth, pedagogical interactivity, and aesthetic presentation.

A follow‑up user study with 15 educators and designers examined the usability of the DocSpec editing UI. Participants reported a short learning curve (≈ 5 minutes) and high satisfaction (4.5/5), confirming that the structured specification is intuitive and effective for guiding generation.

In summary, ViviDoc contributes (1) a multi‑agent architecture that isolates the most error‑prone translation step (intent → code) behind a verifiable contract, (2) the DocSpec intermediate format that makes the generation pipeline transparent, editable, and pedagogically grounded, and (3) empirical evidence that human‑in‑the‑loop collaboration dramatically improves the quality of automatically generated interactive educational content. The work paves the way for scalable production of high‑quality explorable explanations and suggests a general recipe for integrating structured specifications into LLM‑driven content creation pipelines.

Comments & Academic Discussion

Loading comments...

Leave a Comment