Neural Operator-Grounded Continuous Tensor Function Representation and Its Applications

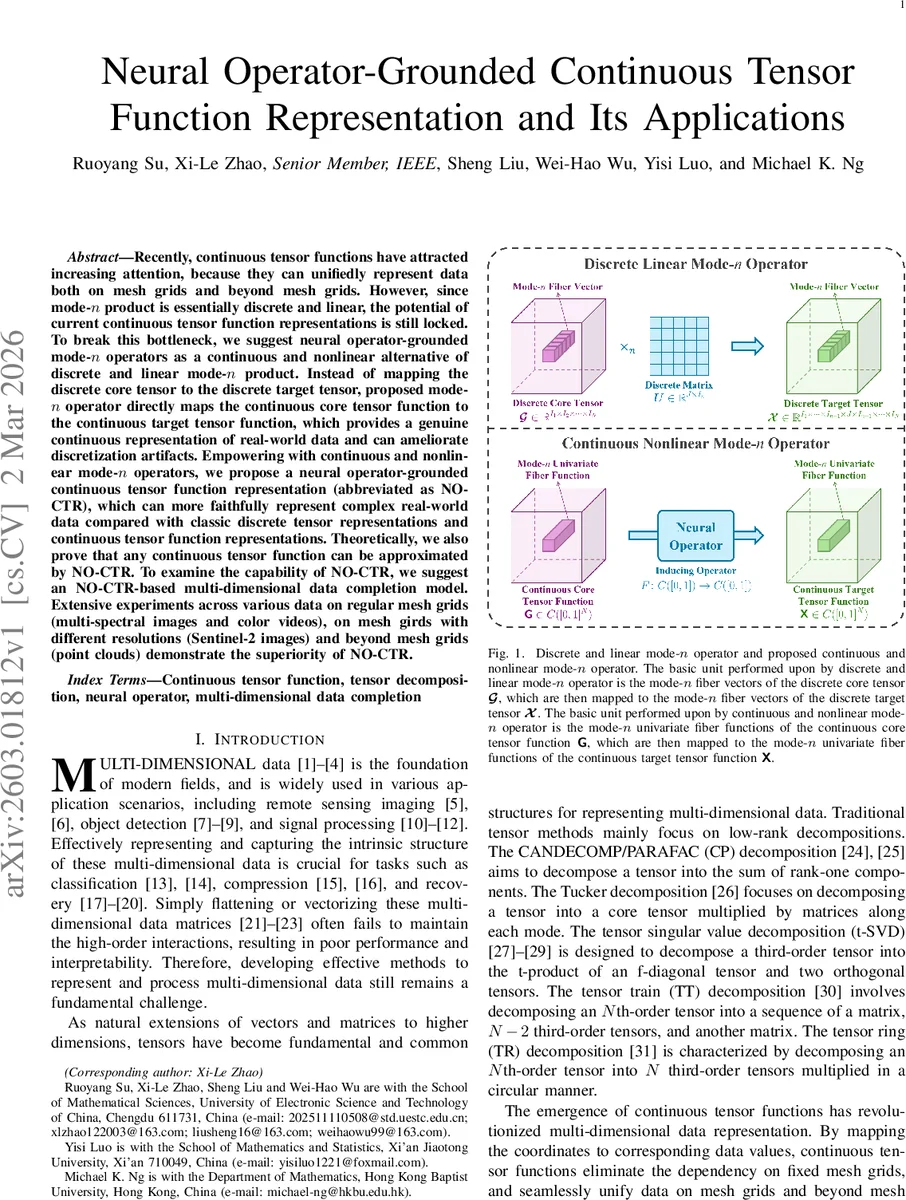

Recently, continuous tensor functions have attracted increasing attention because they can represent data both on and beyond mesh grids in a unified way. However, since the mode-$n$ product is essentially discrete and linear, the potential of current continuous tensor function representations has not been fully unlocked. To break this bottleneck, we suggest neural operator-grounded mode-$n$ operators as a continuous and nonlinear alternative to the discrete and linear mode-$n$ product. Instead of mapping the discrete core tensor to the discrete target tensor, the proposed mode-$n$ operator directly maps the continuous core tensor function to the continuous target tensor function, which provides a genuinely continuous representation of real-world data and can ameliorate discretization artifacts. Empowered by continuous and nonlinear mode-$n$ operators, we propose a neural operator-grounded continuous tensor function representation (abbreviated as NO-CTR), which can represent complex real-world data more faithfully than classic discrete tensor representations and existing continuous tensor function representations. Theoretically, we also prove that any continuous tensor function can be approximated by NO-CTR. To examine the capability of NO-CTR, we suggest an NO-CTR-based multi-dimensional data completion model. Extensive experiments across various data on regular mesh grids (multi-spectral images and color videos), on mesh grids with different resolutions (Sentinel-2 images), and beyond mesh grids (point clouds) demonstrate the superiority of NO-CTR.

💡 Research Summary

The paper addresses a fundamental limitation in current continuous tensor function representations: the reliance on the traditional mode‑n product, which is inherently discrete and linear. While continuous tensor functions map coordinates to data values and thus can handle both grid‑based and off‑grid data, the use of a discrete, linear mode‑n product to connect a core tensor to the target tensor prevents these representations from fully exploiting continuity and non‑linearity.
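For reference, the classic discrete mode‑n product that the paper identifies as the bottleneck can be sketched in NumPy as follows. This is a minimal illustration, not code from the paper: the function name `mode_n_product`, the zero-based mode index `n`, and the toy shapes are assumptions made here.

```python
import numpy as np

def mode_n_product(core, matrix, n):
    """Discrete mode-n product: apply `matrix` to every mode-n fiber of `core`.

    `n` is zero-based here (an illustrative convention, not the paper's).
    """
    # Bring mode n to the front and flatten the other modes,
    # so that each column of `unfolded` is one mode-n fiber vector.
    moved = np.moveaxis(core, n, 0)
    unfolded = moved.reshape(core.shape[n], -1)
    # The same linear map acts on every fiber: this is the discrete, linear step.
    result = matrix @ unfolded
    # Fold back and return mode n to its original position.
    new_shape = (matrix.shape[0],) + moved.shape[1:]
    return np.moveaxis(result.reshape(new_shape), 0, n)

# Toy example: a 2 x 3 x 4 core tensor and a 5 x 3 factor matrix along mode 1.
core = np.arange(24, dtype=float).reshape(2, 3, 4)
U = np.ones((5, 3))
out = mode_n_product(core, U, 1)
print(out.shape)
```

Both limitations named in the summary are visible in the sketch: the fibers are finite vectors sampled on a fixed grid, and the map applied to them is a single matrix multiplication.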

To overcome this bottleneck, the authors introduce continuous and nonlinear mode‑n operators. Instead of operating on discrete fiber vectors, these operators act on the univariate fiber functions of a continuous tensor function. Each operator is realized by a neural operator (a learned mapping from one function to another) such as DeepONet or the Fourier Neural Operator. Formally, for an order‑N continuous tensor function $G$ and a neural operator $F$, the mode‑n operator $F^{\langle n\rangle}$ applies $F$ to each mode‑n fiber function of $G$; consistent with this description, it can be written as

$$
\big(F^{\langle n\rangle}(G)\big)(x_1,\dots,x_N) = \Big(F\big(G(x_1,\dots,x_{n-1},\,\cdot\,,x_{n+1},\dots,x_N)\big)\Big)(x_n).
$$
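The fiber-wise action described above can be sketched in plain Python. This is a hedged illustration under stated assumptions, not the authors' implementation: `mode_n_operator` and `toy_operator` are hypothetical names, the mode index is zero-based, and `toy_operator` is a fixed hand-written nonlinear integral operator on [0, 1] standing in for a trained neural operator such as DeepONet or a Fourier Neural Operator.

```python
import numpy as np

def mode_n_operator(F, G, n):
    """Lift a function-to-function operator F to act on mode-n fibers of G.

    G is a callable taking N scalar coordinates; F maps a univariate
    function to a univariate function. `n` is zero-based (an assumption).
    """
    def H(*coords):
        # Mode-n fiber function: fix every coordinate except the n-th.
        def fiber(t):
            args = list(coords)
            args[n] = t
            return G(*args)
        # Apply the operator to the fiber, then evaluate at the n-th coordinate.
        return F(fiber)(coords[n])
    return H

def toy_operator(g, num_nodes=64):
    """Hypothetical stand-in for a neural operator (not trained, not from the paper).

    Computes y -> tanh( integral_0^1 exp(-(y - t)^2) g(t) dt ) with a midpoint rule.
    """
    nodes = (np.arange(num_nodes) + 0.5) / num_nodes
    values = np.array([g(t) for t in nodes])
    def out(y):
        kernel = np.exp(-(y - nodes) ** 2)
        return np.tanh(np.mean(kernel * values))
    return out

# A toy order-3 continuous tensor function, queried at an arbitrary off-grid point.
G = lambda x, y, z: np.sin(x) * y + z
H = mode_n_operator(toy_operator, G, 1)
value = H(0.3, 0.7, 0.1)
print(value)
```

Because `H` is itself a function of continuous coordinates, it can be queried at any point rather than only at grid nodes, and the `tanh` makes the fiber-to-fiber map nonlinear, which are the two properties the mode‑n operator is meant to add over the mode‑n product.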
