WAFFLE: Finetuning Multi-Modal Models for Automated Front-End Development

Web development involves turning UI designs into functional webpages, which can be difficult for both beginners and experienced developers due to the complexity of HTML’s hierarchical structures and styles. While Large Language Models (LLMs) have shown promise in generating source code, two major challenges persist in UI-to-HTML code generation: (1) effectively representing HTML’s hierarchical structure for LLMs, and (2) bridging the gap between the visual nature of UI designs and the text-based format of HTML code. To tackle these challenges, we introduce Waffle, a new fine-tuning strategy that uses a structure-aware attention mechanism to improve LLMs’ understanding of HTML’s structure and a contrastive fine-tuning approach to align LLMs’ understanding of UI images and HTML code. Models fine-tuned with Waffle show up to 9.00 pp (percentage point) higher HTML match, 0.0982 higher CW-SSIM, 32.99 higher CLIP, and 27.12 pp higher LLEM on our new benchmark WebSight-Test and an existing benchmark Design2Code, outperforming current fine-tuning methods.

💡 Research Summary

The paper addresses the challenging problem of automatically generating HTML and CSS code from UI design images, a task that requires both an understanding of visual layouts and the hierarchical structure of HTML. While large language models (LLMs) and multimodal large language models (MLLMs) have shown strong performance in text‑to‑code generation, they struggle with two specific issues in UI‑to‑HTML conversion: (1) representing the tree‑like structure of HTML in a way that LLMs can exploit, and (2) aligning the visual information in UI screenshots with the textual representation of HTML/CSS.

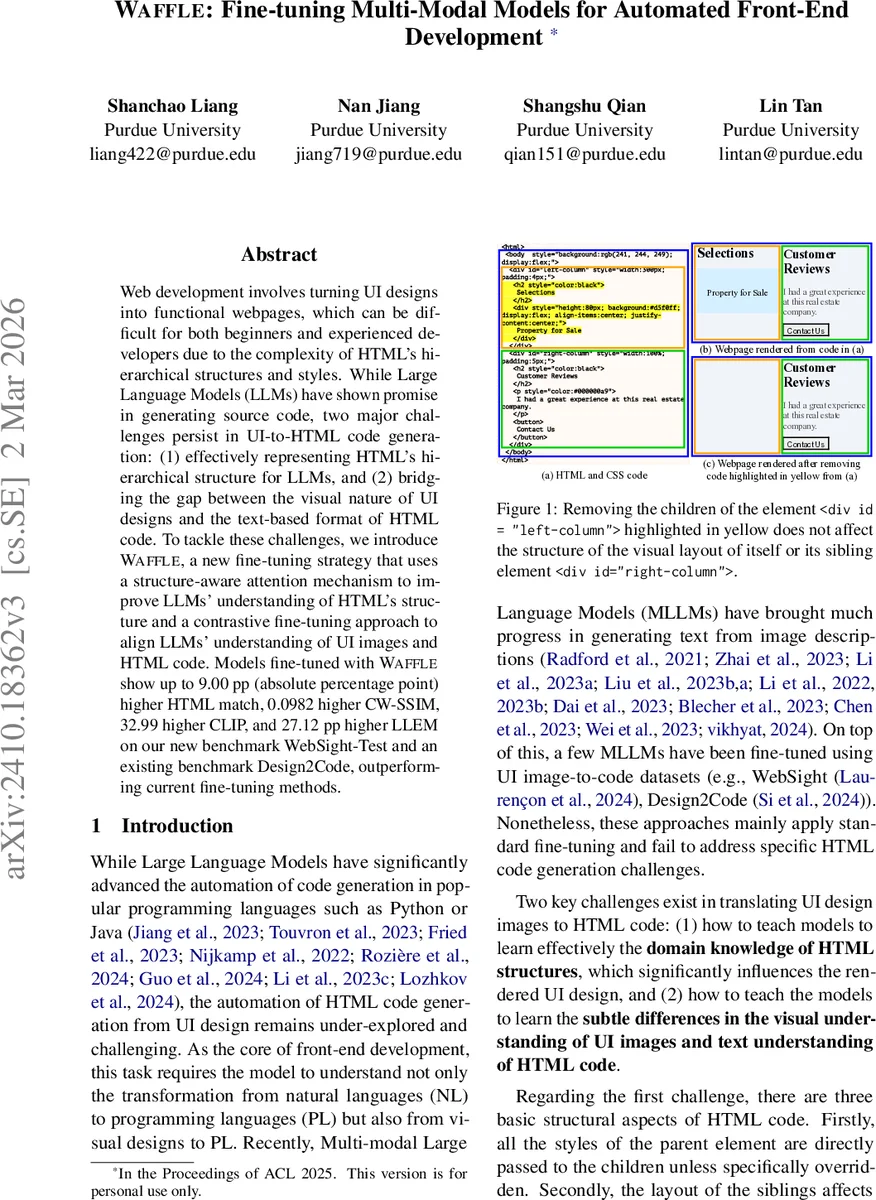

To solve these problems, the authors propose WAFFLE, a fine‑tuning pipeline that combines a novel structure‑aware attention mechanism with contrastive learning. The structure‑aware attention modifies the decoder of a transformer‑based language model so that each token can attend only to three categories of previous tokens: its parent element, its preceding siblings, and tokens within the same element. This mask explicitly encodes three domain‑specific principles of HTML rendering: inheritance of parent styles, sibling influence on layout, and independence from unrelated subtrees. The mask is applied to a subset of attention heads (by default one‑quarter) so that the model retains most of its pre‑trained knowledge while gaining a dedicated channel for structural reasoning.

For the visual‑textual alignment, the authors construct a contrastive learning dataset by mutating a large existing UI‑code corpus (WebSight‑v0.1). They first perform a failure analysis on 50 validation samples, identifying seven common error categories (e.g., color mismatches, missing layout blocks). Based on the frequency of each error type, they design realistic mutation rules that modify HTML/CSS attributes while preserving renderability. Each original sample is turned into four mutated versions, yielding 57,985 groups of (image, code) pairs. During training, each group provides multiple positive and negative examples: the image patches are encoded by a vision encoder, the HTML tokens by the language model with structure‑aware attention, and the average embeddings of patches and tokens represent the whole image and code respectively. A contrastive loss maximizes the cosine similarity of matching pairs while minimizing similarity to mismatched pairs, and it is combined with the standard language‑modeling loss (weighted by λ = 0.1).

The approach is evaluated on two backbones: VLM‑WebSight and Moondream2. Both models are first fine‑tuned on the original WebSight‑v0.1 data using standard language modeling, then further fine‑tuned with WAFFLE’s structure‑aware attention and contrastive loss. Experiments use two test sets: WebSight‑Test (500 synthetic webpages) and Design2Code (real‑world webpages). The metrics include HTML Match (exact token‑level agreement), CW‑SSIM (structural similarity of rendered pages), CLIP score (image‑text alignment), and LLEM (code‑rendering consistency). WAFFLE achieves up to 9 percentage‑point improvement in HTML Match, +0.0982 in CW‑SSIM, +32.99 in CLIP, and +27.12 pp in LLEM over baseline fine‑tuning. Ablation studies show that structure‑aware attention alone yields 3–4 pp gains, while the combination with contrastive learning provides the largest boost, confirming a synergistic effect.

Beyond performance, the authors release a new dataset of 231,940 webpage‑HTML pairs, facilitating future research on web‑focused multimodal models. They emphasize that WAFFLE is model‑agnostic: the attention mask and contrastive objective can be applied to any pre‑trained multimodal LLM without architectural changes, requiring only modest additional parameters via DoRA (a LoRA‑based efficient fine‑tuning method).

Limitations are acknowledged. The mutation rules target static HTML/CSS and do not cover dynamic JavaScript behavior or complex interactive components, which remain out of scope. Moreover, the structure‑aware attention is applied only to the language decoder, leaving the vision encoder unaware of HTML hierarchy; future work could explore joint visual‑structural encodings.

In summary, WAFFLE introduces a principled way to inject HTML structural knowledge into multimodal models and aligns visual UI representations with their textual code through contrastive learning. The resulting system significantly narrows the gap between UI screenshots and generated HTML, setting a new benchmark for automated front‑end development.

Comments & Academic Discussion

Loading comments...

Leave a Comment