Interaction2Code: Benchmarking MLLM-based Interactive Webpage Code Generation from Interactive Prototyping

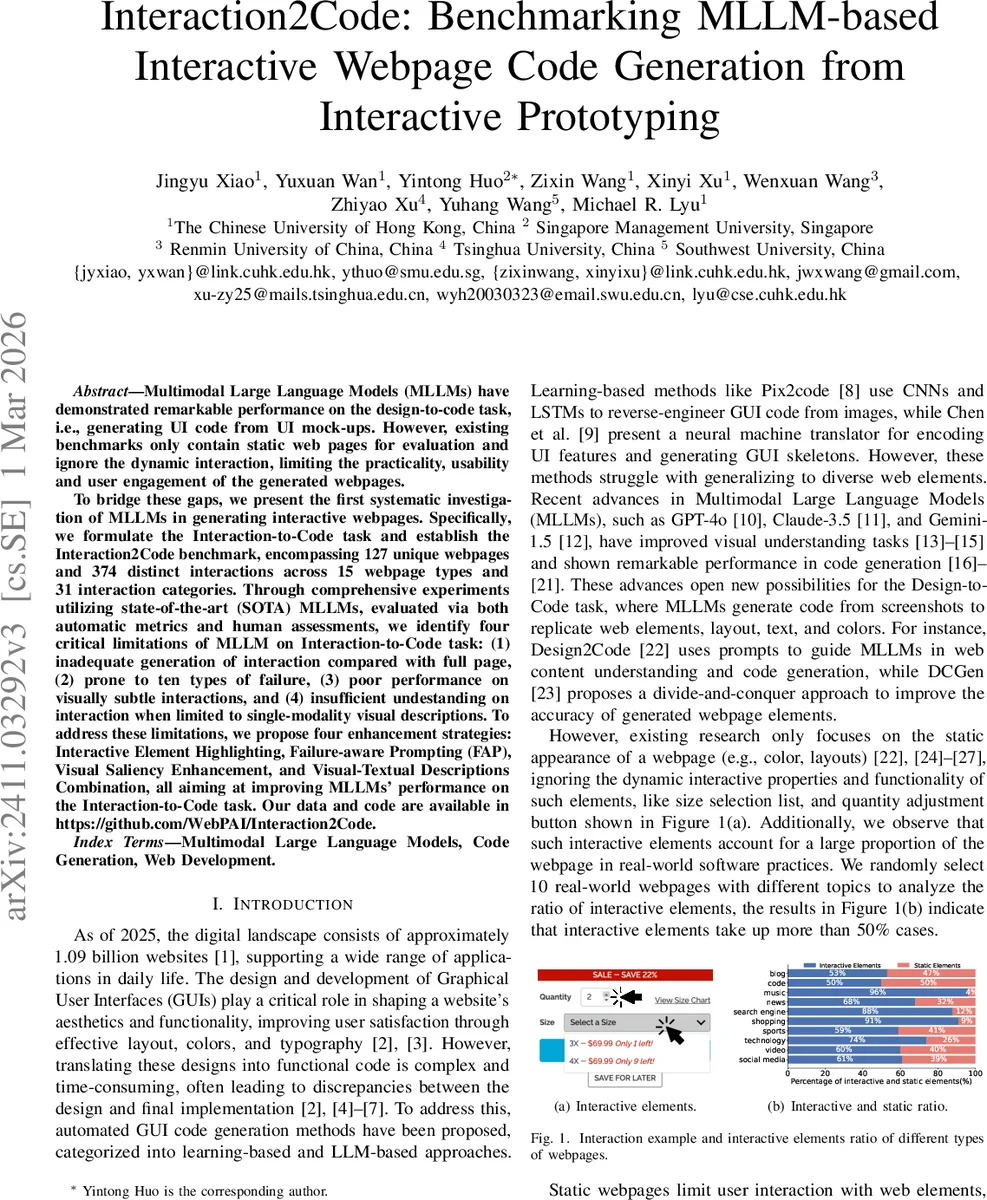

Multimodal Large Language Models (MLLMs) have demonstrated remarkable performance on the design-to-code task, i.e., generating UI code from UI mock-ups. However, existing benchmarks contain only static web pages and ignore dynamic interaction, which limits the practicality, usability, and user engagement of the generated webpages. To bridge these gaps, we present the first systematic investigation of MLLMs in generating interactive webpages. Specifically, we formulate the Interaction-to-Code task and establish the Interaction2Code benchmark, encompassing 127 unique webpages and 374 distinct interactions across 15 webpage types and 31 interaction categories. Through comprehensive experiments with state-of-the-art (SOTA) MLLMs, evaluated via both automatic metrics and human assessments, we identify four critical limitations of MLLMs on the Interaction-to-Code task: (1) inadequate generation of interactions compared with the full page, (2) susceptibility to ten types of failure, (3) poor performance on visually subtle interactions, and (4) insufficient understanding of interactions when limited to single-modality visual descriptions. To address these limitations, we propose four enhancement strategies: Interactive Element Highlighting, Failure-aware Prompting (FAP), Visual Saliency Enhancement, and Visual-Textual Descriptions Combination, all aimed at improving MLLMs' performance on the Interaction-to-Code task. The Interaction2Code benchmark and code are available at https://github.com/WebPAI/Interaction2Code.

💡 Research Summary

The paper introduces Interaction2Code, the first benchmark that evaluates multimodal large language models (MLLMs) on generating interactive web pages from interactive prototypes rather than from static UI mock‑ups. The authors define a new “Interaction‑to‑Code” problem: given a pair of screenshots showing a UI before and after a user interaction, the model must produce HTML/CSS/JavaScript that reproduces the dynamic behavior. To support this, they construct a dataset of 127 real‑world web pages covering 15 webpage types and 31 interaction categories, yielding 374 distinct interactions. Data are sourced from Common Crawl and popular GitHub projects, and each interaction is manually annotated with tag‑level (button, input, link, etc.) and visual‑level (color change, position shift, text update, etc.) information.
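To make the task input concrete, the following is a minimal sketch of how one benchmark entry could be represented. The class name, field names, and helper are hypothetical and chosen for illustration only; they are not taken from the released dataset format.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class InteractionSample:
    """One hypothetical benchmark entry: a UI state before and after an interaction."""
    page_id: str
    before_screenshot: str   # path to the screenshot taken before the interaction
    after_screenshot: str    # path to the screenshot taken after the interaction
    tag_category: str        # tag-level label, e.g. "button", "input", "link"
    visual_categories: List[str] = field(default_factory=list)  # e.g. ["color change"]


def count_by_tag(samples: List[InteractionSample]) -> Dict[str, int]:
    """Count how many interactions fall under each tag-level category."""
    counts: Dict[str, int] = {}
    for sample in samples:
        counts[sample.tag_category] = counts.get(sample.tag_category, 0) + 1
    return counts
```

Keeping the tag-level and visual-level labels as separate fields mirrors the two annotation levels described above and makes per-category breakdowns straightforward.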

Evaluation uses both automatic metrics (CLIP visual similarity, SSIM structural similarity, OCR‑BLEU text similarity, widget match) and human assessments (implementation rate, usability rate). State‑of‑the‑art MLLMs—including GPT‑4o, Claude‑3.5, and Gemini‑1.5—are prompted to generate code, and Selenium is employed to automatically trigger the generated interactive elements for comparison with the ground‑truth prototype.
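As an illustration of one of the automatic metrics, the sketch below computes a simplified, global form of SSIM over two grayscale images given as flat pixel lists. This is an assumption-laden simplification: standard SSIM implementations average the same formula over local windows, and the summary does not state which implementation the authors use. The function name `global_ssim` is hypothetical.

```python
from typing import Sequence


def global_ssim(x: Sequence[float], y: Sequence[float], data_range: float = 255.0) -> float:
    """SSIM evaluated once over the whole image (no sliding window)."""
    if len(x) != len(y) or len(x) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    n = len(x)
    # Stabilizing constants with the conventional K1 = 0.01 and K2 = 0.03.
    c1 = (0.01 * data_range) ** 2
    c2 = (0.03 * data_range) ** 2
    mu_x = sum(x) / n
    mu_y = sum(y) / n
    var_x = sum((a - mu_x) ** 2 for a in x) / n
    var_y = sum((b - mu_y) ** 2 for b in y) / n
    cov = sum((a - mu_x) * (b - mu_y) for a, b in zip(x, y)) / n
    numerator = (2 * mu_x * mu_y + c1) * (2 * cov + c2)
    denominator = (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    return numerator / denominator
```

In an evaluation pipeline of the kind described above, the two inputs would be the ground-truth screenshot and the screenshot of the generated page after the interaction has been triggered; identical images score 1, and structurally inverted images score below 0.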

Four major shortcomings emerge: (1) models generate the static layout reasonably well but struggle to reproduce the interactive portion; (2) ten distinct failure modes appear, such as missing interactive elements, incorrect event binding, style loss, DOM structure errors, and absent script logic; (3) interactions that are visually subtle (e.g., slight color shifts or minor position adjustments) are frequently missed; and (4) a single visual input is insufficient for the model to infer the intended interaction, leading to low implementation rates.

To mitigate these issues, the authors propose four enhancement strategies: (i) Interactive Element Highlighting – overlay visual markers (borders, color cues) on interactive components to draw model attention; (ii) Failure‑aware Prompting (FAP) – embed examples of known failure cases in the prompt so the model can avoid repeating them; (iii) Visual Saliency Enhancement (VSE) – increase contrast or saliency of the interaction region to improve visual perception; and (iv) Visual‑Textual Description Combination – supplement the image with a concise textual description of the interaction. Experiments show that each technique individually improves implementation and usability rates, and their combination yields the most significant gains, especially for subtle interactions.
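Two of these strategies, Failure-aware Prompting and the visual-textual combination, operate on the prompt text and can be sketched together. The snippet below is a hypothetical illustration: the base instruction, the hint wording, and the names `FAILURE_HINTS` and `build_prompt` are assumptions, not the authors' actual prompts. The hints are loosely derived from the failure modes listed in the summary.

```python
from typing import List, Optional

# Illustrative hints based on failure modes named in the summary; wording is assumed.
FAILURE_HINTS: List[str] = [
    "Do not omit the interactive element.",
    "Bind the event handler to the correct element.",
    "Preserve the original styles after the interaction.",
    "Keep the DOM structure consistent with the screenshot.",
    "Include the script logic that performs the state change.",
]


def build_prompt(interaction_description: Optional[str] = None,
                 failure_hints: Optional[List[str]] = None) -> str:
    """Assemble the text part of a prompt that accompanies the two screenshots."""
    parts = [
        "Generate a single HTML file (HTML, CSS, JavaScript) that reproduces the "
        "webpage in the first screenshot and the interaction that leads to the "
        "second screenshot."
    ]
    if failure_hints:
        # Failure-aware Prompting: list known failure types so the model can avoid them.
        parts.append("Avoid these common failures:")
        parts.extend(f"{i}. {hint}" for i, hint in enumerate(failure_hints, 1))
    if interaction_description:
        # Visual-textual combination: add a short textual description of the interaction.
        parts.append(f"Interaction description: {interaction_description}")
    return "\n".join(parts)
```

For example, `build_prompt("Clicking the 'Menu' button opens a dropdown.", FAILURE_HINTS)` returns the base instruction followed by the numbered hints and the description, while `build_prompt()` returns the plain visual-only instruction.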

The benchmark, annotation guidelines, evaluation scripts, and code are publicly released on GitHub, providing a reproducible platform for future research. By exposing the gap between static UI generation and true interactive behavior, and by offering concrete methods to close that gap, the work charts a clear path toward more practical, end‑to‑end automated front‑end development using multimodal AI.
