RAISE: Requirement-Adaptive Evolutionary Refinement for Training-Free Text-to-Image Alignment

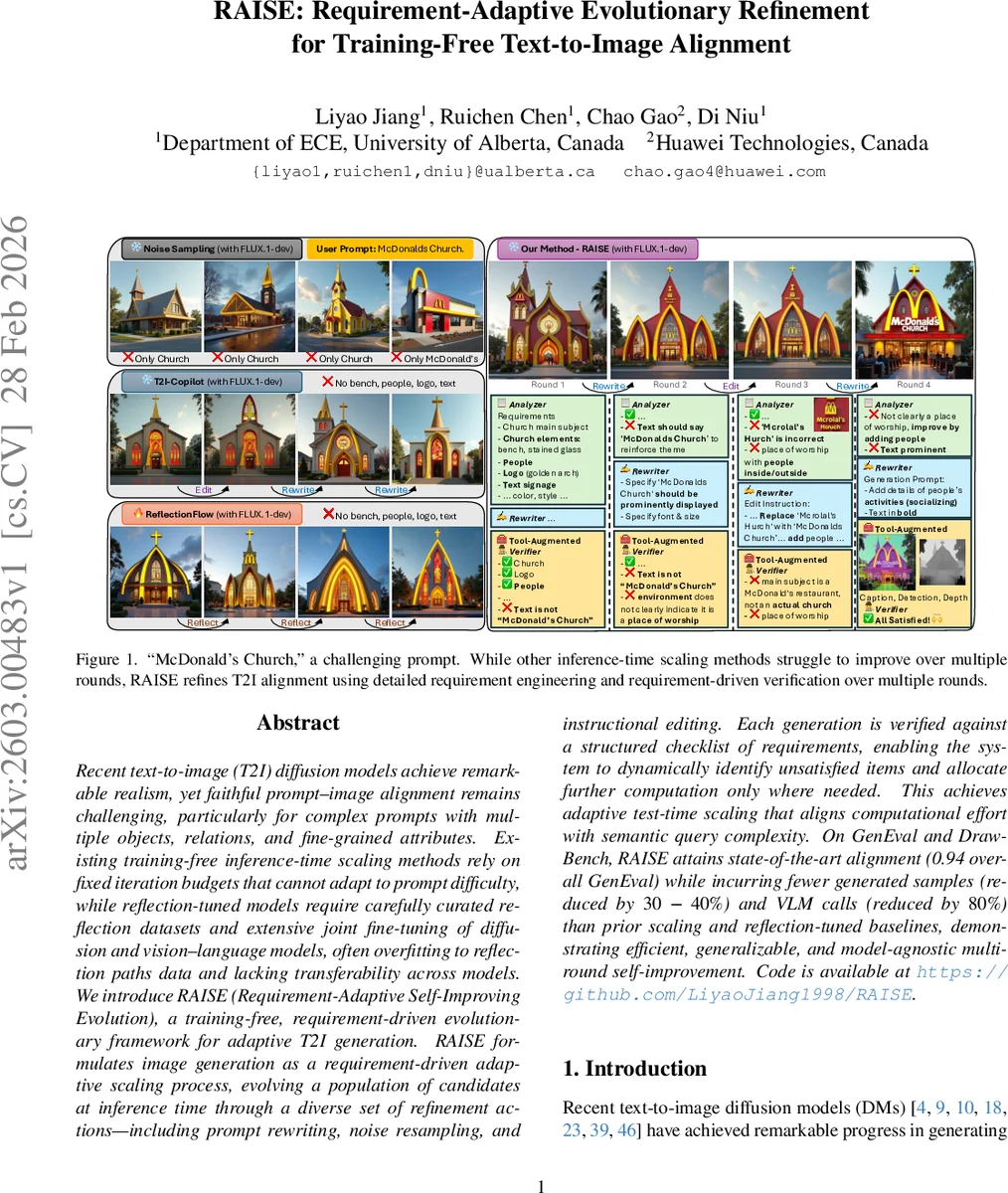

Recent text-to-image (T2I) diffusion models achieve remarkable realism, yet faithful prompt-image alignment remains challenging, particularly for complex prompts with multiple objects, relations, and fine-grained attributes. Existing training-free inference-time scaling methods rely on fixed iteration budgets that cannot adapt to prompt difficulty, while reflection-tuned models require carefully curated reflection datasets and extensive joint fine-tuning of diffusion and vision-language models, often overfitting to reflection paths data and lacking transferability across models. We introduce RAISE (Requirement-Adaptive Self-Improving Evolution), a training-free, requirement-driven evolutionary framework for adaptive T2I generation. RAISE formulates image generation as a requirement-driven adaptive scaling process, evolving a population of candidates at inference time through a diverse set of refinement actions-including prompt rewriting, noise resampling, and instructional editing. Each generation is verified against a structured checklist of requirements, enabling the system to dynamically identify unsatisfied items and allocate further computation only where needed. This achieves adaptive test-time scaling that aligns computational effort with semantic query complexity. On GenEval and DrawBench, RAISE attains state-of-the-art alignment (0.94 overall GenEval) while incurring fewer generated samples (reduced by 30-40%) and VLM calls (reduced by 80%) than prior scaling and reflection-tuned baselines, demonstrating efficient, generalizable, and model-agnostic multi-round self-improvement. Code is available at https://github.com/LiyaoJiang1998/RAISE.

💡 Research Summary

The paper addresses the persistent gap between textual prompts and the visual content produced by state‑of‑the‑art text‑to‑image diffusion models, especially when prompts contain multiple objects, intricate spatial relations, fine‑grained attributes, or embedded text. Existing inference‑time scaling methods either allocate a fixed amount of extra computation (e.g., more diffusion steps or a fixed number of prompt rewrites) or rely on reflection‑based fine‑tuning that requires large curated datasets and joint training of diffusion and vision‑language models. Fixed‑budget approaches cannot adapt to the varying difficulty of prompts, while reflection‑based methods suffer from over‑fitting to the reflection paths and lack portability across different base models.

RAISE (Requirement‑Adaptive Self‑Improving Evolution) is introduced as a training‑free, requirement‑driven framework that dynamically allocates computation based on the semantic complexity of each prompt. The system is built around three cooperating agents that share a common vision‑language model (VLM) backbone:

-

Analyzer – extracts a structured checklist of requirements from the user prompt and from verification feedback of previous rounds. Requirements cover object presence, attribute specifications, spatial relationships, count constraints, and any embedded textual elements. The analyzer partitions the checklist into satisfied (⁺) and unsatisfied (⁻) subsets, generates a set of binary verification questions, and decides whether further refinement is needed.

-

Rewriter – produces new textual prompts or editing instructions aimed at the unsatisfied requirements. It can rewrite the prompt (e.g., “Add a golden arch sign”) or generate concise edit commands for an image‑editing model (e.g., “Insert people sitting on benches”).

-

Verifier – evaluates generated candidates using tool‑grounded visual analysis. It calls off‑the‑shelf vision tools (captioning, object detection, depth estimation, OCR) to extract concrete evidence such as detected entities, attributes, bounding‑box relations, and textual overlays. The evidence is mapped to the binary questions supplied by the analyzer, yielding a fine‑grained satisfaction report that is fed back to the analyzer.

The refinement loop proceeds in iterative rounds. In each round, a population of candidate images is created by applying three mutation actions in parallel:

- Noise resampling – keeps the original prompt but draws new latent noise vectors, exploring alternative visual configurations.

- Prompt rewriting – leverages the VLM to modify the textual description according to unsatisfied requirements.

- Instructional editing – sends explicit edit commands to an image‑editing model to adjust the current best image.

Each candidate receives a fitness score f(y, x_user) that combines VLM‑based similarity with requirement satisfaction. The global best candidate is tracked across rounds. The adaptive scaling mechanism stops when either (a) the analyzer reports that all major requirements are satisfied, (b) the verifier confirms that every requirement (major and minor) is met, or (c) a predefined maximum number of rounds K_max is reached. A minimum round limit K_min guarantees sufficient exploration for difficult prompts.

Empirical evaluation uses the FLUX.1‑dev diffusion model as the base. On the GenEval benchmark, which tests multi‑object, relational, and attribute fidelity, RAISE achieves an overall score of 0.94 and a VQAScore of 0.885, surpassing prior methods such as T2I‑Copilot, SAN‑1.5, and reflection‑tuned models. On DrawBench, which emphasizes visual detail and compositional realism, RAISE also leads the leaderboard. Notably, RAISE reduces the number of generated samples by 30‑40 % and cuts vision‑language model calls by roughly 80 % compared with the strongest baselines, demonstrating substantial computational efficiency.

Key contributions are:

- Formulating T2I alignment as a requirement‑driven adaptive scaling problem, enabling computation to be allocated proportionally to prompt difficulty.

- Designing a multi‑action evolutionary framework that simultaneously explores noise, language, and image‑editing spaces, yielding a richer search landscape than single‑action loops.

- Introducing a structured, tool‑grounded verification pipeline that bridges visual perception and textual reasoning, providing interpretable, fine‑grained feedback for self‑correction.

Overall, RAISE showcases that sophisticated, self‑improving text‑to‑image generation can be achieved without any additional training, offering a scalable and model‑agnostic solution for high‑fidelity prompt adherence.

Comments & Academic Discussion

Loading comments...

Leave a Comment