OPGAgent: An Agent for Auditable Dental Panoramic X-ray Interpretation

Orthopantomograms (OPGs) are the standard panoramic radiograph in dentistry, used for full-arch screening across multiple diagnostic tasks. While Vision Language Models (VLMs) now allow multi-task OPG analysis through natural language, they underperf…

Authors: Zhaolin Yu, Litao Yang, Ben Babicka

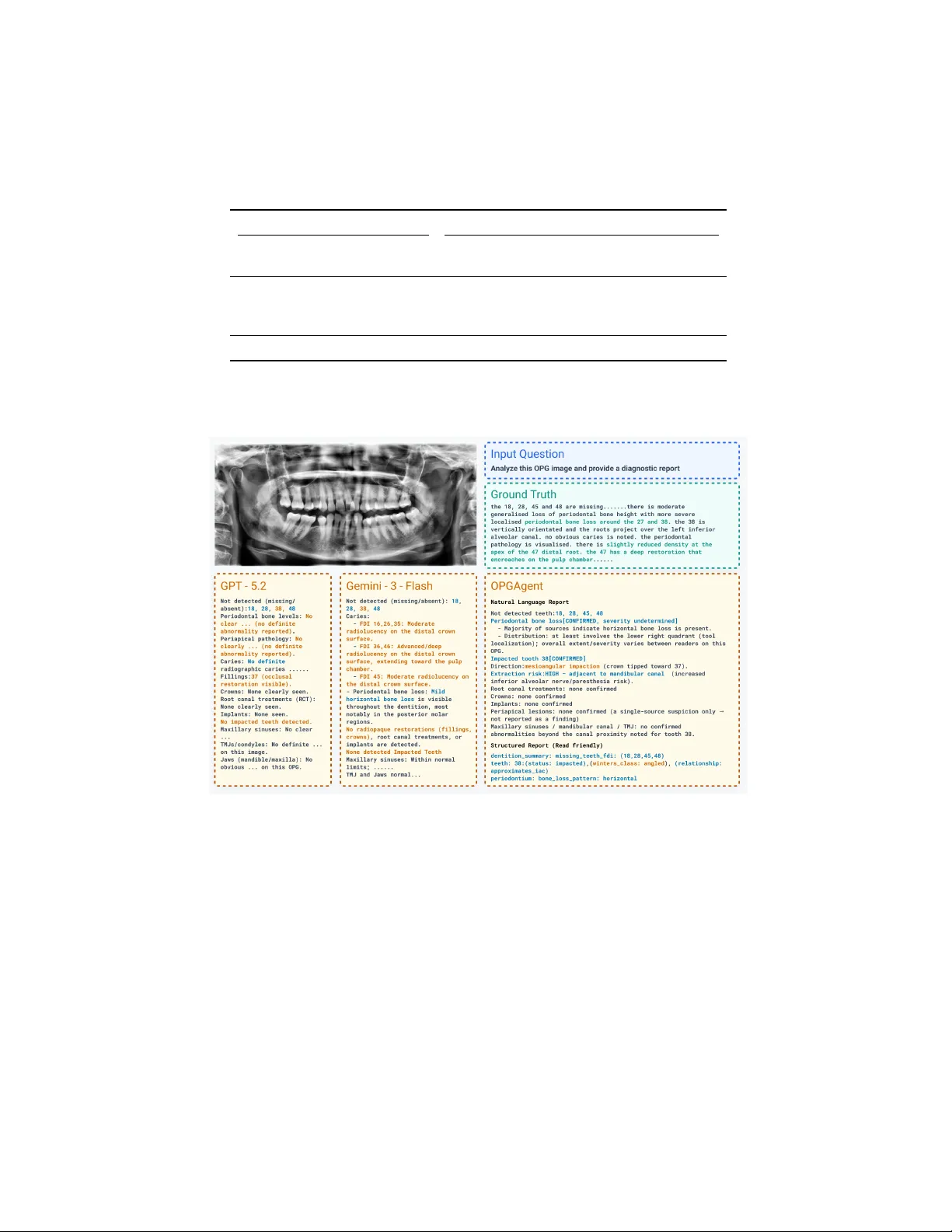

OPGAgen t: An Agen t for Auditable Den tal P anoramic X-ra y In terpretation Zhaolin Y u 1 , 2 , Litao Y ang 1 , 2 ( B ), Ben Babic k a 3 , Ming Hu 1 , 2 , Jing Hao 4 , An thony Huang 5 , James Huang 5 , Y ueming Jin 6 , Jiasong W u 7 , and Zongyuan Ge 1 , 2 , 5 ( B ) 1 AIM fo r Health Lab, F aculty of Information T echnology , Monash Universit y , Melb ourne, Australia 2 F aculty of Information T echnology , Monash Univ ersity , Melb ourne, Australia 3 Curae Health, Melb ourne, Australia 4 F aculty of Den tistry , The Univ ersity of Hong Kong, Hong K ong 5 Airdo c-Monash Research, Monash Univ ersity , VIC 3800, Australia 6 National Universit y of Singap ore, Singap ore Cit y , Singap ore 7 Southeast Universit y , Nanjing, China litao.yang@monash.edu, zongyuan.ge@monash.edu Abstract. Orthopan tomograms (OPGs) are the standard panoramic radiograph in den tistry , used for full-arch screening across m ultiple di- agnostic tasks. While Vision Language Models (VLMs) now allo w multi- task OPG analysis through natural language, they underp erform task- sp ecific mo dels on most individual tasks. Agentic systems that orches- trate sp ecialized to ols offer a path to b oth v ersatility and accuracy , this approac h remains unexplored in the field of dental imaging. T o address this gap, w e propose OPGAgent, a m ulti-tool agentic system for au- ditable OPG in terpretation. OPGAgent co ordinates sp ecialized p ercep- tion mo dules with a consensus mec hanism through three comp onents: (1) a Hierarchical Evidence Gathering mo dule that decomp oses OPG analysis in to global, quadran t, and to oth-level phases with dynamically in voking to ols, (2) a Sp ecialized T o olb ox encapsulating spatial, detection, utilit y , and exp ert zo os, and (3) a Consensus Subagent that resolves con- flicts through anatomical constraints. W e further prop ose OPG-Bench, a structured-rep ort proto col based on (Location, Field, V alue) triples de- riv ed from real clinical rep orts, whic h enables a comprehensive review of findings and hallucinations, extending b ey ond the limitations of VQA in- dicators. On our OPG-Benc h and the public MMOral-OPG benchmark, OPGAgen t outp erforms current dental VLMs and medical agen t frame- w orks across b oth structured-rep ort and VQA ev aluation. Co de will b e released up on acceptance. Keyw ords: Dental · Agen t · OPG · Structured Rep orting 1 In tro duction The Orthopan tomogram (OPG) captures the maxilla, mandible, and full denti- tion in a single panoramic exp osure and is routinely used for screening, diagno- 2 Z. Y u et al. sis, and treatment planning [4]. Deep learning mo dels no w exist for individual steps of OPG analysis, including caries detection [1], alveolar b one loss assess- men t [14], to oth segmentation [22,24], and to oth en umeration [8]. How ever, as recen t reviews note [7,19], since these separate mo dels cannot connect with each other, clinicians must use multiple to ols one b y one, making their workflo w in- efficien t. This motiv ates the developmen t of a unified den tal framework capable of concurren tly addressing diverse diagnostic tasks. T o address this need for multi-tasking, Vision Language Models (VLMs) take a different approach b y framing diverse visual tasks as a unified text generation problem. Den tal VLMs such as Den talGPT [2] and OralGPT-Omni [10], together with general medical VLMs like LLaV A-Med [15] and MedGemma [6], can p er- form pathology iden tification, anatomical description, and rep ort generation via m ultimo dal information. Their accuracy on individual tasks, how ever, lags b e- hind that of sp ecialized mo dels, where lo cal spatial cues dominate [5]. Therefore, o vercoming this p erformance limitation while sustaining multi-task adaptabilit y remains a c hallenge. AI agents [26] bridge this gap by co ordinating sp ecialized mo dels and exter- nal to ols, in tegrating high-precision multi-modal outputs into a unified frame- w ork. MedAgents [20] and MDAgen ts [13] use collab orative reasoning for clinical decision-making; MedAgen t-Pro [25] adds external p erception to ols to improv e diagnostic accuracy . While these frameworks excel in sp ecific modalities or broad clinical applications, they are not designed to capture the sp ecialized n uances of den tistry . Effective pro cessing of den tal imaging relies hea vily on domain kno wl- edge like FDI notation [8,12] and sp ecialized mec hanisms such as dynamic Region of Interest (R OI) cropping. Our exp eriments reveal a significan t performance gap when applying medical agen t designs (T able 1) to specialized dental tasks, un- derscoring the necessit y for a domain-sp ecific agent. T o reliably test these mo dels, we need a complete benchmark. How ev er, ex- isting VQA b enchmarks, whether closed-ended or op en-ended, measure precision only on the questions asked; recall dep ends entirely on whether those questions co ver all clinically relev an t findings. As a result, (1) any finding t ype absen t from the question set is invisible to ev aluation, and (2) hallucination severit y cannot be quan tified, since fabricated findings in unprompted regions go un- detected [3,28]. F urthermore, this question-and-answer paradigm fundamentally div erges from real-world clinical practice. Den tists do not analyze an OPG by answ ering isolated prompts; rather, they conduct comprehensive visual screen- ings to generate structured, holistic rep orts. Consequen tly , current b enchmarks fail to reflect a mo del’s true diagnostic utility in an actual clinical workflo w. T o address these issues, we prop ose OPGAgent, a multi-tool agentic system for auditable OPG interpretation that com bines sp ecialized p erception mo dules with a consensus mechanism. The system has three comp onents: (1) a Hierarchi- cal Evidence Gathering mo dule that decomp oses analysis into global, quadrant, and tooth-level phases; (2) a Sp ecialized T o olb ox encapsulating four categories; and (3) a Consensus Subagent that aggregates v otes from detection to ols and exp ert zo os and resolves conflicts through anatomical constrain ts. F urthermore, OPGAgen t: An Agen t for Auditable Dental Panoramic X-ray Interpretation 3 to align our ev aluation with real clinical workflo ws rather than isolated prompts, w e further prop ose OPG-Bench, a structured-rep ort protocol utilizing (Lo cation, Field, V alue) triples to o vercome the failure of VQA in reflecting true diagnos- tic capabilities. In summary , our contributions are threefold: (1) OPGAgen t , the first agentic system sp ecifically designed for OPG interpretation, featuring Hierarc hical Evidence Gathering, a Sp ecialized T oolb ox, and a Consensus Sub- agen t; (2) OPG-Bench , a nov el ev aluation proto col derived from real clinical rep orts that explicitly audits b oth pathological findings and hallucinations; and (3) State-of-the-art p erformance , demonstrating that OPGAgen t outper- forms all baselines on b oth OPG-Bench and MMOral-OPG [9], achieving higher F1 scores with few er false p ositives. 2 OPGAgen t 2.1 Ov erview. Fig. 1 illustrates the w orkflo w of OPGAgen t. Giv en an input OPG image I , OPGAgen t is a planner p ow ered b y GPT-5.2 following the ReAct paradigm [26] that orchestrates three mo dules: Hierarchical Evidence Gathering, Sp ecialized T o olb o x, and Consensus Subagent. In the Hierarc hical Evidence Gathering mo d- ule, OPGAgent decomposes the analysis in to three phases: global dentition, quadran t-level screening, and to oth-level screening, with findings stored in Mem- ory . The Specialized T oolb o x wraps perception mo dels and VLM experts as agen t-callable to ols in four categories: spatial, detection, utility , and exp ert zo os. The Consensus Subagent aggregates evidence from detection to ols and exp ert zo os, resolv es conflicts using anatomical constrain ts, and commits only w ell- supp orted findings to the final rep ort. 2.2 Hierarc hical Evidence Gathering T o generate refined, verifiable findings, we design a Hierarchical Evidence Gath- ering mo dule that progressively refines results through three phases, from full images to the to oth lev el, and stores all findings in Memory . Phase 1: Global Analysis. First, the agen t queries VLM exp erts on the full image to generate an initial reading. Simultaneously , it inv ok es detection to ols to establish the anatomical baseline: total tooth count, missing teeth, and FDI notation mapping [12] for each to oth. This phase creates a structured coordinate system in Memory for all subsequen t phases. Phase 2: Quadrant-Lev el Screening. Using the global context in Memory , OPGAgen t scans each quadran t (Q1–Q4). It uses co ordinates from Phase 1 to generate dynamic quadrant crops, which are sen t to VLM exp erts to screen for gross pathologies such as alveolar b one loss or large lesions. The marked regions will b e sen t to Phase 3 for to oth-level inv estigation. Phase 3: T o oth-Lev el Screening. F or detailed pathologies (e.g., caries, im- paction status), OPGAgent crops dynamic R OIs using co ordinates in Memory . 4 Z. Y u et al. Fig. 1. Overview of OPGAgent. The Agen t orchestrates three mo dules: Hierarchical Evidence Gathering, Sp ecialized T oolb ox, and Consensus Subagent. Because b ounding b oxes preserve lo cal context, relatively higher resolution crops are sent to VLM exp erts for assessmen t. If Phase 2 flags a p otential issue in a re- gion where Phase 1 detected no to oth (e.g., a ro ot remnant), OPGAgent requests a quadran t crop from indep endent anatomical detection (not to oth co ordinates), allo wing VLMs to recov er false negatives missed by the to oth-level scan. 2.3 Sp ecialized T o olb ox T o pro vide structured visual p erception for OPGAgen t, we implement p erception mo dules and exp ose them as agent-friendly tools. These to ols are p ow ered b y four underlying model types: (1) YOLO [23] trained on public dental datasets [8] for quadrant and tooth detection; (2) open-source den tal vision exp erts from OralGPT-Omni [10], based on MaskDINO [16] and DINO [27], for maxillary sin us, mandibular canal, and pathology detection; (3) MedSAM [17] for to oth mask segmen tation; and (4) multiple VLM for whole-image or R OI-level analysis. Spatial T ools. These to ols return masks or b ounding b oxes with co ordinates for teeth, quadrants, and detected findings. These co ordinates form the spatial reference for all subsequen t phases. Detection T o ols. These tools detect specific or all abnormal conditions using v arious pathological models (caries, p eriapical lesions, apical fillings, etc.) and determine whic h teeth are asso ciated with these abnormalities. OPGAgen t: An Agen t for Auditable Dental Panoramic X-ray Interpretation 5 Utilit y T o ols. This suite manages FDI n umbering, R OI extraction, and spatial reasoning. T o assess clinical risks such as the proximit y of a to oth ro ot to the mandibular canal, the agent computes the minimum con tour distance d ( m i , m j ) b et ween tw o p olygon masks m i and m j : d ( m i , m j ) = min p ∈ ∂ m i , q ∈ ∂ m j ∥ p − q ∥ 2 (1) where d ( m i , m j ) = 0 if the p olygons intersect. F or matching diseases to teeth, the agent prioritizes In tersection o ver Union (IoU), using the Euclidean distance b et ween b ox cen ters as a fallback when IoU is b elow a threshold. Exp ert Zo os. These to ols call DentalGPT, OralGPT-Omni, GPT-5.2, and Gemini-3-Flash to collect multiple exp ert opinions on whole images, whole im- ages with b ounding b ox, or specific ROIs. These opinions form the evidence base for the Consensus Subagen t. 2.4 Consensus Subagent T o mitigate hallucination risks in generativ e models, w e design a Consensus Subagen t that aggregates evidence from detection to ols and exp ert zo os. Evidence Aggregation. The Consensus Subagen t collects opinions from N sources and confirms a finding when ≥ 3 sources agree or ≥ 2 sources rep ort the same finding. All sources receive equal voting w eight so that rare conditions missed b y rule-based detectors can still pass through VLM votes. Conflict Resolution. Disagreements often concern exact attributes rather than the presence of a finding. When a finding is confirmed b y ma jority vote but sources disagree on its attributes (e.g., tooth n um b er or sev erit y grade), the subagen t chec ks the conflicting claims against hard constrain ts provided by the detection to ols. F or instance, if VLMs agree on a “low er left implant” but assign differen t to oth num b ers, the subagent consults the detection to ol’s FDI co or- dinate map to assign the finding to the spatially correct to oth, correcting the VLM output with deterministic co ordinates. 2.5 Structured Rep orting and Proto col Design Hierarc hical Clinical On tology . T o align the agent’s output with real-w orld clinical triage, we formalize the OPG interpretation into a structured, anatom y cen tric schema. W e define a comprehensive den tal rep ort as a set of pathological findings S = { t 1 , t 2 , . . . , t N } , where each finding is abstracted as an information triple t i = ( l i , f i , v i ) ∈ L × F × V . Here, L denotes the anatomical location space strictly adhering to the FDI W orld Dental F ederation notation (ISO 3950) for teeth, alongside global regions (e.g., TMJ, maxillary sin us). F represents the clin- ical field, and V is the categorical v alue space. T o ensure clinical completeness, V is constructed up on standardized dental guidelines, mapping complex numer- ical scales into semantic sev erity lev els (e.g., ICDAS [11] for caries, AAP/EFP 2017 [21] for p erio dontitis, and P AI [18] for periapical health). F ollowing the 6 Z. Y u et al. sparse represen tation principle of clinical rep orting, S only encapsulates anoma- lous findings; normal anatomical structures are in tentionally omitted. Constrained P arser Agent. A dedicated Parser Agent transforms the sys- tem’s Memory output into the formal S space. T o eliminate hallucinations and enforce sc hema adherence, it utilizes constrained deco ding—via JSON mode, Pydan tic v alidation, and few-shot learning. This deterministically maps findings in to v alid ( l i , f i , v i ) triples, strictly prev enting unsupp orted free-text diagnoses. T riple-based Hierarchical Ev aluation (OPG-Benc h). Overcoming V QA limitations, we ev aluate findings as ( location, f ield, v al ue ) triples via t wo com- plemen tary metrics. The Exact Match requires p erfect alignmen t of all ele- men ts. T o reflect clinical workflo ws, we also compute a step-wise partial match: Detection of pathology presence (Precision/Recall/F1), Lo calization of the anatomical target l i (Precision/Recall/F1), and Classification of the grade/t yp e v i (A ccuracy). Eac h step conditions on the previous one. This hierarc hical pro- to col cleanly disen tangles visual p erception from clinical reasoning. 3 Exp erimen ts Dataset. The OPG-Benc h dataset comprises 1,009 anonymized OPGs with paired clinical and structured rep orts, and 5,219 unguided V QA pairs; ev alu- ation follows the triple-based proto col defined in §2.4. Sourced from multiple clinics, the dataset is restricted to patients ≥ 16 y ears to fo cus on p ermanent den tition. Ground truths deriv e from real clinical rep orts and are v alidated via man ual sp ot-chec ks. Since real-world rep orts typically prioritize c hief complaints o ver incidental findings, our rep orted F alse Positiv e rates ma y b e slightly inflated when the agen t detects undo cumented yet v alid pathologies. Ev aluation Proto col. W e ev aluate OPGAgent against state-of-the-art general VLMs, dental-specific VLMs, and medical agents. T o isolate our framew ork’s arc hitectural contribution, MedAgent-Pro [25] is configured with the same LLM and to ols as OPGAgen t. T o decouple diagnostic accuracy from formatting, we emplo y a unified generation-ev aluation pip eline. All mo dels receive iden tical prompts (temp erature 0.3) to generate natural-language rep orts. A shared P arser Agen t then conv erts these into structured formats—ensuring parser bias applies equally across metho ds—follo wed by manual filtering to retain only v alid clinical findings. Results on OPG-Benc h. W e ev aluate OPGAgen t against general VLMs, and den tal VLMs [2,10] on the OPG-Bench dataset. As shown in T able 1, OPGA- gen t reaches an exact-matc h F1 of 42.3% and an aggregate score of 49.7%, sur- passing Gemini-3-Flash and DentalGPT. While Gemini-3-Flash obtains higher recall (45.1%), its precision drops to 27.6% with 10.58 false p ositiv es p er case; OPGAgen t maintains precision at 43.1% while keeping false p ositives at 4.89. Domain-sp ecific mo dels like OralGPT-Omni minimize false p ositives (4.02) but at the cost of lo w cov erage (F1 6.2%). OPGAgen t thus balances sensitivity and reliabilit y b etter than single-pass approaches. OPGAgen t: An Agen t for Auditable Dental Panoramic X-ray Interpretation 7 T able 1. Quantitativ e comparison on OPG-Bench dataset. Methods E.M. Det. Loc. Cls. Score P R F1 FP ↓ P R F1 P R F1 A cc Gemini-3-Flash 0.428 0.276 0.451 0.343 10.58 0.440 0.596 0.506 0.311 0.488 0.380 0.796 Kimi-k2.5 0.399 0.252 0.345 0.291 9.20 0.481 0.500 0.490 0.348 0.463 0.397 0.764 Gemini-3-Pro 0.399 0.323 0.294 0.308 5.60 0.556 0.431 0.485 0.458 0.319 0.376 0.732 GPT-5.2 0.357 0.283 0.273 0.278 6.17 0.624 0.359 0.456 0.392 0.193 0.258 0.754 Qwen3-VL-235B 0.230 0.106 0.191 0.137 14.50 0.316 0.436 0.366 0.107 0.175 0.133 0.614 Qwen3-VL-8B 0.197 0.102 0.070 0.083 5.50 0.409 0.259 0.317 0.175 0.124 0.145 0.635 DentalGPT 0.295 0.128 0.200 0.156 12.22 0.464 0.483 0.473 0.241 0.273 0.256 0.715 OralGPT-Omni 0.157 0.094 0.046 0.062 4.02 0.457 0.151 0.227 0.145 0.075 0.099 0.603 MedAgent-Pro 0.278 0.171 0.183 0.177 8.00 0.412 0.438 0.425 0.206 0.204 0.205 0.632 OPGAgent 0.497 0.431 0.415 0.423 4.89 0.715 0.456 0.557 0.625 0.376 0.469 0.801 Sc or e = 0 . 5 × EM_F1 +0 . 2 × Det_F1 +0 . 2 × L o c_F1 +0 . 1 × Cls_A c c (b alancing exact and p artial matches). E.M. (Exact Match), Det. (Pr esenc e), L o c. (L o c ation), Cls. (Gr ade/T yp e), FP (F alse Positives/Case). T able 2. Performance comparison on VQA benchmarks. Methods MMOral-OPG OPG-Bench (V QA) Acc. T ee. Pat. Jaw HisT Acc. Mis. Muc. Bone Anat. Api. Gemini-3-Flash 48.47% 54.1% 42.6% 59.7% 66.2% 59.9% 48.4% 47.9% 60.0% 65.1% 42.2% GPT-5.2 43.58% 37.2% 32.4% 65.9% 52.1% 51.5% 48.8% 44.7% 50.5% 65.1% 27.7% OralGPT-Omni 59.06% 53.5% 44.6% 77.5% 69.7% 36.1% 13.6% 15.7% 23.2% 30.8% 17.0% DentalGPT 48.27% 42.3% 40.5% 65.1% 47.2% 27.8% 11.4% 22.2% 4.2% 13.0% 17.5% OPGAgent 62.53% 59.7% 52.7% 72.1% 71.8% 61.2% 67.0% 65.2% 76.8% 80.8% 35.0% T e e. (T e eth-r elate d), Pat. (Patholo gy), HisT (History & T r e atment). Mis. (Missing T e eth), Muc. (Muc osal Change), Bone (Bone V ariant), Anat. (A natomic al V ariants), Api. (Apic al Status). Results on VQA Benc hmarks. As shown in T able 2, OPGAgent leads on b oth b enc hmarks (62.53% on MMOral-OPG, 61.2% on OPG-Bench). On MMOral- OPG, OralGPT-Omni scores highest on Ja w questions (77.5%) but falls be- hind on T eeth and Pathology categories, where OPGAgent’s multi-tool pip eline pro vides clearer gains. On OPG-Bench, domain-sp ecific VLMs (OralGPT-Omni 36.1%, DentalGPT 27.8%) score muc h low er than general VLMs, despite OralGPT- Omni p erforming well on its own MMOral-OPG b enchmark (59.06%). This gap suggests that mo dels trained on generated QA pairs may not fully capture the distribution of real clinical rep orts. Ablation Studies. T able 3 adds comp onents cum ulatively . By design, the agent LLM serves only as a planner. Without external to ols (Ro w 1), it is forced to act as the sole diagnostician in a ReA ct lo op, yielding a 27.78% F1. Adding Exp ert Zo os (Row 2) impro ves precision (38.62%) and minimizes false p ositiv es (6.17 → 2.37), but plummets recall (16.64%) b ecause findings lack exact FDI coordinates and fail strict triple matching. Spatial T o ols (Row 3) resolve this grounding b ottlenec k, restoring recall (35.98%) and F1 (36.55%). Finally , Detection T o ols (Ro w 4) provide complementary lo calized evidence, achieving the p eak 42.30% F1. 8 Z. Y u et al. T able 3. Ablation study on OPG-Bench with cum ulative component additions. Comp onen ts Metrics Exp ert Zo os Spatial T ools Detection T ools Precision Recall F1 FP/Case ↓ ✗ ✗ ✗ 28.32% 27.25% 27.78% 6.17 ✓ ✗ ✗ 38.62% 16.64% 23.25% 2.37 ✓ ✓ ✗ 37.13% 35.98% 36.55% 5.47 ✓ ✓ ✓ 43.15% 41.48% 42.30% 4.89 Exp ert Zo os (GPT-5.2, Gemini-3-Flash, DentalGPT, Or alGPT-Omni), Dete ction T o ols (Patholo gy Dete ction), Sp atial T o ols (T o oth/Quadr ant Lo- c alization). Fig. 2. Vision comparison. OPGAgen t correctly identifies missing teeth, bone loss, and impacted to oth 38 via consensus, while GPT-5.2 and Gemini-3-Flash each miss or hallucinate findings. Blue for correct; Orange for wrong; Green for all undetected. 4 Conclusion W e present OPGAgent, a multi-tool agentic framew ork for auditable OPG in- terpretation that com bines Hierarc hical Evidence Gathering, a Sp ecialized T o ol- b o x, and a Consensus Subagent. Through phased analysis, multi-source voting, anatomical conflict resolution, and structured triple-based ev aluation, OPGA- gen t pro duces more accurate and auditable dental reports than existing VLMs OPGAgen t: An Agen t for Auditable Dental Panoramic X-ray Interpretation 9 and agen t frameworks. Ablation studies confirm that eac h mo dule contributes to the o verall p erformance. References 1. Ba yraktar, Y., et al.: A nov el deep learning-based p ersp ective for to oth n umbering and caries detection. Clinical Oral In vestigations 28 , 178 (2024). doi:10.1007/s00784-024-05566-w 2. Cai, Z., et al.: DentalGPT: Incentivizing Multimodal Complex Reasoning in Den- tistry . arXiv preprint arXiv:2512.11558 (2025) 3. Chen, J., et al.: Detecting and Ev aluating Medical Hallucinations in Large Vision Language Mo dels. In: ICLR (2025). 4. F raser, J.: Artificial intelligence and dental panoramic radiographs: where are we no w? British Den tal Journal (2024). doi:10.1038/s41432-024-00978-9 5. Ghimire, A., et al.: When CNNs Outp erform T ransformers and Mambas: Re- visiting Deep Architectures for Dental Caries Segmentation. arXiv preprint arXiv:2511.14860 (2025) 6. Golden, D., Pilgrim, R., et al.: MedGemma: Op en mo dels for health AI developmen t. Google Research (2025). https://developers.google.com/ health- ai- developer- foundations/medgemma 7. Grünhagen, T., et al.: Automating Dental Condition Detection on Panoramic Radiographs: Challenges, Pitfalls, and Opportunities. Diagnostics 14 (20), 2336 (2024). doi:10.3390/diagnostics14202336 8. Hamamci, I.E., et al.: DENTEX: Dental Enumeration and T o oth Pathosis Detec- tion Benchmark for P anoramic X-rays. arXiv preprint arXiv:2305.19112 (2023) 9. Hao, J., et al.: T ow ards b etter den tal AI: A multimodal b enchmark and instruction dataset for panoramic X-ray analysis. arXiv preprint arXiv:2509.09254 (2025) 10. Hao, J., et al.: OralGPT-Omni: A V ersatile Den tal Multimo dal Large Language Mo del. arXiv preprint arXiv:2511.22055 (2025) 11. Ismail, A.I., et al.: The International Caries Detection and Assessmen t System (IC- D AS): an in tegrated system for measuring dental caries. Communit y Dentistry and Oral Epidemiology 35 (3), 170–178 (2007). doi:10.1111/j.1600-0528.2007.00347.x 12. ISO 3950:2016. Dentistry—Designation system for teeth and areas of the oral ca v- it y . In ternational Organization for Standardization (2016) 13. Kim, Y., et al.: MDAgen ts: An Adaptiv e Collab oration of LLMs for Medical Decision-Making. arXiv preprint arXiv:2404.15155 (2024) 14. Kurt-Ba yrakdar, S., et al.: Detection of p erio don tal bone loss patterns and furca- tion defects from panoramic radiographs using deep learning algorithm. BMC Oral Health 24 , 155 (2024). doi:10.1186/s12903-024-03896-5 15. Li, C., et al.: LLaV A-Med: T raining a Large Language-and-Vision Assistant for Biomedicine in One Da y . In: NeurIPS 2023 Datasets and Benchmarks T rack (2023). 16. Li, F., et al.: Mask DINO: T ow ards A Unified T ransformer-based F ramework for Ob ject Detection and Segmentation. In: CVPR (2023). 17. Ma, J., et al.: Segmen t Anything in Medical Images. Nature Communications 15 , 654 (2024). doi:10.1038/s41467-024-44824-z 18. Ørsta vik, D., Kerekes, K., Eriksen, H.M.: The p eriapical index: A scoring system for radiographic assessment of apical perio dontitis. Dental T raumatology 2 (1), 20– 34 (1986). doi:10.1111/j.1600-9657.1986.tb00119.x 10 Z. Y u et al. 19. Sohrabniy a, F., et al.: Exploring a decade of deep learning in dentistry: A comprehensiv e mapping review. Clinical Oral In vestigations 29 , 143 (2025). doi:10.1007/s00784-025-06216-5 20. T ang, X., et al.: MedAgen ts: Large Language Mo dels as Collaborators for Zero-shot Medical Reasoning. In: Findings of ACL, pp. 599–621 (2024). 21. T onetti, M. S., Greenw ell, H., Kornman, K.S.: Staging and grading of p erio dontitis: F ramework and proposal of a new classification and case definition. Journal of P erio dontology 89 (S1) (2018). doi:10.1002/JPER.18-0006 22. v an Nistelro oij, N., et al.: Combining public datasets for automated to oth assessmen t in panoramic radiographs. BMC Oral Health 24 , 387 (2024). doi:10.1186/s12903-024-04129-5 23. W ang, C.-Y., Y eh, I.-H., Liao, H.-Y.M.: YOLOv9: Learning What Y ou W ant to Learn Using Programmable Gradien t Information. arXiv preprin t arXiv:2402.13616 (2024) 24. W ang, Y., et al.: MICCAI STS 2024 Challenge: Semi-Sup ervised Instance-Level T o oth Segmentation in Panoramic X-ray and CBCT Images. arXiv preprin t arXiv:2511.22911 (2025) 25. W ang, Z., et al.: MedAgent-Pro: T o wards Evidence-based Multi-mo dal Medical Diagnosis via Reasoning Agentic W orkflow. arXiv preprint arXiv:2503.18968 (2025) 26. Y ao, S., et al.: ReA ct: Synergizing Reasoning and Acting in Language Mo dels. In: ICLR (2023). 27. Zhang, H., et al.: DINO: DETR with Improv ed DeNoising Anchor Boxes for End- to-End Ob ject Detection. In: ICLR (2023). 28. Zhao, L., et al.: MARINE: Mitigating Ob ject Hallucination in Large Vision- Language Mo dels via Image-Grounded Guidance. In: ICML (2025)

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment