Toward Reasoning on the Boundary: A Mixup-based Approach for Graph Anomaly Detection

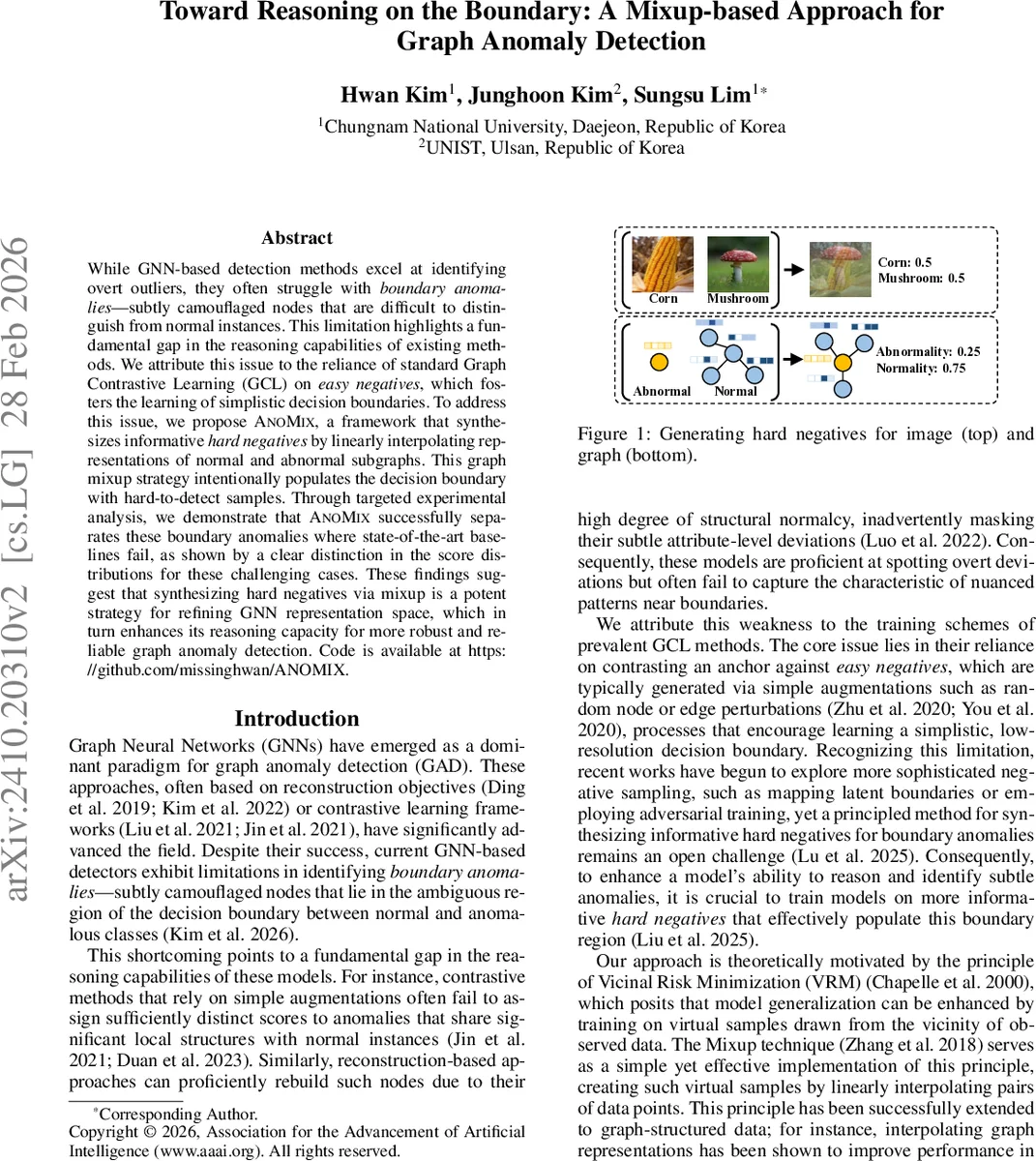

While GNN-based detection methods excel at identifying overt outliers, they often struggle with boundary anomalies – subtly camouflaged nodes that are difficult to distinguish from normal instances. This limitation highlights a fundamental gap in the reasoning capabilities of existing methods. We attribute this issue to the reliance of standard Graph Contrastive Learning (GCL) on easy negatives, which fosters the learning of simplistic decision boundaries. To address this issue, we propose ANOMIX, a framework that synthesizes informative hard negatives by linearly interpolating representations of normal and abnormal subgraphs. This graph mixup strategy intentionally populates the decision boundary with hard-to-detect samples. Through targeted experimental analysis, we demonstrate that ANOMIX successfully separates these boundary anomalies where state-of-the-art baselines fail, as shown by a clear distinction in the score distributions for these challenging cases. These findings suggest that synthesizing hard negatives via mixup is a potent strategy for refining GNN representation space, which in turn enhances its reasoning capacity for more robust and reliable graph anomaly detection. Code is available at https://github.com/missinghwan/ANOMIX.

💡 Research Summary

The paper tackles a critical shortcoming of current graph neural network (GNN)‑based graph anomaly detection (GAD) methods: their inability to reliably detect “boundary anomalies,” i.e., nodes that lie in the ambiguous region between normal and anomalous classes. Existing reconstruction‑based or contrastive learning (GCL) approaches typically rely on easy negatives generated by simple augmentations (random node/edge perturbations). This leads to low‑resolution decision boundaries that separate overt outliers well but fail on subtle, camouflaged anomalies.

To remedy this, the authors propose ANOMIX, a framework that explicitly synthesizes hard negatives through a graph‑mixup operation. For each target node, two contextual ego‑nets are constructed: a normal context (G_no) sampled via random walks from the target itself, and an abnormal context (G_ab) sampled from walks starting at a randomly chosen known anomaly (the method assumes a small set of labeled anomalies). The representations of these two subgraphs are linearly interpolated:

G_mix = λ · G_ab + (1 – λ) · G_no,

where λ ∼ Beta(α, α). This mixup follows the Vicinal Risk Minimization (VRM) principle, creating virtual samples that lie near the observed data distribution, specifically in the decision‑boundary region. A feature‑masking step zeros out the target node’s attributes inside the subgraphs to prevent information leakage.

ANOMIX then trains a multi‑level contrastive objective. At the node level, the model distinguishes the original target node embedding from its masked counterpart within the subgraph context. At the subgraph level, the target node embedding is contrasted with a read‑out summary of the entire subgraph. Positive pairs (e.g., target‑context) are encouraged to have high similarity, while negative pairs (including the synthetic hard negative G_mix) are pushed apart. The loss is summed over both normal and mixed views, forcing the encoder to learn representations that are sensitive to subtle structural and attribute deviations.

During inference, anomaly scores are derived from the aggregated similarity gaps across multiple stochastic subgraph samplings. Both the mean and standard deviation of these gaps are used, capturing not only the magnitude but also the instability typical of anomalous nodes.

The authors evaluate ANOMIX on six widely used datasets (Cora, CiteSeer, Pubmed, ACM, Facebook, Amazon) and compare against ten state‑of‑the‑art baselines covering reconstruction‑based, contrastive, and semi‑supervised methods. ANOMIX achieves the highest AUC on all datasets, improving up to 8.44 % absolute over the best baseline. Notably, on dense networks like ACM and real‑world anomaly‑rich Facebook, the mixup‑generated hard negatives lead to substantial gains, while on Pubmed (sparse with synthetic clique anomalies) the advantage is modest, reflecting fewer boundary cases.

A focused analysis on “boundary anomalies” is performed by training a standard GCL model (CoLA) and labeling the bottom 30 % of anomaly scores as “boundary” and the top 30 % as “obvious.” Baselines’ score distributions for boundary anomalies heavily overlap with normal nodes, confirming their difficulty. In contrast, ANOMIX’s distributions are clearly separated, with boundary anomalies receiving high scores comparable to obvious ones. This pattern holds across multiple datasets, demonstrating that the hard‑negative mixup effectively sharpens the decision boundary.

Ablation studies further validate the design choices. Removing mixup (w/o Mixup) reduces performance to that of a vanilla GCL model. Randomly mixing arbitrary subgraphs (Random Mixup) yields modest improvements, but the targeted normal‑abnormal mixup consistently outperforms it, underscoring that the benefit stems from the purposeful construction of hard negatives rather than mixup per se.

The paper acknowledges limitations: the mixing coefficient λ is drawn from a static Beta distribution, and the current approach is limited to homogeneous static graphs. Future work includes adaptive λ strategies, extensions to heterogeneous, multi‑relational, or dynamic graphs, and more sophisticated hard‑negative generation mechanisms.

In summary, ANOMIX introduces a principled way to populate the decision‑boundary region with informative hard negatives via graph mixup, thereby enhancing GNNs’ reasoning capability for subtle anomalies. The method bridges a gap between theoretical VRM concepts and practical graph anomaly detection, delivering consistent empirical gains and offering a promising direction for further research in robust graph‑based outlier detection.

Comments & Academic Discussion

Loading comments...

Leave a Comment